New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Recurrent DQN families with a new interface #436

Conversation

since it does not make much sense to use this without recurrent models. supporting recurrent models in these examples can be future work.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Can you add checkboxes in the main ChainerRL repo README for the models that are now supported as recurrent?

Co-Authored-By: muupan <muupan@gmail.com>

Co-Authored-By: muupan <muupan@gmail.com>

Co-Authored-By: muupan <muupan@gmail.com>

|

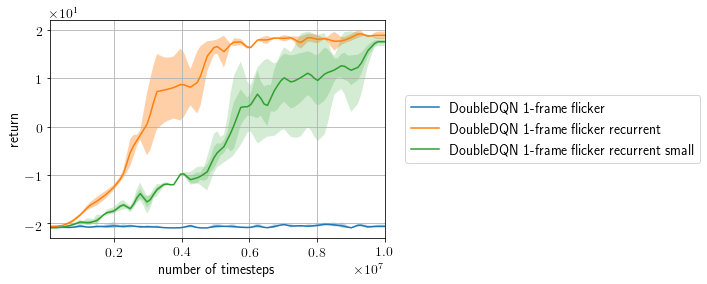

I performed experiments to validate recurrent DQN on flickering Pong with 1-frame observations and p=0.5.

Each configuration is evaluated with 3 trials with 3 different random seeds. As you can see from the |

Co-Authored-By: Prabhat Nagarajan <prabhat.nagarajan@gmail.com>

Co-Authored-By: Prabhat Nagarajan <prabhat.nagarajan@gmail.com>

Co-Authored-By: Prabhat Nagarajan <prabhat.nagarajan@gmail.com>

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Almost done... Can you make these small changes.

Co-Authored-By: Prabhat Nagarajan <prabhat.nagarajan@gmail.com>

Co-Authored-By: Prabhat Nagarajan <prabhat.nagarajan@gmail.com>

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Looks good. Please take a look at the comments for minor improvements.

| rbuf = replay_buffer.EpisodicReplayBuffer(10 ** 6) | ||

| else: | ||

| # Q-network without LSTM | ||

| q_func = chainer.Sequential( |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Any reason not to copy this code: https://github.com/chainer/chainerrl/blob/master/examples/atari/train_dqn_ale.py#L50?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Partly because it is consistent with a recurrent version, partly because I think it is easier to understand, thus better as an example.

| args.final_exploration_frames, | ||

| lambda: np.random.randint(n_actions)) | ||

|

|

||

| opt = chainer.optimizers.Adam(1e-4, eps=1e-4) |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

I'm assuming this should be fine. But why did you use Adam?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

It is just because Adam seems preferred in literature recently. I don't mean to reproduce any paper in this example.

Co-Authored-By: Prabhat Nagarajan <prabhat.nagarajan@gmail.com>

Merge #431 before this PR.This PR resolves #112

StatelessRecurrentinterface to support recurrent models in DQN variants.examples/ale/train_drqn_ale.pyas a solid example of recurrent DQN.TODO