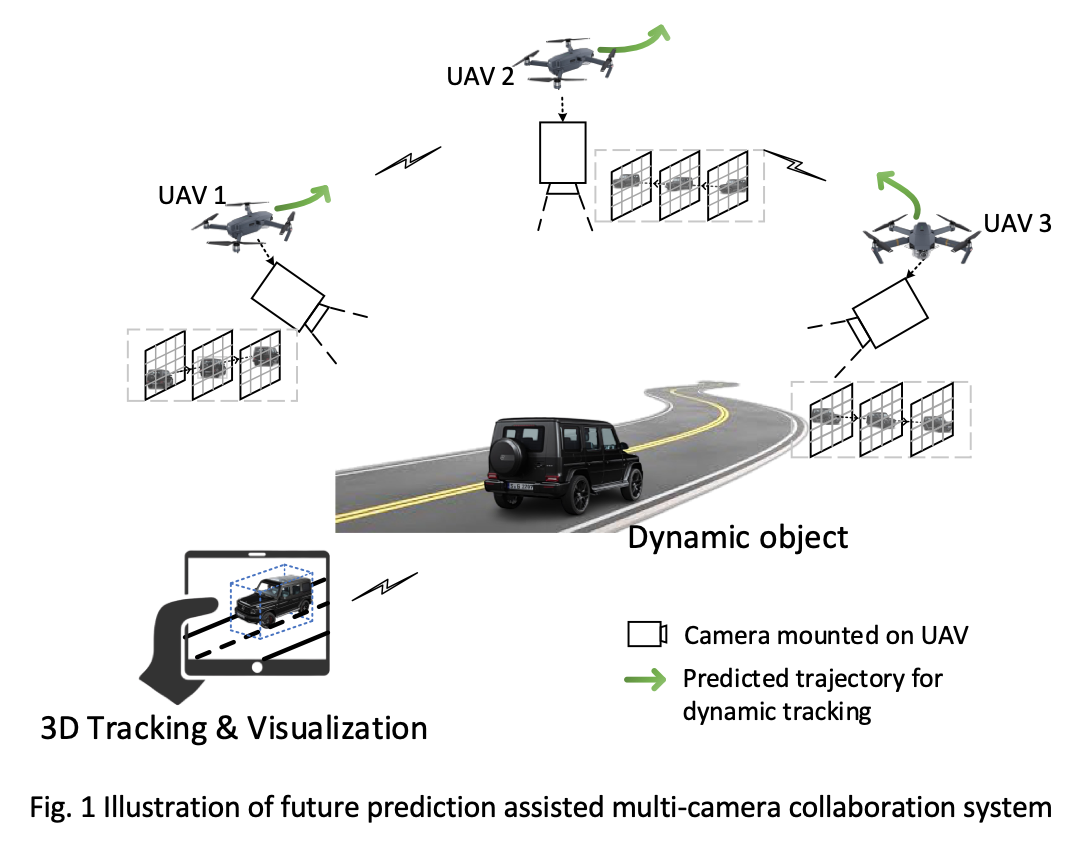

In this project, we propose a novel real-time pipeline, future-prediction-assisted feedback control, to address these challenges and achieve multi-camera tracking and 3D visualization. In particular, the proposed multi-camera control system includes three functional components:

- Predicting the future scene condition from extrapolated frames;

- Deciding the optimal camera settings for the predicted scene condition, and the optimal control trajectory from the current settings to those future settings;

- Updating the camera settings along the optimal control trajectory to actively adapt to changes in the scene condition.

With the proposed solution, camera settings can be adapted in real time to ever-changing scene features. In addition, the system can alleviate the negative effect of network latency and achieve synchronous multi-camera control.
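To make the predict, decide, and control steps concrete, here is a minimal Python sketch of one control cycle for a single camera. It is an illustration under stated assumptions, not the project's implementation: a constant-velocity extrapolation stands in for the learned predictor, the only camera setting is a pan angle, and the control trajectory is a linear interpolation. The function names `predict_position`, `optimal_pan`, and `control_trajectory` are hypothetical.

```python
import math


def predict_position(history, horizon):
    """Extrapolate a 2D position `horizon` steps ahead.

    Assumption: constant velocity taken from the last two observations.
    This is a simple stand-in for a learned predictor.
    """
    (x0, y0), (x1, y1) = history[-2], history[-1]
    return (x1 + (x1 - x0) * horizon, y1 + (y1 - y0) * horizon)


def optimal_pan(camera_xy, target_xy):
    """Pan angle in degrees that points the camera at the target.

    Assumption: the camera setting is a single pan angle on a 2D ground plane.
    """
    dx = target_xy[0] - camera_xy[0]
    dy = target_xy[1] - camera_xy[1]
    return math.degrees(math.atan2(dy, dx))


def control_trajectory(current, goal, steps):
    """Evenly spaced intermediate settings from `current` to `goal`.

    Assumption: linear interpolation; angle wrap-around is not handled.
    """
    return [current + (goal - current) * k / steps for k in range(1, steps + 1)]


# One control cycle: predict, decide, then step the camera toward the goal.
history = [(0.0, 0.0), (1.0, 1.0)]          # observed target positions
future = predict_position(history, horizon=3)
goal_pan = optimal_pan((0.0, 0.0), future)
for pan in control_trajectory(0.0, goal_pan, steps=3):
    print(f"set pan to {pan:.1f} degrees")
```

Because the trajectory is planned toward the predicted condition rather than the current one, the camera can start moving before the change happens, which is how a pipeline of this kind can absorb part of the network latency.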

Application scenario:

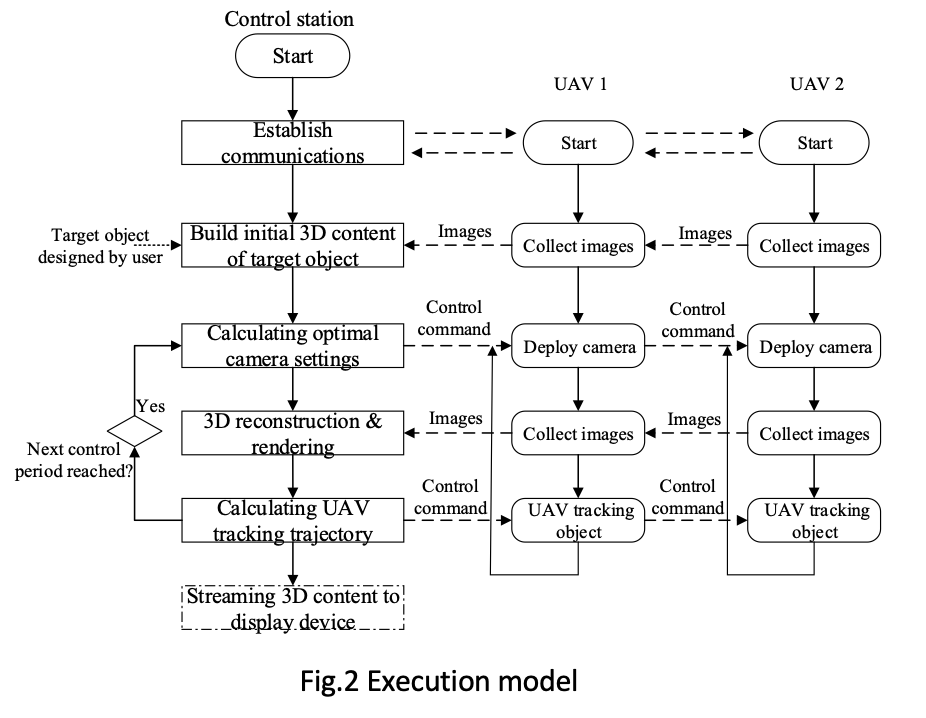

Execution flow:

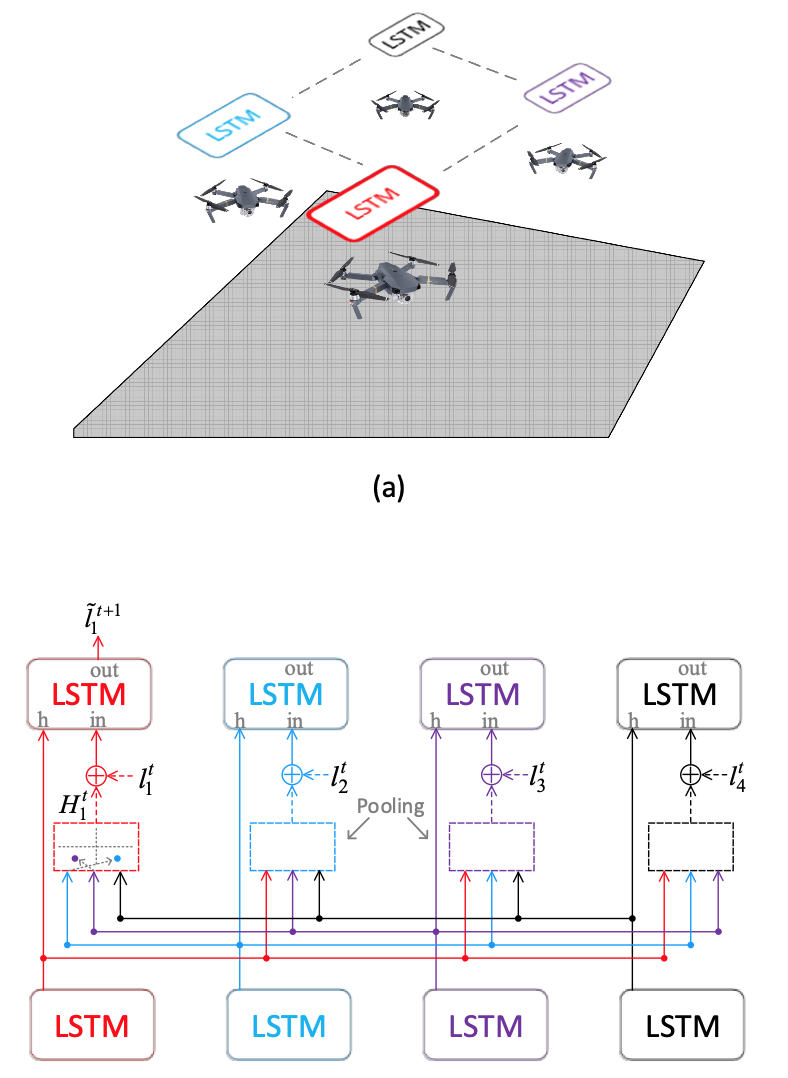

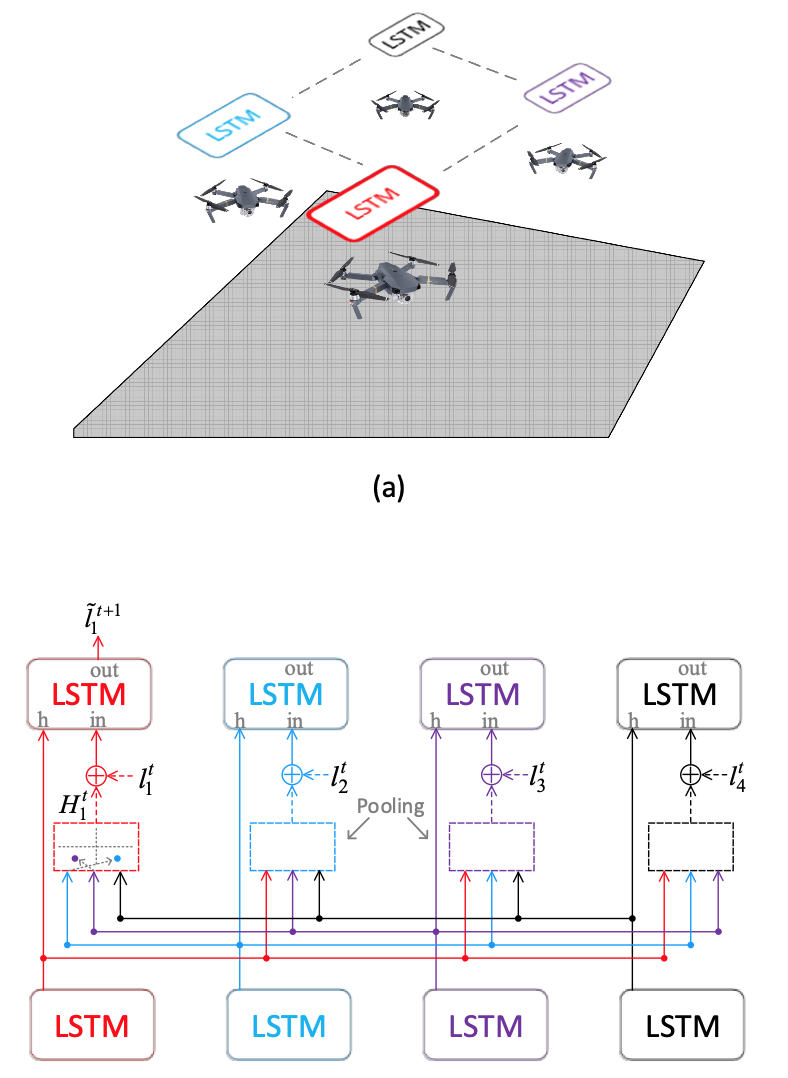

Collaborative LSTM model:

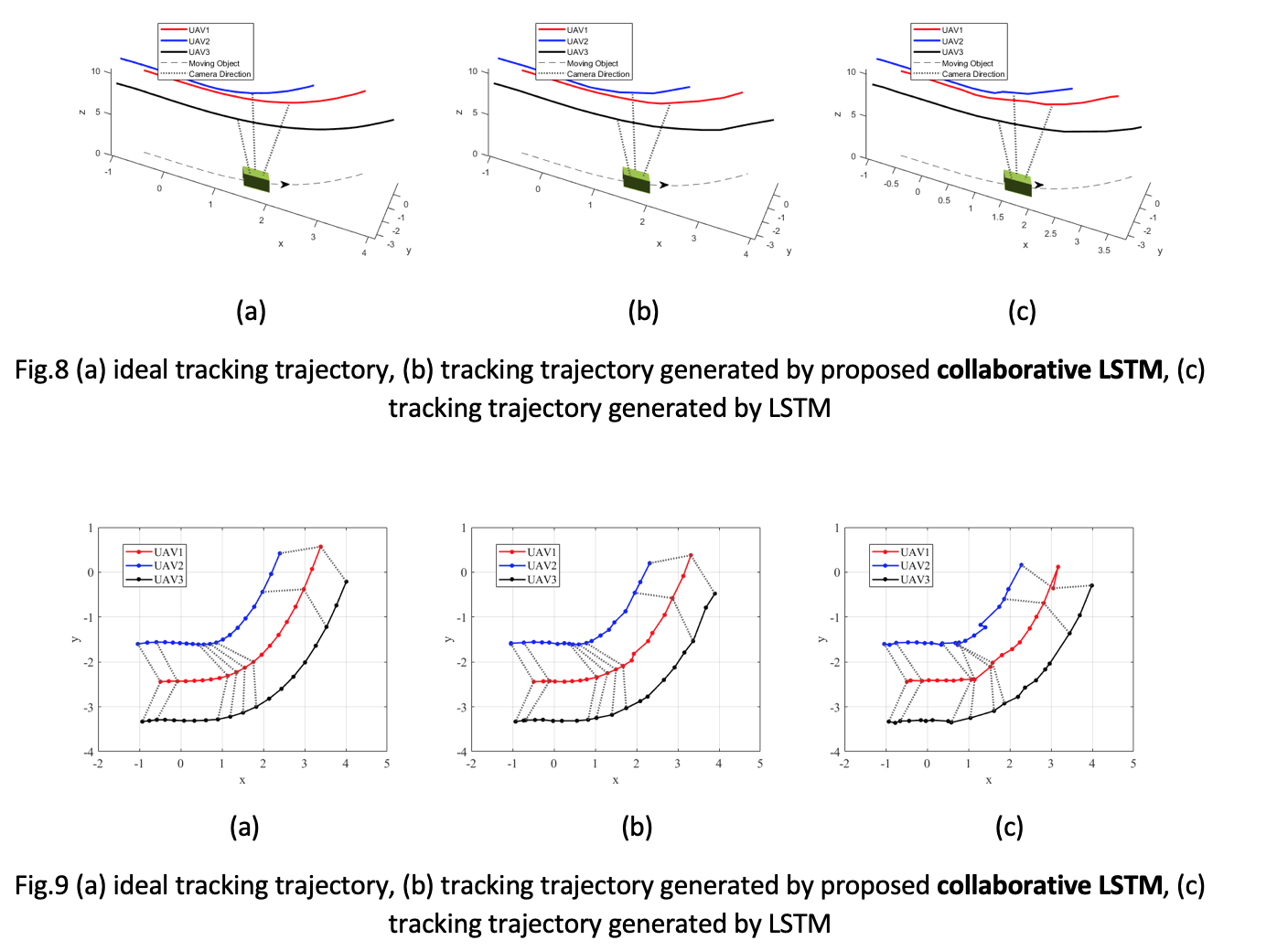

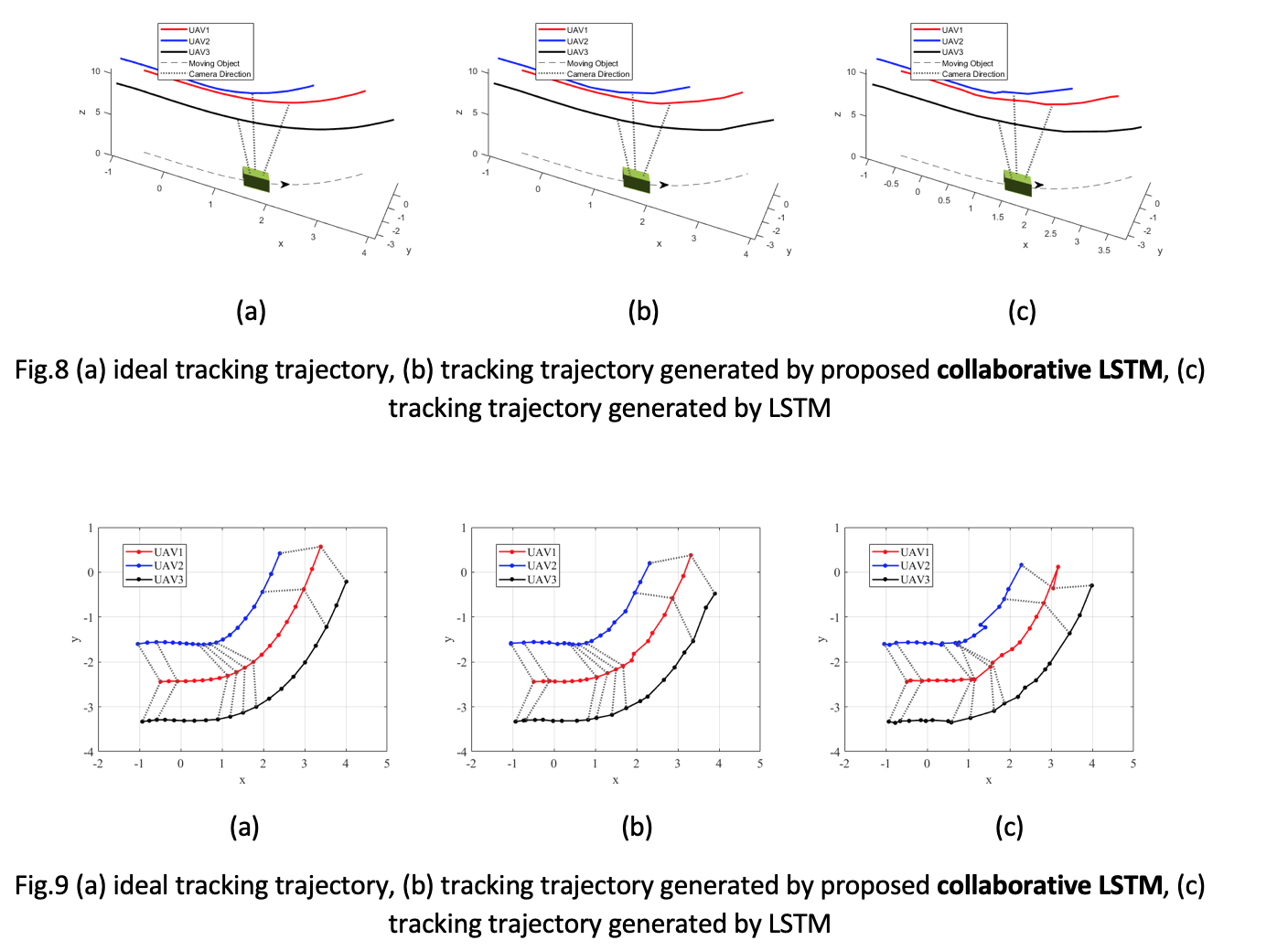

Performance evaluation:

The PyTorch program was developed using the source code of the Social LSTM implementation as a basis (https://github.com/quancore/social-lstm).