Cannot export data: there is nothing in the exported compressed file #1749

Comments

|

After several attempts, a file was exported, but instead of a usable zip archive I got a txt file whose content is {"status":"Not ready"}

|

The project I created is a sequence annotation project.

|

The same issue happens to me. I deployed the project on OpenShift Container Platform. The task is Text Classification, and I also got an exported txt file with the content {"status":"Not ready"}.

|

Hmm, I can't reproduce the problem. What is the output of the following command?

> docker logs YOUR_CONTAINER_ID

[2022-03-24 02:25:47 +0000] [18] [INFO] Starting gunicorn 20.1.0

[2022-03-24 02:25:47 +0000] [18] [INFO] Listening at: http://0.0.0.0:8000 (18)

[2022-03-24 02:25:47 +0000] [18] [INFO] Using worker: sync

[2022-03-24 02:25:47 +0000] [23] [INFO] Booting worker with pid: 23

[2022-03-24 02:25:47 +0000] [24] [INFO] Booting worker with pid: 24

-------------- celery@8d85ef7a6f5f v5.2.3 (dawn-chorus)

--- ***** -----

-- ******* ---- Linux-5.10.47-linuxkit-x86_64-with-glibc2.2.5 2022-03-24 02:25:50

- *** --- * ---

- ** ---------- [config]

- ** ---------- .> app: config:0x7f59c7580190

- ** ---------- .> transport: sqla+sqlite:////data/doccano.db

- ** ---------- .> results:

- *** --- * --- .> concurrency: 2 (prefork)

-- ******* ---- .> task events: OFF (enable -E to monitor tasks in this worker)

--- ***** -----

-------------- [queues]

.> celery exchange=celery(direct) key=celery

[tasks]

. data_export.celery_tasks.export_dataset

. data_import.celery_tasks.import_dataset

. health_check.contrib.celery.tasks.add

[2022-03-24 02:25:50,824: INFO/MainProcess] Connected to sqla+sqlite:////data/doccano.db

[2022-03-24 02:25:50,956: INFO/MainProcess] celery@8d85ef7a6f5f ready.

[2022-03-24 02:26:38,314: INFO/MainProcess] Task data_import.celery_tasks.import_dataset[3490eade-4d8f-42cd-a3a7-a312f79c361a] received

[2022-03-24 02:26:38,380: INFO/ForkPoolWorker-1] Task data_import.celery_tasks.import_dataset[3490eade-4d8f-42cd-a3a7-a312f79c361a] succeeded in 0.05503669998142868s: {'error': []}

[2022-03-24 02:26:49,363: INFO/MainProcess] Task data_export.celery_tasks.export_dataset[d1c9a5ff-781f-4cec-a8d7-acc44503cfb4] received

[2022-03-24 02:26:49,400: INFO/ForkPoolWorker-2] Task data_export.celery_tasks.export_dataset[d1c9a5ff-781f-4cec-a8d7-acc44503cfb4] succeeded in 0.03536610002629459s: '/doccano/backend/media/66c0c1a6-549b-4d7d-a434-a029ec4926a7.zip' |

|

By the way, here is how to copy the data from the container to the host as a quick fix:

> docker exec -it doccano bash

> doccano@8d85ef7a6f5f:/doccano/backend$ ls /data

doccano.db

> doccano@8d85ef7a6f5f:/doccano/backend$ exit

exit

# Replace 8d85ef7a6f5f with your container id.

> docker cp 8d85ef7a6f5f:/data/doccano.db .

> sqlite3 doccano.db

sqlite> select text from examples_example;

exampleA

exampleB

exampleA

exampleB

exampleC |

|

I understand the problem. The frontend repeatedly polls the task status. Once the task is ready, it tries to download the file: doccano/frontend/pages/projects/_id/dataset/export.vue Lines 114 to 125 in 27eff5c

The download API is the following. It should be called only after the task is ready. But it seems that, for some reason, the task is not yet ready when the API is called, so it returns {"status":"Not ready"}: doccano/backend/data_export/views.py Lines 25 to 35 in 27eff5c
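The poll-then-download flow described above can be sketched in Python as follows. This is only an illustration of the pattern, not doccano's actual frontend code: `download_when_ready`, `is_ready`, `download`, `interval`, and `max_attempts` are hypothetical names, and the two callables stand in for whatever status and download requests a client would make.

```python
import time
from typing import Callable


def download_when_ready(
    is_ready: Callable[[], bool],
    download: Callable[[], bytes],
    interval: float = 1.0,
    max_attempts: int = 30,
) -> bytes:
    """Poll is_ready() until it returns True, then call download().

    Both callables are hypothetical placeholders for the task-status
    request and the file-download request.
    """
    for _ in range(max_attempts):
        if is_ready():
            # Only request the file once the task reports it is ready.
            return download()
        time.sleep(interval)
    # Give up instead of downloading a "Not ready" response.
    raise TimeoutError("export task did not become ready")
```

The point of the sketch is that the download is never attempted before the status check succeeds, so a client would not end up saving a "Not ready" response as the exported file.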

|

|

Log streaming from OpenShift gives me something like this. I do not know if it helps.

There is nothing in the exported compressed file, and it can't even be decompressed |

That approach works, but I want the annotated data.

For example, if you want to write the data id, start offset, end offset, and label name to a CSV file, the following queries are useful:

sqlite> .headers on

sqlite> .mode csv

sqlite> .output annotation.csv

sqlite> SELECT example_id, start_offset, end_offset, text FROM labels_span, label_types_spantype WHERE labels_span.label_id=label_types_spantype.id;

sqlite> .quit

Contents:

> head annotation.csv

example_id,start_offset,end_offset,text

20757,0,8,LOC

20758,4,23,ORG

20758,59,65,MISC

... |
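If you prefer a script over the sqlite3 shell, the same query can be run from Python's standard library. This is a minimal sketch that assumes the table and column names used in the query above (`labels_span`, `label_types_spantype`); the function name `export_spans` and its parameters are made up for illustration.

```python
import csv
import sqlite3

# Same join as the sqlite3 shell query above, written with explicit JOIN syntax.
QUERY = """
SELECT s.example_id, s.start_offset, s.end_offset, t.text
FROM labels_span AS s
JOIN label_types_spantype AS t ON s.label_id = t.id
"""

HEADER = ["example_id", "start_offset", "end_offset", "text"]


def export_spans(conn: sqlite3.Connection, csv_path: str) -> int:
    """Write one CSV row per span annotation and return the number of rows."""
    rows = conn.execute(QUERY).fetchall()
    # newline="" lets the csv module control line endings itself.
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(HEADER)
        writer.writerows(rows)
    return len(rows)
```

To use it on the copied database, something like `export_spans(sqlite3.connect("doccano.db"), "annotation.csv")` should produce the same file as the shell commands.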

|

Thanks. The |

|

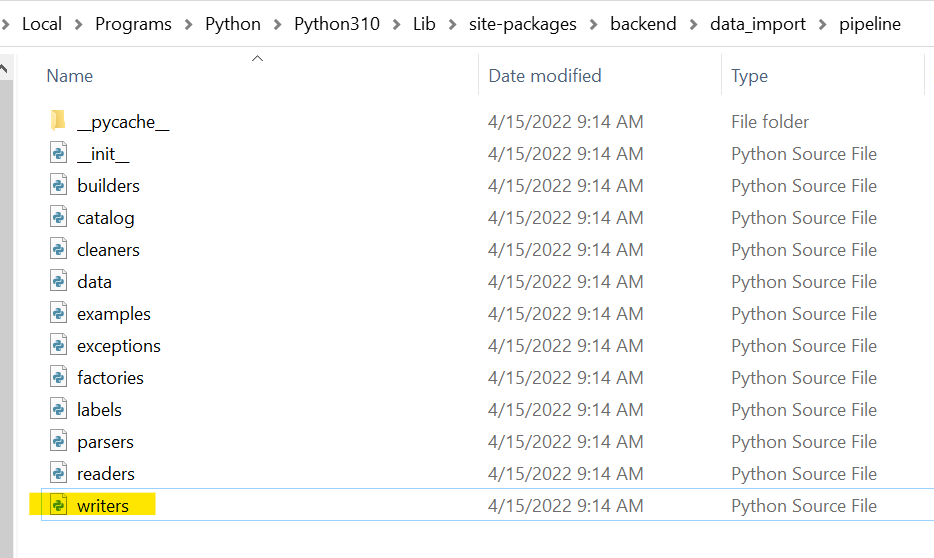

I solved this bug. In the file D:\conda\envs\doccano\Lib\site-packages\backend\data_import\pipeline\writer.py, change the LineWriter class as follows:

    class LineWriter(BaseWriter):
        extension = 'txt'

        def write(self, records: Iterator[Record]) -> str:
            files = {}
            for record in records:
                filename = os.path.join(self.tmpdir, f'{record.user}.{self.extension}')
                if filename not in files:
                    f = open(filename, mode='a', encoding="utf-8")  # changed here
                    files[filename] = f
                f = files[filename]
                line = self.create_line(record)
                f.write(f'{line}\n')
            for f in files.values():
                f.close()
            save_file = self.write_zip(files)
            for file in files:
                os.remove(file)
            return save_file

Also, when exporting the file, do not select "export only approved file".
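A possible reason this change helps, which is an assumption and not something confirmed in this thread: without an explicit `encoding`, `open()` uses the platform's default encoding, which on Windows is often not UTF-8 and may fail on some characters. The sketch below only illustrates writing with an explicit encoding; `write_lines` is a hypothetical helper, not part of doccano.

```python
from typing import Iterable


def write_lines(path: str, lines: Iterable[str]) -> None:
    """Append lines to a file, always encoding them as UTF-8.

    Passing encoding="utf-8" makes the result independent of the
    platform's default text encoding.
    """
    with open(path, mode="a", encoding="utf-8") as f:
        for line in lines:
            f.write(f"{line}\n")
```

Reading the file back with the same `encoding="utf-8"` then returns the original text regardless of the operating system's locale settings.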

|

Fixed #1754 |
