
Compiling on host. #63

Comments

|

Hi bpinaya, look for `CUDA_NVCC_FLAGS` in CMakeLists.txt and add `-std=c++11` to it, as shown in another comment: set(
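A minimal sketch of that change, assuming the FindCUDA-style `set(CUDA_NVCC_FLAGS ...)` block and `cuda_add_library()` call that appear elsewhere in this thread. As we understand it, `CMAKE_CXX_FLAGS` is aimed at the host C++ compiler, while nvcc's own front end takes its language dialect from `CUDA_NVCC_FLAGS`, so setting `-std=c++11` only in `CMAKE_CXX_FLAGS` may not be enough for the `.cu` files:

```cmake
# Hypothetical addition to CMakeLists.txt: place it after the existing
# set(CUDA_NVCC_FLAGS ...) block and before cuda_add_library(), so the
# flag is already in the list when the CUDA targets are created.
list(APPEND CUDA_NVCC_FLAGS -std=c++11)

# Optional: print the final flag list during `cmake ../` to confirm it.
message(STATUS "CUDA_NVCC_FLAGS: ${CUDA_NVCC_FLAGS}")
```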

|

@abdo-abaco, thanks for the quick response. I tried that, but I am still getting stuck. I have a TX1 where I was successfully running this, but it fails on the host computer. If you know of any other TensorRT examples, I'd be thankful!

|

I am also trying to compile this on a host computer. The IDE is NVIDIA's Nsight Eclipse Edition, the host computer runs Ubuntu 14.04, and I connect the TX1 and the host over SSH. Following this part of CMakeLists.txt, I included the libraries and the .h files: `# build C/C++ interface`, `file(GLOB inferenceSources *.cpp *.cu util/*.cpp util/camera/*.cpp util/cuda/*.cu util/display/*.cpp)`, `cuda_add_library(jetson-inference SHARED ${inferenceSources})`. But now I can't compile it. What is the error?

|

@bpinaya, it should compile with the correct libraries installed. Make sure TensorRT is installed and try https://github.com/Abaco-Systems/jetson-inference-gv, which has webcam support. It should compile as well; I am currently working on getting it to run on the host.

|

I got this demo to run on the host, so you don't need the Abaco one. Go to CMakeLists.txt and add the following to `CUDA_NVCC_FLAGS`: `-gencode arch=compute_50,code=sm_50`. Another note: make sure you have the host tools from JetPack installed. Not sure if this helps.

|

This is the error I get while compiling jetson-inference. Could someone please help me find a way out?

|

To run jetson-inference on the host (which is not officially supported), you need NVIDIA TensorRT (https://developer.nvidia.com/tensorrt) and GStreamer, and both are missing from your setup.
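As a sketch of how these missing pieces could be caught earlier, a configure-time check along these lines would stop `cmake ../` with a readable message instead of letting `make` fail on `NvInfer.h`. `GIE_PATH` is the TensorRT variable this project's CMakeLists.txt already uses; `NVINFER_INCLUDE_DIR` and the `GST` prefix are names chosen for illustration, and the snippet assumes `pkg-config` is installed:

```cmake
# Hypothetical configure-time checks for CMakeLists.txt (not part of the repo).
# find_path() searches the HINTS first, then the standard include
# directories, including /usr/include/<multiarch-triplet> on Ubuntu.
find_path(NVINFER_INCLUDE_DIR NvInfer.h HINTS ${GIE_PATH}/include)
if(NOT NVINFER_INCLUDE_DIR)
  message(FATAL_ERROR "NvInfer.h not found: install TensorRT or set GIE_PATH")
endif()

# pkg-config reports the GStreamer/GLib include dirs for this machine,
# including the architecture-specific directory that holds glibconfig.h.
find_package(PkgConfig REQUIRED)
pkg_check_modules(GST REQUIRED gstreamer-1.0 gstreamer-app-1.0)
message(STATUS "GStreamer include dirs: ${GST_INCLUDE_DIRS}")
```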

From: sulth [mailto:notifications@github.com]

Sent: Friday, June 09, 2017 9:14 AM

To: dusty-nv/jetson-inference

Cc: Subscribed

Subject: Re: [dusty-nv/jetson-inference] Compiling on host. (#63)

admin@apnsfoml01:~/jetson-inference/build$ sudo make -j8

[ 5%] Building CXX object CMakeFiles/jetson-inference.dir/imageNet.cpp.o

[ 5%] Building CXX object CMakeFiles/jetson-inference.dir/detectNet.cpp.o

[ 8%] Building CXX object CMakeFiles/jetson-inference.dir/segNet.cpp.o

[ 10%] Building CXX object CMakeFiles/jetson-inference.dir/util/camera/gstUtility.cpp.o

In file included from /home/admin/jetson-inference/detectNet.h:9:0,

from /home/admin/jetson-inference/detectNet.cpp:5:

/home/admin/jetson-inference/tensorNet.h:9:21: fatal error: NvInfer.h: No such file or directory

#include "NvInfer.h"

^

compilation terminated.

In file included from /home/admin/jetson-inference/segNet.h:9:0,

from /home/admin/jetson-inference/segNet.cpp:5:

/home/admin/jetson-inference/tensorNet.h:9:21: fatal error: NvInfer.h: No such file or directory

#include "NvInfer.h"

^

compilation terminated.

CMakeFiles/jetson-inference.dir/build.make:1373: recipe for target 'CMakeFiles/jetson-inference.dir/detectNet.cpp.o' failed

make[2]: *** [CMakeFiles/jetson-inference.dir/detectNet.cpp.o] Error 1

make[2]: *** Waiting for unfinished jobs....

In file included from /usr/include/glib-2.0/glib/galloca.h:32:0,

from /usr/include/glib-2.0/glib.h:30,

from /usr/include/gstreamer-1.0/gst/gst.h:27,

from /home/admin/jetson-inference/util/camera/gstUtility.h:9,

from /home/admin/jetson-inference/util/camera/gstUtility.cpp:5:

/usr/include/glib-2.0/glib/gtypes.h:32:24: fatal error: glibconfig.h: No such file or directory

[ 13%] Building CXX object CMakeFiles/jetson-inference.dir/tensorNet.cpp.o

compilation terminated.

CMakeFiles/jetson-inference.dir/build.make:1421: recipe for target 'CMakeFiles/jetson-inference.dir/segNet.cpp.o' failed

make[2]: *** [CMakeFiles/jetson-inference.dir/segNet.cpp.o] Error 1

In file included from /home/admin/jetson-inference/imageNet.h:9:0,

from /home/admin/jetson-inference/imageNet.cpp:5:

/home/admin/jetson-inference/tensorNet.h:9:21: fatal error: NvInfer.h: No such file or directory

#include "NvInfer.h"

^

compilation terminated.

CMakeFiles/jetson-inference.dir/build.make:1517: recipe for target 'CMakeFiles/jetson-inference.dir/util/camera/gstUtility.cpp.o' failed

make[2]: *** [CMakeFiles/jetson-inference.dir/util/camera/gstUtility.cpp.o] Error 1

CMakeFiles/jetson-inference.dir/build.make:1397: recipe for target 'CMakeFiles/jetson-inference.dir/imageNet.cpp.o' failed

make[2]: *** [CMakeFiles/jetson-inference.dir/imageNet.cpp.o] Error 1

[ 16%] Building CXX object CMakeFiles/jetson-inference.dir/util/camera/gstCamera.cpp.o

In file included from /home/admin/jetson-inference/tensorNet.cpp:5:0:

/home/admin/jetson-inference/tensorNet.h:9:21: fatal error: NvInfer.h: No such file or directory

#include "NvInfer.h"

^

compilation terminated.

CMakeFiles/jetson-inference.dir/build.make:1445: recipe for target 'CMakeFiles/jetson-inference.dir/tensorNet.cpp.o' failed

make[2]: *** [CMakeFiles/jetson-inference.dir/tensorNet.cpp.o] Error 1

In file included from /usr/include/glib-2.0/glib/galloca.h:32:0,

from /usr/include/glib-2.0/glib.h:30,

from /usr/include/gstreamer-1.0/gst/gst.h:27,

from /home/admin/jetson-inference/util/camera/gstCamera.h:8,

from /home/admin/jetson-inference/util/camera/gstCamera.cpp:5:

/usr/include/glib-2.0/glib/gtypes.h:32:24: fatal error: glibconfig.h: No such file or directory

compilation terminated.

CMakeFiles/jetson-inference.dir/build.make:1565: recipe for target 'CMakeFiles/jetson-inference.dir/util/camera/gstCamera.cpp.o' failed

make[2]: *** [CMakeFiles/jetson-inference.dir/util/camera/gstCamera.cpp.o] Error 1

CMakeFiles/Makefile2:67: recipe for target 'CMakeFiles/jetson-inference.dir/all' failed

make[1]: *** [CMakeFiles/jetson-inference.dir/all] Error 2

Makefile:127: recipe for target 'all' failed

make: *** [all] Error 2


|

|

Thank you. So should I install jetson-inference on the board rather than on the host PC?

|

Now I tried installing jetson-inference on the Jetson TX2 board, and I get the errors below. Could you please help me build it? During `cmake ../` I get: `-- Configuring incomplete, errors occurred!`

|

OK, it looks like the Qt dev package is missing. Can you try running this from the command line:

`sudo apt-get install -y libqt4-dev qt4-dev-tools libglew-dev glew-utils libgstreamer1.0-dev libgstreamer-plugins-base1.0-dev libglib2.0-dev`

This should also have already been run by the `CMakePreBuild.sh` script during the `cmake ../` step, so you may want to delete the build directory and try again.

From: sulth [mailto:notifications@github.com]

Sent: Friday, June 09, 2017 9:39 AM

To: dusty-nv/jetson-inference

Cc: Dustin Franklin; Comment

Subject: Re: [dusty-nv/jetson-inference] Compiling on host. (#63)

Now I tried installing jetson-inference on the Jetson TX2 board, and I get the errors below. Could you please help me build it? During `cmake ../`:

FCN-Alexnet-Aerial-FPV-720p/snapshot_iter_10280.caffemodel

FCN-Alexnet-Aerial-FPV-720p/fpv-labels.txt

FCN-Alexnet-Aerial-FPV-720p/fpv-deploy-colors.txt

FCN-Alexnet-Aerial-FPV-720p/fcn_alexnet.deploy.prototxt

FCN-Alexnet-Aerial-FPV-720p/fcn_alexnet.digits.deploy.prototxt

FCN-Alexnet-Aerial-FPV-720p/fpv-training-colors.txt

[Pre-build] Finished CMakePreBuild script

Finished installing dependencies

qmake: could not exec '/usr/lib/aarch64-linux-gnu/qt4/bin/qmake': No such file or directory

CMake Error at /usr/share/cmake-3.5/Modules/FindQt4.cmake:1326 (message):

Found unsuitable Qt version "" from NOTFOUND, this code requires Qt 4.x

Call Stack (most recent call first):

CMakeLists.txt:24 (find_package)

-- Configuring incomplete, errors occurred!

See also "/home/nvidia/jetson-inference/build/CMakeFiles/CMakeOutput.log".

nvidia@tegra-ubuntu:~/jetson-inference/build$


|

|

Thanks, I have installed all those packages except one: `Package 'glew-utils' has no installation candidate`. The configure step still fails in the location /usr/lib/aarch64-linux-gnu: `-- Configuring incomplete, errors occurred!`

|

`CMake Error: The following variables are used in this project, but they are set to NOTFOUND.` `-- Configuring incomplete, errors occurred!` Could you please help me analyze the problem? I am stuck. Is it a problem with my JetPack installation?

|

Hi, any follow-up? I have the same problem and am stuck here too.

|

Hello @dusty-nv @bpinaya @abdo-abaco, I am trying to run jetson-inference on a host machine and got stuck with this error. I have installed TensorRT, GStreamer, and all the other CUDA-related requirements. Has anyone successfully installed this on a host? If so, could you see what I am doing wrong here?

|

@trohit920, it should work with @abdo-abaco's tips; in the end I got it compiling on a fresh Ubuntu 16.04 install. Also, to get a better grasp of TensorRT, I think it's a good idea to check their dev-guide.

|

Thanks for your reply, but it's not working for me! I have CUDA 9 and a GTX 1080 on the host side, running Ubuntu 16.04.

|

Did you compile your code with C++11, as per the suggestions above? Some of the errors you'll have to fix yourself; just check the output. For one of them, check this link: you just have to include the vector library with `#include <vector>`. Your first error concerns a conversion from a string to a `gchar`; you can find more here.

I remember struggling to get it to compile on the host, but it depends on what you want to do with it. I just wanted to learn more about TensorRT, and ended up using their dev-guide and building my own examples. If you want to use object detection with TensorRT, it might be worthwhile to get it compiling on the host; but if you just want to learn more about TensorRT, I recommend starting from the code here and the dev-guide. Good luck!

|

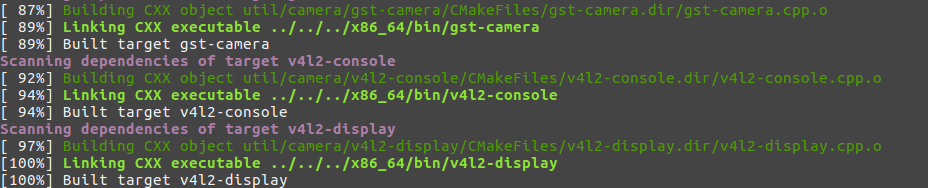

@trohit920, I just cloned the repo again and built it on the host; it seems easier to build now. I'll post some notes so that you can build it yourself. To build on the host, I had to make the following extra installs:

Afterward, run `cmake` and `make`, and you'll have some issues with those two libraries not being found. Check this section of CMakeLists.txt, starting at `# build C/C++ interface`:

`include_directories(${PROJECT_INCLUDE_DIR} ${GIE_PATH}/include)`

`include_directories(/usr/include/gstreamer-1.0 /usr/lib/aarch64-linux-gnu/gstreamer-1.0/include /usr/include/glib-2.0 /usr/include/libxml2 /usr/lib/aarch64-linux-gnu/glib-2.0/include/)`

`file(GLOB inferenceSources *.cpp *.cu util/*.cpp util/camera/*.cpp util/cuda/*.cu util/display/*.cpp)`

`file(GLOB inferenceIncludes *.h util/*.h util/camera/*.h util/cuda/*.h util/display/*.h)`

`cuda_add_library(jetson-inference SHARED ${inferenceSources})`

`target_link_libraries(jetson-inference nvcaffe_parser nvinfer Qt4::QtGui GL GLEW gstreamer-1.0 gstapp-1.0) # gstreamer-0.10 gstbase-0.10 gstapp-0.10`

So you can see what it's looking for. If you have further problems building it, let me know. Hopefully this helps other folks trying to build on the host, which is useful if you don't have a Jetson on hand and want to try TensorRT out.
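The `aarch64-linux-gnu` directories hard-coded in that `include_directories()` call only exist on the Jetson. A hedged sketch of a more portable variant, assuming an Ubuntu-style multiarch layout: `CMAKE_LIBRARY_ARCHITECTURE` is a standard CMake variable that is typically `aarch64-linux-gnu` on the Jetson and `x86_64-linux-gnu` on a desktop host, and it is only populated after `project()` has enabled the compilers:

```cmake
# Sketch: same include directories as the repo's CMakeLists.txt, but using
# the multiarch triplet CMake detected instead of hard-coding aarch64.
# Must appear after project() so CMAKE_LIBRARY_ARCHITECTURE is set.
if(NOT CMAKE_LIBRARY_ARCHITECTURE)
  message(WARNING "CMAKE_LIBRARY_ARCHITECTURE is empty; check the paths below")
endif()

include_directories(
  /usr/include/gstreamer-1.0
  /usr/lib/${CMAKE_LIBRARY_ARCHITECTURE}/gstreamer-1.0/include
  /usr/include/glib-2.0
  /usr/include/libxml2
  /usr/lib/${CMAKE_LIBRARY_ARCHITECTURE}/glib-2.0/include
)
```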

|

And I have a 1080 Ti and CUDA 9.2, but that shouldn't matter at all. The flags block in CMakeLists.txt looks like this: `set(CUDA_NVCC_FLAGS ${CUDA_NVCC_FLAGS}; -O3 -gencode arch=compute_53,code=sm_53 -gencode arch=compute_62,code=sm_62)`. Add the architecture corresponding to your device.

|

I successfully ran `cmake ../` in the build folder, and it reported "Build files have been written to: /home/dc2-user/jetson-inference/build", but when I run `make`, it tells me that the function `gst_structure_get_list` was not declared.
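One way to narrow this down, offered as a hedged sketch, is to check which GStreamer version the build actually sees. Our understanding (please confirm against the GStreamer API reference) is that `gst_structure_get_list()` was only added in GStreamer 1.12, so an older system GStreamer would be consistent with this "not declared" error. The `GST` prefix below is a name chosen for illustration:

```cmake
# Hypothetical diagnostic for CMakeLists.txt; requires pkg-config.
find_package(PkgConfig REQUIRED)
pkg_check_modules(GST REQUIRED gstreamer-1.0)
message(STATUS "GStreamer version: ${GST_VERSION}")

# Assumption: gst_structure_get_list() needs GStreamer >= 1.12.
if(GST_VERSION VERSION_LESS 1.12)
  message(WARNING "GStreamer ${GST_VERSION} may not declare gst_structure_get_list()")
endif()
```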

Hi there Dustin, I am trying to compile this on a host computer just to see the TensorRT examples; I've been looking around, and this repo seems to present them most clearly.

I am trying the master branch with CUDA 8 and TensorRT installed, and I am seeing errors like these:

`:0:7: warning: ISO C99 requires whitespace after the macro name [enabled by default]`

`:0:7: warning: ISO C99 requires whitespace after the macro name [enabled by default]`

`:0:7: warning: ISO C99 requires whitespace after the macro name [enabled by default]`

`:0:7: warning: ISO C99 requires whitespace after the macro name [enabled by default]`

`/usr/lib/gcc/x86_64-linux-gnu/4.8/include/stddef.h(432): error: identifier "nullptr" is undefined`

`/usr/lib/gcc/x86_64-linux-gnu/4.8/include/stddef.h(432): error: expected a ";"`

`/usr/include/x86_64-linux-gnu/c++/4.8/bits/c++config.h(190): error: expected a ";"`

`/usr/include/c++/4.8/exception(63): error: expected a ";"`

`/usr/include/c++/4.8/exception(68): error: expected a ";"`

I assume those are C++11-related errors, but I checked the CMakeLists.txt and found `set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -std=c++11")`. Any tips I could use? All help is appreciated.
