This repository contains material from Udacity's Value-based Methods GitHub repository.

For this project, we train an agent to navigate (and collect bananas!) in a large, square world.

A reward of +1 is provided for collecting a yellow banana, and a reward of -1 is provided for collecting a blue banana. Thus, the goal of your agent is to collect as many yellow bananas as possible while avoiding blue bananas.

The state space has 37 dimensions and contains the agent's velocity, along with ray-based perception of objects around the agent's forward direction. Given this information, the agent has to learn how to best select actions. Four discrete actions are available, corresponding to:

- `0`: move forward.
- `1`: move backward.
- `2`: turn left.
- `3`: turn right.
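DQN agents commonly choose among these four actions with an epsilon-greedy policy: explore with a random action with probability epsilon, otherwise take the action with the highest estimated Q-value. The sketch below assumes the network outputs one Q-value per action for a given 37-dimensional state. The function name `epsilon_greedy` and the example Q-values are illustrative, not taken from this repository's code.

```python
import random
from typing import Sequence

# Action indices as listed above; the names are only for readability.
ACTIONS = {0: "move forward", 1: "move backward", 2: "turn left", 3: "turn right"}


def epsilon_greedy(q_values: Sequence[float], epsilon: float, rng: random.Random) -> int:
    """Pick a random action with probability epsilon, else the greedy one.

    q_values holds one estimated value per discrete action (4 here).
    """
    if not 0.0 <= epsilon <= 1.0:
        raise ValueError("epsilon must be in [0, 1]")
    if rng.random() < epsilon:
        # Explore: uniform choice over all action indices.
        return rng.randrange(len(q_values))
    # Exploit: index of the largest Q-value (ties go to the lowest index).
    return max(range(len(q_values)), key=lambda a: q_values[a])


rng = random.Random(0)
q = [0.1, -0.3, 0.7, 0.2]  # hypothetical Q-values for one state
action = epsilon_greedy(q, epsilon=0.0, rng=rng)
print(action, ACTIONS[action])  # → 2 turn left
```

During training, epsilon is typically decayed from 1.0 toward a small floor so the agent explores early and exploits later.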

The task is episodic, and in order to solve the environment, an agent must get an average score of +13 over 100 consecutive episodes.
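The solving criterion can be checked with a sliding window over episode scores, where each episode's score is the sum of its +1 and -1 rewards. This is a minimal sketch; `episodes_to_solve` is a hypothetical helper, and the learning curve in the example is made up. Some reference implementations report this episode number minus 100 instead, so the exact convention used in this repository may differ.

```python
from collections import deque
from typing import Iterable, Optional

TARGET_SCORE = 13.0  # average score needed to solve the environment
WINDOW = 100         # number of consecutive episodes in the average


def episodes_to_solve(scores: Iterable[float]) -> Optional[int]:
    """Return the 1-based episode at which the average of the last 100 episode
    scores first reaches +13, or None if it never does."""
    window = deque(maxlen=WINDOW)
    for episode, score in enumerate(scores, start=1):
        window.append(score)
        # Only judge once a full window of 100 episodes is available.
        if len(window) == WINDOW and sum(window) / WINDOW >= TARGET_SCORE:
            return episode
    return None


# An episode's score is the sum of its rewards: +1 per yellow, -1 per blue banana.
print(sum([1, 1, -1, 1]))  # → 2

# Hypothetical learning curve: 100 episodes at 10, then 100 episodes at 16.
print(episodes_to_solve([10.0] * 100 + [16.0] * 100))  # → 150
```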

Optional Challenge: Learning from Pixels

This environment is almost identical to the project environment; the only difference is that the state is an 84 x 84 RGB image, corresponding to the agent's first-person view of the environment.
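A single image carries no velocity information, so pixel-based DQN agents often stack several consecutive frames into one state, as in the Atari DQN work. The sketch below assumes numpy, a stack size of 4, and observations shaped either (84, 84, 3) or (1, 84, 84, 3) with a leading batch axis; the class name `FrameStack` is hypothetical, and whether this repository stacks frames at all is an assumption.

```python
from collections import deque

import numpy as np


class FrameStack:
    """Keep the last `k` RGB frames and expose them as one channel-first array.

    Assumes each observation is an 84 x 84 RGB image, optionally with a leading
    batch axis of size 1, i.e. shape (84, 84, 3) or (1, 84, 84, 3).
    """

    def __init__(self, k: int = 4):
        self.k = k
        self.frames = deque(maxlen=k)

    @staticmethod
    def _to_chw(obs: np.ndarray) -> np.ndarray:
        frame = np.asarray(obs, dtype=np.float32)
        if frame.ndim == 4 and frame.shape[0] == 1:
            frame = frame[0]  # drop the leading batch axis of size 1
        if frame.shape != (84, 84, 3):
            raise ValueError(f"expected an 84x84 RGB frame, got {frame.shape}")
        return frame.transpose(2, 0, 1)  # HWC -> CHW, the layout PyTorch conv layers expect

    def reset(self, obs: np.ndarray) -> np.ndarray:
        # At the start of an episode, repeat the first frame k times.
        first = self._to_chw(obs)
        self.frames.clear()
        for _ in range(self.k):
            self.frames.append(first)
        return self.state()

    def step(self, obs: np.ndarray) -> np.ndarray:
        # The deque's maxlen drops the oldest frame automatically.
        self.frames.append(self._to_chw(obs))
        return self.state()

    def state(self) -> np.ndarray:
        # Concatenate along the channel axis: shape (3 * k, 84, 84).
        return np.concatenate(list(self.frames), axis=0)


stack = FrameStack(k=4)
s = stack.reset(np.zeros((1, 84, 84, 3)))
print(s.shape)  # → (12, 84, 84)
```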

To set up your python environment to run the code in this repository, follow the instructions below.

- Create (and activate) a new environment with Python 3.9.

- Linux or Mac:

conda create --name drlnd python=3.9

source activate drlnd

- Windows:

conda create --name drlnd python=3.9

activate drlnd

- Follow the instructions on the PyTorch web page to install PyTorch and its dependencies (PIL, NumPy, ...). For example, on Windows with CUDA 11.6:

conda install pytorch torchvision torchaudio cudatoolkit=11.6 -c pytorch -c conda-forge

- Follow the instructions in the OpenAI Gym repository to perform a minimal install of OpenAI Gym.

- Install the Box2D environment group by following the Box2D instructions in the same repository:

pip install gym[box2d]

- Follow the instructions in Navigation to download the Unity environment.

-

Clone the repository, and navigate to the

python/folder. Then, install several dependencies.

git clone https://github.com/eljandoubi/DQN-Navigation.git

cd DQN-Navigation/python

pip install .- Create an IPython kernel for the

drlndenvironment.

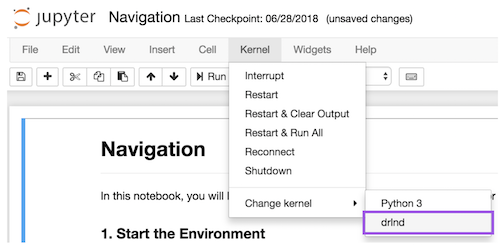

python -m ipykernel install --user --name drlnd --display-name "drlnd"- Before running code in a notebook, change the kernel to match the

drlndenvironment by using the drop-downKernelmenu.
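After the setup steps above, a quick script run inside the activated drlnd environment can confirm that the expected Python version and packages are present. This is a sketch under assumptions: the module list is a guess at what the steps install (in particular, `unityagents` is assumed to come from `pip install .` in the python/ folder), and `check_environment` is a hypothetical helper.

```python
import importlib.util
import sys

# Modules the setup steps are expected to provide (assumed list).
REQUIRED_MODULES = ("numpy", "torch", "gym", "unityagents", "ipykernel")


def check_environment(required=REQUIRED_MODULES, python=(3, 9)):
    """Return a list of human-readable problems; an empty list means all good."""
    problems = []
    if tuple(sys.version_info[:2]) != tuple(python):
        problems.append(
            f"Python {python[0]}.{python[1]} expected, found "
            f"{sys.version_info.major}.{sys.version_info.minor}"
        )
    for name in required:
        # find_spec only locates the module; it does not import it.
        if importlib.util.find_spec(name) is None:
            problems.append(f"module '{name}' is not installed")
    return problems


if __name__ == "__main__":
    issues = check_environment()
    print("\n".join(issues) if issues else "drlnd environment looks ready")
    if importlib.util.find_spec("torch") is not None:
        import torch

        # False here usually means a CPU-only build or a CUDA/driver mismatch.
        print("CUDA available:", torch.cuda.is_available())
```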

You can train and/or run inference on the Navigation (or Navigation Pixels) environment:

First, go to p1_navigation/.

Then, run the training and/or inference cells of Deep_Q_Network_Navigation.ipynb (or Deep_Q_Network_Navigation_Pixels.ipynb for the pixel-based environment).
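Inference boils down to an episode loop over the Unity environment. The sketch below assumes the `unityagents` interface used in Udacity's navigation notebooks (`UnityEnvironment`, `brain_names`, `reset`, `step`, `vector_observations`, `rewards`, `local_done`) and the 37-dimensional vector state; `run_episode` and the environment file path are hypothetical, and the pixel variant would read `visual_observations` instead.

```python
from typing import Callable

import numpy as np


def run_episode(env, policy: Callable[[np.ndarray], int], train_mode: bool = False) -> float:
    """Play one episode and return its score (sum of +1/-1 banana rewards).

    Assumes the unityagents interface: env.brain_names, env.reset(train_mode=...)
    and env.step(action) returning per-brain infos with vector_observations,
    rewards and local_done.
    """
    brain_name = env.brain_names[0]
    env_info = env.reset(train_mode=train_mode)[brain_name]
    state = env_info.vector_observations[0]  # 37-dimensional state
    score = 0.0
    while True:
        action = int(policy(state))
        env_info = env.step(action)[brain_name]
        score += env_info.rewards[0]
        state = env_info.vector_observations[0]
        if env_info.local_done[0]:  # the episode has finished
            return score


# Usage with a random policy (path is hypothetical):
# from unityagents import UnityEnvironment
# env = UnityEnvironment(file_name="path/to/Banana")
# rng = np.random.default_rng(0)
# print(run_episode(env, lambda s: rng.integers(4)))
# env.close()
```

For inference with a trained agent, `policy` would return the greedy action from the loaded Q-network instead of a random one.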

The pre-trained model with the highest score is stored in Navigation_checkpoint (Navigation_Pixels_checkpoint for the pixel-based environment).
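Keeping only the highest-scoring model can be done by saving whenever the rolling 100-episode average reaches a new high. This is one common approach, sketched under the assumption that "highest score" means that rolling average; `BestCheckpoint` and `save_fn` are hypothetical names, and the repository may select its checkpoint differently.

```python
from collections import deque
from typing import Callable, Deque


class BestCheckpoint:
    """Call `save_fn` whenever the rolling average score reaches a new high.

    `save_fn` is a hypothetical hook; with PyTorch it could wrap
    torch.save(model.state_dict(), path).
    """

    def __init__(self, save_fn: Callable[[float], None], window: int = 100):
        self.save_fn = save_fn
        self.scores: Deque[float] = deque(maxlen=window)
        self.best = float("-inf")

    def update(self, episode_score: float) -> bool:
        """Record one episode score; return True if a checkpoint was saved."""
        self.scores.append(episode_score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough episodes for a full average yet
        average = sum(self.scores) / len(self.scores)
        if average > self.best:
            self.best = average
            self.save_fn(average)
            return True
        return False


saved = []
tracker = BestCheckpoint(saved.append, window=2)  # tiny window for illustration
print([tracker.update(s) for s in [1.0, 3.0, 2.0, 5.0, 0.0]])  # → [False, True, True, True, False]
print(saved)  # → [2.0, 2.5, 3.5]
```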

The implementation and results are discussed in the report.