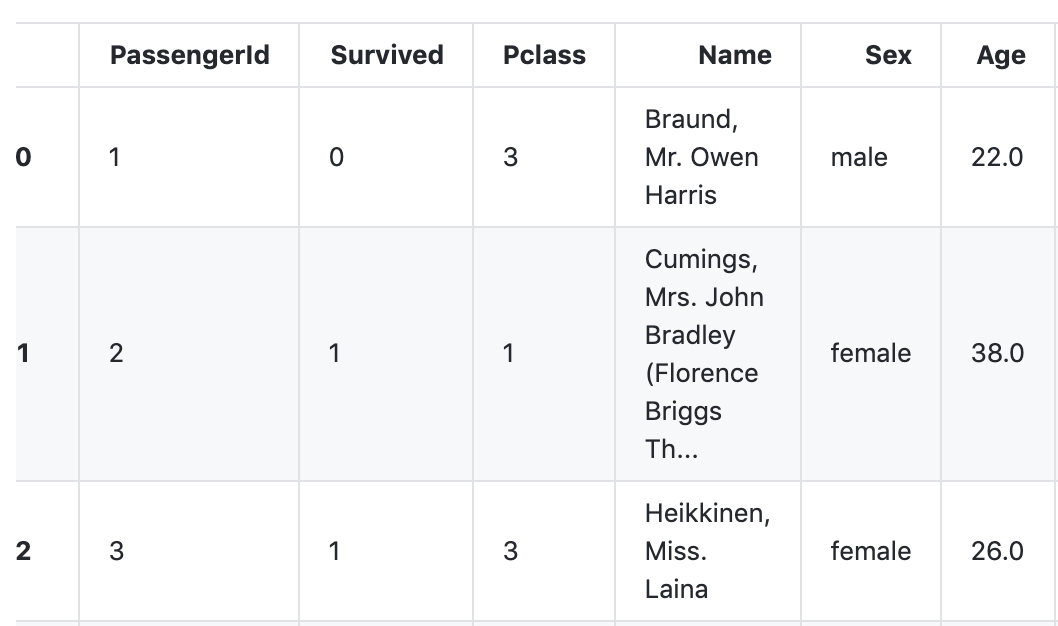

I'm playing with a toy data science problem on Kaggle using Copilot. Overall, it appears immensely useful for quick data exploration and transformation. However, I've encountered a weird quirk.

There is a column named 'Sex' in the training data. As soon as Copilot sees the word 'Sex' in the code, it stops working. E.g., when I add this line to my notebook, Copilot completions stop working for all lines following it (they still work for the preceding lines).

Is this some kind of content filter misbehaving? Is this behavior coming from OpenAI, or is it something on the Copilot side? Is it supposed to work like this?

UPD: I tried to create a minimal reproducible test for this weirdness. Here is the whole content of the file (

Cases when completion stops working:

Cases when completion works fine:

Is this some kind of gender discrimination prevention mechanism or am I just seeing things?


Replies: 1 comment 2 replies


OK, after some research, I discovered that there is indeed a content filter that, at this point, appears to be pretty rudimentary (as in, it produces false positives on innocuous words, while it can be easily circumvented by string concatenation).

I just hope that this filter is improved in the future, so innocuous words such as 'boy' won't break Copilot altogether.
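For anyone hitting the same thing, here is a rough sketch of what the string concatenation workaround might look like in a pandas notebook. The DataFrame contents and the `SEX_COL` name are made up for illustration; only the column name itself comes from the original post. I can't promise this keeps completions alive in every case, since the filter's exact rules aren't documented here.

```python
import pandas as pd

# Build the column name from two pieces so the literal word
# never appears as a single token in the source file.
SEX_COL = "S" + "ex"

# Hypothetical stand-in for the Kaggle training data.
df = pd.DataFrame({
    SEX_COL: ["male", "female", "female"],
    "Age": [22, 38, 26],
})

# From here on, refer to the column only through the constant.
counts = df[SEX_COL].value_counts()
print(counts)
```

Defining the name once near the top of the notebook keeps the workaround in a single place, so it is easy to drop if the filter gets fixed.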

