Why does train_text_to_image.py perform so differently from the CompVis script? #1153

Comments

|

Thanks a lot for posting the question here @john-sungjin! Sorry, for the moment we try to keep everything on GitHub. From the discussion it seems like many people are encountering your issue, so let's spend some time figuring out what's going on. @patil-suraj do you think you might have some time soon to look into it? If not, I can try to allocate time (or maybe cc @anton-l) |

|

Thanks for posting the detailed issue @john-sungjin ! As you said, the implementation is very similar to the compvis one. The one difference that I'm aware of is that, in the compvis script, for example the Pokemon fine-tuning script, the model is initialised from the I am going to add an option which enables loading both the non-ema (for training) and ema (for EMA updates) in diffusers script and then compare again. Will report here as soon as possible :) |

|

I had the same problem, looking forward to your experiment. |

|

same problem |

|

Mark it |

|

Going to update the script soon. I am getting good results with the script now, see for example the emoji model |

|

Hi @patil-suraj , Here are my code snippets: |

|

Any update? The results are bad, at least on the Pokemon dataset. |

|

Ping @patil-suraj here |

|

@patil-suraj can you please make the required updates? Also cc @williamberman |

|

The problem goes away in the latest 0.10.0 version. But it would be helpful to show which specific modifications make it work. |

|

Another ping here @patil-suraj - could you look into this? Should we show how to use the EMA weights? |

|

Super sorry for being late here. I think using non-ema weights for training and ema weights for EMA updates will fix this issue. Also, the script is working for some users (and me also) as is, for example, @Norod has gotten good results with it, see https://huggingface.co/Norod78/sd2-simpsons-blip. Here's the fix I'm proposing.
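As a rough illustration of the idea only (this is not the actual patch), here is a minimal sketch that assumes a hypothetical `ema_update` helper and uses plain Python floats in place of model tensors. The trainable copy starts from the non-EMA weights, the shadow copy starts from the EMA weights, and after each optimizer step the shadow copy is moved toward the trainable one.

```python
def ema_update(shadow, params, decay=0.999):
    """Hypothetical helper: move each shadow weight toward the trained weight, in place."""
    for name, value in params.items():
        shadow[name] = decay * shadow[name] + (1.0 - decay) * value
    return shadow


# Illustrative stand-ins for the two checkpoints (assumed values, not real weights).
train_params = {"w": 1.0}  # initialised from the non-EMA weights; this copy gets gradients
ema_params = {"w": 0.5}    # initialised from the EMA weights; only updated via ema_update

for step in range(3):
    train_params["w"] -= 0.1  # stand-in for one optimizer step on the trainable copy
    ema_update(ema_params, train_params, decay=0.9)
```

The point of keeping two copies is that gradients are only ever applied to the non-EMA weights, while the EMA weights stay a smoothed average that is used for saving and inference.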

|

|

Also, make sure to pass the |

|

WIP PR is here #1834, will start running some experiments with it. |

|

Have started a training run with SD1.5, and will get to know the results after 5 hours. |

|

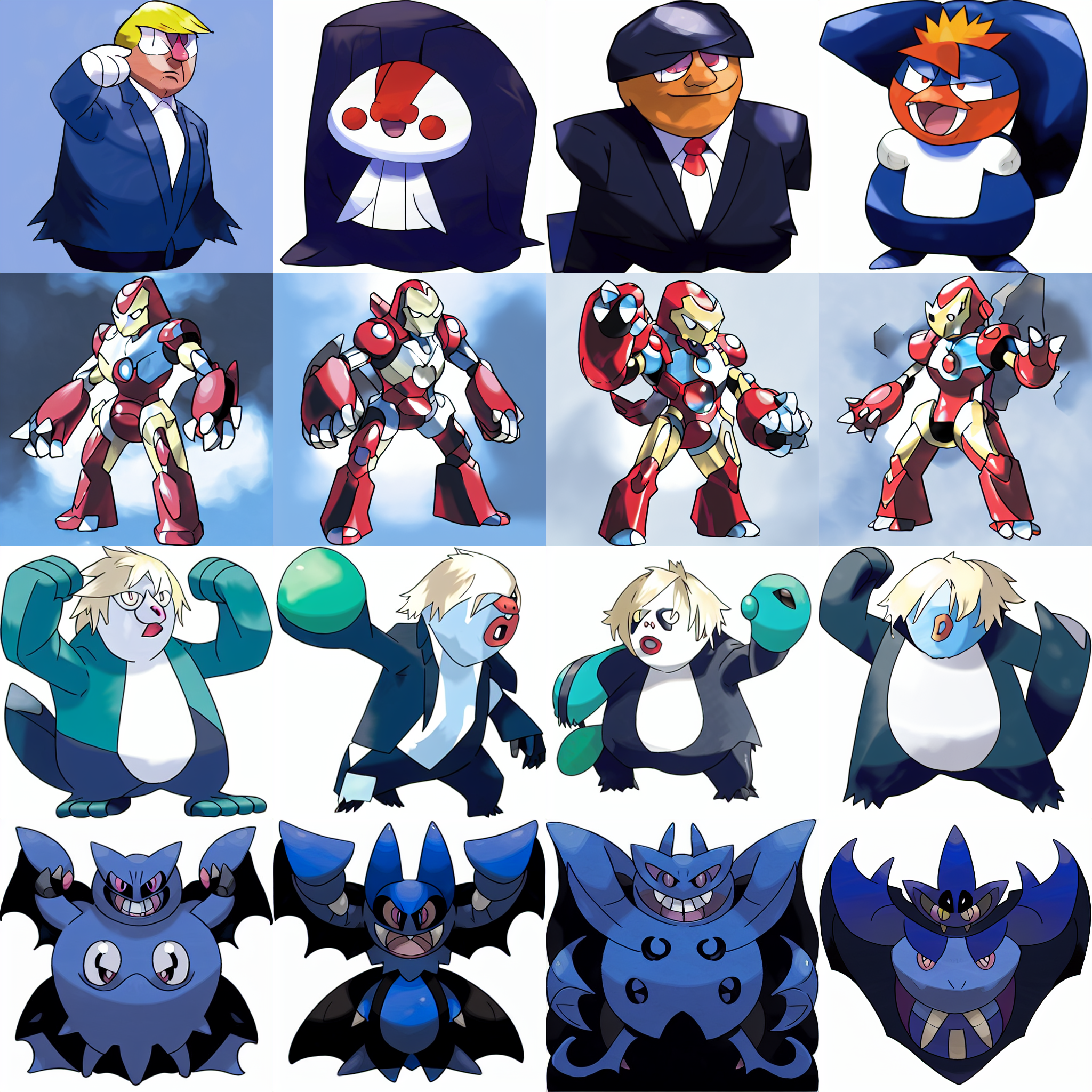

Hey everyone, we merged two PRs today that should fix the issues with the script and make it perform similarly to the CompVis script. One of them is #1868, which fixes a subtle bug with EMA updates. With these changes I trained a model on the Pokemon dataset and the results are looking good now! (Guess the prompts :-) ) Here's the model if you want to try it yourself. I trained it for about 140 epochs on 2 A100s; here's the command I used:

    export MODEL_NAME="CompVis/stable-diffusion-v1-4"
    export NOM_EMA_REVISION="non-ema"
    export DATASET_NAME="lambdalabs/pokemon-blip-captions"
    export WANDB_PROJECT="stable-diffusion-pokemon"

    accelerate launch --multi_gpu --gpu_ids="0,1" --mixed_precision="no" \
      ../diffusers/examples/text_to_image/train_text_to_image.py \
      --pretrained_model_name_or_path=$MODEL_NAME \
      --non_ema_revision=$NOM_EMA_REVISION \
      --dataset_name=$DATASET_NAME --caption_column="text" \
      --resolution=512 --random_flip \
      --train_batch_size=12 --gradient_checkpointing \
      --max_train_steps=5000 --checkpointing_steps=500 \
      --learning_rate=1e-04 --use_ema \
      --lr_scheduler="constant" --lr_warmup_steps=0 \
      --output_dir="models/pokemon-model" --seed=34637847 \
      --allow_tf32 \
      --enable_xformers_memory_efficient_attention

The script should work well now, but if you still have issues with it, feel free to re-open this issue :) |

|

Cool thanks. In my experiment, I got the same results, the setting was as below: |

I posted about this on the forum but didn't get any useful feedback - would love to hear from someone who knows the ins and outs of the diffusers codebase!

https://discuss.huggingface.co/t/discrepancies-between-compvis-and-diffuser-fine-tuning/25556

To summarize the post: the train_text_to_image.py script and the original CompVis repo perform very differently when fine-tuning on the same dataset with the same hyperparameters. I'm trying to reproduce the Lambda Labs Pokemon fine-tuning results and having difficulty doing so (picture results in forum post).

I've been digging into the implementations and I'm not noticing any obvious differences in how the models are trained, how losses are calculated, etc. - so what explains the large behavioral discrepancies?

Would really appreciate any insight on what might be causing this.
