This guide introduces the mathematical operations that we can perform on vectors and matrices and gives you a feel for the power of linear algebra. Many complex problems in science, business, and technology can be described in terms of vectors and matrices, so it is important to understand how they work.

Note: You can easily convert this markdown file to a PDF in VSCode using the Markdown PDF extension.

-

- i. Vector operations

- ii. Matrix operations

- iii. Matrix-vector product

- iv. Linear transformations

- v. Fundamental vector spaces

-

- i. Solving systems of equations

- ii. Systems of equations as matrix equations

- Computing the Inverse of a Matrix

- i. Using row operations

- ii. Using elementary matrices

- iii. Transpose of a Matrix

-

- i. Basis

- ii. Matrix representations of linear transformations

- iii. Dimension and Basis for Vector Spaces

- iv. Row space, column space, and rank of a matrix

- v. Invertible matrix theorem

- vi. Determinants

- vii. Eigenvalues and eigenvectors

- viii. Linear Regression

Linear algebra is the math of vectors and matrices. The only prerequisite for this guide is a basic understanding of high school math concepts like numbers, variables, equations, and the fundamental arithmetic operations on real numbers: addition (denoted +), subtraction (denoted −), multiplication (denoted implicitly), and division (fractions). You should also be familiar with functions that take real numbers as inputs and give real numbers as outputs, $f : \mathbb{R} \to \mathbb{R}$.

Linear Algebra - Online Courses | Harvard University

Linear Algebra | MIT Open Learning Library

Top Linear Algebra Courses on Coursera

Mathematics for Machine Learning: Linear Algebra on Coursera

Top Linear Algebra Courses on Udemy

Learn Linear Algebra with Online Courses and Classes on edX

The Math of Data Science: Linear Algebra Course on edX

Linear Algebra in Twenty Five Lectures | UC Davis

Linear Algebra | UC San Diego Extension

Linear Algebra for Machine Learning | UC San Diego Extension

Introduction to Linear Algebra, Interactive Online Video | Wolfram

Linear Algebra Resources | Dartmouth

We now define the math operations for vectors. The operations we can perform on vectors $\vec{u} = (u_1, u_2, u_3)$ and $\vec{v} = (v_1, v_2, v_3)$ are addition, subtraction, scaling, norm (length), dot product, and cross product.

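The dot product and the cross product of two vectors can also be described in terms of the angle θ between the two vectors: $\vec{u} \cdot \vec{v} = \|\vec{u}\| \|\vec{v}\| \cos\theta$ and $\|\vec{u} \times \vec{v}\| = \|\vec{u}\| \|\vec{v}\| \sin\theta$.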
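The operations listed above can be sketched in plain Python. This is a minimal illustration for 3-vectors stored as tuples; the function names (`add`, `scale`, `angle`, and so on) are chosen here for the example and do not come from any library.

```python
import math

def add(u, v):
    """Component-wise sum u + v."""
    return tuple(a + b for a, b in zip(u, v))

def sub(u, v):
    """Component-wise difference u - v."""
    return tuple(a - b for a, b in zip(u, v))

def scale(alpha, u):
    """Multiply every component of u by the scalar alpha."""
    return tuple(alpha * a for a in u)

def dot(u, v):
    """Dot product: sum of the component-wise products."""
    return sum(a * b for a, b in zip(u, v))

def norm(u):
    """Euclidean length of u."""
    return math.sqrt(dot(u, u))

def cross(u, v):
    """Cross product of two 3-vectors."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def angle(u, v):
    """Angle between u and v, from u.v = |u||v|cos(theta)."""
    return math.acos(dot(u, v) / (norm(u) * norm(v)))

u = (1.0, 0.0, 0.0)
v = (1.0, 1.0, 0.0)
theta = angle(u, v)  # these two vectors are 45 degrees apart
```

With these two vectors, `norm(cross(u, v))` agrees with `norm(u) * norm(v) * math.sin(theta)` up to floating-point rounding.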

Vector Operations. Source: slideserve

Vector Operations. Source: pinterest

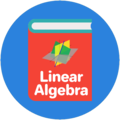

We denote by A the matrix as a whole and refer to its entries as $a_{ij}$. The mathematical operations defined for matrices are the following:

• determinant (denoted det(A) or |A|)

Note that the matrix product is not a commutative operation: in general, AB ≠ BA.
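A small sketch of why the order of a matrix product matters, using plain Python lists of rows. The helper `matmul` and the two example matrices are made up for this illustration.

```python
def matmul(A, B):
    """Matrix product of A (n x m) and B (m x p), both given as lists of rows."""
    n, m, p = len(A), len(B), len(B[0])
    return [[sum(A[i][k] * B[k][j] for k in range(m)) for j in range(p)]
            for i in range(n)]

A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]   # swaps columns when multiplied on the right, rows on the left

AB = matmul(A, B)  # [[2, 1], [4, 3]]: the columns of A are swapped
BA = matmul(B, A)  # [[3, 4], [1, 2]]: the rows of A are swapped
```

Since `AB` and `BA` differ, this pair of matrices does not commute.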

Matrix Operations. Source: SDSU Physics

Check for modules that allow Matrix Operations. Source: DPS Concepts

The matrix-vector product is an important special case of the matrix product.

There are two fundamentally different yet equivalent ways to interpret the matrix-vector product. In the column picture, (C), the multiplication of the matrix $A$ by the vector $\vec{x}$ produces a linear combination of the columns of the matrix: $\vec{y} = A\vec{x} = x_1 A_{[:,1]} + x_2 A_{[:,2]}$, where $A_{[:,1]}$ and $A_{[:,2]}$ are the first and second columns of the matrix $A$. In the row picture, (R), multiplication of the matrix $A$ by the vector $\vec{x}$ produces a column vector with coefficients equal to the dot products of the rows of the matrix with the vector $\vec{x}$.
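The two pictures can be compared side by side. The sketch below computes the same product both ways in plain Python; the function names and the example matrix are illustrative only, and the code indexes columns from 0 where the text counts from 1.

```python
A = [[1, 2],
     [3, 4],
     [5, 6]]
x = [10, 1]

def column(A, j):
    """Return column j of A (0-based) as a list."""
    return [row[j] for row in A]

def matvec_columns(A, x):
    """Column picture: y = x[0]*A[:,0] + x[1]*A[:,1] + ..."""
    y = [0] * len(A)
    for j, xj in enumerate(x):
        y = [yi + xj * ci for yi, ci in zip(y, column(A, j))]
    return y

def matvec_rows(A, x):
    """Row picture: each entry of y is the dot product of a row of A with x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

y_c = matvec_columns(A, x)  # [12, 34, 56]
y_r = matvec_rows(A, x)     # [12, 34, 56]
```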

Matrix-vector product. Source: wikimedia

Matrix-vector Product. Source: mathisfun

The matrix-vector product is used to define the notion of a linear transformation, which is one of the key notions in the study of linear algebra. Multiplication by a matrix $A \in \mathbb{R}^{m \times n}$ can be thought of as computing a linear transformation $T_A$ that takes n-vectors as inputs and produces m-vectors as outputs: $T_A : \mathbb{R}^n \to \mathbb{R}^m$, with $T_A(\vec{x}) = A\vec{x}$.
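What makes such a transformation "linear" is that it respects linear combinations: T(αx + βy) = αT(x) + βT(y). The sketch below checks this property numerically for one made-up 2×3 matrix, so it maps 3-vectors to 2-vectors. The function `T` and all the values are chosen only for illustration.

```python
def T(A, x):
    """Apply the transformation defined by A: returns the product A x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

A = [[1, 0, 2],
     [0, 3, -1]]        # 2 x 3, so T maps R^3 to R^2
x = [1, 2, 3]
y = [4, 0, -1]
alpha, beta = 2, -3

# Left side: transform the linear combination alpha*x + beta*y.
combo = [alpha * xi + beta * yi for xi, yi in zip(x, y)]
lhs = T(A, combo)

# Right side: take the same linear combination of the transformed vectors.
rhs = [alpha * a + beta * b for a, b in zip(T(A, x), T(A, y))]
```

Both sides come out as `[8, 3]`. This checks a single case and is not a proof of linearity.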

Linear Transformations. Source: slideserve

Elementary matrices for linear transformations in R^2. Source: Quora

Fundamental theorem of linear algebra for Vector Spaces. Source: wikimedia

Fundamental theorem of linear algebra. Source: wolfram

System of Linear Equations by Graphing. Source: slideshare

Systems of equations as matrix equations. Source: mathisfun

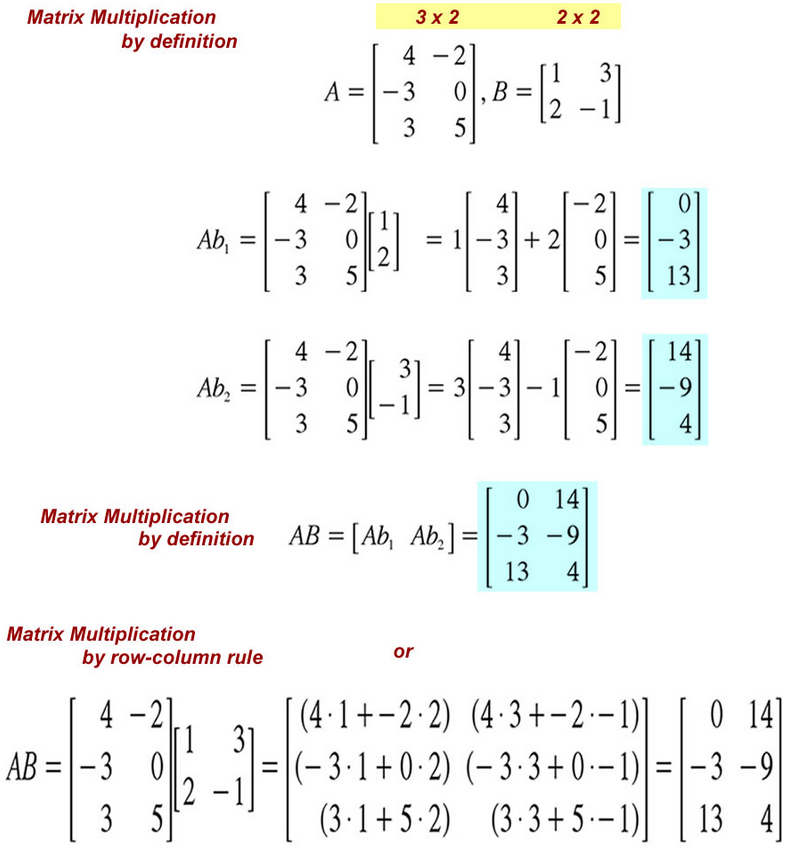

In this section we’ll look at several different approaches for computing the inverse of a matrix. The matrix inverse is unique, so no matter which method we use to find it, we’ll always obtain the same answer.

Inverse of 2x2 Matrix. Source: pinterest

One approach for computing the inverse is to use the Gauss–Jordan elimination procedure.
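One way to sketch this procedure in code is to build the augmented array [A | I] and row-reduce it until the left half becomes the identity; the right half is then the inverse. The version below is a minimal illustration with a made-up function name (`inverse`). It uses Python's `fractions.Fraction` for exact arithmetic and is not tuned for numerical work.

```python
from fractions import Fraction

def inverse(A):
    """Invert a square matrix by Gauss-Jordan elimination on [A | I]."""
    n = len(A)
    # Augmented array [A | I] with exact rational entries.
    M = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
         for i, row in enumerate(A)]
    for col in range(n):
        # Find a row at or below the diagonal with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is not invertible")
        M[col], M[pivot] = M[pivot], M[col]       # swap rows
        p = M[col][col]
        M[col] = [x / p for x in M[col]]          # scale the pivot row to get a leading 1
        for r in range(n):
            if r != col:
                f = M[r][col]
                # Subtract a multiple of the pivot row to clear this column.
                M[r] = [a - f * b for a, b in zip(M[r], M[col])]
    return [row[n:] for row in M]                 # right half is the inverse

A = [[1, 2],
     [3, 9]]
A_inv = inverse(A)   # [[3, -2/3], [-1, 1/3]] as Fractions
```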

Elementary row operations. Source: YouTube

Every row operation we perform on a matrix is equivalent to a left-multiplication by an elementary matrix.
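To make this concrete, the sketch below applies the row operation "subtract 3 times row 1 from row 2" in two ways: directly on the rows, and by left-multiplying with the matching elementary matrix. The helper `matmul` and the example matrix are made up for this illustration.

```python
def matmul(A, B):
    """Matrix product of A (n x m) and B (m x p), both given as lists of rows."""
    n, m, p = len(A), len(B), len(B[0])
    return [[sum(A[i][k] * B[k][j] for k in range(m)) for j in range(p)]
            for i in range(n)]

A = [[1, 2],
     [3, 9]]

# Direct row operation: R2 <- R2 - 3*R1
direct = [A[0][:], [a2 - 3 * a1 for a1, a2 in zip(A[0], A[1])]]

# The same operation applied to the identity gives the elementary matrix E.
E = [[1, 0],
     [-3, 1]]
via_E = matmul(E, A)   # [[1, 2], [0, 3]]
```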

Elementary Matrices. Source: SDSU Physics

Another approach to finding the inverse of a matrix makes use of the transpose.

Transpose of a Matrix. Source: slideserve

In this section we discuss a number of other important topics of linear algebra.

Intuitively, a basis is any set of vectors that can be used as a coordinate system for a vector space. You are certainly familiar with the standard basis for the xy-plane that is made up of two orthogonal axes: the x-axis and the y-axis.

Basis. Source: wikimedia

Change of Basis. Source: wikimedia

Matrix representations of linear transformations. Source: slideserve

The dimension of a vector space is defined as the number of vectors in a basis for that vector space. Consider the vector space $S = \mathrm{span}\{(1, 0, 0), (0, 1, 0), (1, 1, 0)\}$. Seeing that the space is described by three vectors, we might think that $S$ is 3-dimensional. This is not the case, however, since the three vectors are not linearly independent (the third is the sum of the first two), so they don’t form a basis for $S$. Two vectors are sufficient to describe any vector in $S$; we can write $S = \mathrm{span}\{(1, 0, 0), (0, 1, 0)\}$, and since these two vectors are linearly independent, they form a basis and $\dim(S) = 2$.

There is a general procedure for finding a basis for a vector space. Suppose you are given a description of a vector space in terms of m vectors, $V = \mathrm{span}\{\vec{v}_1, \vec{v}_2, \ldots, \vec{v}_m\}$, and you are asked to find a basis for $V$ and the dimension of $V$. To find a basis for $V$, you must find a set of linearly independent vectors that span $V$. We can use the Gauss–Jordan elimination procedure to accomplish this task. Write the vectors $\vec{v}_i$ as the rows of a matrix $M$. The vector space $V$ corresponds to the row space of the matrix $M$. Next, use row operations to find the reduced row echelon form (RREF) of the matrix $M$. Since row operations do not change the row space of the matrix, the row space of the RREF of $M$ is the same as the row space of the original set of vectors. The nonzero rows in the RREF of the matrix form a basis for the vector space $V$, and the number of nonzero rows is the dimension of $V$.
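The procedure can be sketched in plain Python and applied to the three vectors that span S. The function name `rref` is chosen for this illustration, and `fractions.Fraction` is used so the row operations stay exact.

```python
from fractions import Fraction

def rref(M):
    """Return the reduced row echelon form of M (a list of rows)."""
    R = [[Fraction(x) for x in row] for row in M]
    rows, cols = len(R), len(R[0])
    pivot_row = 0
    for col in range(cols):
        if pivot_row == rows:
            break
        # Look for a nonzero entry in this column, at or below pivot_row.
        pivot = next((r for r in range(pivot_row, rows) if R[r][col] != 0), None)
        if pivot is None:
            continue                               # no pivot in this column
        R[pivot_row], R[pivot] = R[pivot], R[pivot_row]
        p = R[pivot_row][col]
        R[pivot_row] = [x / p for x in R[pivot_row]]   # leading 1
        for r in range(rows):
            if r != pivot_row:
                f = R[r][col]
                R[r] = [a - f * b for a, b in zip(R[r], R[pivot_row])]
        pivot_row += 1
    return R

# The vectors describing S, written as the rows of a matrix.
vectors = [(1, 0, 0), (0, 1, 0), (1, 1, 0)]
basis = [row for row in rref(vectors) if any(x != 0 for x in row)]
dim = len(basis)   # 2
```

The third row reduces to zeros, which leaves the two basis vectors (1, 0, 0) and (0, 1, 0).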

Basis and Dimension. Source: slideserve

Recall the fundamental vector spaces for matrices that we defined in the section on fundamental vector spaces: the column space C(A), the null space N(A), and the row space R(A). A standard linear algebra exam question is to give you a certain matrix A and ask you to find the dimension and a basis for each of its fundamental spaces. In the previous section we described a procedure based on Gauss–Jordan elimination which can be used to “distill” a set of linearly independent vectors which form a basis for the row space R(A). We will now illustrate this procedure with an example, and also show how to use the RREF of the matrix A to find bases for C(A) and N(A).
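As a sketch of how the RREF yields all three bases, the plain-Python code below uses a made-up 3×3 matrix; the function names are illustrative. The nonzero rows of the RREF give a basis for R(A), the columns of the original A at the pivot positions give a basis for C(A), and each free (non-pivot) column gives one basis vector for N(A).

```python
from fractions import Fraction

def rref_with_pivots(M):
    """Return (RREF of M, list of pivot column indices)."""
    R = [[Fraction(x) for x in row] for row in M]
    rows, cols = len(R), len(R[0])
    pivots = []
    for col in range(cols):
        k = len(pivots)                  # row where the next pivot should go
        if k == rows:
            break
        pivot = next((r for r in range(k, rows) if R[r][col] != 0), None)
        if pivot is None:
            continue                     # free column
        R[k], R[pivot] = R[pivot], R[k]
        p = R[k][col]
        R[k] = [x / p for x in R[k]]
        for r in range(rows):
            if r != k:
                f = R[r][col]
                R[r] = [a - f * b for a, b in zip(R[r], R[k])]
        pivots.append(col)
    return R, pivots

def fundamental_spaces(A):
    """Bases for the row space, column space, and null space of A."""
    R, pivots = rref_with_pivots(A)
    n_cols = len(A[0])
    row_basis = [R[i] for i in range(len(pivots))]           # nonzero rows of the RREF
    col_basis = [[row[j] for row in A] for j in pivots]      # pivot columns of A itself
    null_basis = []
    for free in range(n_cols):
        if free in pivots:
            continue
        v = [Fraction(0)] * n_cols
        v[free] = Fraction(1)            # set this free variable to 1
        for i, p in enumerate(pivots):
            v[p] = -R[i][free]           # solve for the pivot variables
        null_basis.append(v)
    return row_basis, col_basis, null_basis

A = [[1, 2, 3],
     [2, 4, 6],
     [1, 1, 1]]
row_basis, col_basis, null_basis = fundamental_spaces(A)
rank = len(row_basis)       # 2
nullity = len(null_basis)   # 1
```

For this matrix, `rank + nullity` equals the number of columns (3), as the rank-nullity theorem requires.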

Row space and Column space. Source: slideshare

Row space and Column space. Source: slideshare

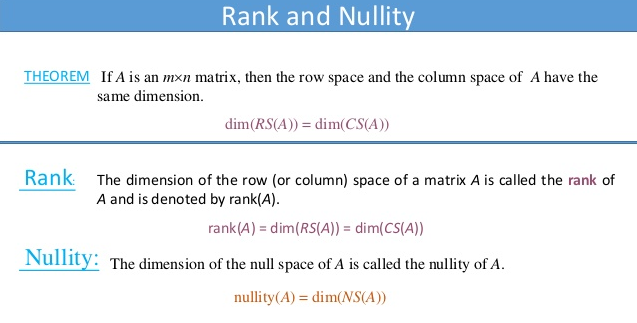

Rank and Nullity. Source: slideshare

There is an important distinction between matrices that are invertible and those that are not, as formalized by the following theorem.

Theorem. For an n×n matrix A, the following statements are equivalent:

Invertible Matrix theorem. Source: SDSU Physics

The determinant of a matrix, denoted det(A) or |A|, is a special way to combine the entries of a square matrix that serves to check whether the matrix is invertible: A is invertible if and only if det(A) ≠ 0.
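One way to compute a determinant is cofactor expansion along the first row, sketched below in plain Python. The function name `det` and the example matrices are illustrative. This recursive approach is fine for small matrices but becomes slow for large ones.

```python
def det(A):
    """Determinant of a square matrix by cofactor expansion along the first row."""
    n = len(A)
    if n == 1:
        return A[0][0]
    total = 0
    for j in range(n):
        # Minor: delete row 0 and column j.
        minor = [row[:j] + row[j + 1:] for row in A[1:]]
        total += (-1) ** j * A[0][j] * det(minor)
    return total

invertible = [[1, 2],
              [3, 9]]
singular = [[1, 2, 3],
            [2, 4, 6],     # twice the first row, so the rows are dependent
            [1, 1, 1]]

d1 = det(invertible)   # 3, nonzero: the matrix is invertible
d2 = det(singular)     # 0: the matrix is not invertible
```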

Determinant of a Square Matrix. Source: stackexchange

Determinant of matrix. Source: onlinemathlearning

The set of eigenvectors of a matrix is a special set of input vectors for which the action of the matrix is described as a simple scaling. When a matrix is multiplied by one of its eigenvectors, the output is the same eigenvector multiplied by a constant: $A\vec{e}_\lambda = \lambda\vec{e}_\lambda$. The constant $\lambda$ is called an eigenvalue of $A$.
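For a 2×2 matrix, the eigenvalues are the roots of the characteristic polynomial λ² − tr(A)·λ + det(A) = 0. The sketch below finds them with the quadratic formula and checks the scaling property on a made-up symmetric matrix. The function names are illustrative, and only the real-eigenvalue case is handled.

```python
import math

def eigenvalues_2x2(A):
    """Real eigenvalues of a 2x2 matrix, smallest first."""
    (a, b), (c, d) = A
    trace = a + d
    determinant = a * d - b * c
    disc = trace ** 2 - 4 * determinant
    if disc < 0:
        raise ValueError("eigenvalues are complex")
    root = math.sqrt(disc)
    return (trace - root) / 2, (trace + root) / 2

def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

A = [[2, 1],
     [1, 2]]
lam_small, lam_large = eigenvalues_2x2(A)   # 1.0 and 3.0

# Eigenvectors found by hand for this particular matrix.
e_small = [1, -1]   # A e = 1 * e
e_large = [1, 1]    # A e = 3 * e
```

Multiplying `A` by `e_large` gives `[3, 3]`, which is `e_large` scaled by 3.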

Generalized EigenVectors. Source: YouTube

Linear regression is an approach to model the relationship between two variables by fitting a linear equation to observed data. One variable is considered to be an explanatory variable, and the other is considered to be a dependent variable.
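For a single explanatory variable, the least-squares line y = slope·x + intercept has a closed-form solution. The sketch below uses plain Python, and the data points are made up for illustration. With several explanatory variables the same idea is usually written as a matrix equation, which is where linear algebra comes in.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept to the points (xs, ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of products of deviations from the means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Illustrative data lying exactly on the line y = 2x + 1.
xs = [0, 1, 2, 3]   # explanatory variable
ys = [1, 3, 5, 7]   # dependent variable
slope, intercept = fit_line(xs, ys)   # 2.0 and 1.0
```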

Multiple Linear Regression. Source: Medium

MATLAB is a programming language and numerical computing environment that lets you express matrix and array mathematics directly.

MATLAB and Simulink Training from MATLAB Academy

MathWorks Certification Program

MATLAB Online Courses from Udemy

MATLAB Online Courses from Coursera

MATLAB Online Courses from edX

Setting Up Git Source Control with MATLAB & Simulink

Pull, Push and Fetch Files with Git with MATLAB & Simulink

Create New Repository with MATLAB & Simulink

PRMLT is Matlab code for machine learning algorithms in the PRML book.

MATLAB Online allows users to use MATLAB and Simulink through a web browser such as Google Chrome.

Simulink is a block diagram environment for Model-Based Design. It supports simulation, automatic code generation, and continuous testing of embedded systems.

MATLAB Schemer is a MATLAB package that makes it easy to change the color scheme (theme) of the MATLAB display and GUI.

LRSLibrary provides low-rank and sparse tools for background modeling and subtraction in videos. The library was designed for moving object detection in videos, but it can also be used for other computer vision and machine learning problems.

Robotics Toolbox for MATLAB provides a toolbox that brings robotics-specific functionality (designing, simulating, and testing manipulators, mobile robots, and humanoid robots) to MATLAB, exploiting the native capabilities of MATLAB (linear algebra, portability, graphics). The toolbox also supports mobile robots with functions for robot motion models (bicycle), path planning algorithms (bug, distance transform, D*, PRM), kinodynamic planning (lattice, RRT), localization (EKF, particle filter), map building (EKF) and simultaneous localization and mapping (EKF), and a Simulink model of a non-holonomic vehicle. The toolbox also includes a detailed Simulink model for a quadrotor flying robot.

SEA-MAT is a collaborative effort to organize and distribute Matlab tools for the Oceanographic Community.

Gramm is a complete data visualization toolbox for Matlab. It provides an easy to use and high-level interface to produce publication-quality plots of complex data with varied statistical visualizations. Gramm is inspired by R's ggplot2 library.

hctsa is a software package for running highly comparative time-series analysis using Matlab.

Plotly is a Graphing Library for MATLAB.

YALMIP is a MATLAB toolbox for optimization modeling.

GNU Octave is a high-level interpreted language, primarily intended for numerical computations. It provides capabilities for the numerical solution of linear and nonlinear problems, and for performing other numerical experiments. It also provides extensive graphics capabilities for data visualization and manipulation.

- If you would like to contribute to this guide, simply make a Pull Request.

Distributed under the Creative Commons Attribution 4.0 International (CC BY 4.0) Public License.