Error response from daemon: devicemapper failed to remove the rootFS for <id> : devicemapper : Error running DeleteDevice dm_task_run failed #34463

Comments

|

Hi @thaJeztah :) I have referred issue number #26900. I don't want to delete Regards |

|

Check the state of the thin pool, and paste the journal messages here so we can see what failed. I suspect either the device is busy or something is wrong with the thin pool such that it is not allowing devices to be deleted. |

Ping me if you need any other info :) |

|

The thin pool seems to be OK. Check the journalctl logs and see whether anything there gives details about why the task failed. If not, enable libdm logging, re-run, and see if something shows up. We need to know the details of why it failed. A daemon option was recently added to enable libdm logging; I think it is --storage-opt dm.libdm_log_level. |
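For anyone wanting to try the logging suggestion above, here is a minimal sketch that only assembles a dockerd command line; it does not start the daemon. It assumes the option takes the form dm.libdm_log_level=<n> with levels from 2 (fatal) to 7 (debug), and that it is combined with --log-level debug so the forwarded messages are not filtered out. Please check both assumptions against the documentation for your Docker version. The helper name libdm_debug_args is made up for illustration.

```python
def libdm_debug_args(level=7):
    """Return an argv list for dockerd with libdm logging forwarded.

    Assumption: dm.libdm_log_level accepts integers 2..7 (7 = most verbose).
    """
    if not 2 <= level <= 7:
        raise ValueError("libdm log level is assumed to be in the range 2..7")
    return [
        "dockerd",
        "--log-level", "debug",
        "--storage-opt", f"dm.libdm_log_level={level}",
    ]


# Build the argument list and show it as a single command line.
args = libdm_debug_args()
print(" ".join(args))
```

After restarting the daemon with these arguments, re-running the failing removal and reading the journal again should show whatever libdm reported for the failed task.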

|

Hi guys, I hit this problem too. I found that it happens when the thin device has been deleted but the metadata of the layer still exists. The container then cannot be removed, and fails with the error "Error response from daemon: devicemapper failed to remove the rootFS for : devicemapper : Error running DeleteDevice dm_task_run failed". In the container-deletion process, we delete the thin device first and then delete the metadata, so if docker is killed between these two actions, the issue can be reproduced. |
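The ordering problem described above can be illustrated with a toy model. This is not Docker's code: ToyThinPool, SimulatedKill, and the layer and device IDs are all hypothetical, and the "kill" is just an exception raised between the two steps. It only shows why a two-step delete that is interrupted halfway leaves metadata pointing at a device that no longer exists, so that every later removal attempt fails.

```python
class SimulatedKill(Exception):
    """Stands in for the daemon being killed partway through a removal."""


class ToyThinPool:
    """Toy stand-in for a thin pool plus the driver's per-layer metadata."""

    def __init__(self):
        self.devices = set()   # thin devices that exist in the pool
        self.metadata = {}     # layer id -> device id recorded by the driver

    def create(self, layer_id, device_id):
        self.devices.add(device_id)
        self.metadata[layer_id] = device_id

    def delete_device(self, device_id):
        # Deleting a device that is already gone fails, as in the report.
        if device_id not in self.devices:
            raise RuntimeError("Error running DeleteDevice dm_task_run failed")
        self.devices.remove(device_id)

    def remove_layer(self, layer_id, kill_between_steps=False):
        device_id = self.metadata[layer_id]
        self.delete_device(device_id)      # step 1: delete the thin device
        if kill_between_steps:
            raise SimulatedKill()          # daemon dies before step 2
        del self.metadata[layer_id]        # step 2: delete the metadata


pool = ToyThinPool()
pool.create("layer-1", 7)

# First attempt is interrupted after the device is gone.
try:
    pool.remove_layer("layer-1", kill_between_steps=True)
except SimulatedKill:
    pass

# Retrying now fails, because the stale metadata names a missing device.
try:
    pool.remove_layer("layer-1")
    retry_error = None
except RuntimeError as exc:
    retry_error = str(exc)

print(retry_error)
```

In this model the only way out is to clear the stale metadata entry, since the device step can never succeed again.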

|

ping @rhvgoyal |

|

I stumbled upon this on CentOS 7, using docker-1.13.1-162.git64e9980. In my case, our app is trying to restart a container, but the container was dead, and it could not be removed:

I was seeing errors when trying to remove the container with

I noticed @liusdu 's comment about the device getting deleted before metadata, so I thought maybe deleting the metadata would work. So I stopped the docker service, then: (rm would be just as good, but I didn't expect this to work)

To people who are stuck on CentOS 7 (we are, at least for the time being), I guess this can be used as a workaround. |

|

docker-1.13.1 is really old (and has reached EOL), but I suspect you're running the Red Hat fork of Docker. Note that CentOS 7 is still supported, and current versions of Docker for CentOS support overlay2 on CentOS (https://docs.docker.com/engine/install/centos/). Using the overlay2 storage driver may be a good alternative, but only a single storage driver can be used at a time, and switching storage drivers will make content stored with the other storage driver inaccessible (images in your image cache, and containers), so it is generally recommended to use a "fresh" install. |
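As a rough sketch of what selecting overlay2 looks like, the snippet below only generates the text of a daemon configuration file. It assumes a current Docker Engine (not the Red Hat fork) that reads /etc/docker/daemon.json, and it deliberately does not write the file or restart anything, given the warning above about existing images and containers becoming inaccessible. The function name daemon_config is made up for illustration.

```python
import json


def daemon_config(storage_driver="overlay2"):
    """Return daemon.json content that selects a single storage driver."""
    return json.dumps({"storage-driver": storage_driver}, indent=2)


# Print the content; placing it in /etc/docker/daemon.json is left to the reader.
config_text = daemon_config()
print(config_text)
```

After the daemon is restarted with such a file in place, the "Storage Driver" line in docker info shows which driver is actually in use.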

|

Yes, we're running the version shipped by RedHat in their extras repo. So you're saying we can also run the current Docker on CentOS 7? I hadn't thought of that, it's probably something worth trying. |

|

Running the Docker Engine should definitely work (but as mentioned, you may need to do a fresh install). That said, if you need to run other container tools from Red Hat's ecosystem, things can sometimes be somewhat complicated due to package dependencies (both the "docker" packages and red hat forked packages |

Pinging @rhvgoyal @thaJeztah

Details:

OS: CentOS Linux release 7.3.1611 (Core)

uname -a: 3.10.0-514.26.2.el7.x86_64

Docker version:

Docker info:

Problem:

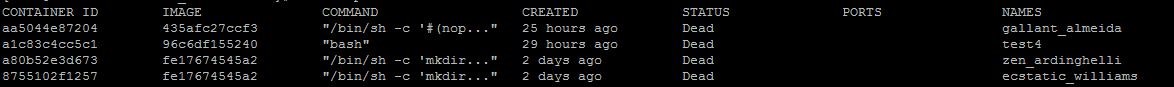

There are four containers in this node which are dead. I am unable to remove them.

Details:

docker rm -f <container_id> is not working.

I browsed through GitHub issues for a solution. Instead, I got the impression that this is not a one-off error but a serious bug that keeps resurfacing.

Help needed in resolving this issue.

Regards

Aditya
