Table of Contents

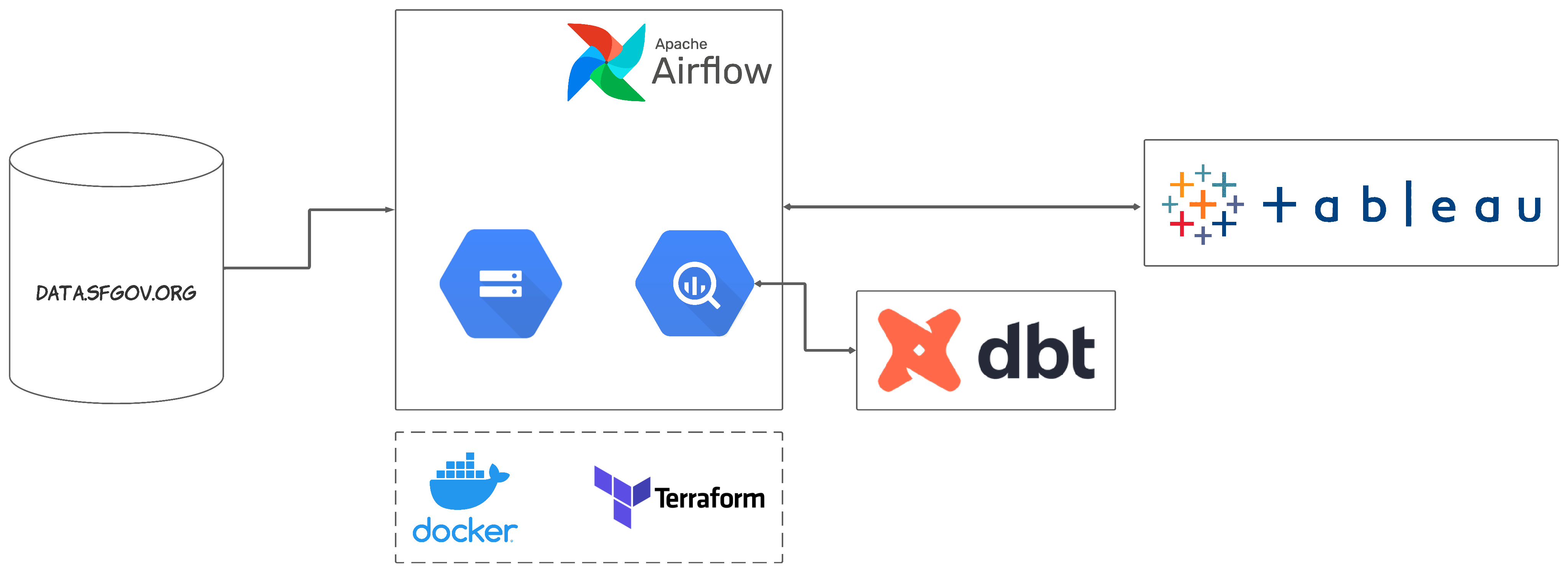

In this project, we first extract by downloading CSVs using SODA API. We then consume and put them into a data lake (Google Cloud Storage). After that we schedule a data pipeline (Airflow) to run monthly to load to a data warehouse (Google BigQuery). Later on, we transform the data in the warehouse using dbt. Finally, once the data is cleaned and transformed, we can monitor and analyze the data on a dashboard (Tableau).

The visualization results are two dashboards. On the first of them, you can see the ratio of the number of crimes to the previous year with the possibility of choosing a district. The second is a map crime with additional information regarding the dynamics of the most committed crimes.

Police Department Incident Reports Historical (2003 - 2017)

Police Department Incident Reports (2018 to Present)

- Apache Airflow for orchestrating workflow

- Google Cloud Storage for data lake storage

- dbt for data transformation

- Google BigQuery for data warehousing and analysis

- Tableau for visualization

- Terraform as an Infrastructure-as-Code (IaC) tool

- Docker to proceed to the containerization of other technologies

Follow below steps to set up the project. I've tried to explain steps where I can. Feel free to make improvements/changes.

NOTE: This was developed using a Google VM instance. If you're on Windows or Linux, you may need to amend certain components if issues are encountered.

As Google offers a free trial for 3 months, this shouldn't cost you anything with proper settings (which will be mentioned later). However, please check Google Free Trial and Free Tier limits, as this may change.