New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Fix lagging 3d view for big data in auto color mode #6411

Conversation

Codecov Report

@@ Coverage Diff @@

## main #6411 +/- ##

==========================================

- Coverage 92.19% 92.13% -0.06%

==========================================

Files 599 601 +2

Lines 52987 53088 +101

==========================================

+ Hits 48853 48915 +62

- Misses 4134 4173 +39

|

|

This pull request has been mentioned on Image.sc Forum. There might be relevant details there: https://forum.image.sc/t/request-for-testing-napari-0-4-19-release-candidate/87972/14 |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

@Czaki I've left some initial comments. Still getting my head around the flow, but I think I understand the gist. It's very cool and I love that you fixed the direct case too where possible. ❤️

| ): | ||

| self._on_display_change(data) | ||

| else: | ||

| node.set_data(data) | ||

|

|

||

| node.visible = not self.layer._slice.empty and self.layer.visible |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Is this change intentional? I find it surprising... Is it because _on_display_change correctly sets the node visibility anyway?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Yes. It is intentional not to trigger obsolete events.

napari/utils/colormaps/colormap.py

Outdated

| def _cast_labels_to_minimum_type_auto( | ||

| data: np.ndarray, num_colors: int, dtype | ||

| ) -> np.ndarray: | ||

| result_array = np.zeros_like(data, dtype=dtype) |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Oof. This is a big step. However, I tried my best to optimise this in NumPy, avoiding unnecessary allocations, and got 10x worse speed...

In [14]: %timeit cm._cast_labels_to_minimum_type_auto(data, 50, np.uint8)

OMP: Info #276: omp_set_nested routine deprecated, please use omp_set_max_active_levels instead.

33 ms ± 2.19 ms per loop (mean ± std. dev. of 7 runs, 1 loop each)

In [16]: %%timeit

...: np.add(np.mod(data, np.uint8(50), out=out, casting='unsafe'),

...: np.uint8(1),

...: where=(data >= 50) | (data < 0),

...: out=out)

...:

388 ms ± 830 µs per loop (mean ± std. dev. of 7 runs, 1 loop each)

Even if we optimise for unsigned ints, we are still close to 10x worse:

In [15]: %%timeit

...: np.add(np.mod(data, np.uint8(50), out=out, casting='unsafe'),

...: np.uint8(1),

...: where=(data >= 50),

...: out=out)

...:

280 ms ± 458 µs per loop (mean ± std. dev. of 7 runs, 1 loop each)

So, maybe we should try to bite the bullet. Alternatively, maybe we can dispatch to the above NumPy code if numba is not available, but recommend numba (and include it in [all]).

What do you think @Czaki?

Certainly, depending on numba would open up a lot of optimisations. But it feels like we are making a big decision under time pressure. 😅

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Ah, I just saw what you think in the PR description 😅. Yes I guess you are right that for 0.4.19 it is not a huge risk. But maybe since this is going into main, we make the dependency optional (as discussed above), and when backporting to 0.4.19 we tear out the compatibility code? Or vice versa, we can add non-numba option in a future PR, your call.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

I think that the best option will be to donate such code to some of our compiled dependencies (vispy, scikit?)

Here I see a double allocation:

where=(data >= 50) | (data < 0),

I would rather open an issue for this problem than add much slower/memory consummimg code.

Nice catch! |

Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com>

|

restarting what looks like random failure. |

I have opened PR to fix this random failures #6423 |

I remember this being added specifically cause someone asked for it and they had data with -1 as background... I think @kevinyamauchi was implementing this at the time maybe? |

|

Gaaah, @Czaki while I was rewording the description, I thought of another issue with this PR: we should not use num_colors to do the mod operation, or labels that have the same color will always have the same color. The mod operation (a) should only occur when the label data dtype doesn't fit in uint8 in the first place, and (b) should be by a prime number that is greater than num_colors but still fits in the desired dtype, and that prime number should get changed when Shuffle is hit. 😕 And we should figure out a test that catches this issue in the future... At any rate, though, in order to avoid complicating this PR further, I think that we should merge this, and fix it in a subsequent PR. We can raise an issue with the 0.4.19 milestone to make sure it gets handled... Have I got the analysis right? |

|

Ok after a quick chat with @Czaki we have determined that this issue also exists on main. Therefore, I'm going to merge this and make an issue, which we can then fix. (It may even go back further, but I'm not certain about this, will need to test properly.) |

|

The issue is explained in detail in #6448. |

closes #6397 This array fixes fps performance issues in OpenGL introduced by #3308. In that PR, the texture type was changed to float32 in order to directly pass the labels values to the texture. It turns out that OpenGL performance for float32 textures is much worse than for uint8 textures. Here we change the code to use uint8 whenever the final number of colors is less than 255 in automatic coloring mode, or uint16 if the number is less than 65535. This is achieved by transforming original data using a modulo-like operation that avoids the background label landing on 0. This PR introduces numba dependency, which might not be a long-term solution. We may try to move this utility to some package that already contains compiled code. We can revisit the decision if it causes issues (such as a delay in supporting newer Python versions), and perhaps push such a function to a compiled dependency such as scikit-image. This PR also disables caching used for speedup painting until someone starts painting. It is a significant speedup and reduces memory usage. --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: Matthias Bussonnier <bussonniermatthias@gmail.com> Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>

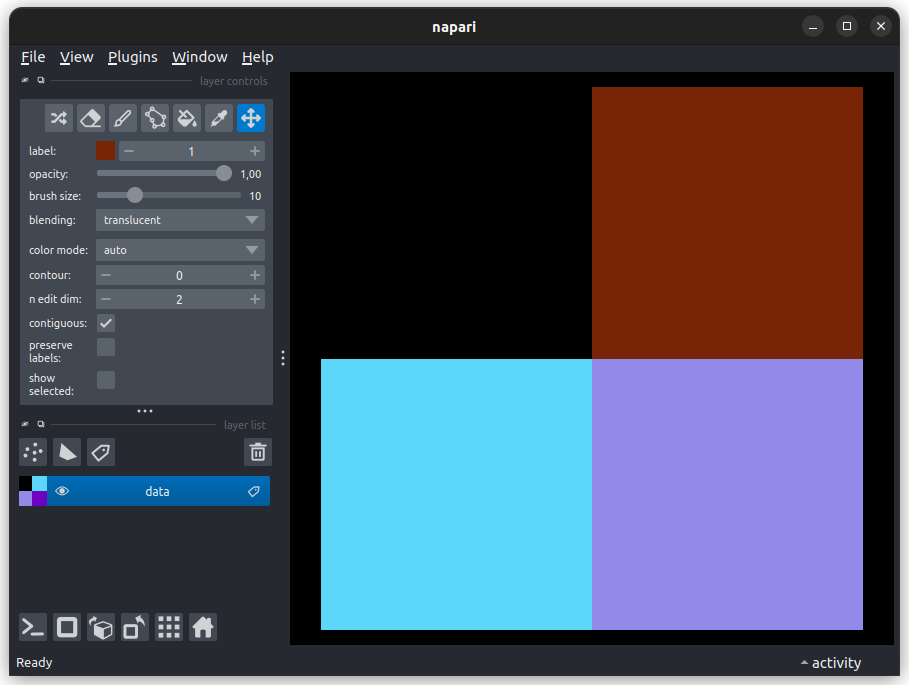

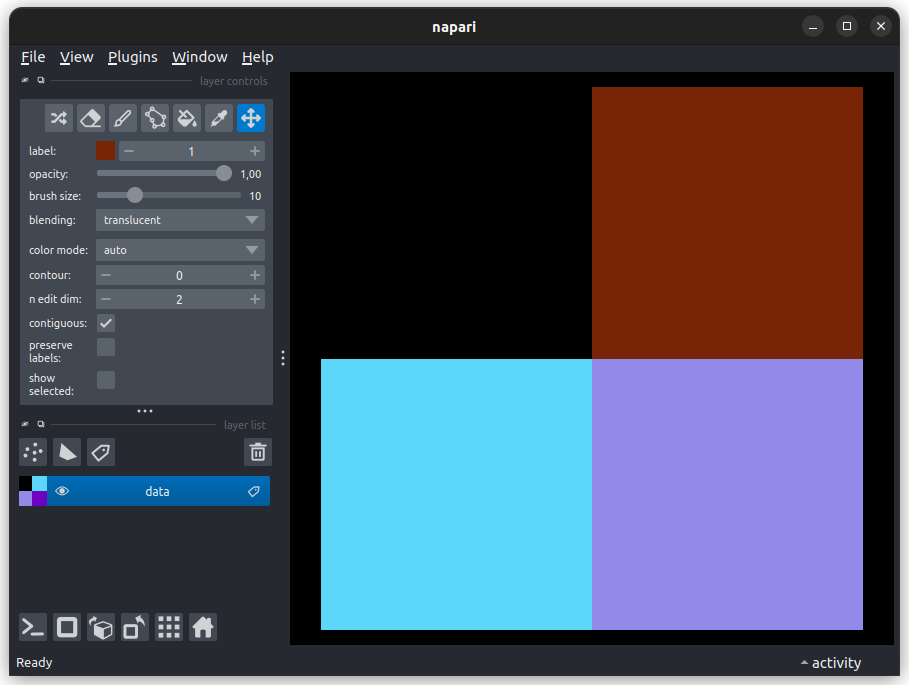

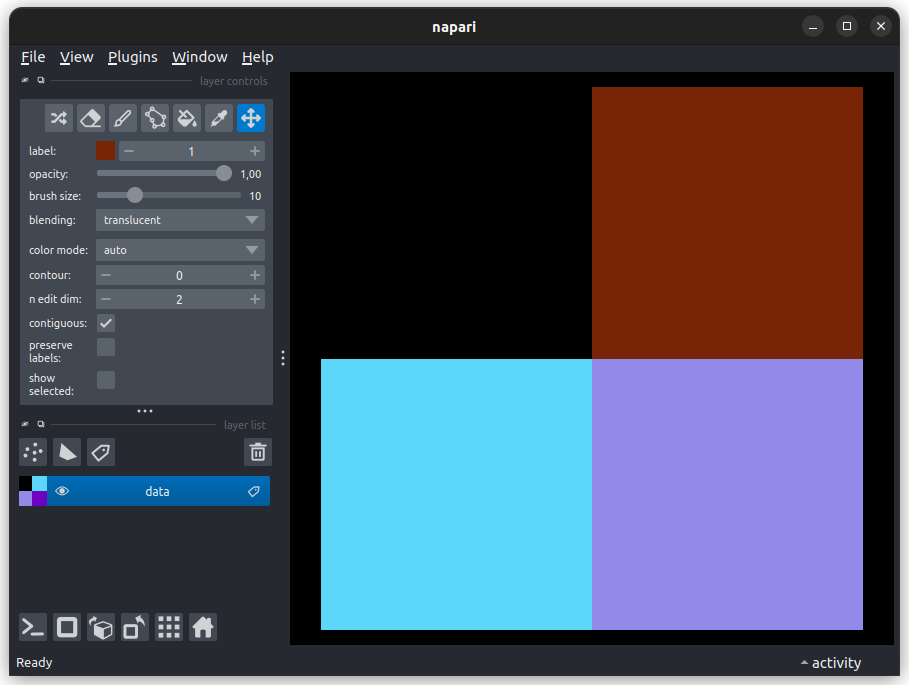

# Description Changes from #6411 make the thumbnail not work correctly as the slice is storing cast values, not the original ones for thumbnail generation. Before this PR:  With this PR:  Code: ```python import napari import numpy as np data = np.asarray([[0, 1], [2, 3]]) viewer = napari.Viewer() viewer.add_labels(data, opacity=1) napari.run() ``` --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com>

Changes from #6411 make the thumbnail not work correctly as the slice is storing cast values, not the original ones for thumbnail generation. Before this PR:  With this PR:  Code: ```python import napari import numpy as np data = np.asarray([[0, 1], [2, 3]]) viewer = napari.Viewer() viewer.add_labels(data, opacity=1) napari.run() ``` --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com>

closes #6397 This array fixes fps performance issues in OpenGL introduced by #3308. In that PR, the texture type was changed to float32 in order to directly pass the labels values to the texture. It turns out that OpenGL performance for float32 textures is much worse than for uint8 textures. Here we change the code to use uint8 whenever the final number of colors is less than 255 in automatic coloring mode, or uint16 if the number is less than 65535. This is achieved by transforming original data using a modulo-like operation that avoids the background label landing on 0. This PR introduces numba dependency, which might not be a long-term solution. We may try to move this utility to some package that already contains compiled code. We can revisit the decision if it causes issues (such as a delay in supporting newer Python versions), and perhaps push such a function to a compiled dependency such as scikit-image. This PR also disables caching used for speedup painting until someone starts painting. It is a significant speedup and reduces memory usage. --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: Matthias Bussonnier <bussonniermatthias@gmail.com> Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>

Changes from #6411 make the thumbnail not work correctly as the slice is storing cast values, not the original ones for thumbnail generation. Before this PR:  With this PR:  Code: ```python import napari import numpy as np data = np.asarray([[0, 1], [2, 3]]) viewer = napari.Viewer() viewer.add_labels(data, opacity=1) napari.run() ``` --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com>

…#6467) # Description This is follow-up to #6411. When labels data are int8, uint8, int16, or uint16, no copy is made — the data are sent directly as a uint8 or uint16 view to the GPU for rendering. Higher bit depth integer data is first converted on the CPU to uint8, uint16, or float32 (depending on the number of colors in the colormap) using the formula `texture = (values - 1) % ncolors + 1` — but with the background value always mapping to 0. --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: Lorenzo Gaifas <brisvag@gmail.com>

…rect color mode (#6439) Closes #6518 Closes #6084 # Description In this PR, similarly to #6411, instead of using `float32` to pass data to the GPU there we introduce heuristics for choosing smaller data types, while keeping high performance. Instead of complex calculation of color in the shader, a precomputed texture array is used. To avoid repetitive texture calculation, the textures are cached in the `Colormap` objects. For data of type uint8/int8/uint16/int16 we do not perform any transform of data. We send them to the GPU as it is. This allows to reduce computational time. Based on experiments, the rendering performance is a little worse for uint16/int16 than for uint8/int8. But it may depend on the GPU. Also, using uint16/int16 means usage more GPU memory than for 8 bits type. Still less than current main. For datatypes using at least 32 bits, we add a preprocessing step where we identify a set of labels that are mapped to the same color and map all of them to the same value. This often saves enough space to fall back to uint8/uint16. It allows using a smaller additional array, and use less GPU memory. If there are more than `2**16` distinct colors, then float32 is used, though performance will be reduced. We support only up to `2**23` distinct colors for now. For reduced memory usage, part of the functions used for data preprocessing are compiled using numba. We provide a version of the function that does not require `numba` but it limits the number of distinct colors to `2**16` and involves additional array creation (more memory usage). --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com> Co-authored-by: Lorenzo Gaifas <brisvag@gmail.com> Co-authored-by: Andy Sweet <andrew.d.sweet@gmail.com>

…#6467) This is follow-up to #6411. When labels data are int8, uint8, int16, or uint16, no copy is made — the data are sent directly as a uint8 or uint16 view to the GPU for rendering. Higher bit depth integer data is first converted on the CPU to uint8, uint16, or float32 (depending on the number of colors in the colormap) using the formula `texture = (values - 1) % ncolors + 1` — but with the background value always mapping to 0. --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: Lorenzo Gaifas <brisvag@gmail.com>

…rect color mode (#6439) Closes #6518 Closes #6084 In this PR, similarly to #6411, instead of using `float32` to pass data to the GPU there we introduce heuristics for choosing smaller data types, while keeping high performance. Instead of complex calculation of color in the shader, a precomputed texture array is used. To avoid repetitive texture calculation, the textures are cached in the `Colormap` objects. For data of type uint8/int8/uint16/int16 we do not perform any transform of data. We send them to the GPU as it is. This allows to reduce computational time. Based on experiments, the rendering performance is a little worse for uint16/int16 than for uint8/int8. But it may depend on the GPU. Also, using uint16/int16 means usage more GPU memory than for 8 bits type. Still less than current main. For datatypes using at least 32 bits, we add a preprocessing step where we identify a set of labels that are mapped to the same color and map all of them to the same value. This often saves enough space to fall back to uint8/uint16. It allows using a smaller additional array, and use less GPU memory. If there are more than `2**16` distinct colors, then float32 is used, though performance will be reduced. We support only up to `2**23` distinct colors for now. For reduced memory usage, part of the functions used for data preprocessing are compiled using numba. We provide a version of the function that does not require `numba` but it limits the number of distinct colors to `2**16` and involves additional array creation (more memory usage). --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com> Co-authored-by: Lorenzo Gaifas <brisvag@gmail.com> Co-authored-by: Andy Sweet <andrew.d.sweet@gmail.com>

…#6652) # Description In #6411, we missed these lines: https://github.com/napari/napari/blob/4f4c063ae5dd79d6d188e201d44b8d57eba71909/napari/_vispy/layers/image.py#L25-L32 These were added in #3990 because 'auto' has a slightly different meaning in VisPy than *really* fully auto: it chooses the default texture dtype for the input NumPy array's dtype. For float arrays, this is float. However, if your OpenGL implementation doesn't support float textures, VisPy will raise. Instead, passing `texture_format=None` when creating the ImageNode tells VisPy to transform the data to whatever texture format it sees fit. In #6411, when getting the VisPy node for a given dtype, we only check for `texture_format != 'auto'` as the "catch-all" texture format. But, in fact, if we are on a machine that doesn't support float32 textures, by this point in the code the format has been changed to None, incorrectly triggering these lines: https://github.com/napari/napari/blob/89f8194d3fa4eef620755804806ac69ef684df63/napari/_vispy/layers/image.py#L59-L73 and causing a ValueError. This PR fixes that by also checking for None in that same clause. This PR also adds a test by monkeypatching the function that checks for float32 texture support. # References #3988 #3990 --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>

…#6652) # Description In #6411, we missed these lines: https://github.com/napari/napari/blob/4f4c063ae5dd79d6d188e201d44b8d57eba71909/napari/_vispy/layers/image.py#L25-L32 These were added in #3990 because 'auto' has a slightly different meaning in VisPy than *really* fully auto: it chooses the default texture dtype for the input NumPy array's dtype. For float arrays, this is float. However, if your OpenGL implementation doesn't support float textures, VisPy will raise. Instead, passing `texture_format=None` when creating the ImageNode tells VisPy to transform the data to whatever texture format it sees fit. In #6411, when getting the VisPy node for a given dtype, we only check for `texture_format != 'auto'` as the "catch-all" texture format. But, in fact, if we are on a machine that doesn't support float32 textures, by this point in the code the format has been changed to None, incorrectly triggering these lines: https://github.com/napari/napari/blob/89f8194d3fa4eef620755804806ac69ef684df63/napari/_vispy/layers/image.py#L59-L73 and causing a ValueError. This PR fixes that by also checking for None in that same clause. This PR also adds a test by monkeypatching the function that checks for float32 texture support. # References #3988 #3990 --------- Co-authored-by: Juan Nunez-Iglesias <jni@fastmail.com> Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>

References and relevant issues

closes #6397

Description

This array fixes fps performance issues in OpenGL introduced by #3308. In that PR, the texture type was changed to float32 in order to directly pass the labels values to the texture. It turns out that OpenGL performance for float32 textures is much worse than for uint8 textures.

Here we change the code to use uint8 whenever the final number of colors is less than 255 in automatic coloring mode, or uint16 if the number is less than 65535.

This is achieved by transforming original data using a modulo-like operation that avoids the background label landing on 0.

This PR introduces numba dependency, which might not be a long-term solution. We may try to move this utility to some package that already contains compiled code. We can revisit the decision if it causes issues (such as a delay in supporting newer Python versions), and perhaps push such a function to a compiled dependency such as scikit-image.

This PR also disables the caching strategy used to speed up painting until someone actually starts painting. It is a significant speedup in startup time and reduces memory usage.