
controller manager dies #6

Comments

Original comment by dan (Bitbucket: dan77062).

Original comment by dan (Bitbucket: dan77062).

Original comment by Nate Koenig (Bitbucket: Nathan Koenig). Can you make sure that you followed every step of the installation tutorial? Also, have you ever installed Gazebo and/or ROS in the past?

Original comment by Steve Peters (Bitbucket: Steven Peters, GitHub: scpeters).

Original comment by Steve Peters (Bitbucket: Steven Peters, GitHub: scpeters). The crux of the error seems to be here: cc @jordanlack

Original comment by Jordan Lack (Bitbucket: jordanlack). Is this happening every time for you? It's likely a race condition as @scpeters mentioned. We have 2 nodes that are launched, and the 2nd one needs the first one to be alive when it launches. We currently hack in waits to try to make sure the first node has time to get started. It never happens for me when things go smoothly, but I do see it sometimes, for example when gazebo segfaults.
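One alternative to fixed waits is to poll for readiness with a timeout. The following is only a minimal sketch of that idea, not the project's launch logic: `wait_until` and `first_node_ready` are hypothetical names, and the predicate stands in for whatever check would show that the first node is alive.

```python
import time


def wait_until(predicate, timeout_s=10.0, poll_s=0.1,
               clock=time.monotonic, sleep=time.sleep):
    """Poll `predicate` until it returns True or `timeout_s` elapses.

    Returns True if the predicate succeeded, False on timeout.
    """
    deadline = clock() + timeout_s
    while True:
        if predicate():
            return True
        if clock() >= deadline:
            return False
        sleep(poll_s)


# Hypothetical stand-in for "the first node is alive":
# it reports ready on the third check.
checks = {"count": 0}


def first_node_ready():
    checks["count"] += 1
    return checks["count"] >= 3


if wait_until(first_node_ready, timeout_s=2.0, poll_s=0.01):
    print("first node is up; safe to start the second node")
else:
    print("timed out waiting for the first node")
```

Compared with a fixed sleep, this returns as soon as the dependency is ready on fast machines and still gives slow machines the full timeout.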

Original comment by dan (Bitbucket: dan77062). I did follow all the steps (and, by the way, I suggested it may be a race condition). I have used ROS for a long time and am familiar with setups. This is on a new Ubuntu/Indigo installation (I had 16.04 and Kinetic running, so I did a clean install). I did not install Gazebo. It also happens on a second machine that already had 14.04/Indigo installed. That machine also did not have Gazebo installed. It crashes every time on both machines for one reason or another, either the seg fault or the controller issue. If it gets all the way through to showing the world with the robot, then the robot collapses after about 30 seconds. None of the input messages have any effect. If I exclude the following part of the launch file, then it loads most of the time and the robot remains in the harness. This works for both qual1.world and qual2.world:

Original comment by Jordan Lack (Bitbucket: jordanlack). Could you try

Original comment by Erica Tiberia (Bitbucket: T_AL). I also have the same setup as Dan. I tried the first simulation. Val launched in the simulation, but I received the following errors: ROS_MASTER_URI=http://localhost:11311 core service [/rosout] found

Original comment by Jordan Lack (Bitbucket: jordanlack). It's a bit difficult to parse through that. Could you format that please next time? It looks to me like you don't have any critical problems there, though. A lot of those are typical things that I don't think would cause the sim to not work. I see an A lot of those SDF warnings are because we put a bunch of tags in our URDF that our controller manager parses out, so those aren't anything to be concerned about.

Original comment by dan (Bitbucket: dan77062). Jordan, the launch files you suggested both load the model OK. There are a bunch of warnings, but gazebo comes up very quickly and the robot model loads fine. See my additional comments for the behavior when I break up the launch file into two launch files, where you can see the first part loads OK (leaving the robot in the harness) and then the second part generates errors. Just curious, you have gotten the tutorial to work with a completely new install of Ubuntu 14.04 and ROS Indigo, right? Just want to be sure there is not some environment variable or something like that I'm missing. Here is the log from the launch file you asked me to run:

Original comment by dan (Bitbucket: dan77062). Also, here is the modified launch file, along with the log. In this case, the world and the robot load OK and the robot just stays in the harness. log output:

Original comment by dan (Bitbucket: dan77062). Now, if I load the modified launch file as in the comment above, then run the rest of the original launch file as a separate launch file, errors show up and the robot eventually collapses. Here is the separate launch file, followed by the log from its terminal window, followed by the log from the terminal window where the first launch file (see comment above) is running. log from this terminal window: and the continuation of the log from the terminal window where the shortened launch file from the comment above is running:

Original comment by Jordan Lack (Bitbucket: jordanlack). @scpeters has this launch configuration worked reliably for you all? @dan77062 try launching the full thing again and in another terminal run

Original comment by dan (Bitbucket: dan77062). Jordan, there is no log file by that name in /var/log.

Original comment by Jordan Lack (Bitbucket: jordanlack). Ah ok, this is helpful! Disregard my previous request. How many cores are on your processor? IHMC's controller does some fancy core isolation stuff, so if you don't have enough cores then they can't pin their processes to cores that don't exist. @scpeters it should be clearly stated in the documentation that users must have 4 cores to run this stuff. IHMC's controller/estimator steal 2 cores, and I think those are hardcoded as cores 1 and 2 (zero-indexed), so if a machine doesn't have those cores then none of this will work.

Original comment by dan (Bitbucket: dan77062). 4 cores. I am using a Lenovo X1 Carbon with an Intel Core i7-6600U CPU, 2.60 GHz x 4, with 16 GB of RAM, and 64-bit Ubuntu 14.04.

Original comment by Jordan Lack (Bitbucket: jordanlack). Is this Ubuntu in a virtual machine?

Original comment by dan (Bitbucket: dan77062). No, not a VM.

Original comment by Jordan Lack (Bitbucket: jordanlack). Hmm, ok, well then I'm a bit stumped as to why this is happening. What's happening is, in IHMC's Java code, the following line

Original comment by dan (Bitbucket: dan77062). Interesting. Top shows all 4 cores are available and running. See below.

Original comment by sringer99 (Bitbucket: sringer99). Do not know if this will help, but I am having the same issues: controller_manager crashes, zero successes. Based on this thread and a little poking around, I doubled the sleep time in /opt/nasa/indigo/share/val_deploy/scripts/delayed_roslaunch.sh:

    #!/bin/bash
    echo "delaying: $1 seconds"
    sleep $1
    sleep $1 # Added this extra line
    shift
    echo "starting: roslaunch $@"
    roslaunch $@

Still get lots of error messages, but the walk test now works about 90% of the time. I have a new AMD FX 6300 with 6 cores, 16GB memory, 256GB SSD drive, running on an ASUS M5A97 motherboard.

Original comment by Jordan Lack (Bitbucket: jordanlack). @sringer99 that probably means we need to extend some of our sleeps to give some nodes more time to spin up.

Original comment by dan (Bitbucket: dan77062). I wrote a short Java program to check on the processors available to Java. It prints out 4.

Original comment by Steve Peters (Bitbucket: Steven Peters, GitHub: scpeters). Here's some extra debugging steps: and cc @dljsjr

Original comment by dan (Bitbucket: dan77062). The first returns 0-3. However all the others return 0. What's up with that?

Original comment by Steve Peters (Bitbucket: Steven Peters, GitHub: scpeters). The

Original comment by Jedediyah Williams (Bitbucket: Jedediyah). I'll chime in with @dan77062 @sringer99 and @T_AL, as I'm running into what looks like the identical problem getting the controller to run. I'm also on a fresh install of Ubuntu 14.04 / Indigo, 4 cores and 32 GB RAM, and used the updated install instructions. @sringer99, I'm going to try out adding that extra sleep time.

Original comment by Steve Peters (Bitbucket: Steven Peters, GitHub: scpeters). Are you all using laptops?

Original comment by dan (Bitbucket: dan77062). Yes, a Lenovo X1 Carbon laptop, and it turns out the Intel Core i7-6600U CPU does indeed have only 2 cores, 4 threads.

Original comment by Jordan Lack (Bitbucket: jordanlack). For those having the problem where it launches successfully sometimes and sometimes you get the following exception, I have some debug steps that'll help me be certain what the problem is. For the debug, do Then launch it multiple times and see if you get that exception still or if it launches cleanly 100% of the time. If it is still failing occasionally, in the following line change the Let me know how it goes!

Original comment by Erica K (Bitbucket: Erica K). Jordan, I've had 100% failure up to now, but I pass your debug test (even without the larger values).

Original comment by DouglasS (Bitbucket: DouglasS). Hey folks, just wanted to chime in on what's happening from the IHMC side of things. At the very least, this definitely relates to the problem that @dan77062 is having. The control algorithm for the robot is designed to run in an environment with a real-time kernel patch and core isolation. On the physical Val hardware this requires a quad core processor with hyper threading and turboboost disabled. We reserve Core 0 for IRQ/interrupt handling, cores 1 and 2 for our state estimation and control algorithm threads respectively, and core 4 for all other processes that have to run. Note that these are physical cores, not virtual cores, because hyper threading is problematic in a realtime environment which is why we disable it on the real robot. This is why we inspect the Obviously all of that isn't really needed in simulation because we run in lock-step with the physics. We're now tracking this issue as well: ihmcrobotics/ihmc-open-robotics-software#99 We should have a fix for it and a release in the next few days.
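To illustrate the physical versus virtual core distinction, here is a small sketch that counts both from `/proc/cpuinfo`-style text on x86 Linux. It is not how IHMC's controller inspects the system. The function names are hypothetical, the sample text is made up to resemble a 2-core, 4-thread CPU like the one reported in this thread, and the required core indices 1 and 2 are taken from the comments above.

```python
def count_cores(cpuinfo_text):
    """Return (logical, physical) core counts from /proc/cpuinfo-style text."""
    logical = 0
    physical = set()
    package_id = None
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # blank separator line between processor entries
        key, value = key.strip(), value.strip()
        if key == "processor":
            logical += 1
            package_id = None
        elif key == "physical id":
            package_id = value
        elif key == "core id":
            # A physical core is identified by (package, core) pair;
            # hyper-threaded siblings repeat the same pair.
            physical.add((package_id, value))
    return logical, len(physical)


def missing_cores(required, physical_count):
    """Return the required zero-indexed cores that do not exist."""
    return sorted(c for c in required if c >= physical_count)


# Made-up sample: one package, two physical cores, two threads each.
SAMPLE = """\
processor : 0
physical id : 0
core id : 0

processor : 1
physical id : 0
core id : 1

processor : 2
physical id : 0
core id : 0

processor : 3
physical id : 0
core id : 1
"""

logical, physical = count_cores(SAMPLE)
print(logical, physical, missing_cores({1, 2}, physical))  # prints: 4 2 [2]
```

On a real x86 Linux machine the text could come from `open("/proc/cpuinfo").read()`. Other architectures may not expose the `physical id` and `core id` fields, so treat this as a rough check only.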

Original comment by Jordan Lack (Bitbucket: jordanlack). It is likely that you're running into the same problem that @dan77062 is, which is due to you not having enough physical cores on your processor. A way to verify that is to do what @dan77062 did above and launch the IHMC controller stuff separately and you should get this error,

Original comment by sringer99 (Bitbucket: sringer99). I can get it to run 100% of the time at 8 and 4 seconds. Fails every time at 2 seconds with the same error as we have been getting. Note: I have the doubler on the delay script.

    roslaunch val_gazebo val_sim.launch
    ... logging to /home/sringer/.ros/log/5a68a7cc-a5d1-11e6-bc2b-f832e4bba978/roslaunch-Mingo-Mtn-Robotics-24044.log
    Checking log directory for disk usage. This may take awhile.
    Press Ctrl-C to interrupt
    Done checking log file disk usage. Usage is <1GB.
    started roslaunch server http://Mingo-Mtn-Robotics:43310/
    SUMMARY
    ========
    PARAMETERS
    NODES
      /
        control_py_Mingo_Mtn_Robotics_24044_828331878780894790 (robonet_tools/control)
        controller_manager_Mingo_Mtn_Robotics_24044_2827938922632370286 (val_deploy/delayed_roslaunch.sh)
        smtcore_Mingo_Mtn_Robotics_24044_2209162283755310182 (shared_memory_transport/smtcore)
    auto-starting new master
    process[master]: started with pid [24056]
    ROS_MASTER_URI=http://localhost:11311
    setting /run_id to 5a68a7cc-a5d1-11e6-bc2b-f832e4bba978
    process[rosout-1]: started with pid [24069]
    started core service [/rosout]
    process[smtcore_Mingo_Mtn_Robotics_24044_2209162283755310182-2]: started with pid [24084]
    process[control_py_Mingo_Mtn_Robotics_24044_828331878780894790-3]: started with pid [24087]
    process[controller_manager_Mingo_Mtn_Robotics_24044_2827938922632370286-4]: started with pid [24088]
    delaying: 1 seconds
    Log file /home/sringer/.log already exists, proceeding...
    Log file /home/sringer/.log already exists, proceeding...
    starting: roslaunch val_deploy val_control_sim.launch controller_manager_looprate:=500 __log:=/home/sringer/.ros/log/5a68a7cc-a5d1-11e6-bc2b-f832e4bba978/controller_manager_Mingo_Mtn_Robotics_24044_2827938922632370286-4.log
    ... logging to /home/sringer/.ros/log/5a68a7cc-a5d1-11e6-bc2b-f832e4bba978/roslaunch-Mingo-Mtn-Robotics-24096.log
    Checking log directory for disk usage. This may take awhile.
    Press Ctrl-C to interrupt
    Done checking log file disk usage. Usage is <1GB.
    started roslaunch server http://Mingo-Mtn-Robotics:40870/
    SUMMARY
    ========
    PARAMETERS
    NODES
      /
        controller_manager_Mingo_Mtn_Robotics_24096_6578599215757810822 (val_controller_manager_rtt/controller_exec)
    ROS_MASTER_URI=http://localhost:11311
    core service [/rosout] found
    process[controller_manager_Mingo_Mtn_Robotics_24096_6578599215757810822-1]: started with pid [24114]
    exception subscribing to JointAPS_Angle_Rad : /pelvis/waist/JointAPS_Angle_Rad has not been registered.
    exception subscribing to JointAPS_Vel_Radps : /pelvis/waist/JointAPS_Vel_Radps has not been registered.
    exception subscribing to JointTorque_Des_Nm : /pelvis/waist/JointTorque_Des_Nm has not been registered.
    exception subscribing to JointTorque_Meas_Nm : /pelvis/waist/JointTorque_Meas_Nm has not been registered.
    exception subscribing to Position_Des_Rad : /pelvis/waist/Position_Des_Rad has not been registered.
    exception subscribing to Velocity_Des_Radps : /pelvis/waist/Velocity_Des_Radps has not been registered.
    2016-11-08 08:35:29 localhost ncl_cpp: [ERROR ] [gov.nasa.RobotInterface.addJointsAndActuators] Exception encountered while attempting to add an actuator! Check your
    [ERROR] [1478622929.865724729]: Exception encountered while attempting to add an actuator! Check your URDF for correct XML formatting!
    2016-11-08 08:35:29 localhost ncl_cpp: [ERROR ] [gov.nasa.HardwareInterface.makeHandle] Robot Hardware has failed Initialized properly.
    [ERROR] [1478622929.866061262]: Fatal exception error encountered initializing RobotHardwareInterface, exiting
    2016-11-08 08:35:29 localhost ncl_cpp: [FATAL ] [gov.nasa.ControllerExec.ORO_main] Error encountered during configure hook for controller_manager component!
    ================================================================================
    REQUIRED process [controller_manager_Mingo_Mtn_Robotics_24096_6578599215757810822-1] has died!
    process has died [pid 24114, exit code 1, cmd /opt/nasa/indigo/lib/val_controller_manager_rtt/controller_exec --rate 500 -s __name:=controller_manager_Mingo_Mtn_Robotics_24096_6578599215757810822 __log:=/home/sringer/.ros/log/5a68a7cc-a5d1-11e6-
    log file: /home/sringer/.ros/log/5a68a7cc-a5d1-11e6-bc2b-f832e4bba978/controller_manager_Mingo_Mtn_Robotics_24096_6578599215757810822-1*.log
    Initiating shutdown!
    ================================================================================
    shutting down processing monitor...
    ... shutting down processing monitor complete
    done
    [controller_manager_Mingo_Mtn_Robotics_24044_2827938922632370286-4] process has finished cleanly
    log file: /home/sringer/.ros/log/5a68a7cc-a5d1-11e6-bc2b-f832e4bba978/controller_manager_Mingo_Mtn_Robotics_24044_2827938922632370286-4*.log
    ^C[control_py_Mingo_Mtn_Robotics_24044_828331878780894790-3] killing on exit
    [smtcore_Mingo_Mtn_Robotics_24044_2209162283755310182-2] killing on exit
    [rosout-1] killing on exit
    [master] killing on exit
    shutting down processing monitor...
    ... shutting down processing monitor complete
    done

Original comment by Jordan Lack (Bitbucket: jordanlack). Ok thanks @sringer99. I'm a bit confused as to why those delays need to be so long, because the processes that are being waited for are pretty quick to spin up. My only guess is that it's something to do with roslaunch or maybe your computer in general. Anywho, I'll extend those wait times and work them into our next release. Thanks for taking the time to debug a bit!

Original comment by sringer99 (Bitbucket: sringer99).

Here are the log files for my last fail

Original comment by Rud Merriam (Bitbucket: rmerriam). Jordan, could you make the delays arguments for the startup so those who don't have the problem can start the system more quickly?

Original comment by Jordan Lack (Bitbucket: jordanlack). Yeah that's a good idea. I'll add that in.
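As a rough sketch of what a configurable delay could look like, the wrapper below takes the delay as an optional argument and falls back to a default. The `--delay` flag, the function names, and the 1-second default are illustrative assumptions (the default only mirrors the "delaying: 1 seconds" line in the log above). None of this is an existing option of `delayed_roslaunch.sh` or roslaunch, and the actual release may well expose the delay differently, for example as a launch-file argument.

```python
import subprocess
import time

DEFAULT_DELAY_S = 1.0  # assumed default, mirroring "delaying: 1 seconds" in the log


def split_delay(argv, default=DEFAULT_DELAY_S):
    """Return (delay_seconds, command) from e.g. ['--delay', '8', 'roslaunch', ...]."""
    if len(argv) >= 2 and argv[0] == "--delay":
        return float(argv[1]), list(argv[2:])
    return default, list(argv)


def delayed_run(argv, sleep=time.sleep, run=subprocess.call):
    """Sleep for the requested delay, then run the remaining command."""
    delay, command = split_delay(argv)
    if not command:
        raise ValueError("no command given")
    print("delaying: %s seconds" % delay)
    sleep(delay)
    print("starting: %s" % " ".join(command))
    return run(command)


# Demonstration with stand-ins so nothing is actually slept on or launched.
recorded = []
exit_code = delayed_run(
    ["--delay", "8", "roslaunch", "val_deploy", "val_control_sim.launch"],
    sleep=recorded.append,  # record the delay instead of sleeping
    run=lambda cmd: 0,      # pretend the command exited cleanly
)
```

Users whose machines start quickly could then pass a small delay, while those hitting the race could raise it without editing the script.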

Original comment by dan (Bitbucket: dan77062). Douglas Stephen,

Original comment by Erica Tiberia (Bitbucket: T_AL). Memory: 11.6 GiB. I have increased the delay in the delayed_roslaunch.sh file to 8 seconds, and I commented out the lines that lower the harness and detach Val in the init_robot.sh file.

Original comment by Jordan Lack (Bitbucket: jordanlack). Erica could you post that as its own issue? @nmertins should probably see that as well

Original comment by Nate Koenig (Bitbucket: Nathan Koenig).

Original comment by Nate Koenig (Bitbucket: Nathan Koenig). Issue #16 was marked as a duplicate of this issue.

Original comment by Nate Koenig (Bitbucket: Nathan Koenig). Issue #21 was marked as a duplicate of this issue.


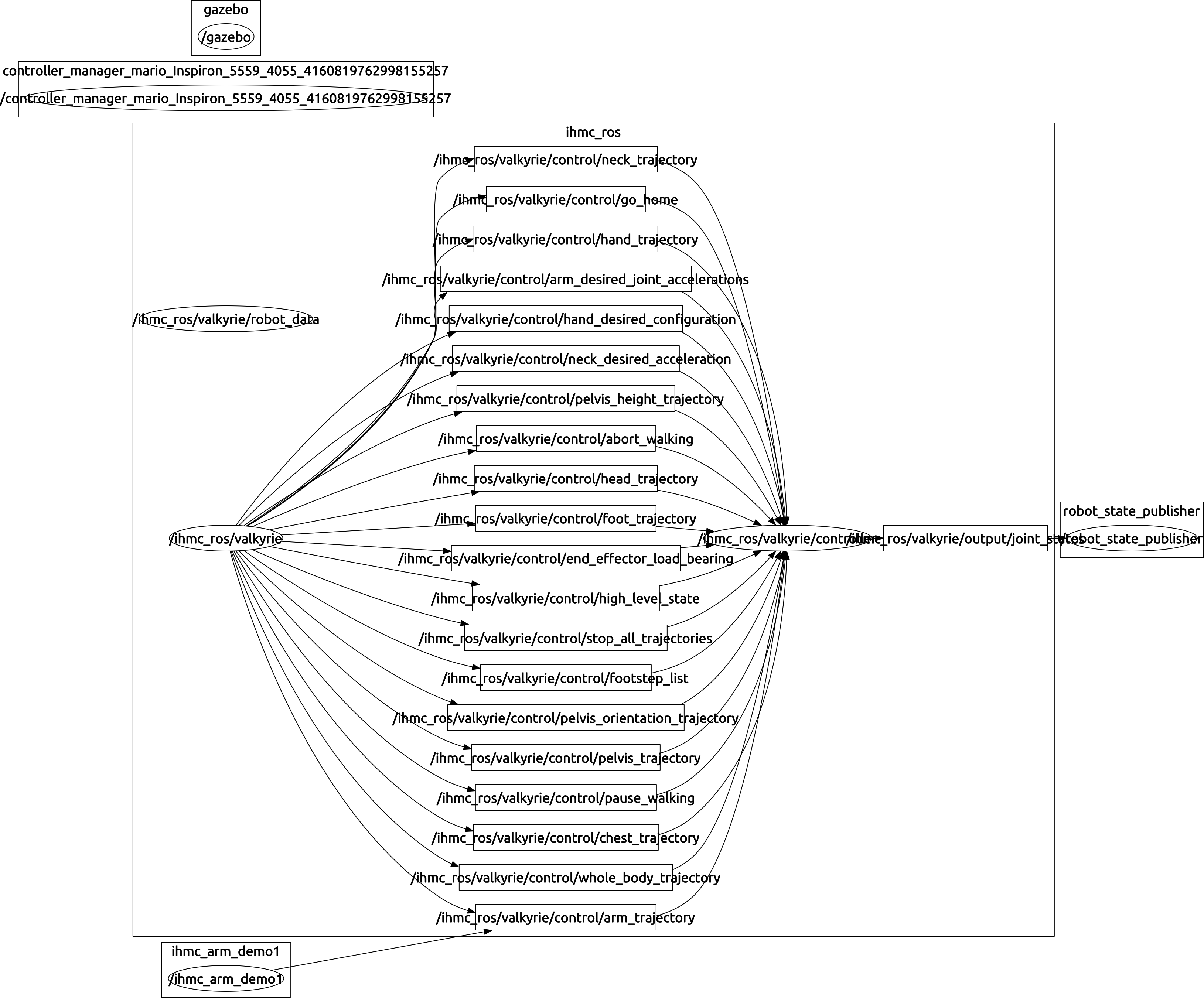

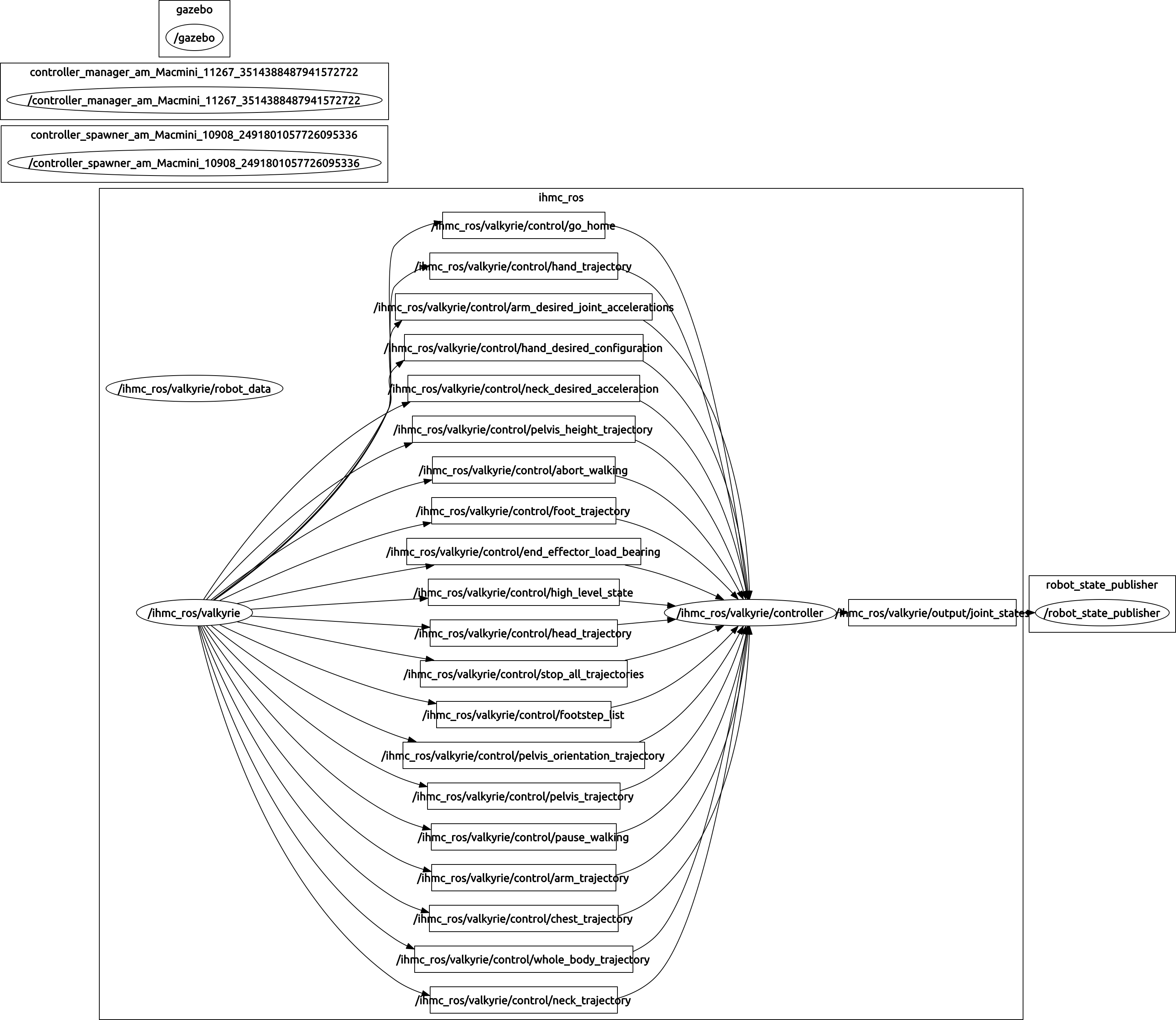

Original comment by kapoor_amita@yahoo.com (Bitbucket: Amita94). Attached is the roslaunch log and rqt_graph image when val falls (90% of time).

    Script started on Monday 21 November 2016 10:54:55 PM IST
    SUMMARY
    PARAMETERS
    NODES
    auto-starting new master
    [ INFO] [1479749096.239155720]: Finished loading Gazebo ROS API Plugin.
    SUMMARY
    PARAMETERS
    NODES
    ROS_MASTER_URI=http://localhost:11311
    [INFO] [WallTime: 1479749101.652679] [4.037000] Controller Spawner: Waiting for service controller_manager/switch_controller
    SUMMARY
    PARAMETERS
    NODES
    ROS_MASTER_URI=http://localhost:11311
    A fatal error has been detected by the Java Runtime Environment:
    SIGSEGV (0xb) at pc=0x00007fa8709bec4a, pid=11288, tid=140361427134400
    JRE version: OpenJDK Runtime Environment (7.0_121) (build 1.7.0_121-b00)
    Java VM: OpenJDK 64-Bit Server VM (24.121-b00 mixed mode linux-amd64 compressed oops)
    Derivative: IcedTea 2.6.8
    Distribution: Ubuntu 14.04 LTS, package 7u121-2.6.8-1ubuntu0.14.04.1
    Problematic frame:
    C [liborocos-rtt-gnulinux.so.2.8+0x159c4a] RTT::ComponentLoader::unloadComponent(RTT::TaskContext*)+0x6a
    Failed to write core dump. Core dumps have been disabled. To enable core dumping, try "ulimit -c unlimited" before starting Java again
    An error report file with more information is saved as:
    /home/am/.ros/hs_err_pid11288.log
    pure virtual method called
    Script done on Monday 21 November 2016 10:58:04 PM IST

Original comment by Jordan Lack (Bitbucket: jordanlack). @Amita94 it looks like this is a separate issue from the one this ticket was opened to address. It's a bit difficult to parse through that output, but it sounds like you're having a problem with Valkyrie actually falling down in Gazebo, whereas this issue was created for a crash. I am currently working on a release that includes a number of features, including a fix for the crashing issue reported by a few users here (see top of this thread).

Original comment by Steve Peters (Bitbucket: Steven Peters, GitHub: scpeters). Ok, everyone, IHMC has released a new version of their controller (version 0.8.1), and I've uploaded a new valkyrie_controller.tar.gz (step 10 in installation instructions), so please delete the Can some folks test this out to see if it helps?

Original comment by Nuttaworn Sujumnong (Bitbucket: nsujumnong). Excellent. The controller works on my computer now. Also, I don't get hyperthreading error message any longer, which is kinda cool. Thank you!

Original comment by dan (Bitbucket: dan77062). It no longer has the IndexOutOfBoundsException, so the patch has solved the 2 core problem-- thanks! Unfortunately, the robot still collapses. Since I think that is due to other reasons, I put a comment along with output into issue #9.

Original comment by Adam Allevato (Bitbucket: Kukanani). The new controller definitely seems to have improved software stability. Good work.

Original comment by dan (Bitbucket: dan77062).

The two core problem is solved-- thanks!

Original report (archived issue) by dan (Bitbucket: dan77062).

The original report had attachments: master.log, roslaunch-Mingo-Mtn-Robotics-24044.log, control_py_Mingo_Mtn_Robotics_24044_828331878780894790-3-stdout.log, roslaunch-Mingo-Mtn-Robotics-24096.log, rosout.log, rosout-1-stdout.log

The controller manager crashes as it tries to initialize the hardware interface. This continues after updating with the fix for the $HOME/valkyrie directory missing issue (issue #4). See the log below.

I am wondering if it is a race condition. It will load when gazebo_gui seg faults (that happens about 20% of the time). Log of that follows the controller manager crash log.

Here is the log of the controller manager crashing:

Here the controller manager loads after gazebo_gui seg faults:
