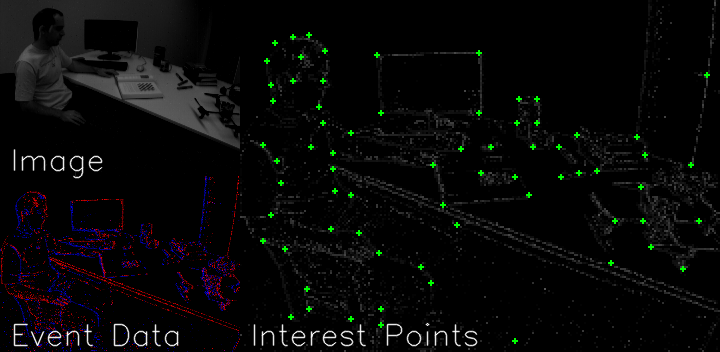

We developed an interest point detector that locates interest points in event camera data. The idea is adapted from "SuperPoint: Self-Supervised Interest Point Detection and Description" by Daniel DeTone, Tomasz Malisiewicz, and Andrew Rabinovich (arXiv 2018) [1]. The code is partially based on the PyTorch implementation at https://github.com/eric-yyjau/pytorch-superpoint.

Our algorithm mainly consists of two parts:

- We first train our detector network with synthetic event data.

- We iteratively use the trained detector network to label interest points of a real-world dataset and then train the network with the labeled dataset.
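The two stages above can be sketched as a short loop. This is only an illustration of the control flow, not the repository's training code: `self_label_rounds`, `train_fn`, `label_fn`, and the `rounds` parameter are hypothetical names introduced here, and the real scripts are driven by the config files used in the commands further down.

```python
from typing import Callable, List, Sequence, Tuple

Point = Tuple[int, int]            # (x, y) pixel location of an interest point
Sample = Tuple[str, List[Point]]   # (frame identifier, interest point labels)


def self_label_rounds(
    detector,
    train_fn: Callable[[object, Sequence[Sample]], object],
    label_fn: Callable[[object, str], List[Point]],
    synthetic_data: Sequence[Sample],
    real_frames: Sequence[str],
    rounds: int = 2,
):
    """Hypothetical sketch: train on synthetic data, then label and retrain."""
    # Stage 1: supervised training on synthetic event data with known labels.
    detector = train_fn(detector, synthetic_data)
    # Stage 2: the current detector labels the real data, then is retrained on it.
    for _ in range(rounds):
        pseudo_labeled = [(frame, label_fn(detector, frame)) for frame in real_frames]
        detector = train_fn(detector, pseudo_labeled)
    return detector
```

Each pass through the loop replaces the labels of the real-world frames with the predictions of the most recently trained detector, so label quality can improve from round to round.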

The code runs on Python 3.6, PyTorch 1.5.0, and ROS. We tested it on Ubuntu 18.04 with ROS Melodic. Installation instructions for ROS can be found here. To generate synthetic event data, we used "ESIM: an Open Event Camera Simulator" [4]. Installation details for ESIM can be found here.

conda create --name py36-sp python=3.6

conda activate py36-sp

pip install -r requirements.txt

pip install -r requirements_torch.txt # install pytorch

sudo apt-get update

sudo apt-get install ros-melodic-desktop-full

sudo apt install python-rosdep python-rosinstall python-rosinstall-generator python-wstool build-essential

After installing ROS, don't forget to install the event camera simulator ESIM.

In the second step, we used data sequences (as rosbags) from MVSEC [2] and the IJRR (ETH) event camera dataset [3] to further train our network. This code processes events in HDF5 format. To convert the rosbags to this format, open a new terminal and source a ROS workspace. We recommend using the conversion tools from https://github.com/TimoStoff/event_cnn_minimal [5].

source /opt/ros/melodic/setup.bash

python events_contrast_maximization/tools/rosbag_to_h5.py <path/to/rosbag/or/dir/with/rosbags> --output_dir <path/to/save_h5_events> --event_topic <event_topic> --image_topic <image_topic>
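Because MVSEC and the IJRR dataset publish events and images on different topics, it can help to assemble the conversion command per dataset. The sketch below only builds the argument list for the command above, using the flags shown there. The helper name `build_convert_command` and the topic names in `ASSUMED_TOPICS` are assumptions for illustration; check the actual topics of your bags with `rosbag info` before relying on them.

```python
from typing import Dict, List, Tuple

# Assumed topic names (event topic, image topic); verify with `rosbag info`.
ASSUMED_TOPICS: Dict[str, Tuple[str, str]] = {
    "ijrr": ("/dvs/events", "/dvs/image_raw"),
    "mvsec": ("/davis/left/events", "/davis/left/image_raw"),
}


def build_convert_command(bag_path: str, output_dir: str, dataset: str) -> List[str]:
    """Assemble the rosbag_to_h5.py call as an argument list for one dataset."""
    event_topic, image_topic = ASSUMED_TOPICS[dataset]
    return [
        "python", "events_contrast_maximization/tools/rosbag_to_h5.py",
        bag_path,
        "--output_dir", output_dir,
        "--event_topic", event_topic,
        "--image_topic", image_topic,
    ]
```

The returned list can be passed to `subprocess.run(cmd, check=True)` from a shell in which the ROS workspace has been sourced.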

All commands should be executed within the pytorch-sp folder.

Modify the output path in the bash script to set your synthetic data output location. We developed a Python event simulator, pyV2E, which mimics the existing ESIM simulator and generates event data from video or image input.
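For readers unfamiliar with how events can be derived from frames, here is a minimal sketch of the common contrast-threshold model: a pixel fires an event each time its log intensity moves by a fixed threshold away from the level at which it last fired. This is not pyV2E's or ESIM's implementation. The function name `generate_events`, the `threshold` and `eps` values, and the choice to stamp every event with its frame's timestamp (real simulators interpolate timestamps between frames) are all assumptions made for illustration.

```python
import math
from typing import List, Tuple

Event = Tuple[int, int, float, int]  # (x, y, timestamp, polarity)


def generate_events(
    frames: List[List[List[float]]],
    timestamps: List[float],
    threshold: float = 0.2,
    eps: float = 1e-3,
) -> List[Event]:
    """Emit events from grayscale frames using a per-pixel contrast threshold."""
    height, width = len(frames[0]), len(frames[0][0])
    # Per-pixel reference log intensity, initialised from the first frame.
    ref = [[math.log(frames[0][y][x] + eps) for x in range(width)]
           for y in range(height)]
    events: List[Event] = []
    for frame, t in zip(frames[1:], timestamps[1:]):
        for y in range(height):
            for x in range(width):
                diff = math.log(frame[y][x] + eps) - ref[y][x]
                polarity = 1 if diff > 0 else -1
                # A large change can cross the threshold several times.
                for _ in range(int(abs(diff) / threshold)):
                    events.append((x, y, t, polarity))
                    ref[y][x] += polarity * threshold
    return events
```

With the default threshold, a pixel that doubles in brightness between two frames emits three positive events, while an unchanged pixel emits none.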

cd generate_data

bash generate.sh

python train.py train_base configs/magicpoint_shapes_pair.yaml magicpoint_synth --eval

python export.py export_detector_homoAdapt configs/magicpoint_coco_export.yaml magicpoint_synth_homoAdapt_coco

python train4.py <train task> <config file> <export folder> --eval

| Sequence |  |
| --- | --- |
| boxes_6dof |  |
| dynamic_6dof |  |
| office_spiral |  |
| urban |  |

[1] DeTone, D., Malisiewicz, T., & Rabinovich, A. (2018). SuperPoint: Self-Supervised Interest Point Detection and Description. 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), 337-33712.

[2] Zhu, A.Z., Thakur, D., Özaslan, T., Pfrommer, B., Kumar, V., & Daniilidis, K. (2018). The Multivehicle Stereo Event Camera Dataset: An Event Camera Dataset for 3D Perception. IEEE Robotics and Automation Letters, 3, 2032-2039.

[3] Mueggler, E., Rebecq, H., Gallego, G., Delbrück, T., & Scaramuzza, D. (2017). The event-camera dataset and simulator: Event-based data for pose estimation, visual odometry, and SLAM. The International Journal of Robotics Research, 36, 142 - 149.

[4] Rebecq, H., Gehrig, D., & Scaramuzza, D. (2018). ESIM: an Open Event Camera Simulator. CoRL.

[5] Stoffregen, T., Scheerlinck, C., Scaramuzza, D., Drummond, T., Barnes, N., Kleeman, L., & Mahony, R. (2020). Reducing the Sim-to-Real Gap for Event Cameras. ECCV.