Fix distributed documentation for asynchronous collective Work objects #45709

Codecov Report

Coverage diff, master vs. #45709:

| | master | #45709 | +/- |
|---|---|---|---|
| Coverage | 68.25% | 68.25% | |
| Files | 410 | 410 | |
| Lines | 53246 | 53246 | |
| Hits | 36343 | 36344 | +1 |
| Misses | 16903 | 16902 | -1 |

Thanks for fixing!

docs/source/distributed.rst

Outdated

return anything

asynchronous operation - when ``async_op`` is set to True. The collective operation function

Every collective operation function supports the following two kinds of operations, depending on the setting of the ``async_op`` flag passed into the collective:

let's break this into shorter lines

docs/source/distributed.rst

Outdated

further function calls utilizing the output of the collective call will behave as expected. For CUDA collectives,
function calls utilizing the output on the same CUDA stream will behave as expected. Users must take care of
synchronization under the scenario of running under different streams. For details on CUDA semantics such as stream
synchronization, see `cuda semantics <https://pytorch.org/docs/stable/autograd.html#profiler>`__.

cuda semantics points to profiler, is this intentional?

Thanks for the catch! It should actually point to https://pytorch.org/docs/stable/notes/cuda.html

@rohan-varma has imported this pull request. If you are a Facebook employee, you can view this diff on Phabricator.

@rohan-varma merged this pull request in 154347d.

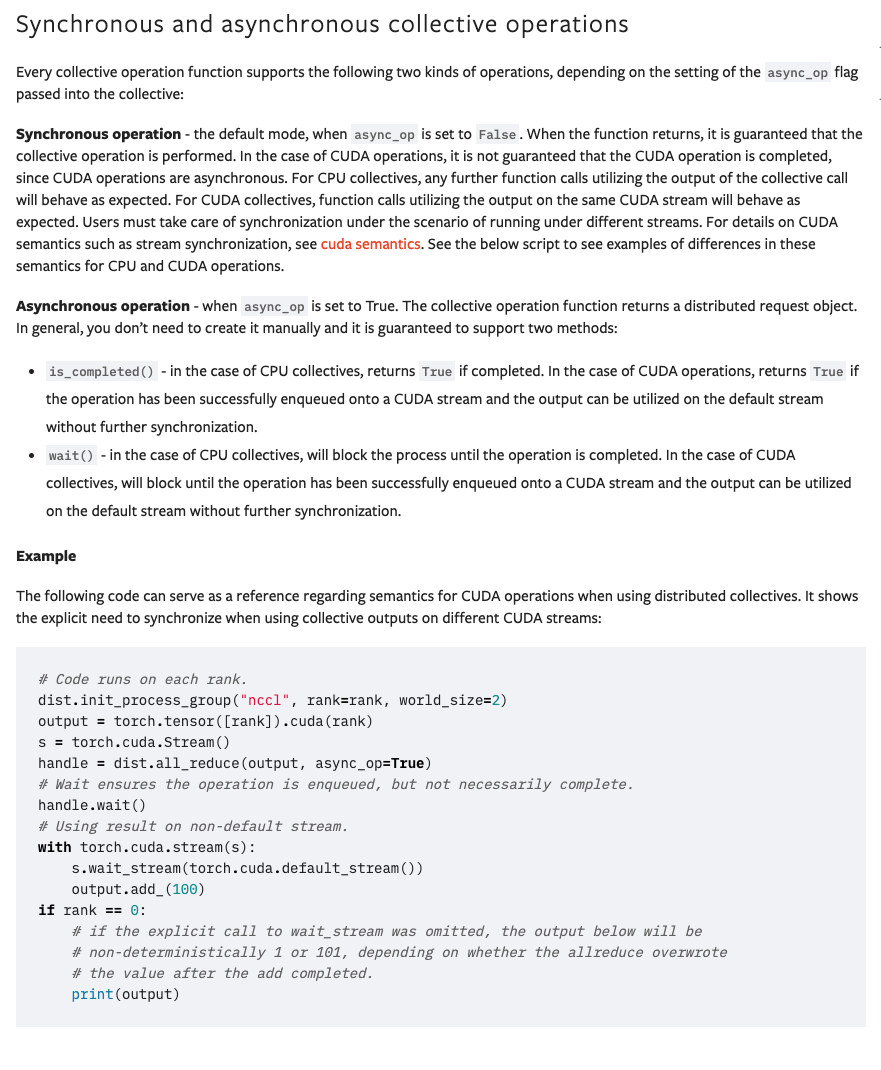

Closes #42247. Clarifies the documentation of `Work` object semantics (the outputs of asynchronous collective functions). It explains the difference between CPU operations and CUDA operations (on the Gloo or NCCL backend) and provides an example in which understanding the `wait()` semantics of CUDA operations is necessary to write correct code.
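
As a rough illustration of the distinction described here, the sketch below runs an asynchronous `all_reduce` in a single-process Gloo group on CPU, where the result can be used as soon as `wait()` returns. The second helper follows the side-stream pattern for CUDA collectives discussed in the review: after `wait()`, a non-default stream still has to synchronize with the default stream before touching the output. The function names `async_all_reduce_cpu` and `use_on_side_stream`, the single-process setup, and the file-based rendezvous are assumptions made for the example, not part of this PR.

```python
import os
import tempfile

import torch
import torch.distributed as dist


def async_all_reduce_cpu() -> torch.Tensor:
    """CPU case (hypothetical helper): the result is safe to use once wait() returns."""
    # Assumption: a one-rank Gloo group with a temporary file store, so the
    # sketch runs without launching several processes.
    store_path = os.path.join(tempfile.mkdtemp(), "store")
    dist.init_process_group(
        "gloo", init_method=f"file://{store_path}", rank=0, world_size=1
    )
    try:
        t = torch.ones(4)
        work = dist.all_reduce(t, async_op=True)  # returns a Work handle
        work.wait()  # blocks until the CPU collective has completed
        t.add_(100)  # safe: the all_reduce result is already in t
        return t
    finally:
        dist.destroy_process_group()


def use_on_side_stream(output: torch.Tensor, work) -> None:
    """CUDA case (hypothetical helper, not called below): needs a GPU and a CUDA-capable backend."""
    # For CUDA collectives, wait() only orders the result with respect to the
    # current stream; it does not make the result visible to other streams.
    work.wait()
    side = torch.cuda.Stream()
    with torch.cuda.stream(side):
        # Without this explicit synchronization, the add below could race
        # with the collective that is still running on the default stream.
        side.wait_stream(torch.cuda.default_stream())
        output.add_(100)


if __name__ == "__main__":
    print(async_all_reduce_cpu())
```

With a single rank the sum reduction leaves the tensor unchanged, so the CPU helper only demonstrates ordering. A real multi-rank run would call `init_process_group` with the job's actual rank and world size.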