
Back out "reuse consant from jit" #50521

Closed

Closed

Conversation

This file contains bidirectional Unicode text that may be interpreted or compiled differently than what appears below. To review, open the file in an editor that reveals hidden Unicode characters.

Learn more about bidirectional Unicode characters

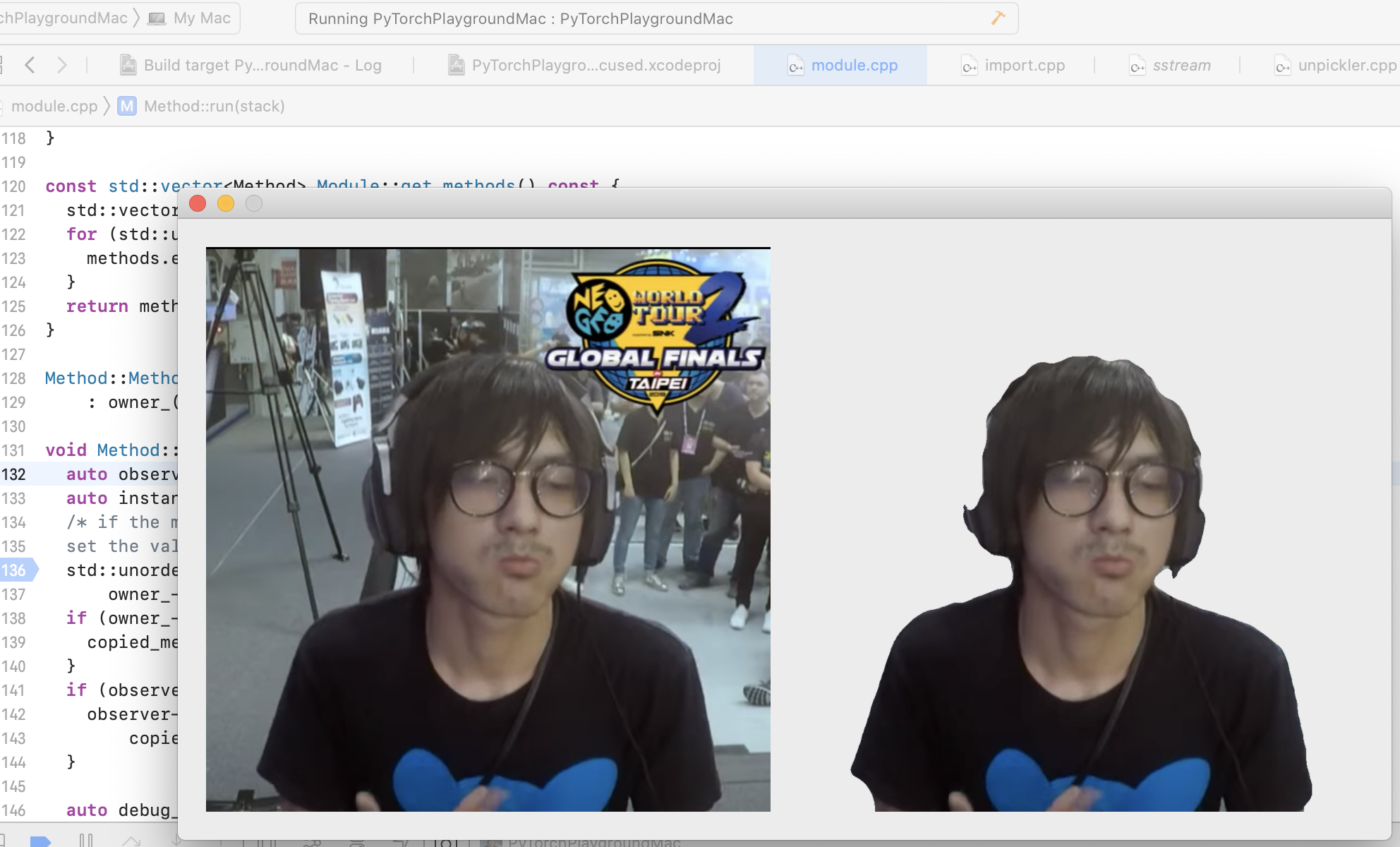

Summary: Original commit changeset: 9731ec1e0c1d

Test Plan:
- run `arc focus2 -b pp-ios //xplat/arfx/tracking/segmentation:segmentationApple -a ModelRunner --force-with-bad-commit`
- build via Xcode, run it on an iOS device
- Click "Person Segmentation"
- Crash observed without the diff patched, and the segmentation image is able to be loaded with this diff patched

Reviewed By: husthyc

Differential Revision: D25908493

fbshipit-source-id: c848a6d423b930a6504d0788793a259e586e2c54

💊 CI failures summary and remediations

As of commit d7aa8b8 (more details on the Dr. CI page):

❄️ 1 failure tentatively classified as flaky, but reruns have not yet been triggered to confirm.

This pull request was exported from Phabricator. Differential Revision: D25908493

xta0 approved these changes Jan 14, 2021

This pull request has been merged in e05882d.

cccclai added a commit that referenced this pull request Jan 22, 2021

## Summary

This change was originally introduced in #49916; however, it ran into an issue with one model and was reverted in #50521. This PR re-enables reusing constants from JIT, with a fix for that issue.

The issue is in the last `else` statement. It shouldn't be an `else`: the fallback has to catch every case in which any of the `if` conditions fails, not only the case where `const_item` is not a tuple. The correct code removes the `else`, pushes the JIT constant to `updated_constant_vals` when the current `const_item` meets all criteria, and then adds a `continue`. The problem is tricky to debug, since the code runs in different threads and sometimes breaks at an unrelated place.

Before:

```
std::vector<IValue> updated_constant_vals;
for (const auto& const_item : consts_list) {
  if (const_item.isTuple()) {
    const auto& tensor_jit = const_item.toTuple()->elements();
    if (tensor_jit.size() > 1) {
      const auto& tensor_jit_index_key = tensor_jit[0];
      const auto& tensor_jit_index = tensor_jit[1];
      if (tensor_jit_index_key.isString() &&
          tensor_jit_index_key.toString().get()->string() ==
              mobile::kTensorJitIndex) {
        updated_constant_vals.push_back(
            constant_vals_from_jit[tensor_jit_index.toInt()]);
      }
    }
  } else {
    updated_constant_vals.push_back(const_item);
  }
}
```

Current:

```
for (const auto& const_item : consts_list) {
  if (const_item.isTuple()) {
    const auto& tensor_jit = const_item.toTuple()->elements();
    if (tensor_jit.size() > 1) {
      const auto& tensor_jit_index_key = tensor_jit[0];
      const auto& tensor_jit_index = tensor_jit[1];
      if (tensor_jit_index_key.isString() &&
          tensor_jit_index_key.toString().get()->string() ==
              mobile::kTensorJitIndex) {
        updated_constant_vals.push_back(
            constant_vals_from_jit[tensor_jit_index.toInt()]);
        continue;
      }
    }
  }
  updated_constant_vals.push_back(const_item);
}
```

## Test plan

In addition to running the tests in #49916:

1. Run PyTorchPlayGroundMac
2. `buck test pp-macos`

### Summary and test plan copied from #49916

## Summary

JIT generates constant tensor values, which are located in the `constants` folder after unzipping `model.ptl`. The bytecode generated by the lite interpreter also includes constant tensors, which are almost the same as the constant tensor values from JIT. This PR reuses the constant tensors from JIT.

The implementation is:

1. In `export_module.cpp`, store all constant tensor values from JIT in an `unordered_map` named `constants_from_jit`, where the tensor string is used as the hash key. When writing bytecode (the `writeByteCode` function), the map `constants_from_jit` is also passed in. If a constant in the bytecode exists in `constants_from_jit`, it is replaced by a tuple of the form `('tensor_jit_index', 4)`, where 4 is the index in the tensor table from JIT.
2. In `import.cpp`, the loader also reads the `constants` folder and loads the constant tensors. It then scans the constants from the bytecode. If a tuple of the form `('tensor_jit_index', 4)` appears under the `constants` field, the loader fetches the constant from the JIT tensor table and substitutes it.

Previous `python -m torch.utils.show_pickle bytecode.pkl`:

```
...
('constants',
 (0.1, 1e-05, None, 2, 1, False, 0, True, False, 1, 2, 3,
  torch._utils._rebuild_tensor_v2(pers.obj(('storage', torch.FloatStorage, '0', 'cpu', 90944),), 0, (1, 116, 28, 28), (90944, 784, 28, 1), False, collections.OrderedDict()),
  torch._utils._rebuild_tensor_v2(pers.obj(('storage', torch.FloatStorage, '1', 'cpu', 90944),), 0, (1, 116, 28, 28), (90944, 784, 28, 1), False, collections.OrderedDict()),
  torch._utils._rebuild_tensor_v2(pers.obj(('storage', torch.FloatStorage, '2', 'cpu', 90944),), 0, (1, 116, 28, 28), (90944, 784, 28, 1), False, collections.OrderedDict()),
  0.1, 1e-05, None, 2, 1, False,
  ...
 )
...
```

Now `python -m torch.utils.show_pickle bytecode.pkl`:

```
('constants',
 (0.1, 1e-05, None, 2, 1, False, 0, True, False, 1, 2, 3,
  ('tensor_jit_index', 4), ('tensor_jit_index', 3), ('tensor_jit_index', 2),
  0.1, 1e-05, None, 2, 1, False, 0, 24, True, 58,
  ('tensor_jit_index', 0), 3, ('tensor_jit_index', 1), -1,
  ('tensor_jit_index', 12), ('tensor_jit_index', 11), ('tensor_jit_index', 10),
  ('tensor_jit_index', 9), ('tensor_jit_index', 8), ('tensor_jit_index', 7),
  ('tensor_jit_index', 6),
  0.1, 1e-05, None, 2, 1, False,
 )
...
```

## Test

1. Build PyTorch locally:

   `MACOSX_DEPLOYMENT_TARGET=10.9 CC=clang CXX=clang++ USE_CUDA=0 DEBUG=1 MAX_JOBS=16 python setup.py develop`

2. Run `python save_lite.py`:

```
import torch
# ~/Documents/pytorch/data/dog.jpg
model = torch.hub.load('pytorch/vision:v0.6.0', 'shufflenet_v2_x1_0', pretrained=True)
model.eval()

# sample execution (requires torchvision)
from PIL import Image
from torchvision import transforms
import pathlib
import tempfile
import torch.utils.mobile_optimizer

input_image = Image.open('~/Documents/pytorch/data/dog.jpg')
preprocess = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
])
input_tensor = preprocess(input_image)
input_batch = input_tensor.unsqueeze(0)  # create a mini-batch as expected by the model

# move the input and model to GPU for speed if available
if torch.cuda.is_available():
    input_batch = input_batch.to('cuda')
    model.to('cuda')

with torch.no_grad():
    output = model(input_batch)
# Tensor of shape 1000, with confidence scores over Imagenet's 1000 classes
print(output[0])
# The output has unnormalized scores. To get probabilities, you can run a softmax on it.
print(torch.nn.functional.softmax(output[0], dim=0))

traced = torch.jit.trace(model, input_batch)
sum(p.numel() * p.element_size() for p in traced.parameters())
tf = pathlib.Path('~/Documents/pytorch/data/data/example_debug_map_with_tensorkey.ptl')

torch.jit.save(traced, tf.name)
print(pathlib.Path(tf.name).stat().st_size)
traced._save_for_lite_interpreter(tf.name)
print(pathlib.Path(tf.name).stat().st_size)
print(tf.name)
```

3. Run `python test_lite.py`:

```
import torch
from torch.jit.mobile import _load_for_lite_interpreter
# sample execution (requires torchvision)
from PIL import Image
from torchvision import transforms

input_image = Image.open('~/Documents/pytorch/data/dog.jpg')
preprocess = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
])
input_tensor = preprocess(input_image)
input_batch = input_tensor.unsqueeze(0)  # create a mini-batch as expected by the model
reload_lite_model = _load_for_lite_interpreter('~/Documents/pytorch/experiment/example_debug_map_with_tensorkey.ptl')

with torch.no_grad():
    output_lite = reload_lite_model(input_batch)
# Tensor of shape 1000, with confidence scores over Imagenet's 1000 classes
print(output_lite[0])
# The output has unnormalized scores. To get probabilities, you can run a softmax on it.
print(torch.nn.functional.softmax(output_lite[0], dim=0))
```

4. Compare the results from PyTorch on master and PyTorch built locally with this change; the outputs are the same.
5. The model size was 16.1 MB and becomes 12.9 MB with this change.

Differential Revision: [D25982807](https://our.internmc.facebook.com/intern/diff/D25982807)

[ghstack-poisoned]
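
For readers who want to see the placeholder scheme and the `else` bug from the commit message above in isolation, here is a minimal Python sketch. It is not the PyTorch implementation: `FakeTensor`, `replace_with_placeholders`, `resolve_buggy`, and `resolve_fixed` are hypothetical names invented for illustration, and plain Python tuples stand in for `IValue` tuples. Only the `'tensor_jit_index'` key and the control flow mirror what the commit message describes.

```python
from dataclasses import dataclass

# Stands in for mobile::kTensorJitIndex from the commit message.
TENSOR_JIT_INDEX = "tensor_jit_index"


@dataclass(frozen=True)
class FakeTensor:
    """Hypothetical stand-in for a constant tensor (hashable so it can key a dict)."""
    values: tuple


def is_placeholder(item):
    """True for tuples of the form ('tensor_jit_index', <int>)."""
    return (
        isinstance(item, tuple)
        and len(item) > 1
        and isinstance(item[0], str)
        and item[0] == TENSOR_JIT_INDEX
    )


def replace_with_placeholders(bytecode_constants, jit_tensors):
    """Export side: swap tensors already present in the JIT table for placeholders."""
    index_by_tensor = {t: i for i, t in enumerate(jit_tensors)}
    result = []
    for const in bytecode_constants:
        if isinstance(const, FakeTensor) and const in index_by_tensor:
            result.append((TENSOR_JIT_INDEX, index_by_tensor[const]))
        else:
            result.append(const)
    return result


def resolve_buggy(consts, jit_tensors):
    """Import side with the original structure: the fallback is an `else`."""
    out = []
    for item in consts:
        if isinstance(item, tuple):
            if is_placeholder(item):
                out.append(jit_tensors[item[1]])
            # A tuple that is NOT a placeholder falls through here and is dropped.
        else:
            out.append(item)
    return out


def resolve_fixed(consts, jit_tensors):
    """Import side after the fix: `continue` on a match, otherwise always keep the item."""
    out = []
    for item in consts:
        if is_placeholder(item):
            out.append(jit_tensors[item[1]])
            continue
        out.append(item)
    return out


# Small demonstration with one ordinary tuple constant, (2, 3), mixed in.
jit_tensors = [FakeTensor((1.0, 2.0)), FakeTensor((3.0,))]
constants = [0.1, FakeTensor((3.0,)), (2, 3), None, FakeTensor((1.0, 2.0))]
stored = replace_with_placeholders(constants, jit_tensors)
restored_fixed = resolve_fixed(stored, jit_tensors)
restored_buggy = resolve_buggy(stored, jit_tensors)
```

In this sketch, `restored_fixed` round-trips to the original `constants`, while `restored_buggy` is one element short because the ordinary tuple `(2, 3)` is silently skipped. Every constant after the dropped one then sits at the wrong index, which is one plausible reason a failure of this kind would surface far from its cause.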

