Use puma worker count equal to processor count in production #46838

Mightn't it break an environment like Heroku, since the Eco plan has only 512 MB of memory? 🤔

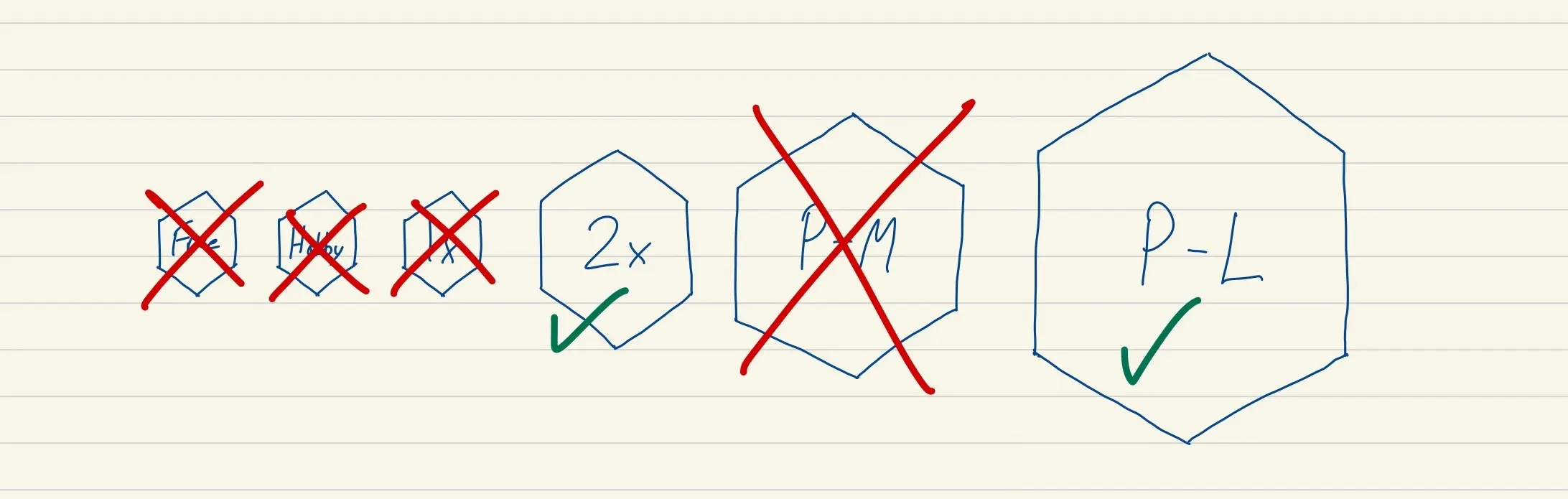

Optimizing the default production config for the cheapest possible Heroku plan seems pretty unreasonable. People who are on plans that cheap can set their …

This would be in 7.1, which is a "major" version in Rails terms, and this is only going to affect people who accept the diff on the generated file. A few commits ago, …

The article you quoted says this:

That's exactly what this PR does. Seems like a pretty sane default.

But even the plan …

The first line of the article makes it clear:

If memory usage stayed the same regardless of the number of workers, I wouldn't have any objections here.

Performance-M is not a good choice for Rails apps. https://judoscale.com/guides/how-many-dynos

So we are in an even worse situation, with just 1024 MB of RAM. Even the article recommends a WEB_CONCURRENCY of 2, whereas with the current Puma configuration file this value would be 4, or even 8 (I'm not sure which).
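
The concern here is essentially arithmetic: every forked Puma worker carries its own memory on top of what it shares with the master, so total usage grows roughly with the worker count. A minimal sketch, assuming a hypothetical `memory_capped_workers` helper and made-up footprint figures (these are illustrative, not measurements from any dyno), that caps the worker count by both CPUs and available RAM:

```ruby
# Hypothetical helper: choose a worker count that fits both the CPU count
# and a memory budget. All sizes are in megabytes and are illustrative
# assumptions, not measured values.
def memory_capped_workers(cpus:, total_mb:, per_worker_mb:, reserved_mb: 0)
  raise ArgumentError, "per_worker_mb must be positive" unless per_worker_mb.positive?

  # How many workers fit in the RAM left after the reserved overhead
  # (for example the Puma master process). Integer division rounds down.
  fit_in_memory = (total_mb - reserved_mb) / per_worker_mb

  # Never exceed the CPU count, never drop below one worker.
  [[fit_in_memory, cpus].min, 1].max
end

# A 1024 MB host with 8 visible CPUs, assuming ~400 MB per worker and
# ~100 MB for the master process:
puts memory_capped_workers(cpus: 8, total_mb: 1024, per_worker_mb: 400, reserved_mb: 100)
```

With these made-up numbers memory, not CPU count, is the binding limit, which is the situation the comment above worries about.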

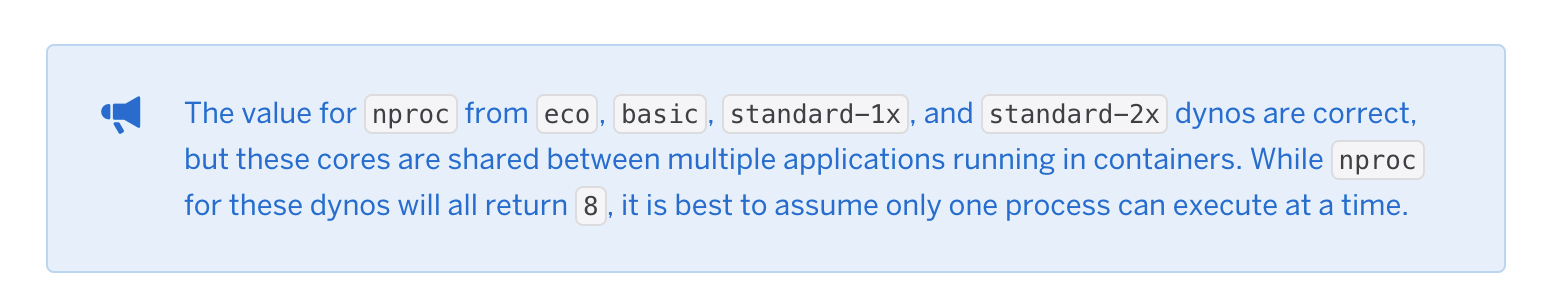

Looks like I misspoke earlier: the Free (now Eco) dynos have 1 CPU, which means this change does nothing for Eco dynos.

No, it has 1 dyno, 4 physical cores, and 4 virtual cores, but I'm not sure whether you'd see 4 or 8 using the method …
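
Whether you see 4 or 8 comes down to logical versus physical processors: on hosts with SMT/hyper-threading the logical count is typically higher. A small sketch for checking what a given host reports; `Etc.nprocessors` is Ruby's standard library, and the concurrent-ruby calls are only used if that gem happens to be installed (the `processor_report` name is hypothetical):

```ruby
require "etc"

# Hypothetical diagnostic: collect the processor counts visible to this
# Ruby process from the stdlib and, if available, from concurrent-ruby.
def processor_report
  report = { "Etc.nprocessors" => Etc.nprocessors }

  begin
    require "concurrent"
    report["Concurrent.processor_count"] = Concurrent.processor_count
    report["Concurrent.physical_processor_count"] = Concurrent.physical_processor_count
  rescue LoadError
    # concurrent-ruby is not installed; only the stdlib value is reported.
  end

  report
end

processor_report.each { |name, count| puts "#{name}: #{count}" }
```

Running this on the dyno itself is the most reliable way to settle the question, since the values depend on the host and on any container limits.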

FWIW, on Heroku, with a …

I don't have a strong opinion on changing this default, but I do think it may be slightly problematic for Heroku users. Perhaps Heroku could update their documentation, or set a default ENV var for you. The Rails Guides could also detail some caveats, and it may be worth adding a comment to this template file for clarity.
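
A minimal sketch of the "ENV var plus explanatory comment" idea, assuming a hypothetical `puma_worker_count` helper (this is not the code from this PR): an explicit WEB_CONCURRENCY always wins, and only when it is unset does the detected processor count apply. The result would then be handed to Puma's `workers` setting.

```ruby
require "etc"

# Hypothetical helper for a generated config/puma.rb. An explicit
# WEB_CONCURRENCY always wins; otherwise fall back to the detected
# processor count. Set WEB_CONCURRENCY on memory-constrained hosts
# (for example small PaaS plans) where one worker per CPU may not fit in RAM.
def puma_worker_count(env = ENV, detected: Etc.nprocessors)
  raw = env["WEB_CONCURRENCY"]
  count = (raw.nil? || raw.strip.empty?) ? detected : Integer(raw, 10)
  raise ArgumentError, "WEB_CONCURRENCY must be >= 1, got #{count}" if count < 1

  count
end

count = puma_worker_count
# In config/puma.rb one would then call `workers count` only when count > 1,
# so single-CPU hosts (such as the 1-CPU Eco dynos mentioned above) keep
# running Puma in single mode.
puts "Puma workers: #{count}"
```

Letting the environment override the detected value keeps the default useful on larger machines while giving small-plan users a one-line escape hatch.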

That's the point 👍. On Heroku, you can set …

ℹ️ This could also be a problem in K8s deployments, where scaling should be done through pods, not by running daemons and multiple processes.

Oh, interesting about Heroku's SENSIBLE_DEFAULTS; I hadn't seen that before. Thanks for the tip. In that case, it looks like 2 is Heroku's recommended WEB_CONCURRENCY for a Standard-1X dyno. (I'm using Falcon instead of Puma, and Falcon wants 1 process per CPU.) I still think it may be fine to change this default, but some additional documentation for cloud hosting providers would be nice to have.

Scaling with pods alone means missing out on the memory savings from copy-on-write. I'm no K8s expert, but does it really matter, from a container's perspective, whether a single worker or a master process with forked workers is accepting requests? Based on puma/puma#2645 (comment), it doesn't seem to matter.
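
For readers less familiar with the model being compared: a minimal pre-fork sketch (Unix only, since it relies on `Process.fork`), where a master prepares data once and forks workers that inherit it. This is the arrangement in which copy-on-write sharing can happen; how much memory actually stays shared depends on the Ruby version and on GC activity in the children. The `prefork` name is hypothetical and this is not how Puma is implemented internally.

```ruby
# Hypothetical pre-fork sketch: the master builds data once, then forks
# workers that inherit it. Pages a worker only reads can stay shared
# copy-on-write with the master until something writes to them.
def prefork(worker_count, shared_data)
  reader, writer = IO.pipe

  pids = Array.new(worker_count) do |index|
    Process.fork do
      reader.close
      # The worker reads the inherited data; it never loaded it itself.
      writer.puts("#{index}:#{shared_data.size}")
      writer.close
      exit!(0) # skip at_exit handlers inherited from the master
    end
  end

  writer.close
  pids.each { |pid| Process.wait(pid) }
  lines = reader.read.split("\n")
  reader.close
  lines.sort
end

shared = Array.new(100_000) { |i| i } # stand-in for a preloaded app
puts prefork(3, shared)
```

Scaling purely through single-process pods would load that data once per pod instead of once per group of forked workers.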

This implementation doesn't return the right values when running in a container (it will always return 1). To handle the container case, the code should look at …

For this specific PR, choosing to use the …
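
One possible approach to the container case (not necessarily what the commenter had in mind) is to read the CPU quota from cgroups. A minimal sketch, assuming cgroup v2 mounted at `/sys/fs/cgroup`, where `cpu.max` holds either `"<quota> <period>"` in microseconds or `"max <period>"` when no limit is set; the helper names are hypothetical, and cgroup v1 would need `cpu.cfs_quota_us` / `cpu.cfs_period_us` instead:

```ruby
require "etc"

# Hypothetical helper: convert a cgroup v2 `cpu.max` value into a CPU count.
# Returns nil when no quota is set ("max") or the content is empty.
def cpus_from_cpu_max(content)
  quota, period = content.split
  return nil if quota.nil? || period.nil? || quota == "max"

  # "200000 100000" means 2 CPUs' worth of time per period; round up so a
  # fractional quota such as 1.5 CPUs still yields 2.
  (Integer(quota, 10).to_f / Integer(period, 10)).ceil
end

# Prefer the container's quota when one is set, but never exceed the
# processor count Ruby reports, and never go below 1.
def container_cpu_count(path = "/sys/fs/cgroup/cpu.max")
  host_cpus = Etc.nprocessors
  quota_cpus = File.exist?(path) ? cpus_from_cpu_max(File.read(path)) : nil
  count = quota_cpus ? [quota_cpus, host_cpus].min : host_cpus
  [count, 1].max
end

puts container_cpu_count
```

Rounding up is a design choice; rounding down would be more conservative about memory and CPU contention.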

The old default was 1, so that would amount to the same thing. But it would be great if we could fix it so that, by default, we use all available cores inside a container too. Please do investigate a patch ✌️

Would you mind reporting this at https://github.com/ruby-concurrency/concurrent-ruby/issues/new?

I just tried to replicate the problem, but couldn't. |

Use all of the host's processor capacity by default in production.