An implementation of the paper by Huang et al., [Arbitrary Style Transfer in Real-time with Adaptive Instance Normalization](https://arxiv.org/pdf/1703.06868.pdf), written in TensorFlow 2.0.

The code borrows elements from the following GitHub posts:

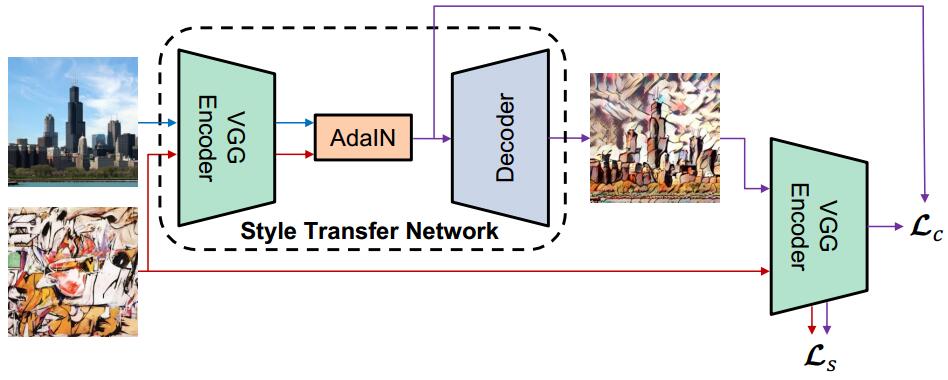

The style transfer network follows an encoder-decoder architecture, with an Adaptive Instance Normalization (AdaIN) layer between the encoder and the decoder.
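As a rough illustration of what the AdaIN layer computes, here is a minimal NumPy sketch. It is not the code from this repository: the function name `adain`, the channels-last `(N, H, W, C)` layout, and the `eps` value are assumptions made for the example. The operation itself follows the paper: the content features are normalized per channel, then rescaled and shifted to the per-channel statistics of the style features.

```python
import numpy as np


def adain(content, style, eps=1e-5):
    """Adaptive Instance Normalization on feature maps of shape (N, H, W, C).

    The layout and eps are assumptions for this sketch.
    """
    # Per-sample, per-channel statistics over the spatial axes.
    c_mean = content.mean(axis=(1, 2), keepdims=True)
    c_std = np.sqrt(content.var(axis=(1, 2), keepdims=True) + eps)
    s_mean = style.mean(axis=(1, 2), keepdims=True)
    s_std = np.sqrt(style.var(axis=(1, 2), keepdims=True) + eps)

    # Normalize the content features, then apply the style statistics.
    return s_std * (content - c_mean) / c_std + s_mean
```

The content and style feature maps may have different spatial sizes, since only the per-channel mean and standard deviation of the style features are used.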

A normalized, pretrained VGG19 model is used as the encoder for this network. Its weights can be found here:

Pre-trained VGG19 normalised network npz format (MD5 c5c961738b134ffe206e0a552c728aea)
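To check that the downloaded weights match the MD5 listed above, something like the following sketch can be used. The helper name `md5_of_file` and the file name in the usage comment are hypothetical; only the checksum comes from this README.

```python
import hashlib

# Checksum listed in this README for the normalised VGG19 weights.
EXPECTED_MD5 = "c5c961738b134ffe206e0a552c728aea"


def md5_of_file(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Usage (the file name is an assumption, adjust it to your download):
# assert md5_of_file("vgg19_normalised.npz") == EXPECTED_MD5
```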

The network is trained using the MS-COCO dataset for the content images and the WikiArt dataset for the style images.

- TensorFlow 2.0

- NumPy

- OpenCV

- tqdm

A style weight of 1e-1 was used initially, as it is the value used in the code for the original paper. I found these results to be less colorful than I wanted, so I experimented with a color loss to train the network to mimic the colors of the style image better. This did work; however, I got better results simply by increasing the style weight to 2.0.
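To show where the style weight enters, here is a minimal NumPy sketch of the loss described in the paper: a content loss on the encoded output plus a weighted style loss that matches per-channel means and standard deviations across several encoder layers. The function names, the use of mean squared error, and the `(N, H, W, C)` layout are assumptions for this example, and the color loss mentioned above is not included.

```python
import numpy as np


def channel_stats(feat, eps=1e-5):
    """Per-sample, per-channel mean and std of a (N, H, W, C) feature map."""
    mean = feat.mean(axis=(1, 2))
    std = np.sqrt(feat.var(axis=(1, 2)) + eps)
    return mean, std


def content_loss(output_feat, target_feat):
    """MSE between the encoded output and the AdaIN target features."""
    return float(np.mean((output_feat - target_feat) ** 2))


def style_loss(output_feats, style_feats):
    """Sum over layers of the MSE between channel means and channel stds."""
    loss = 0.0
    for out, sty in zip(output_feats, style_feats):
        o_mean, o_std = channel_stats(out)
        s_mean, s_std = channel_stats(sty)
        loss += np.mean((o_mean - s_mean) ** 2) + np.mean((o_std - s_std) ** 2)
    return float(loss)


def total_loss(output_feat, target_feat, output_feats, style_feats,
               style_weight=2.0):
    """Content loss plus style_weight times the style loss."""
    return (content_loss(output_feat, target_feat)
            + style_weight * style_loss(output_feats, style_feats))
```

Raising `style_weight` from 1e-1 to 2.0 makes the style term dominate more strongly, which is consistent with the more colorful results described above.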