To run VINS-Mono with a MYNT EYE camera, follow these steps:

If you use a MYNTEYE-S or MYNTEYE-D camera, first download MYNT-EYE-S-SDK or MYNT-EYE-D-SDK respectively, then build its ROS wrapper (make ros on mynteye-s / make ros on mynteye-d).

cd ~

wget https://raw.githubusercontent.com/oroca/oroca-ros-pkg/master/ros_install.sh && chmod 755 ./ros_install.sh && bash ./ros_install.sh catkin_ws kinetic

sudo apt-get update

sudo apt-get install apt-transport-https ca-certificates curl gnupg-agent software-properties-common

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -

sudo add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"

sudo apt-get update

sudo apt-get install docker-ce docker-ce-cli containerd.io

Then add your account to the docker group with sudo usermod -aG docker $YOUR_USER_NAME. If you get a Permission denied error, relaunch the terminal, or log out and log back in.

To compile with Docker, we recommend more than 16 GB of RAM, or at least that RAM and virtual memory together exceed 16 GB.
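The memory guideline above can be checked before starting the build. The following is a minimal sketch, assuming a Linux host that exposes /proc/meminfo and reading "16G" as 16 GiB; the function names are hypothetical and not part of this repository.

```python
import os
import re

REQUIRED_GIB = 16  # guideline from the text, read here as GiB (assumption)


def ram_plus_swap_gib(meminfo_text):
    """Return MemTotal + SwapTotal in GiB from /proc/meminfo-style text."""
    total_kib = 0
    for key in ("MemTotal", "SwapTotal"):
        # /proc/meminfo reports these fields in kB (KiB), one per line.
        match = re.search(rf"^{key}:\s+(\d+)\s+kB", meminfo_text, re.MULTILINE)
        if match:
            total_kib += int(match.group(1))
    return total_kib / (1024 ** 2)


def enough_memory(meminfo_text, required_gib=REQUIRED_GIB):
    """True if RAM plus swap is strictly greater than the required amount."""
    return ram_plus_swap_gib(meminfo_text) > required_gib


if __name__ == "__main__" and os.path.exists("/proc/meminfo"):
    with open("/proc/meminfo") as f:
        text = f.read()
    print(f"RAM + swap: {ram_plus_swap_gib(text):.1f} GiB")
    print("meets guideline" if enough_memory(text) else "consider adding swap")
```

If the check fails, adding swap space is one way to satisfy the "RAM and virtual memory" condition.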

First make sure ROS and Docker are installed on your machine, then run:

git clone -b docker_feat https://github.com/slightech/MYNT-EYE-VINS-Sample.git

cd MYNT-EYE-VINS-Sample/docker

make build

Note that the Docker build may take a while, depending on your network and machine. After VINS-Mono has started successfully, open another terminal and play your bag file; you should then see the result. If you need to modify the code, simply run ./run.sh LAUNCH_FILE_NAME again after making your changes.

When using a mynteye-s device:

cd (local path of MYNT-EYE-S-SDK)

source ./wrappers/ros/devel/setup.bash

roslaunch mynt_eye_ros_wrapper vins_mono.launch

Open another terminal

cd path/to/MYNT-EYE-VINS-Sample/docker

./run.sh mynteye_s.launch

When using a mynteye-s2100 device:

cd (local path of MYNT-EYE-S-SDK)

source ./wrappers/ros/devel/setup.bash

roslaunch mynt_eye_ros_wrapper vins_mono.launch

Open another terminal

cd path/to/MYNT-EYE-VINS-Sample/docker

./run.sh mynteye_s2100.launch

When using a mynteye-s2110 device:

cd (local path of MYNT-EYE-S-SDK)

source ./wrappers/ros/devel/setup.bash

roslaunch mynt_eye_ros_wrapper vins_mono.launch

Open another terminal

cd path/to/MYNT-EYE-VINS-Sample/docker

./run.sh mynteye_s2110.launch

When using a mynteye-d device:

cd (local path of MYNT-EYE-D-SDK)

source ./wrappers/ros/devel/setup.bash

roslaunch mynteye_wrapper_d vins_mono.launch stream_mode:=0

Open another terminal

cd path/to/MYNT-EYE-VINS-Sample/docker

./run.sh mynteye_d.launch

11 Jan 2019: An extension of VINS, which supports stereo cameras / stereo cameras + IMU / mono camera + IMU, is published at VINS-Fusion

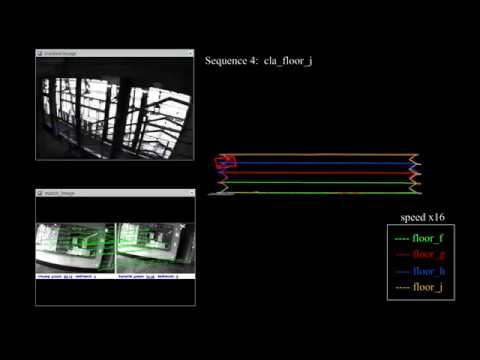

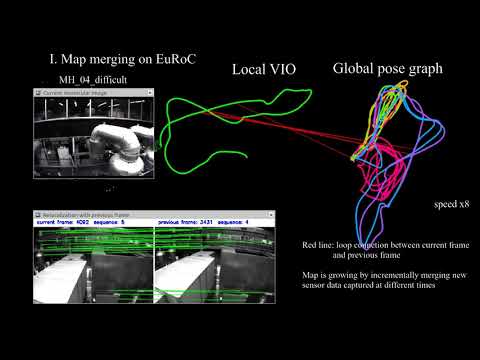

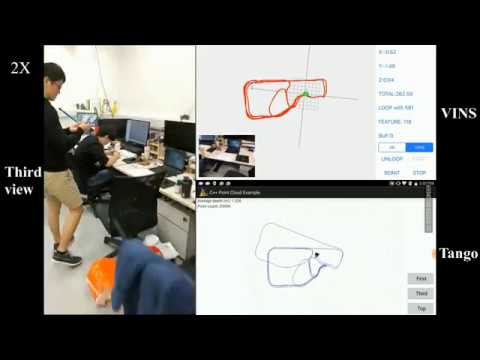

29 Dec 2017: New features: map merge, pose graph reuse, online temporal calibration, and rolling shutter camera support. Map reuse videos:

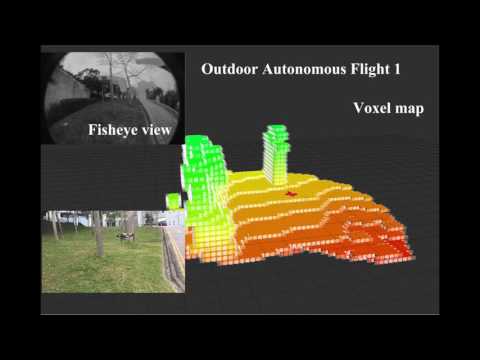

VINS-Mono is a real-time SLAM framework for Monocular Visual-Inertial Systems. It uses an optimization-based sliding window formulation to provide high-accuracy visual-inertial odometry. It features efficient IMU pre-integration with bias correction, automatic estimator initialization, online extrinsic calibration, failure detection and recovery, loop detection, global pose graph optimization, map merge, pose graph reuse, online temporal calibration, and rolling shutter support. VINS-Mono is primarily designed for state estimation and feedback control of autonomous drones, but it is also capable of providing accurate localization for AR applications. This code runs on Linux and is fully integrated with ROS. For the iOS mobile implementation, please go to VINS-Mobile.

Authors: Tong Qin, Peiliang Li, Zhenfei Yang, and Shaojie Shen from the HKUST Aerial Robotics Group

Videos:

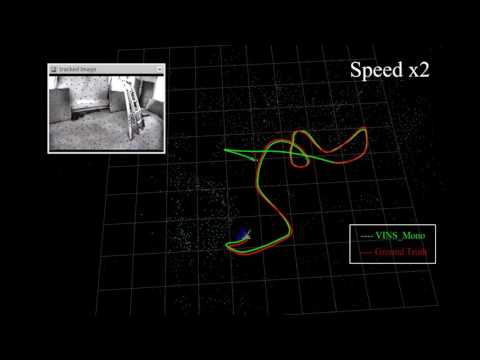

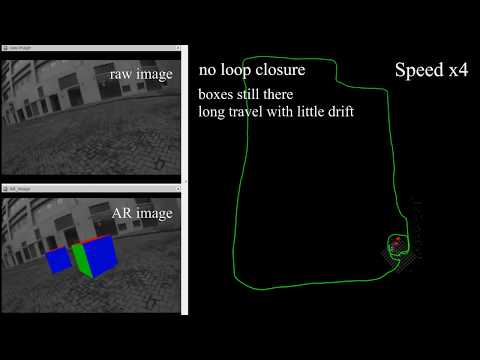

EuRoC dataset; Indoor and outdoor performance; AR application;

MAV application; Mobile implementation (Video link for mainland China friends: Video1 Video2 Video3 Video4 Video5)

Related Papers

- Online Temporal Calibration for Monocular Visual-Inertial Systems, Tong Qin, Shaojie Shen, IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS, 2018), best student paper award. pdf

- VINS-Mono: A Robust and Versatile Monocular Visual-Inertial State Estimator, Tong Qin, Peiliang Li, Zhenfei Yang, Shaojie Shen, IEEE Transactions on Robotics. pdf

If you use VINS-Mono for your academic research, please cite at least one of our related papers (bib).

1.1 Ubuntu and ROS: Ubuntu 16.04 with ROS Kinetic (see ROS Installation). Additional ROS packages:

sudo apt-get install ros-YOUR_DISTRO-cv-bridge ros-YOUR_DISTRO-tf ros-YOUR_DISTRO-message-filters ros-YOUR_DISTRO-image-transport

1.2 Ceres Solver: Follow Ceres Installation, and remember to run make install. (Our testing environment: Ubuntu 16.04, ROS Kinetic, OpenCV 3.3.1, Eigen 3.3.3)

Clone the repository and catkin_make:

cd ~/catkin_ws/src

git clone https://github.com/HKUST-Aerial-Robotics/VINS-Mono.git

cd ../

catkin_make

source ~/catkin_ws/devel/setup.bash

Download EuRoC MAV Dataset. Although it contains stereo cameras, we only use one camera. The system also works with ETH-asl cla dataset. We take EuRoC as the example.

3.1 visual-inertial odometry and loop closure

3.1.1 Open three terminals and, in each one respectively, launch vins_estimator, launch rviz, and play the bag file. Taking MH_01 as an example:

roslaunch vins_estimator euroc.launch

roslaunch vins_estimator vins_rviz.launch

rosbag play YOUR_PATH_TO_DATASET/MH_01_easy.bag

(If vins_rviz.launch fails to open, start an empty rviz and load the config file: File -> Open Config -> YOUR_VINS_FOLDER/config/vins_rviz_config.rviz)

3.1.2 (Optional) Visualize ground truth. We provide a naive benchmark publisher to help you visualize the ground truth. It uses a naive strategy to align VINS with the ground truth, so it is meant for visualization only, not for quantitative comparison in academic publications.

roslaunch benchmark_publisher publish.launch sequence_name:=MH_05_difficult

(Green line is VINS result, red line is ground truth).

3.1.3 (Optional) You can even run EuRoC without extrinsic parameters between camera and IMU. We will calibrate them online. Replace the first command with:

roslaunch vins_estimator euroc_no_extrinsic_param.launch

That config file contains no extrinsic parameters. Wait a few seconds for the initial calibration. Sometimes you will not notice any difference, because the calibration finishes quickly.

3.2 map merge

After playing MH_01 bag, you can continue playing MH_02 bag, MH_03 bag ... The system will merge them according to the loop closure.

3.3 map reuse

3.3.1 map save

Set pose_graph_save_path in the config file (YOUR_VINS_FOLDER/config/euroc/euroc_config.yaml). After playing the MH_01 bag, type s in the vins_estimator terminal and press Enter. The current pose graph will be saved.

3.3.2 map load

Set load_previous_pose_graph to 1 before doing 3.1.1. The system will load the previous pose graph from pose_graph_save_path. Then you can play the MH_02 bag. The new sequence will be aligned to the previous pose graph.

4.1 Download the bag file, which was collected at HKUST Robotic Institute. For friends in mainland China, download from bag file.

4.2 Open three terminals and, in each one respectively, launch ar_demo, launch rviz, and play the bag file.

roslaunch ar_demo 3dm_bag.launch

roslaunch ar_demo ar_rviz.launch

rosbag play YOUR_PATH_TO_DATASET/ar_box.bag

We put one 0.8m x 0.8m x 0.8m virtual box in front of your view.

If you are familiar with ROS and have a camera and an IMU that publish raw metric measurements on ROS topics, you can follow these steps to set up your device. For beginners, we highly recommend first trying VINS-Mobile if you have an iOS device, since it requires no setup.

5.1 Change the topic names in the config file to your own. The image rate should exceed 20 Hz and the IMU rate should exceed 100 Hz. Both image and IMU messages should carry accurate time stamps. The IMU should report absolute acceleration values, including gravity.
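The rate requirements in 5.1 can be checked offline from recorded message time stamps. The sketch below is illustrative only: the function names are hypothetical, and it assumes you have already extracted the time stamps (in seconds) from your topics. On a live system, rostopic hz reports a comparable average rate.

```python
MIN_IMAGE_HZ = 20.0   # threshold from step 5.1
MIN_IMU_HZ = 100.0    # threshold from step 5.1


def mean_rate_hz(stamps):
    """Average message rate in Hz from an ordered list of time stamps in seconds."""
    if len(stamps) < 2:
        raise ValueError("need at least two time stamps")
    duration = stamps[-1] - stamps[0]
    if duration <= 0:
        raise ValueError("time stamps must be increasing")
    # N stamps span N - 1 intervals.
    return (len(stamps) - 1) / duration


def rates_ok(image_stamps, imu_stamps):
    """True if both streams exceed the thresholds stated in step 5.1."""
    return (mean_rate_hz(image_stamps) > MIN_IMAGE_HZ
            and mean_rate_hz(imu_stamps) > MIN_IMU_HZ)


if __name__ == "__main__":
    image = [i / 30.0 for i in range(31)]    # synthetic 30 Hz camera
    imu = [i / 200.0 for i in range(201)]    # synthetic 200 Hz IMU
    print(mean_rate_hz(image), mean_rate_hz(imu), rates_ok(image, imu))
```

An average rate hides dropped frames and jitter, so it is worth also inspecting the largest gap between consecutive stamps.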

5.2 Camera calibration:

We support the pinhole model and the MEI model. You can calibrate your camera with any tool you like; just write the parameters into the config file in the right format. If you use a rolling shutter camera, calibrate it carefully and make sure the reprojection error is less than 0.5 pixel.

5.3 Camera-Imu extrinsic parameters:

If you have looked at the config files for the EuRoC and AR demos, you will have seen that the extrinsic parameters can be estimated and refined online. If you are familiar with rigid-body transformations, you can estimate the rotation and position by eye or by hand measurement, then write these values into the config as the initial guess; our estimator will refine them online. If you know nothing about the camera-IMU transformation, ignore the extrinsic parameters, set estimate_extrinsic to 2, and rotate your device for a few seconds at the beginning. Once the system runs successfully, the calibration result is saved, and you can use it as the initial value next time. An example of how to set the extrinsic parameters is in extrinsic_parameter_example.
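When writing a hand-measured rotation into the config as an initial guess, it helps to confirm that the matrix is a valid rotation (orthonormal, determinant +1). The following is a minimal sketch in plain Python; the function name and tolerance are assumptions for illustration and are not part of VINS-Mono.

```python
def is_rotation_matrix(R, tol=1e-6):
    """Check that R (3x3 nested lists) is orthonormal with determinant +1."""
    # Rows must be unit length and mutually orthogonal: R * R^T == I.
    for i in range(3):
        for j in range(3):
            dot = sum(R[i][k] * R[j][k] for k in range(3))
            expected = 1.0 if i == j else 0.0
            if abs(dot - expected) > tol:
                return False
    # Determinant +1 rules out reflections.
    det = (R[0][0] * (R[1][1] * R[2][2] - R[1][2] * R[2][1])
           - R[0][1] * (R[1][0] * R[2][2] - R[1][2] * R[2][0])
           + R[0][2] * (R[1][0] * R[2][1] - R[1][1] * R[2][0]))
    return abs(det - 1.0) <= tol


if __name__ == "__main__":
    # Example guess: a 90 degree rotation about the z axis.
    guess = [[0.0, -1.0, 0.0],
             [1.0, 0.0, 0.0],
             [0.0, 0.0, 1.0]]
    print(is_rotation_matrix(guess))
```

A guess that fails this check usually has a swapped axis or a sign error, which is easier to fix before the estimator starts refining it.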

5.4 Temporal calibration: Most self-made visual-inertial sensor sets are unsynchronized. Set estimate_td to 1 to estimate the time offset between your camera and IMU online.
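To illustrate what a camera-IMU time offset means in practice, the sketch below shifts an image time stamp by an offset td and finds the IMU samples that bracket it. The sign convention (image clock + td = IMU clock) is an assumption here, so check the comments in your config file; the function names are hypothetical.

```python
from bisect import bisect_right


def image_time_in_imu_clock(image_stamp, td):
    """Shift a camera time stamp by td seconds (assumed: image clock + td = IMU clock)."""
    return image_stamp + td


def bracketing_imu_indices(imu_stamps, t):
    """Return (i, i + 1) such that imu_stamps[i] <= t < imu_stamps[i + 1]."""
    i = bisect_right(imu_stamps, t)
    if i == 0 or i == len(imu_stamps):
        raise ValueError("time lies outside the IMU time stamp range")
    return i - 1, i


if __name__ == "__main__":
    imu_stamps = [0.00, 0.01, 0.02, 0.03]   # synthetic 100 Hz IMU
    image_stamp = 0.010
    print(bracketing_imu_indices(imu_stamps, image_stamp))
    print(bracketing_imu_indices(
        imu_stamps, image_time_in_imu_clock(image_stamp, td=0.012)))
```

Even an offset of a few milliseconds changes which IMU samples are associated with a frame, which is why unsynchronized sensor sets benefit from estimating it.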

5.5 Rolling shutter: For a rolling shutter camera (carefully calibrated, reprojection error under 0.5 pixel), set rolling_shutter to 1. You should also set the rolling shutter readout time rolling_shutter_tr, taken from the sensor datasheet (usually 0-0.05 s; this is not the exposure time). Don't try a web camera; web cameras perform too poorly.
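The readout time matters because each image row of a rolling shutter camera is captured at a slightly different instant. The sketch below assumes a simple linear model in which rows are read top to bottom at a constant rate; this model and the function name are illustrative assumptions, not a description of the estimator's internals.

```python
def row_time_offset(row, image_height, readout_time):
    """Seconds after the first row at which `row` is read out (linear readout model)."""
    if not 0 <= row < image_height:
        raise ValueError("row is outside the image")
    return readout_time * row / image_height


if __name__ == "__main__":
    # Hypothetical 480-row sensor with a 0.03 s readout time.
    for row in (0, 240, 479):
        print(row, row_time_offset(row, image_height=480, readout_time=0.03))
```

Under this model a feature in the middle of the image is observed about half a readout time later than one in the top row, which is why the exposure time is not a substitute for the readout time.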

5.6 Other parameter settings: Details are included in the config file.

5.7 Performance on different devices:

(global shutter camera + synchronized high-end IMU, e.g. VI-Sensor) > (global shutter camera + synchronized low-end IMU) > (global shutter camera + unsynchronized high-frequency IMU) > (global shutter camera + unsynchronized low-frequency IMU) > (rolling shutter camera + unsynchronized low-frequency IMU).

To further simplify the build process, we provide Docker support in our code. A Docker environment works like a sandbox, which makes our code environment-independent. To run with Docker, first make sure ROS and Docker are installed on your machine. Then add your account to the docker group with sudo usermod -aG docker $YOUR_USER_NAME. If you get a Permission denied error, relaunch the terminal, or log out and log back in. Then run:

cd ~/catkin_ws/src/VINS-Mono/docker

make build

./run.sh LAUNCH_FILE_NAME # ./run.sh euroc.launch

Note that the Docker build may take a while, depending on your network and machine. After VINS-Mono has started successfully, open another terminal and play your bag file; you should then see the result. If you need to modify the code, simply run ./run.sh LAUNCH_FILE_NAME again after making your changes.

We use Ceres Solver for non-linear optimization, DBoW2 for loop detection, and a generic camera model.

The source code is released under GPLv3 license.

We are still working on improving the code reliability. For any technical issues, please contact Tong QIN <tong.qinATconnect.ust.hk> or Peiliang LI <pliapATconnect.ust.hk>.

For commercial inquiries, please contact Shaojie SHEN <eeshaojieATust.hk>