SimpMessagingTemplate.convertAndSend causes hanging thread #26464

I can't tell what's going on, but the Future it's waiting on should be from the send through the ReactorNettyTcpConnection. It sounds like the send through the Reactor Netty TcpClient perhaps doesn't complete. Not sure why, though. @violetagg, any idea what this might mean, i.e. why the

@hurahurat Can you provide some wire logging?

Unfortunately, I had turned off all WebSocket logging this time, so I cannot provide any helpful logs about this problem.

If you would like us to look at this issue, please provide the requested information. If the information is not provided within the next 7 days, this issue will be closed.

Closing due to lack of requested feedback. If you would like us to look at this issue, please provide the requested information and we will re-open the issue.

We're using Spring Boot 2.4.5 with ActiveMQ as the message broker, and we're experiencing similar issues. The fault happens at random for us, but running a test with

@violetagg, what do you mean by wire logging? Are we talking about Wireshark, or something else?

@boginw Please check this for TcpClient wire logging: https://projectreactor.io/docs/netty/release/reference/index.html#client-tcp-level-configurations-event-wire-logger
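Applied to the STOMP broker relay, a minimal sketch could look like the one below. Note that a `WebServerFactoryCustomizer<NettyReactiveWebServerFactory>` configures the embedded HTTP server, not the `TcpClient` the relay uses to talk to the broker, so the wiretap has to go on the relay's own client. Assumptions, all hypothetical: the class name `StompRelayWiretapConfig`, the broker's STOMP endpoint at `localhost:61613`, the destination prefixes, and the logger category `stomp-relay-wire`. When a custom client is passed to `setTcpClient`, the relay host/port settings are ignored, which is why host and port are set on the `TcpClient` here.

```java
import io.netty.handler.logging.LogLevel;
import org.springframework.context.annotation.Configuration;
import org.springframework.messaging.simp.config.MessageBrokerRegistry;
import org.springframework.messaging.simp.stomp.StompReactorNettyCodec;
import org.springframework.messaging.tcp.reactor.ReactorNettyTcpClient;
import org.springframework.web.socket.config.annotation.EnableWebSocketMessageBroker;
import org.springframework.web.socket.config.annotation.WebSocketMessageBrokerConfigurer;
import reactor.netty.transport.logging.AdvancedByteBufFormat;

@Configuration
@EnableWebSocketMessageBroker
public class StompRelayWiretapConfig implements WebSocketMessageBrokerConfigurer {

    @Override
    public void configureMessageBroker(MessageBrokerRegistry registry) {
        // Assumed broker address; replace with your broker's STOMP host and port.
        ReactorNettyTcpClient<byte[]> tcpClient = new ReactorNettyTcpClient<>(
                client -> client
                        .host("localhost")
                        .port(61613)
                        // Hypothetical category; enable it with logging.level.stomp-relay-wire=DEBUG
                        .wiretap("stomp-relay-wire", LogLevel.DEBUG, AdvancedByteBufFormat.TEXTUAL),
                new StompReactorNettyCodec());

        // Assumed destination prefixes; the custom client replaces the relay's default one.
        registry.enableStompBrokerRelay("/topic", "/queue")
                .setTcpClient(tcpClient);
    }
}
```

With `logging.level.stomp-relay-wire=DEBUG` in the properties file, the relay's READ/WRITE/FLUSH events should then appear under that category.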

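@violetagg I'm unsure how to enable the wire logging you mentioned in a Spring Boot application. I have tried using what you mentioned in this other issue, but to no avail. The project uses

```java
import io.netty.handler.logging.LogLevel;
import org.springframework.boot.web.embedded.netty.NettyReactiveWebServerFactory;
import org.springframework.boot.web.server.WebServerFactoryCustomizer;
import org.springframework.stereotype.Component;
import reactor.netty.transport.logging.AdvancedByteBufFormat;

@Component
public class NettyWebServerCustomizer
        implements WebServerFactoryCustomizer<NettyReactiveWebServerFactory> {

    @Override
    public void customize(NettyReactiveWebServerFactory factory) {
        // Enables wire logging on the embedded HTTP server under the "cool-logger" category
        factory.addServerCustomizers(httpServer -> httpServer.wiretap(
                "cool-logger",
                LogLevel.DEBUG,
                AdvancedByteBufFormat.TEXTUAL
        ));
    }
}
```

and in my properties file, I have

```properties
logging.level.root=DEBUG
logging.level.reactor.netty=trace
logging.level.cool-logger=DEBUG
```

But my logs do not contain any matches for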

@boginw If this is not working, please open an issue in Reactor Netty with a reproducible example. Thanks.

Same exact problem and thread dump here. Did anyone figure out any clues as to why this is happening? The only suspicious thing in my case is that the thread trying to write to the socket is a RabbitMQ listener container thread, so it's not one of my app's threads but a Rabbit listener trying to send something back into RabbitMQ (via STOMP). Here's the thread dump:

I just managed to reproduce the exact same problem when a different thread writes to the wire, so it's not RabbitMQ-related. Is SimpMessagingTemplate (and all its dependencies down to Netty) thread-safe? WebsocketClient certainly is not, so if something in between is not synchronizing, that could explain what we're seeing here. Here's the second thread, with exactly the same behavior (hanging on a future):

The issue has nothing to do with the thread safety of SimpMessagingTemplate. I've funneled all writes to it through a dedicated thread, and the only difference is that now this thread hangs, waiting on the Netty future that never resolves. It reproduces easily after a few tries with intense, simultaneous bidirectional traffic on the wire (server to client and client to server).

@alekol, see #26464 (comment).

Yeah, I was trying for an hour to get the Netty logs in place for a Spring Boot app with Log4j2, and all the examples where the Netty client/server is instantiated manually are of no help. At this point it's probably easier to build Netty from source and debug :/ I'll try to reach out to their community; it could be a known problem. It's just too easy to reproduce.

It turns out that SimpMessagingTemplate already supports sending with a timeout (SimpMessagingTemplate.setSendTimeout()), but this timeout does not seem to be taken into account in the implementation of StompBrokerRelayMessageHandler:SystemSessionConnectionHandler.forward(). With this in mind, it seems fair to expect this timeout to be respected in SystemSessionConnectionHandler::forward(). Would you consider a fix? If needed, I could draft the pull request.
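To illustrate the difference being discussed, here is a small standalone sketch using only `java.util.concurrent`. It is not the Spring implementation: `BoundedForward`, `sendThatNeverCompletes` and `forwardWithTimeout` are hypothetical names, and a never-completed `CompletableFuture` stands in for a send future whose completion is lost. An unbounded `get()` on such a future blocks the caller forever, while `get(timeout, unit)` bounded by a send timeout returns control to the caller.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

public class BoundedForward {

    // Hypothetical stand-in for a send future whose completion signal is lost:
    // nothing ever calls complete() on it.
    static CompletableFuture<Void> sendThatNeverCompletes() {
        return new CompletableFuture<>();
    }

    // Waits at most sendTimeoutMillis for the send to finish.
    // Returns true if the send completed, false if the timeout elapsed.
    // A plain sendFuture.get() would block the calling thread forever instead.
    static boolean forwardWithTimeout(CompletableFuture<Void> sendFuture, long sendTimeoutMillis) {
        try {
            sendFuture.get(sendTimeoutMillis, TimeUnit.MILLISECONDS);
            return true;
        } catch (TimeoutException ex) {
            // A real handler would probably log this and/or close the session.
            return false;
        } catch (InterruptedException ex) {
            Thread.currentThread().interrupt(); // preserve the interrupt status
            return false;
        } catch (ExecutionException ex) {
            throw new IllegalStateException("Send failed", ex.getCause());
        }
    }

    public static void main(String[] args) {
        System.out.println(forwardWithTimeout(sendThatNeverCompletes(), 200));               // false
        System.out.println(forwardWithTimeout(CompletableFuture.completedFuture(null), 200)); // true
    }
}
```

A timeout only bounds the damage: the message may still have been lost, so the caller has to decide what to do when `false` comes back.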

@alekol I prefer

I cannot provide an isolated repro of this, and I can't share the code of my project (IP and all) :/ What's causing the app-level deadlock is the unbounded future.get(), which does not respect the value set via template.setSendTimeout(). So a longer time delay is not going to solve anything for anyone. I realize the issue is hard to reproduce and probably lies beyond spring-messaging per se, but that doesn't make it any less real, nor does it make addressing the unbounded future.get() any less necessary in this case.

Just for some context, we have a failure rate of 0.5%. But given that we have around 2,500 tests, at least one test fails almost every time we run them.
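The arithmetic behind this, assuming the 0.5% is a per-test failure probability and that tests fail independently (both assumptions): the chance that a run of 2,500 tests has at least one failure is

$$
P(\text{at least one failure}) = 1 - (1 - 0.005)^{2500} = 1 - e^{2500 \ln 0.995} \approx 1 - e^{-12.53} \approx 1 - 3.6 \times 10^{-6}
$$

with about $2500 \times 0.005 = 12.5$ failing tests expected per run.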

@alekol, thanks for the wire logs. There are a couple of things I'm not able to make sense of.

You wrote that the last good write is at 09:27:01,287 and the last good read at 09:27:03,532. First, those are reversed (they are a read and a write, respectively), but more importantly, the READ is followed by READ_COMPLETE, the WRITE is followed by FLUSH, and there are many subsequent reads and writes on the same connection id

You wrote that once the issue happens, the app keeps receiving messages, but it can't write due to the unresolved future and blocked thread. If messages are sent through the

One suggestion for something to try: I'm wondering if the issue could be related to #25561, where the I/O works fine but the Future isn't getting completed correctly. One way to check is to try Spring Framework 5.2.15 (Boot 2.3.11, I think). Alternatively, you could undo 7758ba3 locally, i.e. by forking

As for the suggestion for sendTimeout, I think that's something we can consider, but I'd like to try to find out more about the root cause.

@rstoyanchev Please look at my log, especially the last successful write of 83 bytes at 2021-05-24 08:23:56.236. Yes, it has a FLUSH after it, and the same connection still has writes later on, but none for this 83-byte message. And that thread is no longer used; it's stuck forever. In a longer run, it's possible for all available threads to end up hanging (no writes performed). With Spring Boot 2.3.4, I had no issue with the same code. After upgrading to 2.4.x/2.5.x, it started to lock up.

@xor22h I did look at it, but it doesn't have wire logging (see the log by @alekol), and I don't see anything unusual there, just a sequence of writes and flushes. What other log messages are missing for the 83-byte message?

This is good to know about 2.3.4 vs 2.4/2.5. Would you be able to try a more isolated change along the lines of what I suggested:

@rstoyanchev Thanks for looking into this. You are correct: the NIO thread is not blocked, and it keeps sending/receiving keep-alives and other things. However, the writing thread is blocked because the future never gets completed (get() never returns), so the app's outbound traffic ultimately stalls in my case (all our wire-facing writes happen in a single thread). As for the suggestion to try out, I'll give it a whack tomorrow and post here.
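Why one lost completion stalls all outbound traffic when writes are funneled through a single thread can be sketched with a single-thread executor (standard `java.util.concurrent` only; `SingleWriterStall` and `secondWriteCompletesWithin` are hypothetical names). The first task blocks on an unbounded `get()` of a future that is never completed, like the unresolved send future described here, so a second, trivial write queued behind it never gets to run.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

public class SingleWriterStall {

    // Returns true if a second write, queued behind a stuck one, completes within waitMillis.
    static boolean secondWriteCompletesWithin(long waitMillis) {
        ExecutorService writer = Executors.newSingleThreadExecutor();
        // Stand-in for a send future that is never completed.
        CompletableFuture<Void> lostSend = new CompletableFuture<>();
        try {
            // First write: blocks the only writer thread on an unbounded get().
            writer.submit(() -> {
                lostSend.get();
                return null;
            });
            // Second write: trivial, but queued behind the stuck one.
            Future<?> second = writer.submit(() -> { });
            second.get(waitMillis, TimeUnit.MILLISECONDS);
            return true;
        } catch (TimeoutException ex) {
            return false;
        } catch (InterruptedException | ExecutionException ex) {
            throw new IllegalStateException(ex);
        } finally {
            // Interrupts the blocked get() so the writer thread can exit.
            writer.shutdownNow();
        }
    }

    public static void main(String[] args) {
        System.out.println(secondWriteCompletesWithin(300)); // false
    }
}
```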

Okay, if I/O keeps working, then an issue in the

@rstoyanchev I'm missing the log lines which contained

A minimal project where I can still see it reproducing: https://we.tl/t-YNoeojTPZy

Sometimes it happens very soon; sometimes it might take 10 minutes for it to happen.

@xor22h I change

In the CompletableToListenableFutureAdapter class, the whenComplete callback will never run if there is an exception in mono.toFuture() of the Mono class. In the onError method of the MonoToCompletableFuture class, I think ref.getAndSet(null) returns null, so completeExceptionally is never called, which causes future.get() to never resolve.

Nice find, @hurahurat! I'll try to see if this is indeed what's happening :)

I have created a simple project which can reproduce this problem easily. I will upload it here tomorrow, because right now I'm trying to find the root cause.

I put some breakpoints in to check whether it happens the way I thought, but it doesn't. I don't want to fork spring-websocket and reactor-netty, so it's better to wait for you. I have confirmed that it works normally with Spring Boot versions before 2.4.x.

I can confirm that on my machine the messages stop after ~1 sec with Spring Boot 2.5.1.

I reverted change 7758ba3 in Spring Boot 2.5.1 (Spring Framework 5.3.8) and found that it worked fine, although the thread was occasionally in a sleeping state during the test.

|

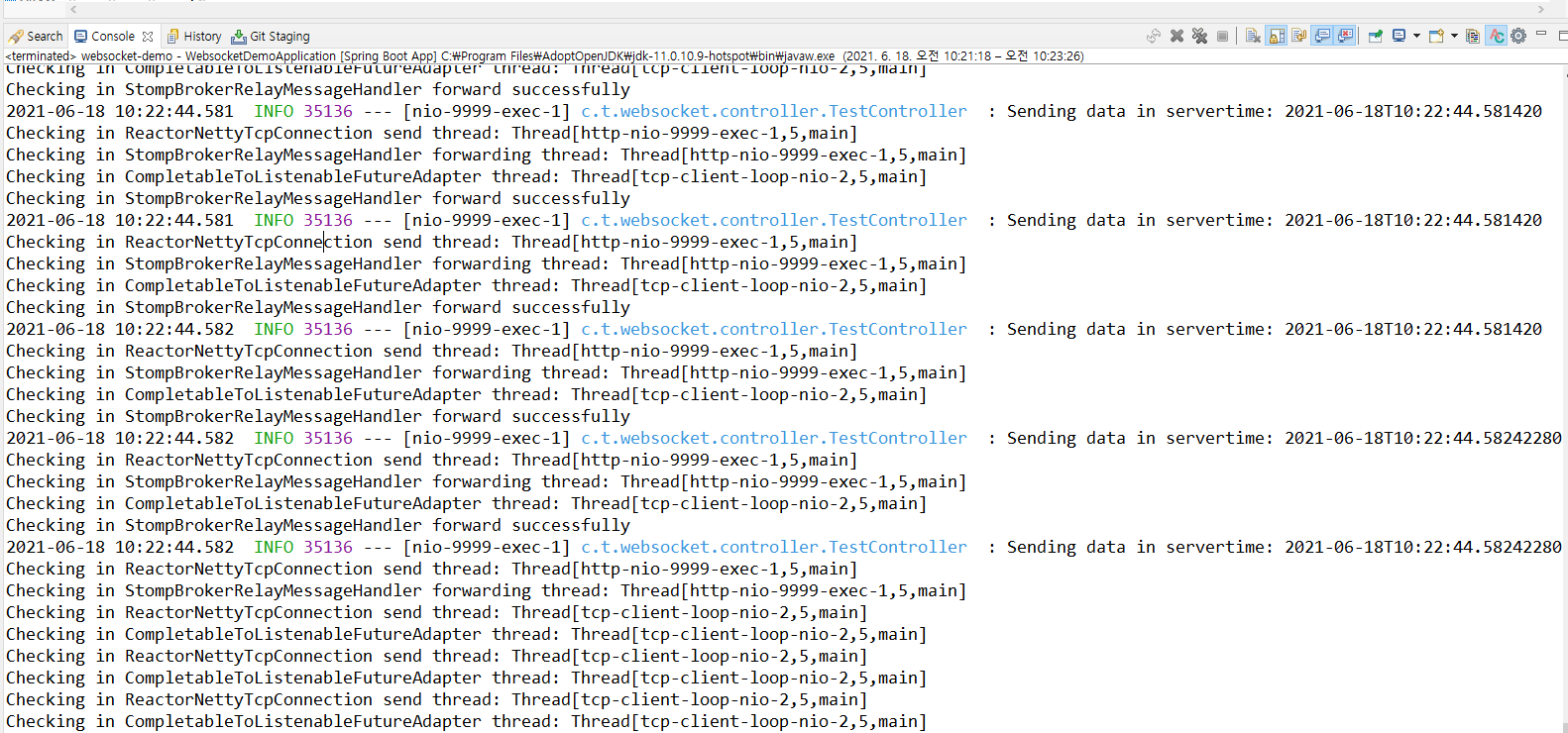

I added some logs to check and found that this problem appeared when send() method in ReactorNettyTcpConnection class is called at the same time. |

After adding more logs to reactor-core, I think I have found the root cause of this issue. It is about the order of execution. In the MonoToCompletableFuture class, the onComplete event is fired before the onSubscribe event, as you can see in the log lines below. I think this happens because CompletableFuture is not lazy by nature: the onComplete and onSubscribe events are executed by separate threads, and the onSubscribe event might take longer because of the message size and the encoding step.
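The race described here can be modeled without Reactor. The sketch below is a simplified, hypothetical bridge (`FutureBridge` and `LostCompletionDemo` are not reactor-core classes) built on the same kind of `AtomicReference` guard mentioned above: `onComplete` and `onError` only complete the future when `ref.getAndSet(null)` returns a non-null subscription. If `onComplete` is observed before `onSubscribe` has stored the subscription, the completion signal is dropped and the future is never completed, so any `get()` on it blocks forever. `java.util.concurrent.Flow.Subscription` stands in for the Reactive Streams type.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.Flow;
import java.util.concurrent.atomic.AtomicReference;

public class LostCompletionDemo {

    // Simplified future bridge; NOT the reactor-core MonoToCompletableFuture source.
    static final class FutureBridge<T> extends CompletableFuture<T> {
        // Holds the subscription; null means "not yet subscribed" or "already terminated".
        private final AtomicReference<Flow.Subscription> ref = new AtomicReference<>();

        void onSubscribe(Flow.Subscription s) {
            if (ref.compareAndSet(null, s)) {
                s.request(Long.MAX_VALUE);
            } else {
                s.cancel();
            }
        }

        void onComplete() {
            // Completes only if a subscription was recorded; otherwise the signal is silently dropped.
            if (ref.getAndSet(null) != null) {
                complete(null);
            }
        }

        void onError(Throwable t) {
            // Same guard: an error arriving before onSubscribe is dropped as well.
            if (ref.getAndSet(null) != null) {
                completeExceptionally(t);
            }
        }
    }

    // A subscription that ignores demand and cancellation.
    static final Flow.Subscription NOOP = new Flow.Subscription() {
        @Override public void request(long n) { }
        @Override public void cancel() { }
    };

    // Expected order: onSubscribe, then onComplete.
    static boolean normalOrder() {
        FutureBridge<Void> future = new FutureBridge<>();
        future.onSubscribe(NOOP);
        future.onComplete();
        return future.isDone();
    }

    // Racy order: onComplete is observed before onSubscribe.
    static boolean reversedOrder() {
        FutureBridge<Void> future = new FutureBridge<>();
        future.onComplete();
        future.onSubscribe(NOOP);
        return future.isDone();
    }

    public static void main(String[] args) {
        System.out.println("normal order, future done:   " + normalOrder());   // true
        System.out.println("reversed order, future done: " + reversedOrder()); // false
    }
}
```

The two orders are forced sequentially here only to make the outcome deterministic; in the scenario reported above, the reordering comes from the two signals being delivered on different threads.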

@hurahurat, thanks for persisting and finding the root cause! I'm closing this in favor of the Reactor Netty issue.

Affects: 5.3.3.RELEASE

I'm using Spring Boot 2.4.2 with RabbitMQ as the message broker and spring-boot-starter-jetty.

I ran into an issue on my production server where a Jetty thread hung for a very long time (almost 2 days), with the thread dump information below:

For sending messages, I'm just using this code:

Any help would be appreciated.
