New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

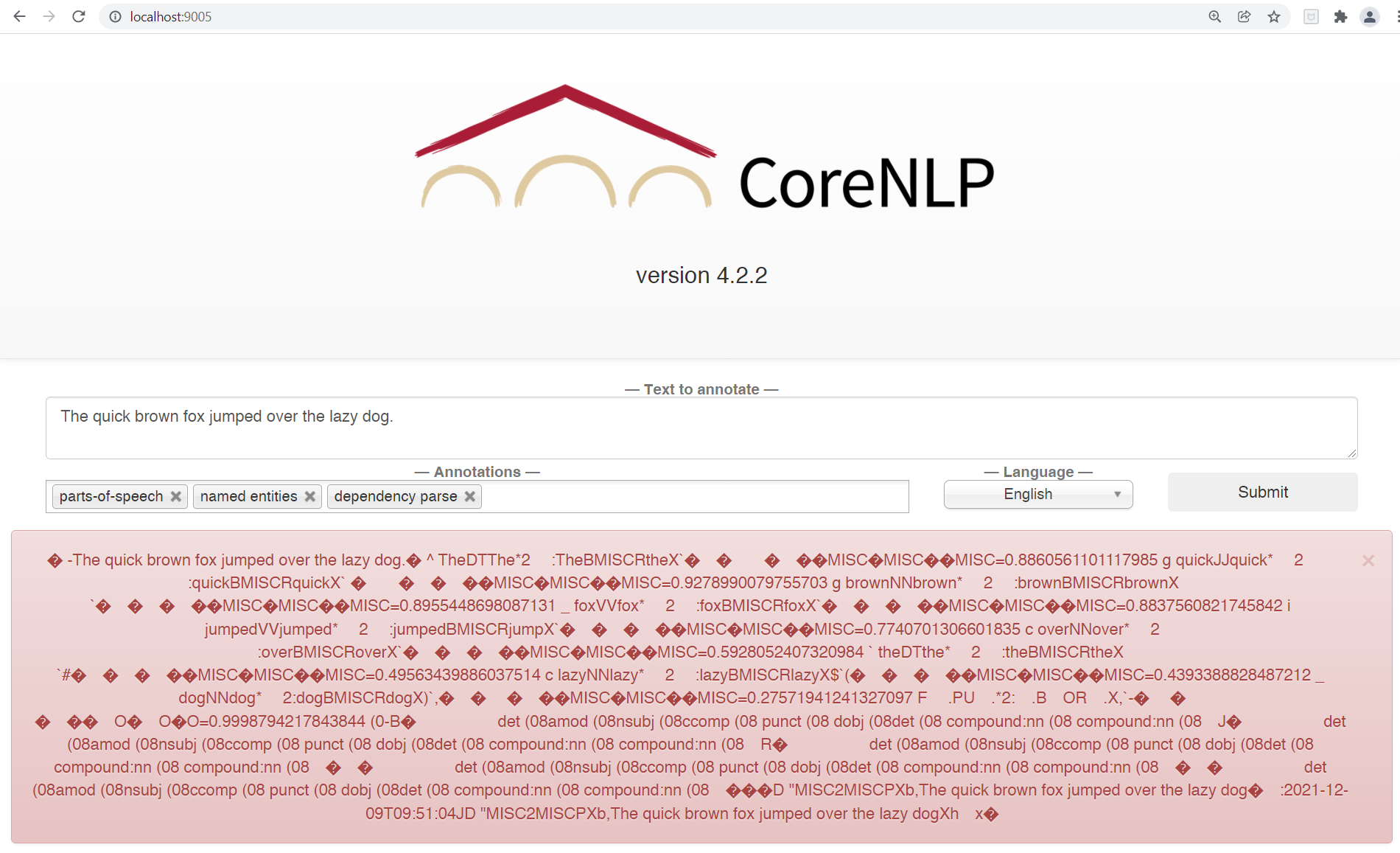

Garbage characters in printed result #894

Comments

|

This is clearly an encoding issue. What platform are you using?

…On Wed, Dec 8, 2021 at 3:37 AM jiangweiatgithub ***@***.***> wrote:

*When I run the following python code:*

import stanza

from stanza.server import CoreNLPClient

text = "中国是一个伟大的国家。"

print(text)

with CoreNLPClient(

properties='chinese',

classpath=r'F:\StanfordCoreNLP\stanford-corenlp-4.2.2*',

strict=False,

start_server=stanza.server.StartServer.TRY_START ,

annotators=['tokenize','ssplit','pos','lemma','ner', 'parse', 'depparse'],

timeout=30000,

memory='16G') as client:

pattern = 'NP'

matches = client.tregex(text, pattern)

# You can access matches similarly

print(matches['sentences'][0]['0']['match'])

*I got:*

中国是一个伟大的国家。

2021-12-08 19:35:10 INFO: Using CoreNLP default properties for: chinese.

Make sure to have chinese models jar (available for download here:

https://stanfordnlp.github.io/CoreNLP/) in CLASSPATH

2021-12-08 19:35:10 INFO: Connecting to existing CoreNLP server at

localhost:9000

2021-12-08 19:35:10 INFO: Connecting to existing CoreNLP server at

localhost:9000

(NP (NNP �й���һ��ΰ��Ĺ���) (SYM ��))

Any idea about the garbage characters?

Process finished with exit code 0

—

You are receiving this because you are subscribed to this thread.

Reply to this email directly, view it on GitHub

<#894>, or unsubscribe

<https://github.com/notifications/unsubscribe-auth/AA2AYWOYTS4KM22K5Q4ZDITUP47QHANCNFSM5JTSUOMQ>

.

Triage notifications on the go with GitHub Mobile for iOS

<https://apps.apple.com/app/apple-store/id1477376905?ct=notification-email&mt=8&pt=524675>

or Android

<https://play.google.com/store/apps/details?id=com.github.android&referrer=utm_campaign%3Dnotification-email%26utm_medium%3Demail%26utm_source%3Dgithub>.

|

|

Windows 11, with Simplified Chinese enabled. |

|

For the corenlp / stanza connection, there's a setting where the text needs to converted to utf-8, and then on the receiving end the text needs to be converted back. Otherwise it doesn't work on Windows. Unfortunately this means both packages need to be released in order to fix this! I'll send a temporary update soon-ish. |

|

Sure. Let me know when it is ready and how I can get it. Thanks you for your quick response! |

|

Ok, this should work. Stanza side:

CoreNLP side: https://nlp.stanford.edu/software/stanford-corenlp-4.3.2b.zip ... for posterity, those will both eventually be incorporated into future releases, in case the branch and/or temp corenlp release don't exist in the future. |

|

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions. |

|

CoreNLP with this update is now available - 4.4.0 stanza update should be soon |

|

stanza 1.4.0 and corenlp 4.4.0 together should have this fix |

When I run the following python code:

import stanza

from stanza.server import CoreNLPClient

text = "中国是一个伟大的国家。"

print(text)

with CoreNLPClient(

properties='chinese',

classpath=r'F:\StanfordCoreNLP\stanford-corenlp-4.2.2*',

strict=False,

start_server=stanza.server.StartServer.TRY_START ,

annotators=['tokenize','ssplit','pos','lemma','ner', 'parse', 'depparse'],

timeout=30000,

memory='16G') as client:

I got:

中国是一个伟大的国家。

2021-12-08 19:35:10 INFO: Using CoreNLP default properties for: chinese. Make sure to have chinese models jar (available for download here: https://stanfordnlp.github.io/CoreNLP/) in CLASSPATH

2021-12-08 19:35:10 INFO: Connecting to existing CoreNLP server at localhost:9000

2021-12-08 19:35:10 INFO: Connecting to existing CoreNLP server at localhost:9000

(NP (NNP �й���һ��ΰ��Ĺ���) (SYM ��))

Any idea about the garbage characters?

Process finished with exit code 0

The text was updated successfully, but these errors were encountered: