Try the web app at https://nst-v01.streamlit.app/

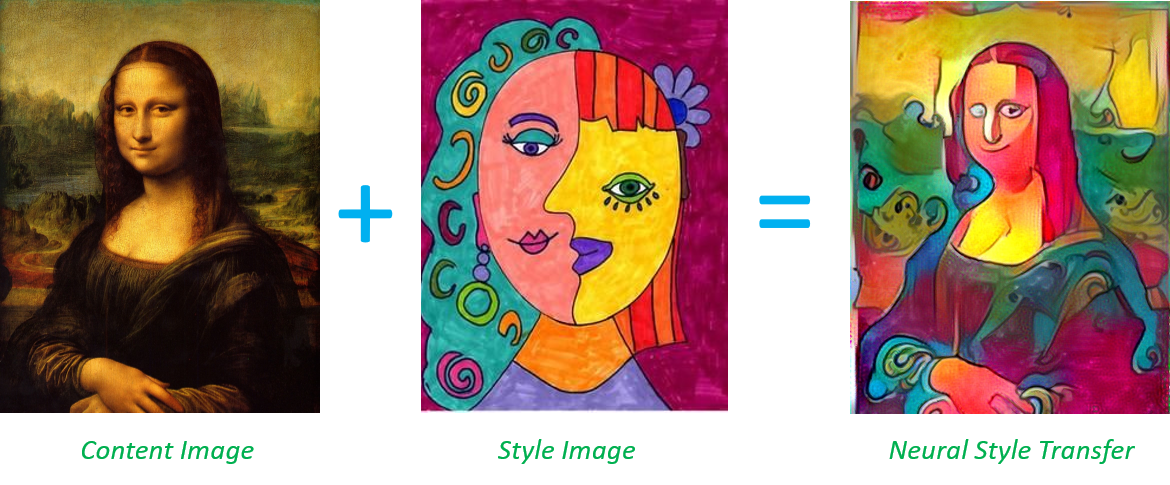

This project is based on the Neural-Style algorithm developed by Leon A. Gatys, Alexander S. Ecker, and Matthias Bethge. Neural-Style, or Neural-Transfer, lets you take an image and reproduce it in a new artistic style. The algorithm takes three images (an input image, a content image, and a style image) and iteratively changes the input so that it resembles the content of the content image and the artistic style of the style image.

We referred to the paper "Image Style Transfer Using Convolutional Neural Networks" by Gatys, Ecker, and Bethge (CVPR 2016; Gatys_Image_Style_Transfer_CVPR_2016_paper.pdf) to understand the underlying principles.
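To make the idea of matching content and style concrete, here is a minimal, dependency-free sketch of the two losses. It is not this project's implementation: plain Python lists stand in for the feature maps a convolutional network would produce, the function names (`gram_matrix`, `content_loss`, `style_loss`, `total_loss`) are hypothetical, and the normalization by the number of activations is an assumption that may differ from the constants used in the paper. The default weights mirror the defaults listed for `--content-weight` and `--style-weight`.

```python
# Illustrative sketch only: plain lists stand in for CNN feature maps.
# A feature map here is a list of C channels, each a flat list of N activations.


def gram_matrix(features):
    """Return the C x C Gram matrix of a feature map.

    Entry (i, j) is the dot product of channels i and j, divided by N
    (the normalization is an assumption made for this sketch).
    """
    n = len(features[0])
    return [
        [sum(a * b for a, b in zip(fi, fj)) / n for fj in features]
        for fi in features
    ]


def flatten(rows):
    """Flatten a list of lists into a single flat list."""
    return [value for row in rows for value in row]


def mse(xs, ys):
    """Mean squared error between two equally sized flat lists."""
    return sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def content_loss(input_feats, content_feats):
    """Compare activations directly: this preserves spatial layout."""
    return mse(flatten(input_feats), flatten(content_feats))


def style_loss(input_feats, style_feats):
    """Compare Gram matrices: channel correlations, independent of position."""
    return mse(flatten(gram_matrix(input_feats)),
               flatten(gram_matrix(style_feats)))


def total_loss(input_feats, content_feats, style_feats,
               content_weight=1.0, style_weight=1e6):
    """Weighted sum that the input image is optimized to minimize."""
    return (content_weight * content_loss(input_feats, content_feats)
            + style_weight * style_loss(input_feats, style_feats))
```

In a real pipeline the features would come from several layers of a pretrained network, and the input image would be updated by gradient descent on `total_loss`; a tensor library with automatic differentiation would replace the list arithmetic shown here.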

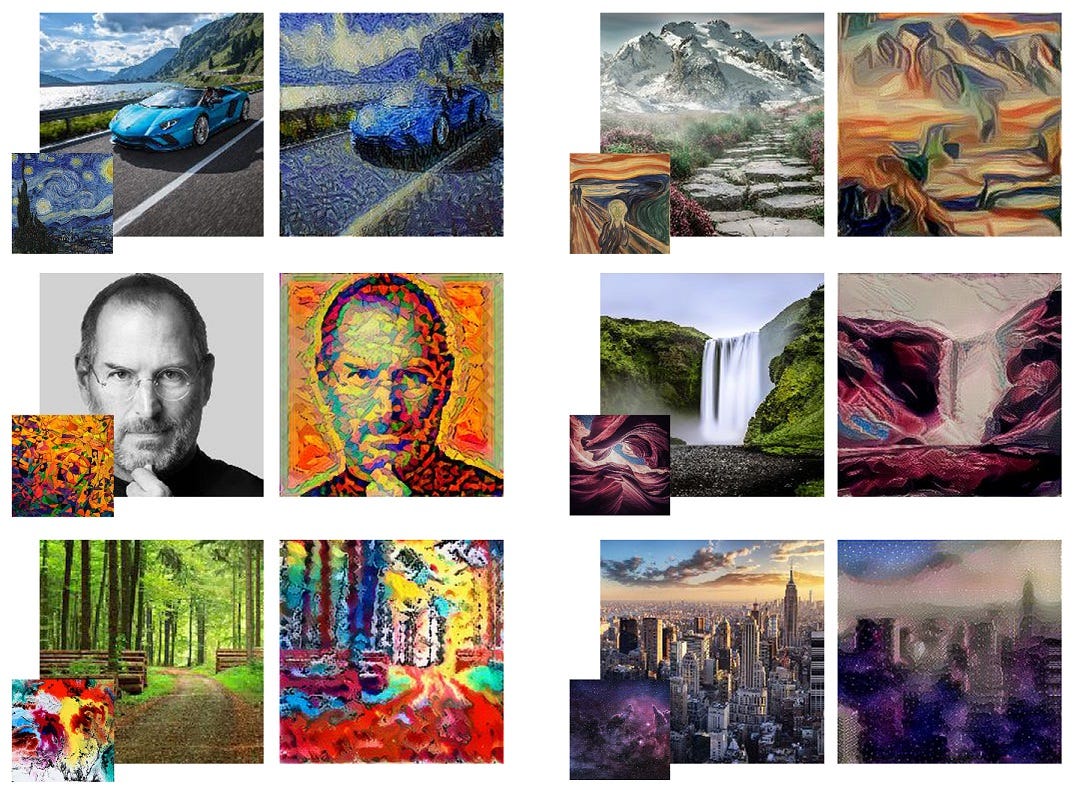

Here are a few examples of the style transfer in action:

Features:

- Apply the style of famous artworks to your photos.

- Adjustable style strength.

- Supports multiple image formats.

- Easy-to-use command-line interface.

Prerequisites:

- Python 3.6 or higher

- Git

- Virtual environment (optional but recommended)

Installation:

- Clone the repository:

      git clone https://github.com/swas-kar/Neural_Style_Transfer.git
      cd Neural_Style_Transfer

- Create and activate a virtual environment:

      python3 -m venv venv
      source venv/bin/activate  # On Windows use `venv\Scripts\activate`

- Install the required dependencies:

      pip install -r requirements.txt

The command-line interface accepts the following options:

- `--content`: Path to the content image.

- `--style`: Path to the style image.

- `--output`: Path to save the output image.

- `--iterations`: Number of iterations to run (default: 500).

- `--style-weight`: Weight of the style (default: 1e6).

- `--content-weight`: Weight of the content (default: 1).
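As an illustration of how these options and defaults could be declared, here is a small sketch using Python's standard `argparse` module. The flag names and default values come from the list above; everything else is an assumption, including the `build_parser` helper, marking the three paths as required, and the example file names. The project's actual parser may be organized differently.

```python
import argparse


def build_parser():
    """Build a parser for the documented options (hypothetical helper)."""
    parser = argparse.ArgumentParser(
        description="Neural style transfer (illustrative CLI sketch)"
    )
    # Treating the three paths as required is an assumption of this sketch.
    parser.add_argument("--content", required=True,
                        help="Path to the content image.")
    parser.add_argument("--style", required=True,
                        help="Path to the style image.")
    parser.add_argument("--output", required=True,
                        help="Path to save the output image.")
    parser.add_argument("--iterations", type=int, default=500,
                        help="Number of iterations to run.")
    parser.add_argument("--style-weight", type=float, default=1e6,
                        help="Weight of the style.")
    parser.add_argument("--content-weight", type=float, default=1.0,
                        help="Weight of the content.")
    return parser


# Parse an explicit argument list (made-up file names) instead of sys.argv,
# so the snippet can be run on its own.
args = build_parser().parse_args(
    ["--content", "photo.jpg", "--style", "painting.jpg",
     "--output", "result.png", "--style-weight", "5e5"]
)
print(args.iterations, args.style_weight, args.content_weight)
```

Note that `argparse` exposes `--style-weight` as `args.style_weight`, converting the hyphen to an underscore. Lowering the style weight relative to the content weight is how the "adjustable style strength" would typically be controlled.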

We welcome contributions! If you find a bug or want to add a new feature, feel free to open an issue or submit a pull request. Please follow the guidelines below:

- Fork the repository.

- Create a new branch (`git checkout -b feature-branch`).

- Commit your changes (`git commit -am 'Add new feature'`).

- Push to the branch (`git push origin feature-branch`).

- Create a new pull request.

This project is licensed under the MIT License - see the MIT LICENSE file for details.