We observe that simply increasing the batch size (bs) on each GPU from 4 (Titan X) to 12 (P40), which gives the BN layers more samples per GPU for estimating their statistics, improves DSOD300 from 77.7 to 78.9 mAP on VOC 2007 test without any other modifications (see the comparison below). We expect that a better way of training the BN layers when training detectors from scratch with a limited batch size, e.g., Sync BN [1] or Group Norm [2], could further improve accuracy. This is consistent with the observations in [3].
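As a rough illustration of why the per-GPU batch size matters for BN, the toy sketch below models one channel's activations as i.i.d. samples from N(0, 1) and measures how much the per-batch mean, which BN uses for normalization during training, fluctuates from batch to batch. This is an assumption-laden model, not the authors' analysis: real activations are neither independent nor Gaussian, and in conv layers BN also averages over spatial positions. The function `batch_mean_spread` is hypothetical and not part of this repository.

```python
import random
import statistics


def batch_mean_spread(batch_size, trials=5000, seed=0):
    """Standard deviation of the per-batch mean of N(0, 1) activations.

    Without Sync BN, each GPU normalizes with statistics from its own
    mini-batch, so this spread is a rough proxy for how noisy those
    statistics are. The i.i.d. Gaussian model is an assumption.
    """
    rng = random.Random(seed)
    means = [
        statistics.fmean([rng.gauss(0.0, 1.0) for _ in range(batch_size)])
        for _ in range(trials)
    ]
    return statistics.pstdev(means)


for bs in (4, 12):
    # For i.i.d. samples theory predicts 1 / sqrt(bs): about 0.50 vs 0.29.
    print(f"bs={bs:2d}: spread of the batch mean = {batch_mean_spread(bs):.3f}")
```

For i.i.d. samples the spread shrinks as 1/sqrt(bs), so going from bs=4 to bs=12 reduces it by a factor of sqrt(3) ≈ 1.7. Sync BN [1] reduces the same noise by pooling statistics across GPUs, while Group Norm [2] avoids batch statistics altogether.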

We also provide some preliminary results exploring the factors involved in training two-stage detectors from scratch in our extended paper (v2) [4].

New results on the PASCAL VOC 2007 test set:

| Method | VOC 2007 test mAP | # parameters | Models |
|---|---|---|---|
| DSOD300 (07+12), bs=4 on each GPU | 77.7 | 14.8M | Download (59.2M) |
| DSOD300 (07+12), bs=12 on each GPU | 78.9 | 14.8M | Download (59.2M) |

[1] Chao Peng, Tete Xiao, Zeming Li, Yuning Jiang, Xiangyu Zhang, Kai Jia, Gang Yu, and Jian Sun. "MegDet: A Large Mini-Batch Object Detector." In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 6181-6189, 2018.

[2] Yuxin Wu and Kaiming He. "Group Normalization." In Proceedings of the European Conference on Computer Vision (ECCV), pp. 3-19, 2018.

[3] Kaiming He, Ross Girshick, and Piotr Dollár. "Rethinking ImageNet Pre-training." In Proceedings of the IEEE International Conference on Computer Vision (ICCV), pp. 4918-4927, 2019.

[4] Zhiqiang Shen, Zhuang Liu, Jianguo Li, Yu-Gang Jiang, Yurong Chen, and Xiangyang Xue. "Object Detection from Scratch with Deep Supervision." IEEE Transactions on Pattern Analysis and Machine Intelligence, 2019.

This repository contains the code for the following paper:

DSOD: Learning Deeply Supervised Object Detectors from Scratch (ICCV 2017).

Zhiqiang Shen*, Zhuang Liu*, Jianguo Li, Yu-Gang Jiang, Yurong Chen, Xiangyang Xue. (*Equal Contribution)

The code is based on the SSD framework.

Other implementations: [PyTorch] by Yun Chen, [PyTorch] by uoip, [PyTorch] by qqadssp, [PyTorch] by Ellinier, [MXNet] by Leo Cheng, [MXNet] by eureka7mt, [TensorFlow] by Windaway.

If you find this helps your research, please cite:

@inproceedings{Shen2017DSOD,
  title = {DSOD: Learning Deeply Supervised Object Detectors from Scratch},
  author = {Shen, Zhiqiang and Liu, Zhuang and Li, Jianguo and Jiang, Yu-Gang and Chen, Yurong and Xue, Xiangyang},
  booktitle = {ICCV},
  year = {2017}
}

@article{shen2018object,
  title={Object Detection from Scratch with Deep Supervision},
  author={Shen, Zhiqiang and Liu, Zhuang and Li, Jianguo and Jiang, Yu-Gang and Chen, Yurong and Xue, Xiangyang},
  journal={arXiv preprint arXiv:1809.09294},
  year={2018}
}

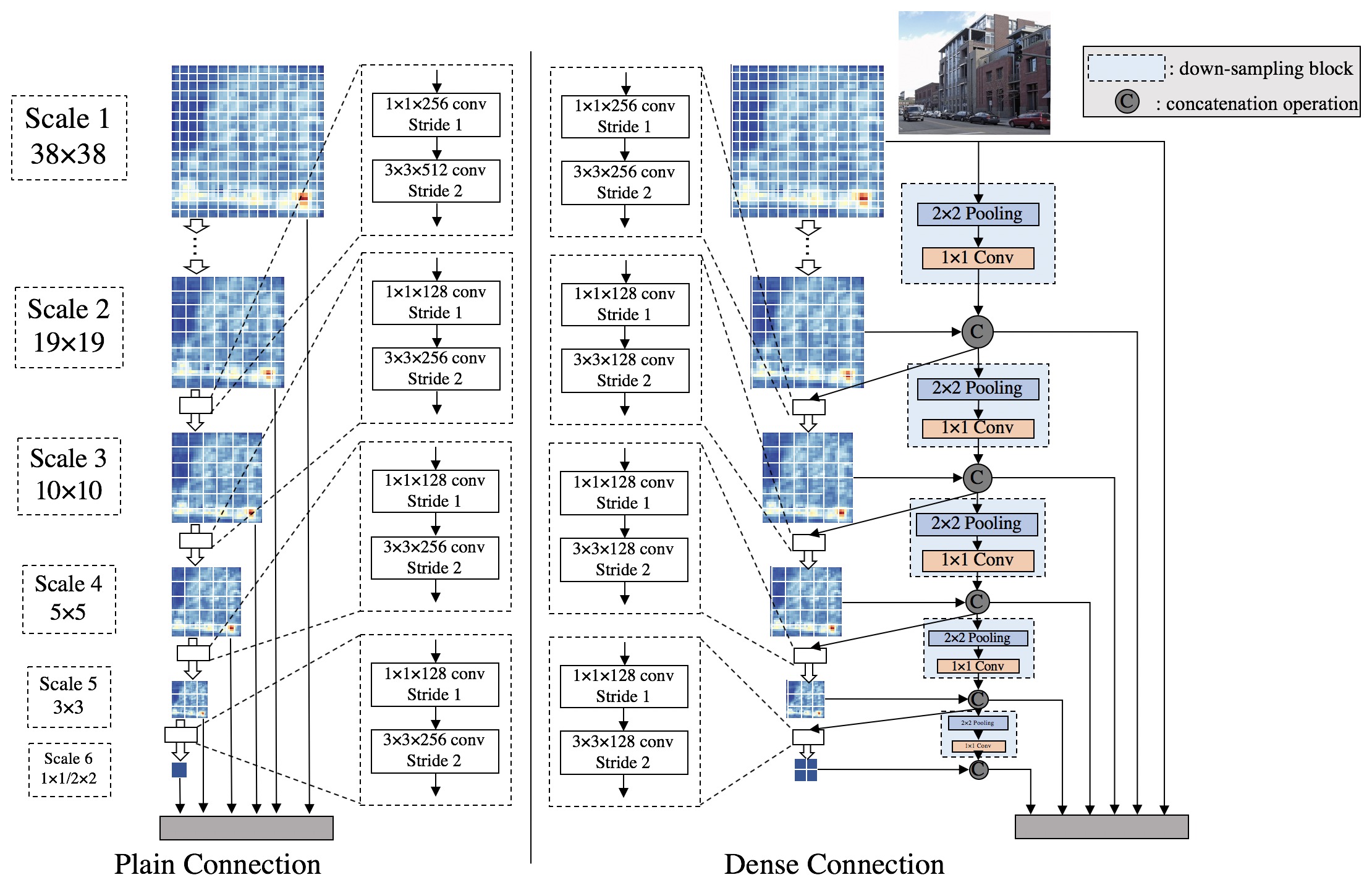

DSOD focuses on the problem of training object detectors from scratch (i.e., without models pretrained on ImageNet). To the best of our knowledge, this is the first work that trains neural object detectors from scratch with state-of-the-art performance. In this work, we contribute a set of design principles for this purpose. One of the key findings is that the deeply supervised structure, enabled by dense layer-wise connections, plays a critical role in learning a good detection model. Please see our paper for more details.
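To make "dense layer-wise connections" concrete, the sketch below traces the connectivity of a DenseNet-style dense block: each layer receives the concatenation of the block input and every earlier layer's output, and contributes `growth_rate` new channels. Because every layer's output is passed directly to all later layers, each layer has a short path to the block output and hence to the loss. The helper `dense_block_inputs` and the sizes used are hypothetical, chosen only for illustration; they are not the exact DSOD300 configuration.

```python
def dense_block_inputs(in_channels, num_layers, growth_rate):
    """Trace which feature maps each layer of a dense block consumes.

    Layer i takes the concatenation of the block input and the outputs of
    layers 0..i-1, and emits growth_rate channels. Returns a list of
    (source_names, input_channels) per layer, plus the block's output channels.
    """
    sources = ["block_input"]
    channels = in_channels
    per_layer = []
    for i in range(num_layers):
        per_layer.append((list(sources), channels))  # everything produced so far
        sources.append(f"layer{i}")
        channels += growth_rate  # concatenation appends growth_rate channels
    return per_layer, channels


# Hypothetical sizes, for illustration only.
layers, out_channels = dense_block_inputs(in_channels=128, num_layers=4, growth_rate=48)
for i, (srcs, ch) in enumerate(layers):
    print(f"layer{i}: {ch:3d} input channels from {srcs}")
print("block output channels:", out_channels)
```

The channel count grows linearly (128 + 4 × 48 = 320 here), which is why DenseNet-style designs keep the growth rate small and insert transition layers between blocks.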

- Visualizations of network structures (tools from ethereon, ignore the warning messages):

The tables below show the results on PASCAL VOC 2007, 2012 and MS COCO.

PASCAL VOC test results:

| Method | VOC 2007 test mAP | fps (Titan X) | # parameters | Models |
|---|---|---|---|---|
| DSOD300_smallest (07+12) | 73.6 | - | 5.9M | Download (23.5M) |
| DSOD300_lite (07+12) | 76.7 | 25.8 | 10.4M | Download (41.8M) |
| DSOD300 (07+12) | 77.7 | 17.4 | 14.8M | Download (59.2M) |
| DSOD300 (07+12+COCO) | 81.7 | 17.4 | 14.8M | Download (59.2M) |
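The download sizes in the table track the parameter counts closely. Assuming the weights are stored as 32-bit floats (4 bytes each) and the sizes are in megabytes, a model file should take roughly 4 × (# parameters) bytes. The arithmetic below checks this estimate against the VOC 2007 rows; it is only an estimate and ignores any non-weight data stored in the model file.

```python
# (model, parameters in millions, listed download size in MB), from the table above.
VOC07_MODELS = [
    ("DSOD300_smallest", 5.9, 23.5),
    ("DSOD300_lite", 10.4, 41.8),
    ("DSOD300", 14.8, 59.2),
]

BYTES_PER_PARAM = 4  # assumption: weights stored as float32


def estimated_size_mb(params_millions):
    """Approximate weight-file size in MB: 10**6 params * 4 bytes = 4 MB."""
    return params_millions * BYTES_PER_PARAM


for name, params, listed in VOC07_MODELS:
    est = estimated_size_mb(params)
    gap = abs(est - listed) / listed
    print(f"{name:17s} estimated {est:5.1f}M  listed {listed:5.1f}M  gap {gap:.1%}")
```

All three estimates agree with the listed sizes to within 0.5%, which is within the precision of parameter counts rounded to 0.1M.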

| Method | VOC 2012 test mAP | fps | # parameters | Models |
|---|---|---|---|---|
| DSOD300 (07++12) | 76.3 | 17.4 | 14.8M | Download (59.2M) |
| DSOD300 (07++12+COCO) | 79.3 | 17.4 | 14.8M | Download (59.2M) |

COCO test-dev 2015 results (COCO has more object categories than VOC, so the model is slightly larger):

| Method | COCO test-dev 2015 mAP (IoU 0.5:0.95) | Models |
|---|---|---|
| DSOD300 (COCO trainval) | 29.3 | Download (87.2M) |
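The COCO metric in this table averages AP over ten IoU thresholds (0.50 to 0.95 in steps of 0.05), whereas the VOC numbers above use a single IoU threshold of 0.5. The simplified sketch below shows the IoU computation and the averaging over thresholds. The helper names are hypothetical; official COCO numbers come from the standard evaluation code (e.g., `COCOeval` in `pycocotools`), which also performs detection-to-ground-truth matching, per-category AP and precision interpolation, all omitted here.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# COCO-style IoU thresholds: 0.50, 0.55, ..., 0.95 (ten values).
COCO_IOU_THRESHOLDS = [round(0.5 + 0.05 * i, 2) for i in range(10)]


def coco_map(ap_at_threshold):
    """Average AP over the ten thresholds.

    ap_at_threshold maps each threshold to the (already category-averaged)
    AP at that threshold; computing those APs is out of scope here.
    """
    return sum(ap_at_threshold[t] for t in COCO_IOU_THRESHOLDS) / len(COCO_IOU_THRESHOLDS)


pred, gt = (0, 0, 10, 10), (2, 0, 12, 10)
overlap = iou(pred, gt)  # 80 / 120 = 2/3
matched = [t for t in COCO_IOU_THRESHOLDS if overlap >= t]
print(f"IoU = {overlap:.3f}; a match at {len(matched)} of 10 thresholds")
```

A detection with IoU 0.667 counts as correct under the VOC criterion but only at the four lowest COCO thresholds, which is one reason COCO mAP values are much lower than VOC mAP values for the same detector.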

- Install SSD (https://github.com/weiliu89/caffe/tree/ssd) following the instructions there, including: (1) installing SSD Caffe; (2) downloading the PASCAL VOC 2007 and 2012 datasets; and (3) creating the LMDB files. Make sure you can run it without any errors.

Our PASCAL VOC LMDB files:

| Method | LMDBs |
|---|---|
| Train on VOC07+12 and test on VOC07 | Download |
| Train on VOC07++12 and test on VOC12 (Comp4) | Download |
| Train on VOC12 and test on VOC12 (Comp3) | Download |

- Create a subfolder `dsod` under `examples/`, and add the files `DSOD300_pascal.py`, `DSOD300_pascal++.py`, `DSOD300_coco.py`, `score_DSOD300_pascal.py` and `DSOD300_detection_demo.py` to the folder `examples/dsod/`.

- Create a subfolder `grp_dsod` under `examples/`, and add the files `GRP_DSOD320_pascal.py` and `score_GRP_DSOD320_pascal.py` to the folder `examples/grp_dsod/`.

- Replace the file `model_libs.py` in the folder `python/caffe/` with ours.
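The three file-placement steps above can be scripted. The sketch below is a hypothetical helper (`install_dsod_files` is not part of this repository) that assumes all the listed scripts and `model_libs.py` sit directly in one source folder, and that the scripts go under the Caffe root's `examples/` folder, which is where the training commands below run them from. It keeps a backup of SSD's original `model_libs.py` before replacing it.

```python
import shutil
from pathlib import Path

# Files from the setup steps, grouped by destination subfolder of examples/.
DSOD_FILES = {
    "dsod": [
        "DSOD300_pascal.py",
        "DSOD300_pascal++.py",
        "DSOD300_coco.py",
        "score_DSOD300_pascal.py",
        "DSOD300_detection_demo.py",
    ],
    "grp_dsod": ["GRP_DSOD320_pascal.py", "score_GRP_DSOD320_pascal.py"],
}


def install_dsod_files(src_dir, caffe_root):
    """Copy the DSOD scripts into an SSD Caffe checkout (hypothetical helper).

    Assumes every file, including model_libs.py, is directly inside src_dir.
    Fails with FileNotFoundError if caffe_root/python/caffe/ does not exist,
    i.e. if SSD Caffe has not been set up yet.
    """
    src_dir, caffe_root = Path(src_dir), Path(caffe_root)
    for subfolder, names in DSOD_FILES.items():
        dest = caffe_root / "examples" / subfolder
        dest.mkdir(parents=True, exist_ok=True)
        for name in names:
            shutil.copy2(src_dir / name, dest / name)
    # Replace SSD's model_libs.py, keeping the original as model_libs.py.orig.
    target = caffe_root / "python" / "caffe" / "model_libs.py"
    if target.exists():
        shutil.copy2(target, target.with_name(target.name + ".orig"))
    shutil.copy2(src_dir / "model_libs.py", target)
```

For example, `install_dsod_files("path/to/DSOD", "path/to/caffe")`, with both paths replaced by your own checkouts.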

- Train a DSOD model on VOC 07+12:

python examples/dsod/DSOD300_pascal.py

- Train a DSOD model on VOC 07++12:

python examples/dsod/DSOD300_pascal++.py

- Train a DSOD model on COCO trainval:

python examples/dsod/DSOD300_coco.py

- Evaluate the model (DSOD):

python examples/dsod/score_DSOD300_pascal.py

- Run a demo (DSOD):

python examples/dsod/DSOD300_detection_demo.py

- Train a GRP_DSOD model on VOC 07+12:

python examples/grp_dsod/GRP_DSOD320_pascal.py

- Evaluate the model (GRP_DSOD):

python examples/grp_dsod/score_GRP_DSOD320_pascal.py
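If you prefer to drive these steps from Python, a thin wrapper over the commands above could look like the sketch below. The task names are hypothetical; the script paths are the ones listed above, with the GRP_DSOD scoring script assumed to be in `examples/grp_dsod/`, where the setup step places it. Each script is run from the Caffe root with the current Python interpreter.

```python
import subprocess
import sys

# Hypothetical task names mapped to the scripts above (paths relative to the Caffe root).
SCRIPTS = {
    "train_voc07+12": "examples/dsod/DSOD300_pascal.py",
    "train_voc07++12": "examples/dsod/DSOD300_pascal++.py",
    "train_coco": "examples/dsod/DSOD300_coco.py",
    "eval_dsod": "examples/dsod/score_DSOD300_pascal.py",
    "demo_dsod": "examples/dsod/DSOD300_detection_demo.py",
    "train_grp_dsod": "examples/grp_dsod/GRP_DSOD320_pascal.py",
    "eval_grp_dsod": "examples/grp_dsod/score_GRP_DSOD320_pascal.py",
}


def build_command(task):
    """Return the argv list for a task, e.g. [sys.executable, 'examples/dsod/DSOD300_coco.py']."""
    if task not in SCRIPTS:
        raise ValueError(f"unknown task {task!r}; choose from {sorted(SCRIPTS)}")
    return [sys.executable, SCRIPTS[task]]


def run(task, caffe_root="."):
    """Run one step from the Caffe root; raises CalledProcessError if the script fails."""
    subprocess.run(build_command(task), cwd=caffe_root, check=True)
```

For example, `run("train_voc07+12", caffe_root="path/to/caffe")` is equivalent to running `python examples/dsod/DSOD300_pascal.py` from that folder.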

Note: You can modify the file `model_libs.py` to design your own network structure as you like.

Zhiqiang Shen (zhiqiangshen0214 at gmail.com)

Zhuang Liu (liuzhuangthu at gmail.com)

Any comments or suggestions are welcome!