Connect All is an application that helps people with disabilities communicate and go about daily life. It was developed as a term project for ET60029 (Technology for Special Needs Education) and serves as an umbrella service for multiple aids.

A demo is available at https://thealphadollar.me/T4SNE-Connnect-All/. All facilities can be accessed from this website.

Sign language gives voice to the mute and is the sound for the deaf. Using deep neural networks, we convert sign language to text so that people who do not understand sign language can follow it.

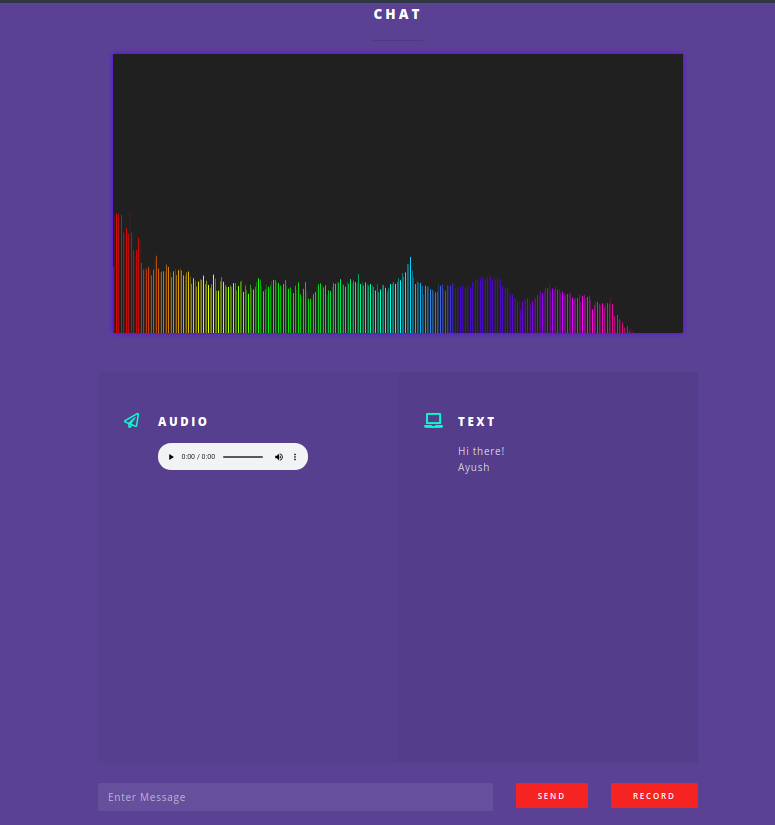

Real-time, lag-free chat is made possible by hacks at the socket level. To aid the specially-abled, speech-to-text and text-to-speech are also applied on the fly. The technology stack for this is socket.io and the Google Cloud Speech API.

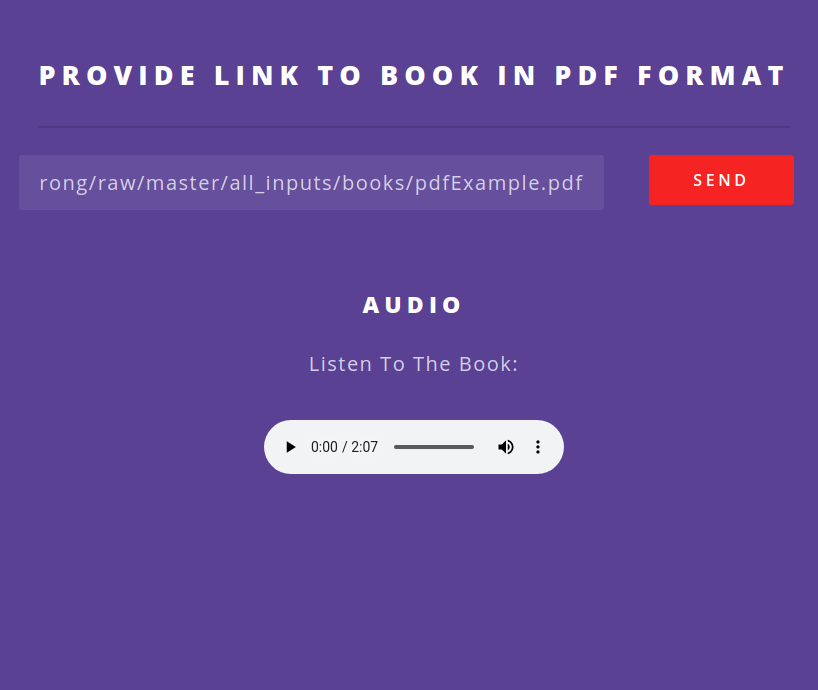
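
The on-the-fly conversion described above can be pictured as a small adapter sitting between the socket layer and each recipient. The following is only a minimal sketch, not the project's actual code: `Message`, `adapt_message`, and the `speech_to_text` / `text_to_speech` callables are hypothetical names, and the idea that each recipient states a preferred modality is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Union

Payload = Union[str, bytes]


@dataclass
class Message:
    sender: str
    kind: str  # "text" or "audio"
    payload: Payload


def adapt_message(
    msg: Message,
    wants: str,
    speech_to_text: Callable[[bytes], str],
    text_to_speech: Callable[[str], bytes],
) -> Message:
    """Return msg in the modality the recipient wants ("text" or "audio").

    The two callables are placeholders (assumption): in a real deployment
    they would wrap whichever speech services the application uses.
    """
    if msg.kind == wants:
        return msg  # nothing to convert
    if msg.kind == "audio" and wants == "text":
        return Message(msg.sender, "text", speech_to_text(msg.payload))
    if msg.kind == "text" and wants == "audio":
        return Message(msg.sender, "audio", text_to_speech(msg.payload))
    raise ValueError(f"cannot convert {msg.kind!r} to {wants!r}")
```

Keeping the conversion in one pure function like this would let the socket handlers stay thin: they receive a message, call the adapter once per recipient, and emit the result.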

The workflow for seamless narration of books begins by obtaining the text using a combination of PDF parsing and OCR. The narration audio generated for each page is then stitched together. The technology stack consists of Azure services for OCR and audio; Apache Tika and PyPDF2 are used for parsing PDFs.

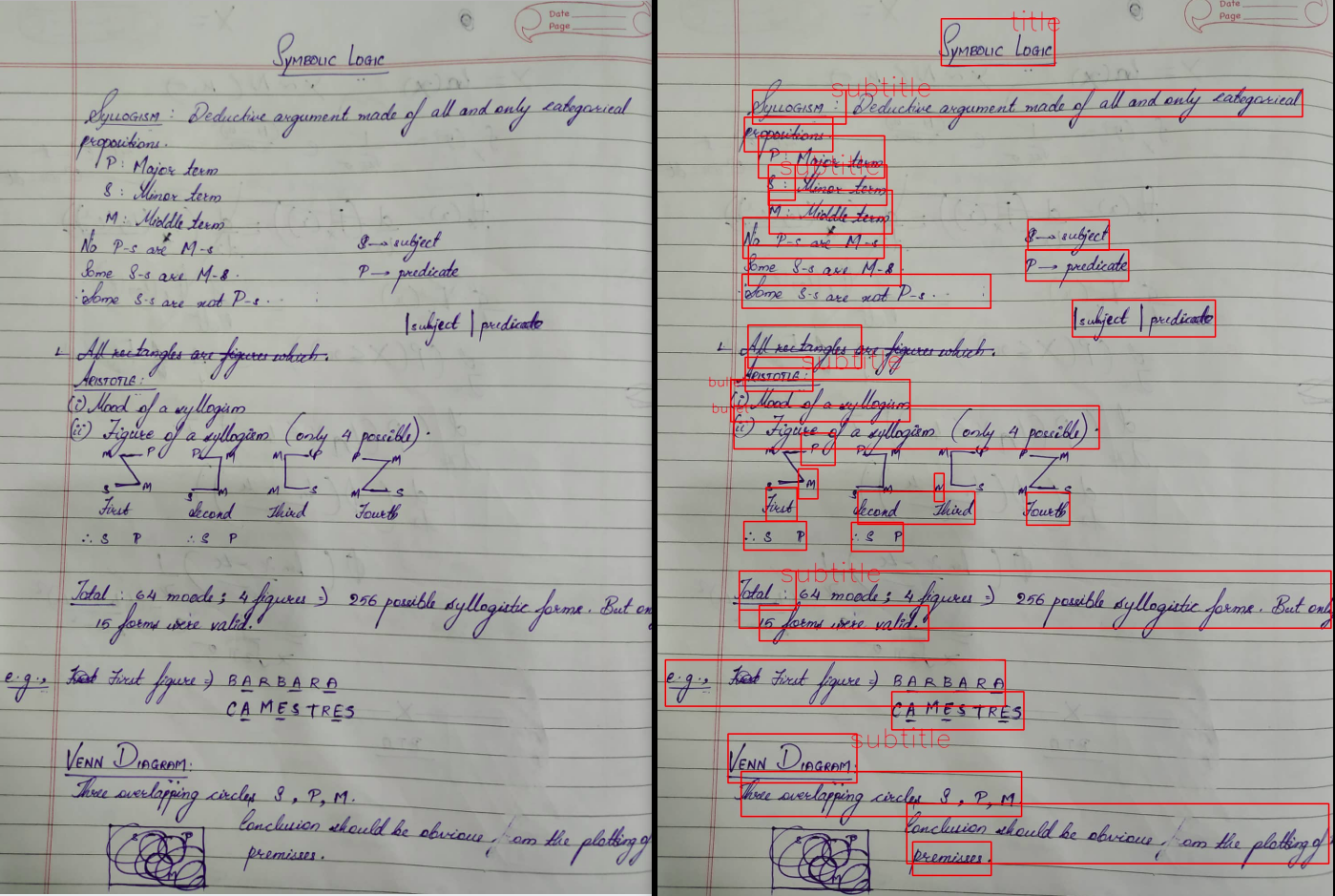
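
The stitching step mentioned above can be sketched with Python's standard `wave` module. This is an illustration under stated assumptions, not the project's implementation: it assumes each page's narration arrives as WAV bytes with the same channel count, sample width, and sample rate, and `stitch_wav_pages` is a hypothetical helper name.

```python
import io
import wave
from typing import Iterable


def stitch_wav_pages(page_audio: Iterable[bytes]) -> bytes:
    """Concatenate per-page WAV clips, in order, into a single WAV file."""
    out_buf = io.BytesIO()
    out = None
    expected = None  # (channels, sample width, frame rate) of the first clip

    for clip in page_audio:
        with wave.open(io.BytesIO(clip), "rb") as src:
            fmt = (src.getnchannels(), src.getsampwidth(), src.getframerate())
            if out is None:
                # Open the output lazily so it copies the first clip's format.
                out = wave.open(out_buf, "wb")
                out.setnchannels(fmt[0])
                out.setsampwidth(fmt[1])
                out.setframerate(fmt[2])
                expected = fmt
            elif fmt != expected:
                raise ValueError("all pages must share the same audio format")
            out.writeframes(src.readframes(src.getnframes()))

    if out is None:
        raise ValueError("no audio to stitch")
    out.close()  # finalises the WAV header; the BytesIO buffer stays open
    return out_buf.getvalue()
```

If the speech service returns a compressed format instead of WAV, the clips would need to be decoded first; that conversion is outside the scope of this sketch.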

The workflow starts with image preprocessing, which detects and corrects skewed or inverted text. The text is then extracted along with its exact position and font size, and is intelligently parsed to group the various parts of the note under headings, sub-headings, bullets, or numbered items.

Finally, the various sections of the note are narrated in order. The technology stack consists of the Azure Vision service, OpenCV, and the Azure Speech service.
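
To make the grouping step more concrete, here is a rough sketch of how extracted lines might be clubbed under headings using font size and vertical position. It is a simplified heuristic, not the project's parser: the `Line` and `Section` types, the `group_under_headings` function, and the `heading_ratio` threshold are all hypothetical, and the input is assumed to be already extracted by the OCR step.

```python
from dataclasses import dataclass, field
from statistics import median
from typing import List


@dataclass
class Line:
    text: str
    font_size: float
    top: float  # vertical position; smaller means higher on the page


@dataclass
class Section:
    heading: str
    items: List[str] = field(default_factory=list)


def group_under_headings(lines: List[Line], heading_ratio: float = 1.2) -> List[Section]:
    """Group body lines under the nearest preceding heading-sized line.

    A line counts as a heading when its font size is at least
    heading_ratio times the median font size (an assumed heuristic).
    """
    if not lines:
        return []
    ordered = sorted(lines, key=lambda ln: ln.top)  # reading order, top to bottom
    body_size = median(ln.font_size for ln in ordered)
    sections: List[Section] = []
    for ln in ordered:
        if ln.font_size >= body_size * heading_ratio:
            sections.append(Section(heading=ln.text.strip()))
        else:
            if not sections:
                sections.append(Section(heading=""))  # text before any heading
            # Drop leading bullet markers so they are not read aloud.
            sections[-1].items.append(ln.text.lstrip("-•* ").strip())
    return sections


def to_narration(sections: List[Section]) -> str:
    """Flatten sections into a plain script that a speech service could read."""
    parts = []
    for sec in sections:
        if sec.heading:
            parts.append(sec.heading + ".")
        parts.extend(item + "." for item in sec.items)
    return " ".join(parts)
```

Handling sub-headings and numbered lists would need more than a single size threshold, for example several size tiers or indentation cues; the sketch only covers the single-level case.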

To run the backend:

cd /backend

pipenv shell --three #only the first time

python3 manage.py run

We have deployed the frontend using GitHub Pages.

To run the instant messenger for all:

cd /im4all

pipenv shell --three

python3 server.py