This repository contains modules used to set up Amazon Web Services (AWS) infrastructure for an Exafunction ExaDeploy system using Terraform.

This root folder is an example of how to use these modules together but is not meant for production use. This example is included here because Terraform requires the root module to contain Terraform code. For production use, use the modules detailed in Modules. For details on how to use these modules in different infrastructure setups, see Configuration.

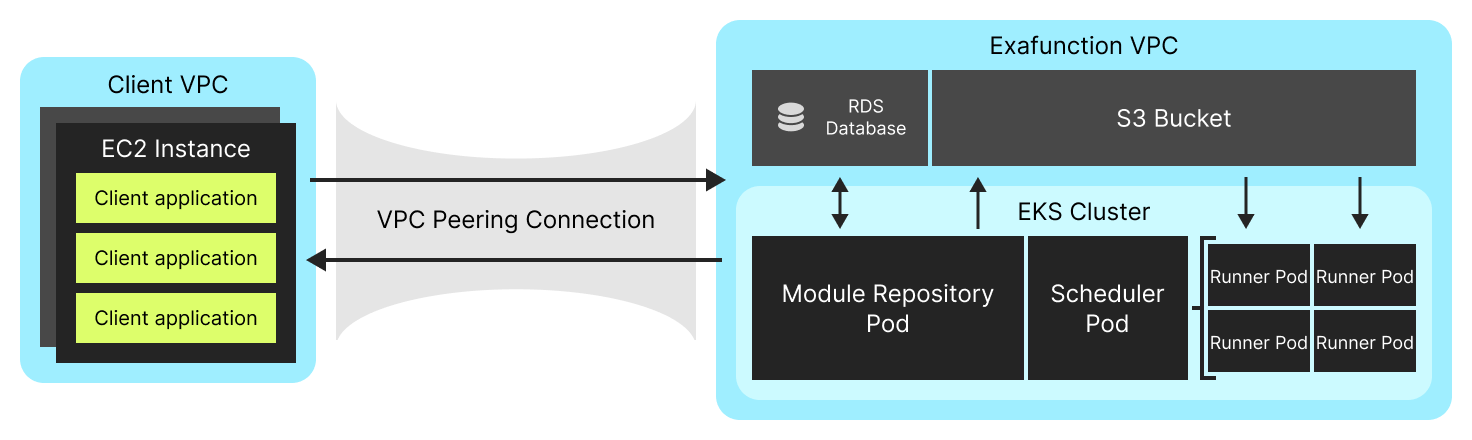

This diagram shows a typical setup of ExaDeploy on AWS (all within the user's cloud account). It consists of:

- A client VPC with client applications running on some AWS infrastructure. In this diagram, the applications are running on EC2 instances, but they could also be running in an EKS cluster, on a developer machine connected to the VPC using Amazon VPN, or on some other AWS infrastructure. The modules in this repository are not responsible for setting up any of this client infrastructure.

- An Exafunction VPC used to contain all Exafunction infrastructure components. This is set up by the modules/network module.

- A VPC peering connection between the client VPC and the Exafunction VPC. This is set up by the modules/peering module.

- An RDS database and S3 bucket used as a persistent backend for the ExaDeploy module repository. This is set up by the modules/module_repo_backend module.

- An EKS cluster used to run the ExaDeploy system. This is set up by the modules/cluster module.

- An ExaDeploy system running in the EKS cluster. The modules in this repository are not responsible for deploying this system. This is handled by the Exafunction/terraform-aws-exafunction-kube repository, which manages the ExaDeploy Kubernetes resources.

The network module (modules/network) sets up the network infrastructure for the ExaDeploy system. This includes creating a VPC and the subnets in which the Exafunction EKS cluster is deployed.

The cluster module (modules/cluster) sets up the EKS cluster in which the ExaDeploy system is deployed. This includes creating the EKS cluster, the node groups in that cluster, and the autoscaling group tags that enable cluster autoscaling for these node groups. The set of runner node pools can be configured to specify different instance types (including CPU vs. GPU nodes), autoscaling limits, instance capacity type (spot vs. on-demand), and other parameters.

The module repository backend module (modules/module_repo_backend) sets up a persistent backend for the ExaDeploy module repository. This includes an S3 bucket and an RDS instance that store the module repository's objects and metadata, respectively. These resources allow the data to persist even if the module repository pod is rescheduled. In addition to applying this module, the module repository must be configured to use these resources.

While persistence is a useful feature and highly recommended in production, it is not strictly required for an ExaDeploy system. The module repository also supports a fully local backend (backed by its own local filesystem on disk), which is not persisted if the module repository pod is rescheduled. In this case, the module_repo_backend module is not necessary and the module repository should not be configured to use remote file storage or a remote relational database.

The peering module (modules/peering) sets up peering between the Exafunction VPC and another VPC. This includes creating a VPC peering connection, routing rules to route traffic between the VPCs, and a security group rule to allow ingress traffic from the peer VPC into the Exafunction VPC. For the peering setup to work, the VPCs' CIDR ranges must be disjoint.
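The disjoint-CIDR requirement can be checked before applying any Terraform. The following is a minimal sketch using Python's standard `ipaddress` module; the helper name `cidrs_are_disjoint` and the CIDR values are hypothetical and are not part of this repository.

```python
import ipaddress


def cidrs_are_disjoint(cidr_a: str, cidr_b: str) -> bool:
    """Return True if the two CIDR ranges share no addresses."""
    net_a = ipaddress.ip_network(cidr_a)
    net_b = ipaddress.ip_network(cidr_b)
    return not net_a.overlaps(net_b)


# Hypothetical CIDR ranges for an Exafunction VPC and a peer VPC.
print(cidrs_are_disjoint("10.0.0.0/16", "10.1.0.0/16"))    # True
print(cidrs_are_disjoint("10.0.0.0/16", "10.0.128.0/17"))  # False
```

A result of `False` means the two ranges overlap, so one of the VPCs would need a different CIDR range before the two could be peered.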

The main reason to peer VPCs is to enable client applications running in a separate VPC to offload remote work to the ExaDeploy system running in the Exafunction VPC. The peer VPC clients can run in various infrastructure setups (e.g. on EC2 instances, in an EKS cluster, from a user's local machine through Amazon VPN, etc.). It is possible to call this module multiple times to create multiple peering connections between the Exafunction VPC and other VPCs which may be useful if client applications in multiple VPCs want to offload remote work to a single shared ExaDeploy system.

Peering is not strictly necessary to use the ExaDeploy system. Client applications running within the same VPC as the Exafunction EKS cluster can connect to the ExaDeploy system without requiring any peering setup.

This section covers how to use these modules for different infrastructure setups. For details on how to configure individual modules (for example, how to configure the set of runner node pools in the cluster module), see the individual module READMEs.

This root directory is an example of how to use the modules in this repository together and is not meant for production use. It provides a simple but complete setup that includes the Exafunction VPC, an Exafunction EKS cluster within that network, remote storage (S3 bucket and RDS instance) used as a persistent backend for the module repository, and peering between the Exafunction VPC and another VPC where client applications could run and connect to the ExaDeploy system. In this example, the peer_vpc is created in this Terraform module, but in a production setup, it may already exist or be managed elsewhere, with its information passed in to the peering module.

This type of configuration is applicable to most use cases where client applications are running within a single existing AWS VPC (the peer VPC). The applications can be running on raw EC2 instances, in an EKS cluster, from a user's local machine through Amazon VPN, in some other AWS infrastructure, or a combination of the above as long as they are all running within the peer VPC.

If client applications are running in multiple different VPCs, a multiple peer VPC setup can be used. This is similar to the basic setup, but the peer_vpc module is called multiple times to create multiple peering connections between the Exafunction VPC and the different peer VPCs. This allows client applications in each peer VPC to connect to the ExaDeploy system running in the Exafunction VPC.

Note that although the peer VPCs are not peered with each other, they must still have mutually disjoint CIDR ranges because they are all peered with the Exafunction VPC.
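With several peer VPCs, every pair of CIDR ranges needs to be checked, not just each peer against the Exafunction VPC. The sketch below is one way to find conflicting pairs using Python's standard `ipaddress` module; the function name, VPC names, and CIDR values are all hypothetical.

```python
import ipaddress
from itertools import combinations


def find_overlapping_cidrs(cidrs: dict[str, str]) -> list[tuple[str, str]]:
    """Return every pair of VPC names whose CIDR ranges overlap."""
    networks = {name: ipaddress.ip_network(cidr) for name, cidr in cidrs.items()}
    return [
        (name_a, name_b)
        for (name_a, net_a), (name_b, net_b) in combinations(networks.items(), 2)
        if net_a.overlaps(net_b)
    ]


# Hypothetical VPCs: peer_b falls inside peer_a's range, so the pair conflicts
# even though neither overlaps the Exafunction VPC.
vpcs = {
    "exafunction": "10.255.0.0/16",
    "peer_a": "10.0.0.0/16",
    "peer_b": "10.0.64.0/18",
}
print(find_overlapping_cidrs(vpcs))  # [('peer_a', 'peer_b')]
```

An empty list indicates that all ranges are mutually disjoint.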

It is also possible to use the ExaDeploy system without any peering setup. For this to work, client applications must run within the same VPC as the Exafunction EKS cluster. In this case, the peer_vpc module is not called at all.

Some examples of this setup include clients running as pods within the deployed Exafunction EKS cluster, as pods within a different EKS cluster in the same VPC, or on EC2 instances within the same VPC.

As mentioned above, the persistent module repository backend is not strictly necessary in the ExaDeploy system. If using the local module repository backend (backed by its own local filesystem on disk), the module_repo_backend module should not be called.

To learn more about Exafunction, visit the Exafunction website.

For technical support or questions, check out our community Slack.

For additional documentation about Exafunction including system concepts, setup guides, tutorials, API reference, and more, check out the Exafunction documentation.

For an equivalent repository used to set up required infrastructure on Google Cloud Platform (GCP) instead of AWS, visit Exafunction/terraform-gcp-exafunction-cloud.

To deploy the ExaDeploy system within the EKS cluster set up using this repository, visit Exafunction/terraform-aws-exafunction-kube.