-

Notifications

You must be signed in to change notification settings - Fork 0

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Software testing response card #49

Comments

|

@bpmulligan: Quality control of results - false positive are less costly false negatives. |

|

Today was very informative! Although I am new to this type of analysis I can see how efficient and simple this will make coordination and can't imagine the complications that arise without this type of system in place.

Thanks for the invite today! |

|

Thanks, Rebecca, for voicing all of that. I echo all of it and honestly Keep us all posted on the progress! -Bryce. On 16-05-26 09:39 PM, Rebecca wrote:

Bryce P Mulligan |

|

Rebecca and Bryce have made some great points, and I also agree with most of them. Here are some additional thoughts.

Thanks again for including me in testing the software. I look forward to seeing how it continues to develop. Raquel |

|

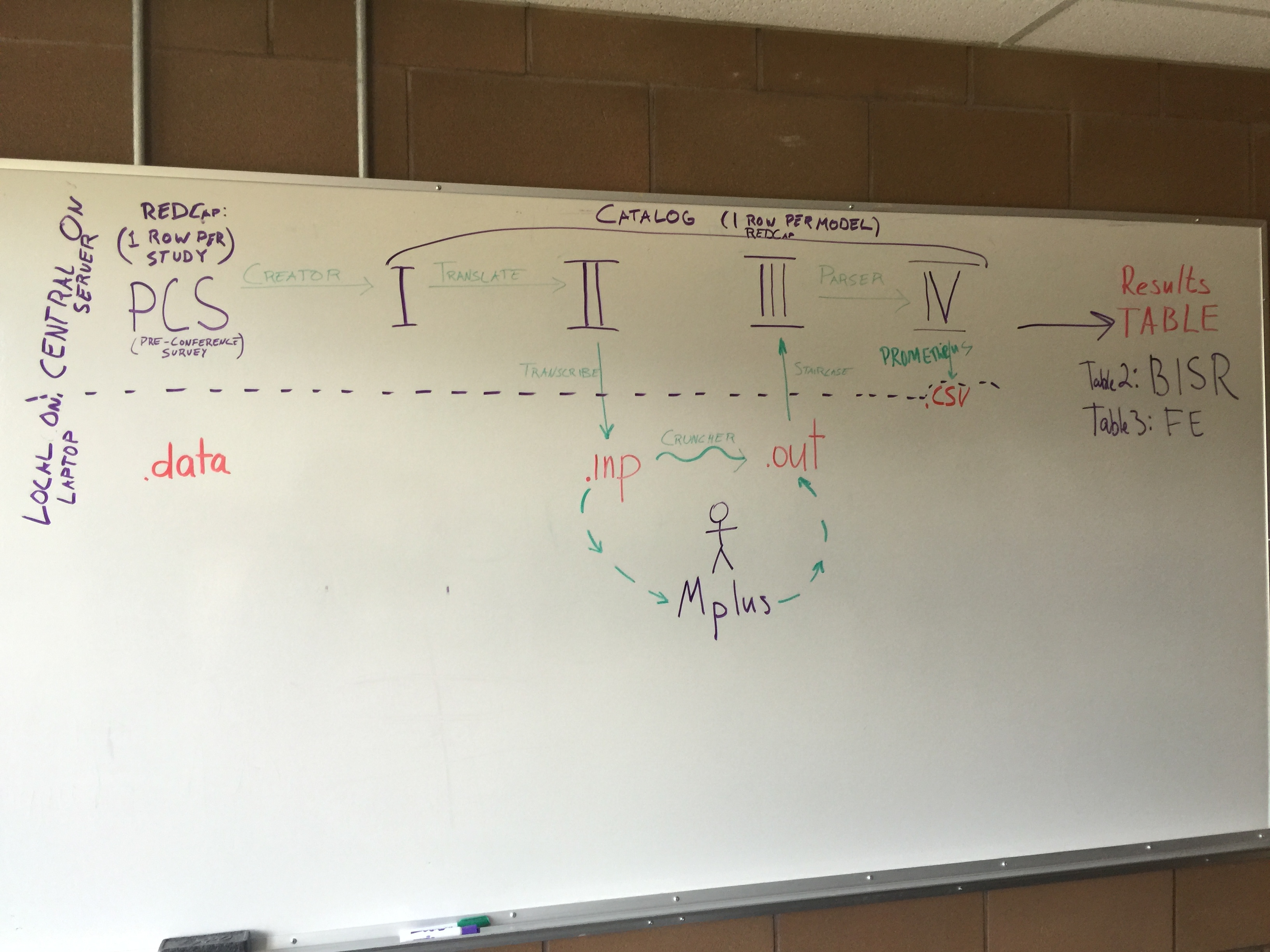

Thanks @beccav8, @raquelgraham, @bpmulligan. We read and appreciated all your comments. (@andkov is running errands for the party tonight, but I'm sure he'll respond later by himself.) Thank you again for attending yesterday and giving us valuable & thoughtful perspectives. I'm posting individual responses to the three of you. Here's yesterday's workflow image for reference. |

|

(These and other responses are being documented in a repo file too.)

Good points, and I hope that the real workshops will have a more polished & cohesive presentation of the scripts. I'm guessing that the real workshops won't cover the code as much as yesterday. The plan is to run much more behind the scenes (and most even before the conference) so that participants don't have to be aware of the code between the PCS and the transcriber code (which follows "II" --the first connection between the server and their laptop). Ideally the PCS is completed several weeks before the conference (which means everything until the transcriber is run on our server, instead of their laptops). We asked you guys to complete the PCS the morning of, so we could better gauge its usability. I'm glad we did, because you guys gave helpful suggestions that we hadn't anticipated (like presenting fewer survey pages to facilitate

It's funny, we built this initially, but thought it was overwhelming. So we broke it into pages (which in hindsight was a mistake) and removed the initial checkboxes (which probably wasn't a mistake). The checkboxes enabled something like your suggestion "and then only respond to questions on these variables". Based on the checkboxes, textboxes were shown for only their variables. (In a small mock-up, @Maleeha produced this effect with item-specific branching logic). However in the end, we felt the checkboxes unnecessarily doubled the amount of items, and increased the chances of incorrect/inconsistent responses. The user still had to go through each variable (and the checkbox stage), and then had to to the textboxes for all the checked variables. As we might have mentioned yesterday, we'd like to pre-populate their PCS responses when they return to subsequent workshops. That's one reason @GracielaMuniz, @ampiccinin, @Maleeha, and others spent so much time enumerating & categorizing the possible IALSA-ish variables. The most consistent we get the list, the more likely we'll be able to reuse (and pre-populate) those sections in future PCSes. If you have other ideas likes checkboxes, please tell @andkov in person, or share them here. We're very invested in making this as ergonomical as possible. For the sake of sharing scientific ideas, we want participants to focus more on concepts and not the interface. (Yikes, can you tell I've spent a solid week with @andov?) Pragmatically, they'll be more eager/likely to come back an participate.

That would be nice, and we should definitely do that. I'm almost afraid to ask @andkov what diagram he's designing to assist the participant along the future workshop's journey and dawn of discovery. |

Yeah, I'm glad you brought this use case to our attention. Last year, I believe IALSA supplied laptops to everyone. If this isn't the case in the future, we definitely should be more explicit about what software needs to be installed & operational on their laptop before they leave their school and get on the plane. Regarding that specific case, everything works on my Linux laptop, but I've been running these programs for months on it, and the cost of misbehaving packages has been amortized. We need to prevent them coming to the workshop with a fresh & untested laptop, regardless of the OS. Also, I think requiring an operational M_plus_ installation will filter out some of these cases. I wanted to address/regurgitate two more great suggestions of yours. Please correct my paraphrases if they're too far off.

That's something we never considered. You're right --providing a virtualized desktops (on a server that UVic/IALSA manages) could drastically reduce the variability of people's setup. As @andkov pointed out, some investigators would be allowed to put their PHI on another school's server. But for the other investigators (especially those using publicly available data), life would be a lot easier. It would also be cheaper for them if they don't have an M_plus_ license already on their laptop. UVic/IALSA could maintain the VM in between workshops, but keep it turned off most of the time (to save costs). Similarly, this could be hosted on Amazon EC2 server. As I showed after the workshop officially ended, I've had a lot of success with this EC2 arrangement in other situations. (Note to self, an Amazon Workspace VDI would be unnecessarily expensive if each desktop needed an M_plus_ license that license was used only ~2 workshops a year.) We'd need to:

This is such a good idea that we weren't even close to. We have some reports model diagnostics (and some more planned), but we never considered that different studies should have customizable settings, based on their (a) quality, (b) size, and (c) familiarity. As we build these mechanisms, we'll ask you for more thoughts on which dials are worthwhile. |

Good, in addition to the static diagram, we hope to have a live diagram that shows the workshop's percent progress through each stage (eg, 96% into "I"; 93% into "II", ...). Tell us if you have other thoughts how to make the diagram more salient to the experience.

@andkov will follow up with you on which aspects you thought were most important. We want to better balance between (a) providing enough implementation details so they're comfortable, yet (b) not distracting them from the forest (ie the aging research questions).

Even if they didn't need the pre-req knowledge (which they do), seeing this material ahead of time may make them more comfortable when entering the workshop.

Are you volunteering to be the hand model on the YouTube examples involving laptops?

You're right, and I'm glad you guys illuminated this yesterday. The PCS needs to more clearly communicate the consequences of a study lacking certain (quality) variables. In my mind the categorizes are:

I think this would couple nicely with the "dataset validator" we've sketched out. This is the software that checks the consistency between a participant's PCS responses and their dataset (eg, making sure the variables are spelled correctly, so the M_plus_ syntax will work). In addition to running the "dataset validator", they could run this check list that makes sure that the correct packages are installed. It could build on top of the existing install-packages.R. MplusAutomation should be added too. Anything else besides GitHub that you can think of? Thank you again everyone, including @Maleeha and @casslbrown. Again, this document is in the repo as a single document. |

@beccav8, @raquelgraham , @bpmulligan, please answer the following questions to help us improve the software use for coordinted analysis.

The text was updated successfully, but these errors were encountered: