Fix plugins #3017


Marking this as draft, as Dragan has identified another problem which will need to be remedied for plugins to work. No point merging this until that is fixed.


lgtm!

…an be used in `chat_chain.py` for proper truncation so it's safe to use with basic hf inference. Added `torch.manual_seed` to `basic_hf_worker.py`.


@olliestanley Can you elaborate a bit more on what is being fixed or extended here relative to the original pull request? I noticed the merged original pull request no longer introduces the sample calculator plugin. What are the plans regarding this?

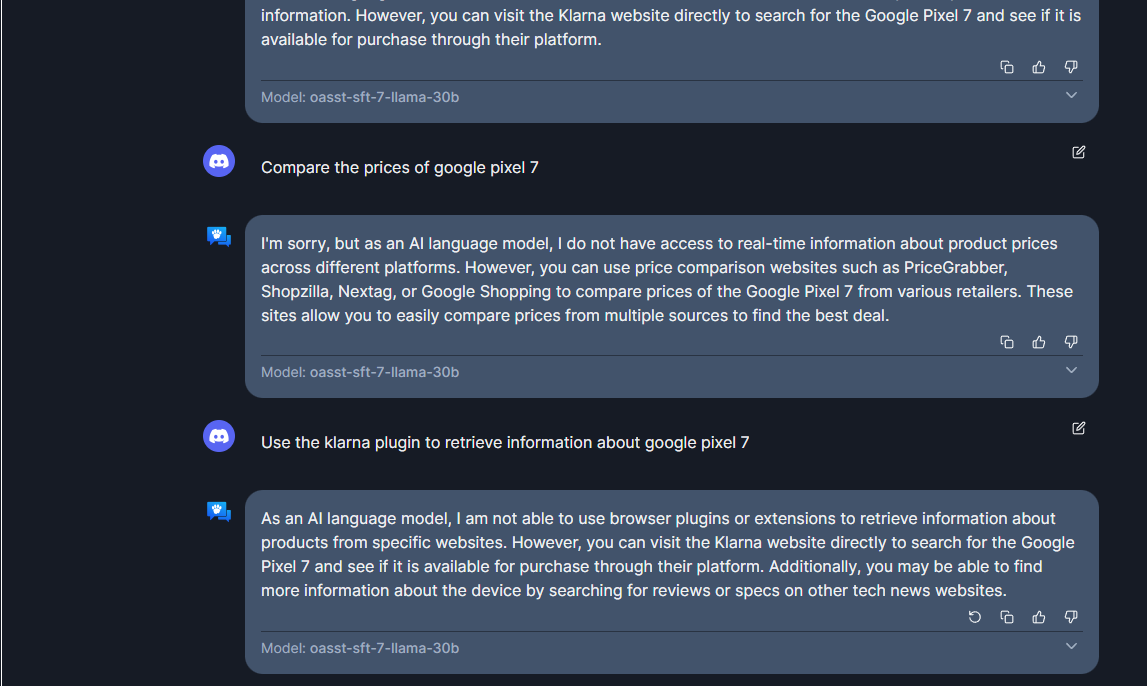

There were three problems in prod which did not show up in local testing, because prod uses

This PR resolves all three of those.

Plugins were compatible only with `text-generation-inference`-based workers and therefore worked on Dragan's machines but did not work on OA prod. This resolves the incompatibility.

---------

Co-authored-by: draganjovanovich <draganele@gmail.com>
