Slow import time (http.server) #6901

Comments

|

Already looked at. Will try to get back to merge it for 3.9: #6591 |

|

@Dreamsorcerer This issue reproduces even with the fix suggested in the pull request you mentioned |

|

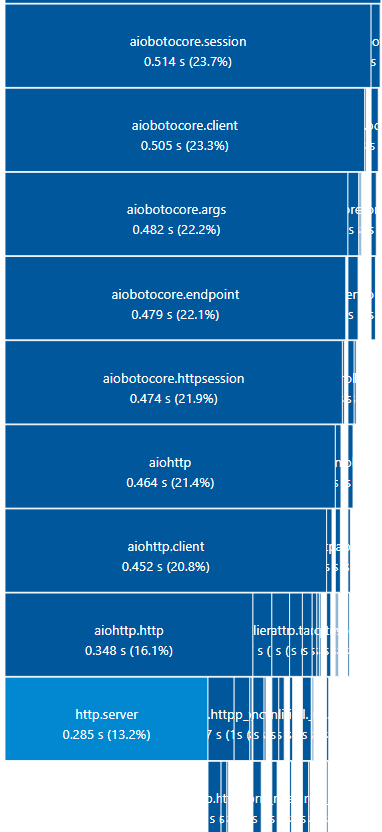

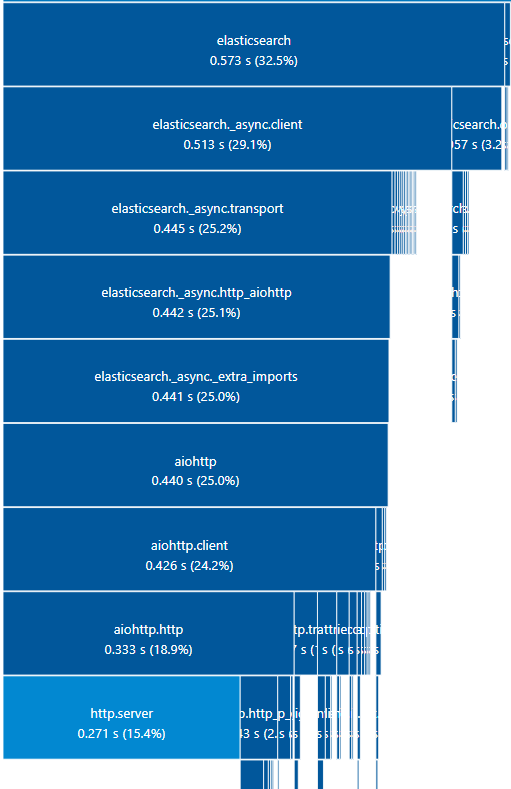

Actually, your chart doesn't seem to show gunicorn using up all the import time. Is that the one you cached? The biggest thing in your chart is importing http.server, which is stdlib, and accounts for nearly 40% of the import time. So, if you want to improve the import time substantially, I'd suggest seeing if you can make some improvements to http.server in cpython. 2nd biggest delay is importing asyncio, which is over 20% of the import time. So, those 2 libraries from cpython account for ~60% of the import time in your chart. I don't see anything we can do here. You could also retry with a newer version of Python, to see if there are already improvements. |
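For anyone who wants to check these proportions on their own machine, here is a minimal sketch that measures cumulative import times with CPython's `-X importtime` option. The helper name `cumulative_import_times` is made up for illustration and is not part of aiohttp. It starts a fresh interpreter, so modules already cached in the current process do not hide any cost.

```python
import subprocess
import sys
from typing import Dict


def cumulative_import_times(module: str) -> Dict[str, int]:
    """Map each imported module name to its cumulative import time (microseconds).

    Hypothetical helper: runs `python -X importtime -c "import <module>"`
    in a subprocess and parses the report CPython writes to stderr.
    """
    result = subprocess.run(
        [sys.executable, "-X", "importtime", "-c", f"import {module}"],
        capture_output=True,
        text=True,
        check=True,
    )
    prefix = "import time:"
    times: Dict[str, int] = {}
    for line in result.stderr.splitlines():
        if not line.startswith(prefix):
            continue
        # Each row looks like: "import time: <self> | <cumulative> | <name>"
        parts = line[len(prefix):].split("|")
        if len(parts) != 3:
            continue
        cumulative = parts[1].strip()
        if not cumulative.isdigit():
            continue  # skip the header row
        times[parts[2].strip()] = int(cumulative)
    return times


if __name__ == "__main__":
    report = cumulative_import_times("http.server")
    # Print the five most expensive imports, slowest first.
    for name, micros in sorted(report.items(), key=lambda kv: -kv[1])[:5]:
        print(f"{micros:>8} us  {name}")
```

Passing `"aiohttp"` instead of `"http.server"` (with aiohttp installed) should give numbers comparable to the chart, though absolute timings vary a lot between machines and runs.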

No, the one that already cached in the first graph is If we are only using aiohttp as a client why we need to import |

|

It appears to be referenced in 2 places, but 1 of them is here: https://github.com/aio-libs/aiohttp/blob/master/aiohttp/connector.py#L1230 Which looks like a client component. However, the only thing being used is this: So, we could probably refactor that code to use |

|

Hello, I'd like to be assigned to this issue. 😊 |

|

Well, no, that's cpython, not aiohttp. :P What we currently use is defined at: https://github.com/aio-libs/aiohttp/blob/master/aiohttp/http.py#L70 |

|

Alright, thanks for the clarification. |

|

@rafael-zilberman If you could verify that #6903 fixes the import time for you, that would be great. |

|

Yep, much better now. |

|

Happy to help. 😊 |

|

@Dreamsorcerer |

|

@rafael-zilberman there's a few things that need addressing before that can happen. |

|

The weird thing is when I try it on my machine, the branch seems to always be a bit slower, but I can't see why. I can see that email.parser, which seems to be a decent chunk of http.server import time, is still imported via aiohttp.helpers, so some import time is still used by that. But, it should still load at least a little faster... |

|

Seems like even things like brotli and urllib.request import slower when I'm on that branch, which just doesn't make any sense to me. I'm inclined to say it's just something weird happening on my machine, but would be great if 1 more person could double check the difference between master and the linked branch. You can use There might be a hint of improvement visible in the CI, but it seems pretty insignificant. |

## What do these changes do?

Refactor code to use HTTPStatus instead of http.server

Fixes #6901

Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>
Co-authored-by: Sam Bull <aa6bs0@sambull.org>
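As a rough illustration of the idea (not necessarily the exact code in the linked PR), a table of status codes can be built from `http.HTTPStatus` alone, so `http.server` and its own dependencies never need to be imported. The name `RESPONSES` is an assumption chosen for this sketch.

```python
from http import HTTPStatus

# Map each numeric status code to (reason phrase, longer description).
# Only the lightweight `http` package is imported, not `http.server`.
RESPONSES = {
    status.value: (status.phrase, status.description)
    for status in HTTPStatus
}

if __name__ == "__main__":
    phrase, description = RESPONSES[404]
    print(phrase)
    print(description)
```

The trade-off is small: the mapping is computed once at import time from an enum that is already cheap to load, while the heavier server-side module stays out of the client import path.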

Describe the bug

While using aiohttp in lambda functions we discovered that the import of the aiohttp packages takes nearly half a second (even more, but in our case some of the dependencies of aiohttp were already cached).

To Reproduce

You can reproduce the import time diagram using the following bash commands:

Expected behavior

A lot of packages are dependent on aiohttp (aiobotocore, elasticsearch, and more...) and the import time is too long for lambda functions.

Logs/tracebacks

Python Version

aiohttp Version

multidict Version

yarl Version

OS

Windows 11

Related component

Client

Additional context

No response

Code of Conduct
