Horovod is a distributed training framework for TensorFlow. The goal of Horovod is to make distributed Deep Learning fast and easy to use.

The primary motivation for this project is to make it easy to take a single-GPU TensorFlow program and successfully train it on many GPUs faster. This has two aspects:

- How many modifications does one have to make to a program to make it distributed, and how easy is it to run?

- How much faster would it run in distributed mode?

Internally at Uber we found that it's much easier for people to understand an MPI model that requires minimal changes to source code than to understand how to set up regular Distributed TensorFlow.

To give some perspective on that, this commit into our fork of TF Benchmarks shows how much code can be removed if one doesn't need to worry about towers and manually averaging gradients across them, tf.train.Server(), tf.train.ClusterSpec(), tf.train.SyncReplicasOptimizer(), tf.train.replica_device_setter() and so on. If none of these things makes sense to you, don't worry: you don't have to learn them if you use Horovod.

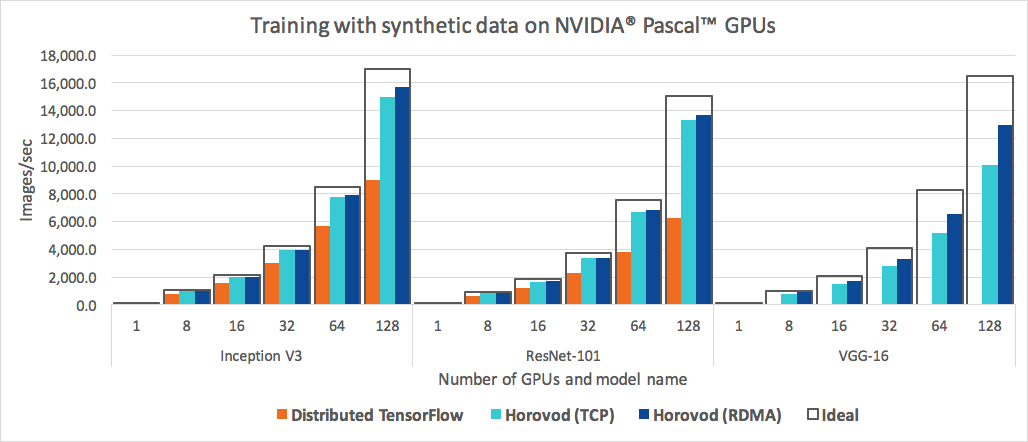

In addition to being easy to use, Horovod is fast. Below is a chart representing the benchmark that was done on 32 servers with 4 Pascal GPUs each connected by RoCE-capable 25 Gbit/s network:

Horovod achieves 90% scaling efficiency for both Inception V3 and ResNet-101, and 79% scaling efficiency for VGG-16.
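To make the scaling-efficiency figures concrete, here is a small sketch of the arithmetic. It assumes the common definition of scaling efficiency (measured throughput divided by the ideal linear throughput), which this README does not spell out. The throughput numbers are hypothetical and chosen only to illustrate a 90% result on 128 GPUs; they are not benchmark data.

```python
# Sketch of the scaling-efficiency arithmetic under an assumed definition:
#   efficiency = throughput on N workers / (N * throughput on 1 worker)

def scaling_efficiency(throughput_n, throughput_1, n_workers):
    """Fraction of ideal linear speedup that was actually achieved."""
    return throughput_n / (n_workers * throughput_1)

n_gpus = 32 * 4            # 32 servers with 4 GPUs each, as in the benchmark setup
single_gpu_rate = 100.0    # hypothetical images/sec on one GPU
measured_rate = 11520.0    # hypothetical images/sec on all 128 GPUs

efficiency = scaling_efficiency(measured_rate, single_gpu_rate, n_gpus)
speedup = measured_rate / single_gpu_rate

print(f"{n_gpus} GPUs: speedup {speedup:.1f}x, efficiency {efficiency:.0%}")
```

Under this definition, 90% efficiency on 128 GPUs corresponds to roughly a 115x speedup over a single GPU rather than the ideal 128x.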

While installing MPI and NCCL themselves may seem like an extra hassle, it only needs to be done once by the team dealing with infrastructure, while everyone else in the company who builds the models can enjoy the simplicity of training them at scale.

To install Horovod:

- Install Open MPI or another MPI implementation. Steps to install Open MPI are listed here.

- Install the horovod pip package:

      $ pip install horovod

This basic installation is good for laptops and for getting to know Horovod. If you're installing Horovod on a server with GPUs, read the Horovod on GPU page.

Horovod's core principles are based on MPI concepts such as size, rank, local rank, allreduce, allgather and broadcast. See here for more details.
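For readers new to MPI, the following plain-Python simulation may help build intuition for these terms. It is only an illustration: it does not use MPI or Horovod, the helper names are hypothetical, and it assumes 2 servers with 2 processes each, with ranks assigned server by server.

```python
# Illustrative simulation of MPI-style concepts. Not the MPI or Horovod API.
# Assumed layout: 2 servers, 2 processes (one per GPU) on each server,
# with ranks assigned server by server.
SERVERS = 2
PROCS_PER_SERVER = 2

size = SERVERS * PROCS_PER_SERVER  # total number of processes

def local_rank(rank):
    """Index of a process among the processes on its own server."""
    return rank % PROCS_PER_SERVER

def allreduce_sum(values):
    """Every process ends up with the sum of all processes' values."""
    total = sum(values)
    return [total for _ in values]

def allgather(values):
    """Every process ends up with the full list of all processes' values."""
    return [list(values) for _ in values]

def broadcast(values, root_rank):
    """Every process ends up with the value held by the root process."""
    return [values[root_rank] for _ in values]

ranks = list(range(size))                     # unique id of each process
local_ranks = [local_rank(r) for r in ranks]  # id of each process within its server
per_rank_values = [10, 20, 30, 40]            # one value held by each rank

reduced = allreduce_sum(per_rank_values)
gathered = allgather(per_rank_values)
bcast = broadcast(per_rank_values, root_rank=0)

print("size:", size)
print("ranks:", ranks, "local ranks:", local_ranks)
print("allreduce:", reduced)
print("allgather on rank 2:", gathered[2])
print("broadcast from rank 0:", bcast)
```

Each list position stands for one process, so the result lists show what every process would hold after the collective operation completes.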

To use Horovod, make the following additions to your program:

- Run hvd.init().

- Pin a server GPU to be used by this process using config.gpu_options.visible_device_list. With the typical setup of one GPU per process, this can be set to the local rank. In that case, the first process on the server will be allocated the first GPU, the second process will be allocated the second GPU, and so forth.

- Wrap the optimizer in hvd.DistributedOptimizer. The distributed optimizer delegates gradient computation to the original optimizer, averages gradients using allreduce or allgather, and then applies those averaged gradients.

- Add hvd.BroadcastGlobalVariablesHook(0) to broadcast initial variable states from rank 0 to all other processes. Alternatively, if you're not using MonitoredTrainingSession, you can simply execute the hvd.broadcast_global_variables op after global variables have been initialized.
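The roles of the initial broadcast and the distributed optimizer described above can be illustrated with a toy simulation. This is a sketch in plain Python, not Horovod's implementation: the helper names, the gradient values, and the SGD update rule are all assumptions chosen for illustration.

```python
# Toy simulation of one synchronous training step across 4 workers.
# Plain Python only; no TensorFlow or Horovod involved.

def allreduce_average(per_worker_grads):
    """Average gradients element-wise across workers."""
    n = len(per_worker_grads)
    return [sum(g) / n for g in zip(*per_worker_grads)]

def sgd_apply(weights, grads, lr):
    """Apply a plain SGD update (assumed here for simplicity)."""
    return [w - lr * g for w, g in zip(weights, grads)]

# Broadcast: every worker starts from the weights held by rank 0.
rank0_weights = [1.0, 2.0]
worker_weights = [list(rank0_weights) for _ in range(4)]

# Each worker computes gradients on its own batch (hypothetical values).
worker_grads = [[0.5, 1.0], [1.5, 1.0], [1.0, 3.0], [1.0, -1.0]]

# Average the gradients, then let every worker apply the same update.
avg_grads = allreduce_average(worker_grads)
worker_weights = [sgd_apply(w, avg_grads, lr=0.5) for w in worker_weights]

print("averaged gradients:", avg_grads)
print("weights per worker:", worker_weights)
```

Because every worker starts from identical weights and applies identical averaged gradients, the replicas stay in sync without a parameter server.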

Example (see the examples directory for full training examples):

    import tensorflow as tf
    import horovod.tensorflow as hvd

    # Initialize Horovod
    hvd.init()

    # Pin GPU to be used to process local rank (one GPU per process)
    config = tf.ConfigProto()
    config.gpu_options.visible_device_list = str(hvd.local_rank())

    # Build model...
    loss = ...
    opt = tf.train.AdagradOptimizer(0.01)

    # Add Horovod Distributed Optimizer
    opt = hvd.DistributedOptimizer(opt)

    # Add hook to broadcast variables from rank 0 to all other processes during
    # initialization.
    hooks = [hvd.BroadcastGlobalVariablesHook(0)]

    # Make training operation
    train_op = opt.minimize(loss)

    # The MonitoredTrainingSession takes care of session initialization,
    # restoring from a checkpoint, saving to a checkpoint, and closing when done
    # or an error occurs.
    with tf.train.MonitoredTrainingSession(checkpoint_dir="/tmp/train_logs",
                                           config=config,
                                           hooks=hooks) as mon_sess:
        while not mon_sess.should_stop():
            # Perform synchronous training.
            mon_sess.run(train_op)

To run on a machine with 4 GPUs:

    $ mpirun -np 4 python train.py

To run on 4 machines with 4 GPUs each using Open MPI:

    $ mpirun -np 16 -x LD_LIBRARY_PATH -H server1:4,server2:4,server3:4,server4:4 python train.py

If you're using Open MPI and you have RoCE or InfiniBand, we found this custom RDMA queue configuration to help performance a lot:

    $ mpirun -np 16 -x LD_LIBRARY_PATH -mca btl_openib_receive_queues P,128,32:P,2048,32:P,12288,32:P,131072,32 -H server1:4,server2:4,server3:4,server4:4 python train.py

Check your MPI documentation for arguments to the mpirun command on your system.
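In the multi-machine commands above, the value passed to -np is the sum of the per-host slot counts given to -H. The sketch below assembles such a command from a host list. The function name build_mpirun_command is hypothetical, and it uses only the Open MPI flags that already appear in this README.

```python
# Sketch: derive the mpirun arguments from a list of (hostname, gpu_count) pairs.
# build_mpirun_command is a hypothetical helper, not part of Horovod or Open MPI.

def build_mpirun_command(hosts, script="train.py"):
    """Return the mpirun argument list for one process per GPU on each host."""
    total_processes = sum(slots for _, slots in hosts)
    host_arg = ",".join(f"{name}:{slots}" for name, slots in hosts)
    return [
        "mpirun",
        "-np", str(total_processes),   # one process per GPU overall
        "-x", "LD_LIBRARY_PATH",       # export this variable to all processes
        "-H", host_arg,                # host:slots pairs
        "python", script,
    ]

hosts = [("server1", 4), ("server2", 4), ("server3", 4), ("server4", 4)]
command = build_mpirun_command(hosts)
command_line = " ".join(command)

print(command_line)
```

The resulting list can be passed to subprocess.run if you want to launch training from a Python script instead of a shell.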

Horovod supports Keras and regular TensorFlow in similar ways.

Note: You must use keras.optimizers.TFOptimizer instead of native Keras optimizers.

See a full training example here.

Learn how to optimize your model for inference and remove Horovod operations from the graph here.

One of the unique things about Horovod is its ability to interleave communication and computation coupled with the ability to batch small allreduce operations, which results in improved performance. We call this batching feature Tensor Fusion.

See here for full details and tweaking instructions.
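The batching idea can be sketched as follows: instead of one allreduce per small tensor, pack the tensors into a single buffer, reduce it once, and split the result back. This is a conceptual illustration in plain Python under simplified assumptions (tensors as flat lists, a summing allreduce stand-in). It is not Horovod's actual Tensor Fusion implementation, and all function names are hypothetical.

```python
# Conceptual sketch of batching several small allreduce operations into one.

def allreduce_sum(per_worker_buffers):
    """Stand-in for a collective allreduce: element-wise sum across workers."""
    return [sum(vals) for vals in zip(*per_worker_buffers)]

def fused_allreduce(per_worker_tensors):
    """Reduce many small tensors with a single allreduce call.

    per_worker_tensors[w] is the list of small tensors (flat lists of floats)
    held by worker w. All workers hold tensors of matching sizes.
    """
    sizes = [len(t) for t in per_worker_tensors[0]]
    # Pack each worker's tensors into one contiguous buffer.
    fused = [[x for t in tensors for x in t] for tensors in per_worker_tensors]
    reduced = allreduce_sum(fused)  # one collective call instead of len(sizes)
    # Split the reduced buffer back into the original tensor sizes.
    out, offset = [], 0
    for n in sizes:
        out.append(reduced[offset:offset + n])
        offset += n
    return out

worker0 = [[1.0, 2.0], [3.0], [4.0, 5.0, 6.0]]
worker1 = [[10.0, 20.0], [30.0], [40.0, 50.0, 60.0]]

fused_result = fused_allreduce([worker0, worker1])
# Reference: reduce each tensor separately, one call per tensor.
unfused_result = [allreduce_sum([a, b]) for a, b in zip(worker0, worker1)]

print(fused_result)
```

Both paths produce the same values; the fused path simply issues one collective call instead of three, which matters when per-call latency dominates for small tensors.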

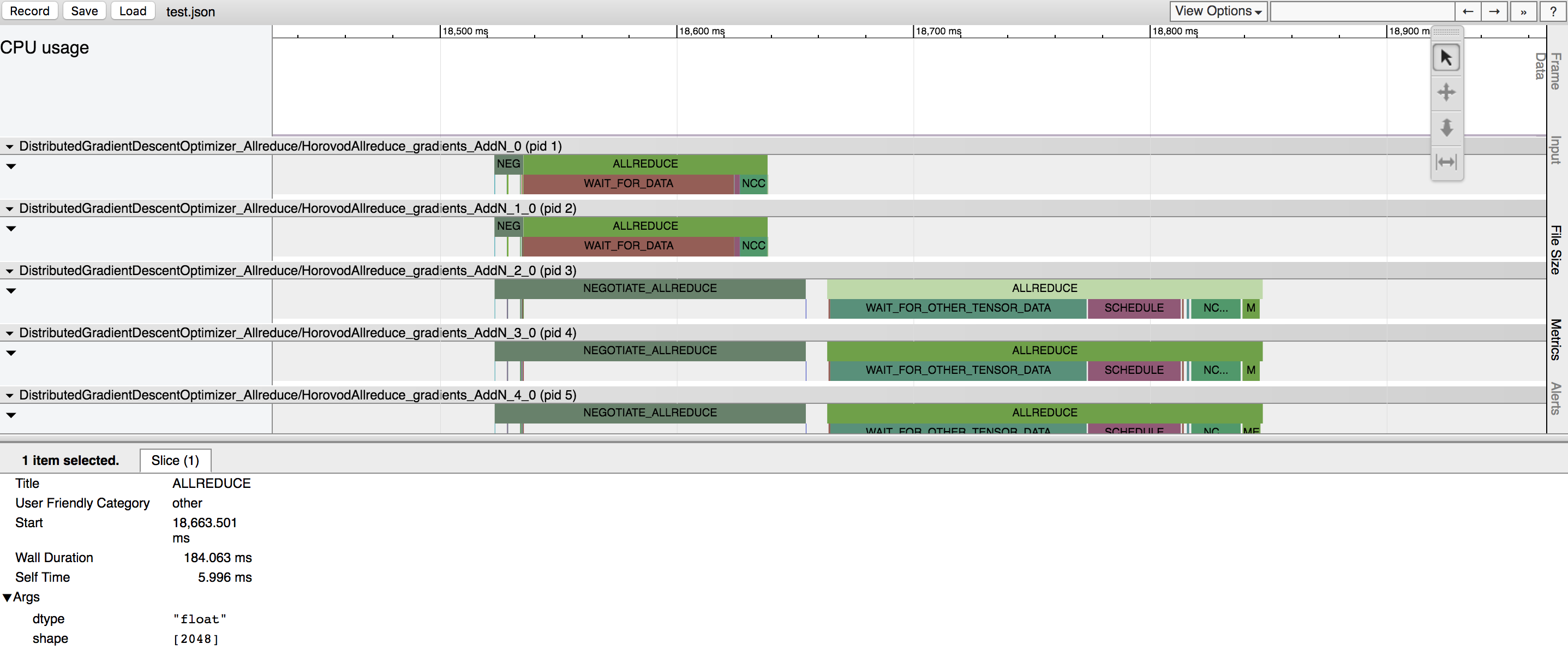

Horovod has the ability to record the timeline of its activity, called Horovod Timeline.

See here for full details and usage instructions.

See the Troubleshooting page, and please submit a ticket if you can't find an answer.

- Sergeev, A., Del Balso, M. (2017). Meet Horovod: Uber’s Open Source Distributed Deep Learning Framework for TensorFlow. Retrieved from https://eng.uber.com/horovod/

- Gibiansky, A. (2017). Bringing HPC Techniques to Deep Learning. Retrieved from http://research.baidu.com/bringing-hpc-techniques-deep-learning/