New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

HDFS-16316.Improve DirectoryScanner: add regular file check related block. #3861

Conversation

|

💔 -1 overall

This message was automatically generated. |

112122d

to

3a0edea

Compare

|

💔 -1 overall

This message was automatically generated. |

3a0edea

to

2929261

Compare

|

💔 -1 overall

This message was automatically generated. |

|

Here are some unit tests that failed: It seems that these failed tests have little to do with the content I submitted. |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Would it be possible to verify this behavior in TestDirectoryScanner?

| @@ -2812,6 +2816,10 @@ public void checkAndUpdate(String bpid, ScanInfo scanInfo) | |||

| + memBlockInfo.getNumBytes() + " to " | |||

| + memBlockInfo.getBlockDataLength()); | |||

| memBlockInfo.setNumBytes(memBlockInfo.getBlockDataLength()); | |||

| } else if (!isRegular) { | |||

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Unrelated: the checkAndUpdate() is way too long. We should refactor it in the future.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Thanks for your comment and review, @jojochuang .

I'll add some unit tests later.

This is indeed a bit too long for the checkAndUpdate() method.

I'll be fine-tuning this later if I can, but will handle this as a new jira.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Absolutely. Let's not worry about the refactor now. Thanks.

adede86

to

39177f5

Compare

|

💔 -1 overall

This message was automatically generated. |

|

🎊 +1 overall

This message was automatically generated. |

|

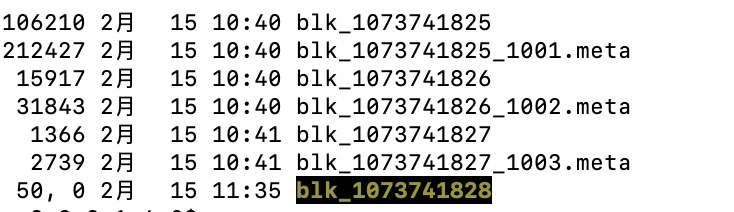

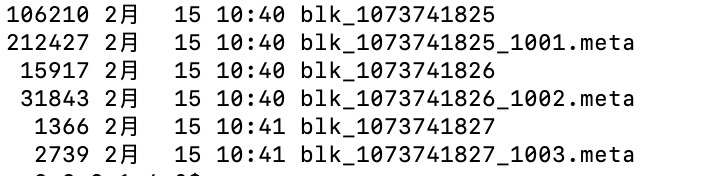

Here are some examples of online clusters. This file is non-standard. Then DataNode will tell NameNode that there are some unqualified blocks through NameNodeRpcServer#reportBadBlocks(). After the NameNode gets the data, it will process it further. Can you help review this pr again, @jojochuang . |

| @Test(timeout = 600000) | ||

| public void testRegularBlock() throws Exception { | ||

| // add a logger stream to check what has printed to log | ||

| ByteArrayOutputStream loggerStream = new ByteArrayOutputStream(); |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Can you use the Hadoop utility class LogCapturer

Line 533 in 6342d5e

| public static class LogCapturer { |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Yes, it was my mistake.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Hi I am not sure if this unit test is valid. The test doesn't seem to fail if the fix is removed.

39177f5

to

ca61b8b

Compare

|

🎊 +1 overall

This message was automatically generated. |

ca61b8b

to

f5e27e4

Compare

|

🎊 +1 overall

This message was automatically generated. |

|

Thanks for the suggestion, @jojochuang . When I remove the fix, the newly added unit test does not succeed, which is expected and does not affect the execution of other unit tests. |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

+1

Thanks @jianghuazhu for reminding me. This check makes sense to me. And what I'm curious about is what was done before the metaFile became a device file? Or what is the root cause of this problem? |

| @@ -2812,6 +2816,9 @@ public void checkAndUpdate(String bpid, ScanInfo scanInfo) | |||

| + memBlockInfo.getNumBytes() + " to " | |||

| + memBlockInfo.getBlockDataLength()); | |||

| memBlockInfo.setNumBytes(memBlockInfo.getBlockDataLength()); | |||

| } else if (!isRegular) { | |||

| corruptBlock = new Block(memBlockInfo); | |||

| LOG.warn("Block:{} is not a regular file.", corruptBlock.getBlockId()); | |||

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Maybe we should print the absolute path so that we can deal with these abnormal files?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Thanks @tomscut for the comment and review.

This happens occasionally, I've been monitoring it for a long time and still haven't found the root cause.

But I think this situation may be related to the Linux environment. When the normal data flow is working, no exception occurs. (I will continue to monitor this situation)

Here are some more canonical checks to prevent further worse cases on the cluster. This is a good thing for clusters.

When the file is actually cleaned up, the specific path will be printed. Here are some examples of online clusters:

2022-02-15 11:24:12,856 INFO org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetAsyncDiskService: Deleted BP-xxxx blk_xxxx file /mnt/dfs/11/data/current/BP-xxxx.xxxx.xxxx/current/finalized/subdir0/subdir0/blk_xxxx

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Thank you for explaining this. It looks like the operation related to mount has been performed. Did HDFS successfully clean the abnormal file on your online cluster you mentioned after this change?

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Yes, these exception files are cleaned up.

When NameNode obtains such abnormal files, it treats them as invalid Blocks.

When the DataNode sends a heartbeat to the NameNode, the NameNode notifies the DataNode to clean up.

The specific cleaning action is performed by FsDatasetAsyncDiskServic

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

This is good.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

LGTM.

...oject/hadoop-hdfs/src/main/java/org/apache/hadoop/hdfs/server/datanode/DirectoryScanner.java

Show resolved

Hide resolved

|

@jianghuazhu Thanks for your contribution. @jojochuang @tomscut Thanks for your reviews! Merged. |

Description of PR

When blk_xxxx and blk_xxxx.meta are not regular files, they will have adverse effects on the cluster, such as errors in the calculation space and the possibility of failure to read data.

For this type of block, it should not be used as a normal block file.

Details:

HDFS-16316

How was this patch tested?

Need to verify whether a file is a real regular file.