-

Notifications

You must be signed in to change notification settings - Fork 124

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Make region optional in Snowflake connection #100

Conversation

|

@dwreeves could you give me an example of what the formatted snowflake URI might look like (obviously without an actual password)? The way this is set up right now ensures that if there's a working snowflake connection in Airflow, then it can be used for cosmos. When I take away the region for a snowflake region, the connection is no longer valid in Airflow |

|

@pohek321 Hmm, that's interesting. To be clear, if you are on the legacy system for Snowflake account IDs, and you want to construct a URI without the region field, you move it over to the account part of the URI. I'm on provider package 2.7.0 and my secret looks something like this and it works fine: {"conn_type": "snowflake", "user": "SOME_USER", "password": "SOME_PASSWORD", "extra": "{\"account\": \"12345.us-east-1\", \"warehouse\": \"SOME_WAREHOUSE\", \"database\": \"SOME_DATABASE\", \"role\": \"SOME_ROLE\"}"}Note that it's For good measure, my Airflow dbt integration does the following. (1) Places the secrets directly in the .yml, i.e. I'm not using conn = self.snowflake_hook.get_connection(conn_id)

profile = self.dbt_profile_name: {

"outputs": {

self.dbt_target_name: {

"account": conn.extra_dejson.get("account"),

...

}

}

}Also FWIW, I also am the last person who committed to the docs for the Snowflake connection 😅 https://github.com/apache/airflow/blob/main/docs/apache-airflow-providers-snowflake/connections/snowflake.rst which I edited because I struggled a lot with this as it's very confusing. 🥴 |

|

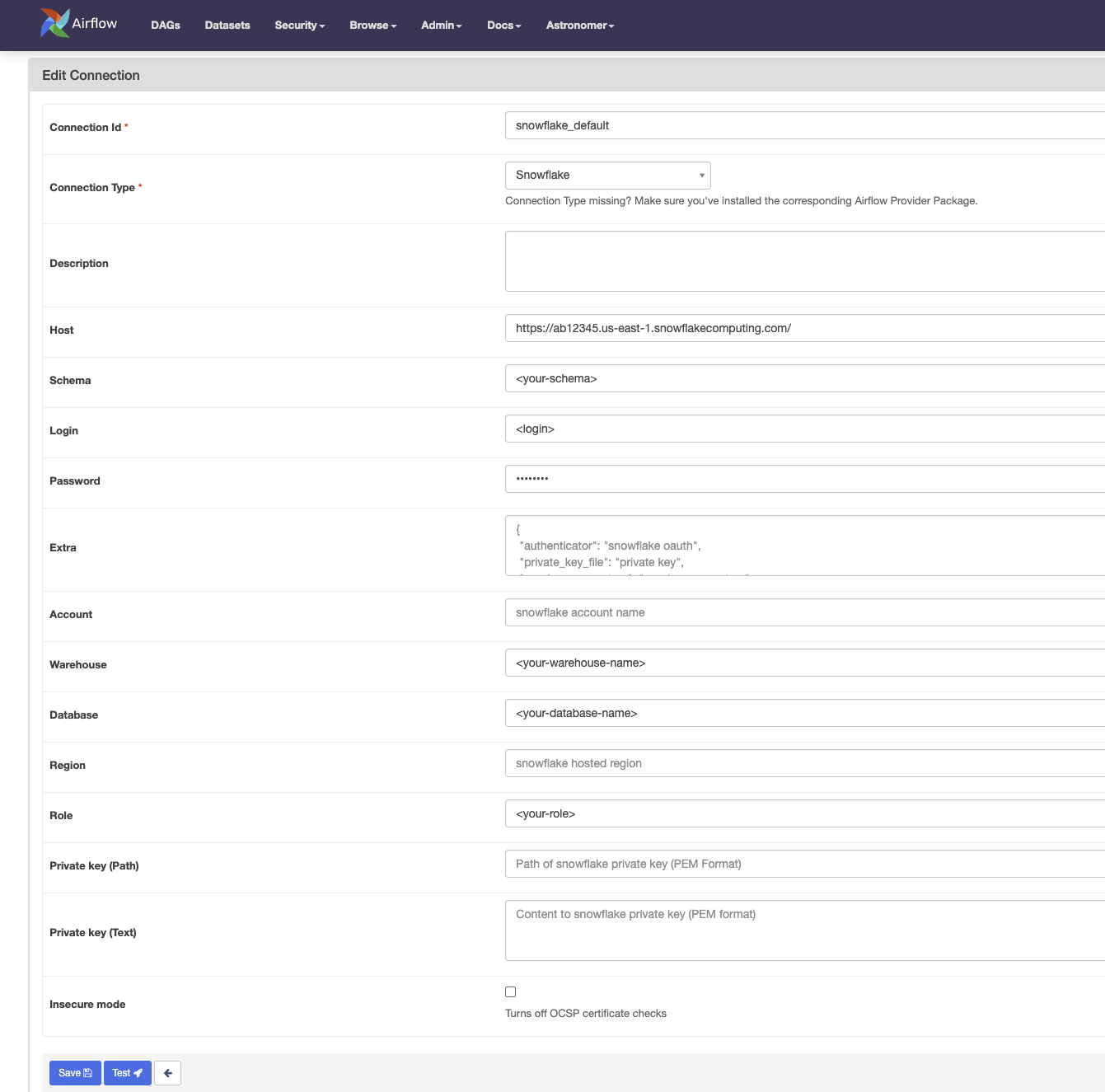

@dwreeves, I appreciate your patience while I wrap my head around this. I think this change would make sense, so in Airflow, your connection would look something like this, right: |

|

The So remove the Sorry for this PR being so confusing, but I know I can't be the only one who has my Snowflake Airflow connection set up to not use the region, so want to make sure this works trivially not just for me but for others as well. 😅 |

|

Turns out yes, we can remove the |

|

@dwreeves, good to know! I did what you suggested, and it worked! I did not realize that the However, I was able to break it (and by break, I mean the connection test in Airflow succeeds, but Cosmos cannot authenticate). It broke by putting So I added a couple of lines to handle that scenario! If you are cool with the commit, then I'll approve and merge, and we'll tag a new release so you can access these features. Additionally, thank you so much for your contributions! If you need anything else (or have ideas for improvements), don't hesitate to reach out or open issues. 🍻 |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Thanks, @dwreeves! Approved.

[Daniel Reeves](https://www.linkedin.com/in/daniel-reeves-27700545/) (@dwreeves ) is an experienced Open-Source Developer currently working as a Data Architect at Battery Ventures. He has significant experience with Apache Airflow, SQL, and Python and has contributed to many [OSS projects](https://github.com/dwreeve). Not only has he been using Cosmos since its early stages, but since January 2023, he has actively contributed to the project:  He has been a critical driver for the Cosmos 1.4 release, and some of his contributions include new features, bug fixes, and documentation improvements, including: * Creation of an Airflow plugin to render dbt docs: #737 * Support using dbt partial parsing file: #800 * Add more template fields to `DbtBaseOperator`: #786 * Add cancel on kill functionality: #101 * Make region optional in Snowflake profile mapping: #100 * Fix the dbt docs operator to not look for `graph.pickle`: #883 He thinks about the project long-term and proposes thorough solutions to problems faced by the community, as can be seen in Github tickets: * Introducing composability in the middle layer of Cosmos's API: #895 * Establish a general pattern for uploading artifacts to storage: #894 * Support `operator_arguments` injection at a node level: #881 One of Daniel's notable traits is his collaborative and supportive approach. He has actively engaged with users in the #airflow-dbt Slack channel, demonstrating his commitment to fostering a supportive community. We want to promote him as a Cosmos committer and maintainer for all these, recognising his constant efforts and achievements towards our community. Thank you very much, @dwreeves !

Snowflake allows and if anything seems to encourage not adding in the

regionparameter to a Snowflake connection. The reason is because Snowflake recently moved to a system where the "region" is no longer part of the URI.Under the hood, Airflow just passes to the Snowflake SQLAlchemy

URL(), and under the hood Snowflake'sURL()allows forregionto be optional.Fix

regionshould be allowed to be optional, basically. I wanted to do an approach that did not rely on magically trimming a trailing., so something that explicitly checks for when region is not specified.There were two approaches I could go with that meets the requirement: either fix it in Python, or fix it in

profiles/snowflake.py. I went with the former approach because doing lots of things in Jinja2 is usually harder to maintain than doing the equivalent in Python, but it would have looked like this:Pyproject.toml change

I also added

pytestto the project as it wasn't specified as a dependency, but thetests/folder uses it.