New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Simulation performance #961

Comments

|

Some results: Python2Python3 |

|

Which version of python 2 and 3? |

|

Python versions:

|

|

Could you possibly test with a newer Python 3 version, Python 3.7 being preferred? (Only to narrow down the problem a bit, we are still aiming for good performance in Python 3.5.) |

|

There is also an environment variable |

|

I supposed that i was using the same COCOTB but in one terminal i was inside a docker with an older cocotb. So, I rerun all the test with current and old commit and i got the following results: Current masterPython 3.7.3Python 2.7.3Old Cocotb commit: 2744c4fPython 2.7Python 3.5.3:SummaryThe performance doesn't depends on the python version. Cocotb suffered a loss of performance in the last year. Maibe it is expected, i don't know. I will do a the profiling and share the results. |

|

The simulated time differs between your test runs, so things are not fully deterministic. My observations

Can you confirm the latter two observations with a more deterministic test case? The first item would then be a documentation issue (suggest to people to use a modern Python version), while the second observation would require more investigation. It would be very helpful to have a deterministic test case and bisect the cocotb history between 2744c4f and master to figure out in which commit (if it is a single one) the performance regression was introduced. |

|

And no, the performance regression is not expected. |

|

Just one testcase has randomization (the testcase with the longest name), the others are deterministic. I can confirm both observations. These results are similar in all our tests (we have around 50 cores that are currently tested with cocotb). Currently i'm looking for a shorter test case without confidential information for sharing the profiling. |

|

As a hunch, #870 might be a good starting point to test before and after |

|

Using python3.7 Current mastercommit: 714e129 After

|

|

I'm searching the guilty commits with brute force: An script which executes and grep the performance for each commit. |

|

For the difference between the Python versions I've opened #965 to track the further investigation and implementation, leaving this bug to actually figure out the performance regression within cocotb. |

|

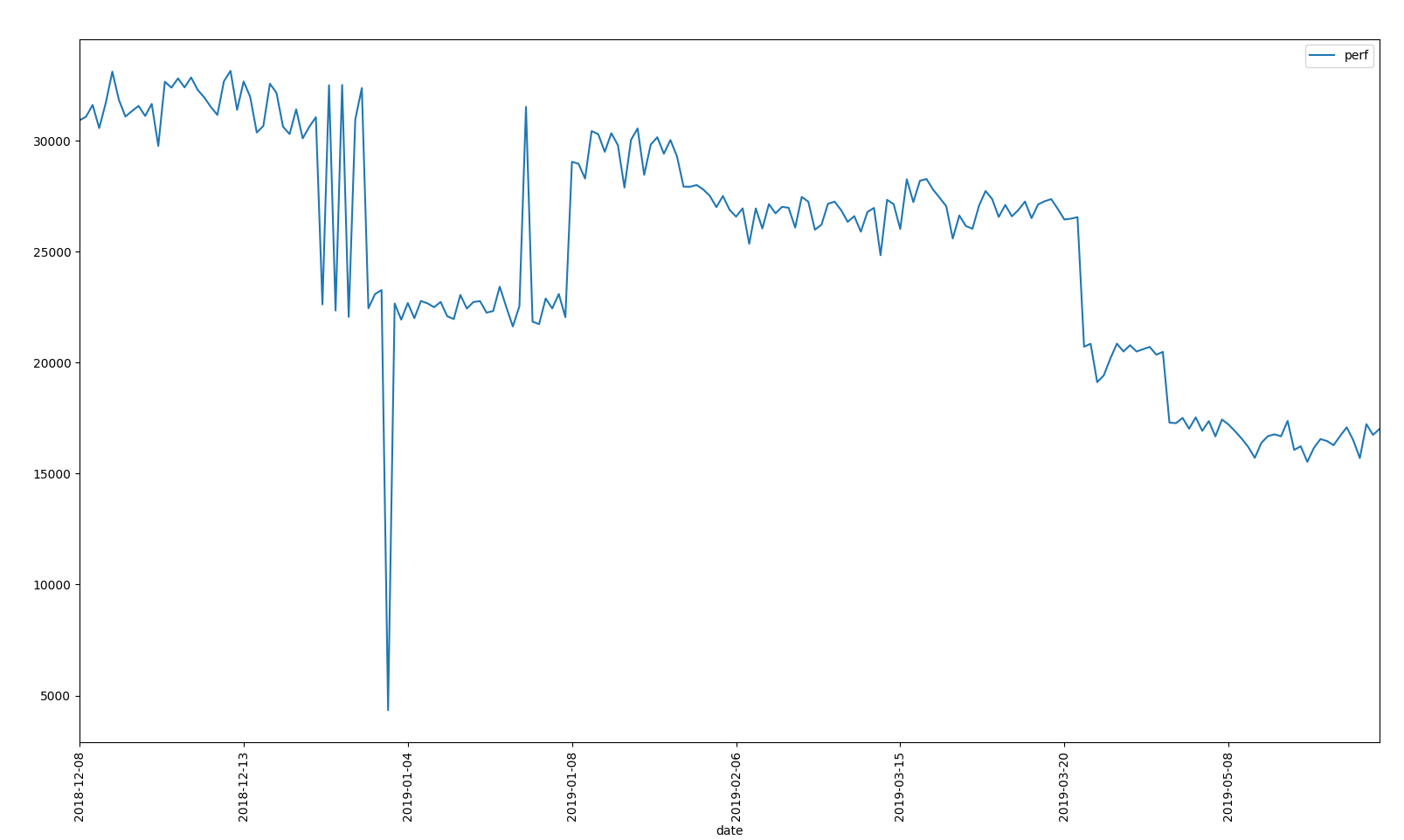

Here the results: CSV file with the results: The outlier near 2019-01-04 was my fault. I slept the process. As is shown in the image, the performance has a negative derivative along the time and 2/3 commits with higher performance loss: Of course, those results are relative to the test but i think they are a good estimation. |

|

What's the y axis on that graph? |

|

The Y axis is For having a better performane estimator, It should be normalized to the max |

|

It would be good to confirm that the sim time is the same across all commits, in case the cause of the increased runtime is something like "we fixed a bug where the test ended silently too soon" |

|

I checked that simtime is the same. . If you want i can share the scripts to recreate these results. The scripts are so ugly but useful. |

|

So, I've found one obvious performance cost already - a logger is created for every single coroutine, which for a very simple test-case of: Seems to spend 12% of it's time calling Some of the scheduler refactors replaced some special-casing with more coroutines. What I didn't realize is that the each mini coroutine creates and destroys a full logger instance, which is not cheap at all. |

|

Patch for that at #967. @andresdemski, please let me know how much that helps your more realistic suite. |

|

I reprocess all the commits but this time i recorded simtime. As is shown in the picture, simtime is constant |

|

I tried #967 . The performance is improved a 10% but still far from the best performance

|

|

What's the tool you used to produce those diagrams? |

|

The same command that it is in endian_swapper example $ make COCOTB_ENABLE_PROFILING=true

$ python3 -m gprof2dot -f pstats test_profile.pstat | dot -Tsvg -o profile.svg |

|

Any chance you could upload the svg version? |

From the investigation at cocotbgh-961, this is shown to be 3% of the runtime on master. The version of python does not change at runtime, so we can replace this function with a simple constant. Doing so also brings our name in line with six's.

|

Let's please keep the discussion in this issue focused on understanding and fixing the performance issues. |

|

I made a simple tool which allow us to do performance analysis in an easy way: https://github.com/andresdemski/cocotb-performance Terminal output: $ ./run_in_docker.sh python3 compare.py

sha256:7a4d132d15ca92f95b7f75b637c2c95b277bf7a3609918815f276bbe9fb8c5e4

url https://github.com/potentialventures/cocotb/

commit: master

path: /tmp/cocotb-master

results:

simtime: 142610.10 ns

realtime: 18.65s

performance: 7648.49 ns/s

url https://github.com/eric-wieser/cocotb/

commit: avoid-runtime-coroutine-creation

path: /tmp/cocotb-eric-wieser

results:

simtime: 142610.10 ns

realtime: 18.20s

performance: 7833.63 ns/s

url https://github.com/potentialventures/cocotb/

commit: 2744c4fec607bf598d677484e6c0290183360f7a

path: /tmp/cocotb-master

results:

simtime: 142610.10 ns

realtime: 15.53s

performance: 9182.79 ns/s |

|

@andresdemski now that all outstanding performance PRs have been merged, how close to 1.1 performance are we with current master? I'd like to use this information to make a judgement call if we can actually release master right now, or if we need to do further performance work. (A small performance regression is fine, a huge regression would be a bad thing.) |

|

If #764 (f692e8d) is really significant, it would be fairly straightforward to revert - most of the value in it was to provide the simplicity needed to refactor. The refactor has now happened, and the three |

|

Can you replot that graph with an origin of 0? |

|

So we're roughly 30 % slower than we were in the 1.1 release. |

|

Phrased another way, (that) simulation will take At the point this issue was opened, the simulation took 2.0 times longer |

|

Thanks @andresdemski for all the analysis runs, and @eric-wieser for the great work on performance patches! Are there any more patches upcoming in the next couple of days which target performance? (Otherwise I'm going to release 1.2 with what we have now, and we can build on top of that without time pressure.) |

|

@imphil: Came up with another one at #1012. Thinking back, I think #727 (and perhaps some of #723) might have been a performance mistake, and the cost of the weak dictionaries may not justify the minor gain of avoiding reference cycles. I don't really want to look at reverting those just yet. In the long term though, there's a lot of efficiency to be gained by eliminating internal uses of |

|

With these real-life testcases and current performance numbers, it may be worth re-investigating gcc options like in #178 (this would be set in |

Update 11/26/19No considerable changes since July. Profile |

|

Has your question been resolved? If so please close this issue. If it has not been resolved, you may need to provide more information. If no more activity on this issue occurs in 7 days, it will be closed. |

|

Maybe something more like a real case using https://github.com/alexforencich/verilog-axi/tree/master/tb/axil_ram |

Hi,

At work, i'm porting all the repositories to mainline cocotb with python3 and i'm seeing that the performance using python3 is ~4 times worse than python2 For executing the tests, i just changed the

PYTHON_BINenv,I can't share a testcase now, i will try to recreate this performance issue with a sharable test.

My environment:

Thanks

The text was updated successfully, but these errors were encountered: