
Second try for utf support. #532

base: master


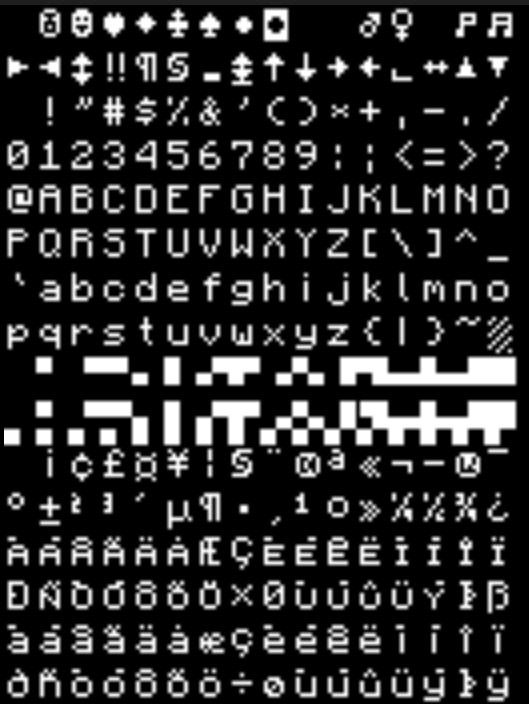

Resource pack for Unicode-Font: Sample lua script: Custom build including the patch: Extensions:

Known limitations/bugs:

Hope you enjoy it.


Finally got round to looking at this, sorry. It looks much nicer. 👍 Just a couple of questions/comments:

I'm rather confused about the whole font name implementation and the rationale behind it. It seems a little overkill: I'd personally just have two fonts (CC's default one and a Unicode-supporting one) and a flag to switch between the two. Supporting anything else introduces all sorts of issues if the server asks for a font the client doesn't have. I'd also remove any storage of fonts on the tile entity: the font should just be stored on the terminal object and configured on computer startup, not persisted across saves.

I'm a bit flaky about this. Most of Lua's IO system (and thus ComputerCraft's) rather assumes strings are nothing more than byte arrays. For instance, file handles' … always return strings, even in binary mode. I'd rather expose something like Lua 5.3's …

I think it's also worth noting that indexing strings is now O(n) instead of O(1), so we might want to review how the …

It's worth noting that I'm just spitballing here. It's probably worth waiting for Dan to comment before making any major changes, as he's the only one with authority round here :). Other people: please chip in! I know I'm absolutely awful at API design.

Edit: And another thing (sorry.
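To make the O(1) vs O(n) point concrete, here is a minimal Python sketch (Python is used for illustration only; ComputerCraft itself is Java and Lua). It treats a Lua string as a plain byte array holding UTF-8 and finds where the n-th code point starts by scanning from the beginning, similar in spirit to Lua 5.3's `utf8.offset`. The helper name `codepoint_byte_offset` is hypothetical.

```python
def codepoint_byte_offset(data: bytes, n: int) -> int:
    """Return the 0-based byte index where the n-th (1-based) code point starts.

    The buffer is scanned from the start, so the cost grows with n (O(n)),
    unlike O(1) indexing into a plain byte string.
    """
    count = 0
    for index, byte in enumerate(data):
        # UTF-8 continuation bytes look like 0b10xxxxxx; any other byte
        # begins a new code point.
        if (byte & 0xC0) != 0x80:
            count += 1
            if count == n:
                return index
    raise IndexError("code point index out of range")


text = "Grüße 😀".encode("utf-8")
print(len(text))                       # 12 bytes
print(len(text.decode("utf-8")))       # 7 code points
print(codepoint_byte_offset(text, 3))  # 2: "ü" starts at byte 2
print(codepoint_byte_offset(text, 7))  # 8: the emoji starts at byte 8
```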


Yes. But that assumes the client has a known font set. Because of licensing problems we do not know which font the client wants to use. And maybe someone wants to use multiple fonts on different monitors, e.g. a monospaced adventure font to display a "film", etc.

I'll have a closer look at it. The saveDescription is used to distribute the font to clients via messages, which is why I chose it. I will review it; it is not meant to be stored persistently at all.

This assumes a convention where all API developers and program developers always use this utf library. The main problem is the blit methods in terminals: they assume that the color strings are the same length as the given text.
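A minimal sketch of the blit problem, assuming text is passed as UTF-8 bytes and the color strings hold one hex digit per cell: a byte-length check rejects "Grün" with four color digits, while a hypothetical UTF-8-aware check that counts code points accepts it. Both function names are made up for illustration.

```python
def blit_lengths_match(text: bytes, fg: bytes, bg: bytes) -> bool:
    """Byte-oriented check: one color digit per byte of text."""
    return len(text) == len(fg) == len(bg)


def blit_lengths_match_utf8(text: bytes, fg: bytes, bg: bytes) -> bool:
    """Hypothetical UTF-8-aware check: one color digit per code point."""
    cells = len(text.decode("utf-8"))
    return cells == len(fg) == len(bg)


label = "Grün".encode("utf-8")  # 4 visible characters, 5 bytes
fg, bg = b"0000", b"ffff"       # one hex color digit per visible character

print(blit_lengths_match(label, fg, bg))       # False
print(blit_lengths_match_utf8(label, fg, bg))  # True
```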

Three aspects:

I modified build.gradle to include the LuaJ source folders, removed the LuaJ jar from libs and instead added the libraries LuaJ requires to the libs folder. It does not use the ant build at all.

I will review it. But why is this a problem at all? If a script really wants to send binary values over events this way, it runs into trouble even with older versions of the library: they eat the values and send '?', if I remember correctly. So one never gets the expected result anyway, right?
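The '?' substitution described above can be modelled roughly as follows. This is a hypothetical sketch, not the actual ComputerCraft/LuaJ code: it assumes the bridge maps each Java `char` (a UTF-16 code unit) to one byte and replaces anything above 255 with '?', so under that assumption a character outside the BMP such as U+1F600 comes out as two question marks.

```python
def java_to_lua_string(s: str) -> bytes:
    """Hypothetical model of the legacy bridge: one byte per Java char.

    Java strings are sequences of UTF-16 code units, so the string is first
    split into 16-bit units; any unit above 255 becomes '?'.
    """
    raw = s.encode("utf-16-le")
    units = [int.from_bytes(raw[i:i + 2], "little") for i in range(0, len(raw), 2)]
    return bytes(unit if unit < 256 else ord("?") for unit in units)


print(java_to_lua_string("ü"))   # b'\xfc'
print(java_to_lua_string("€"))   # b'?'
print(java_to_lua_string("😀"))  # b'??' (a surrogate pair is two units)
```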


I agree with squid with regard to the Lua 5.3 …


But what about the existing input methods like the char or paste events? They work with normal strings. If utf8 is only an API, it would not be possible to integrate new UTF-8 characters into these events.


A


To sum up:

That may work. I will have to think for a while about whether we get into trouble. I am still worried about what happens if an "old script" gets utf8 strings (wherever they come from) and tries to print them; it won't be fully backward compatible. It is still an open question what to do about multi-char sequences: do we rely on Java strings, or do we "fully support" utf8 and even treat multi-char sequences as a single character?
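On the multi-char question, the same text has different lengths depending on what is counted. A small Python sketch comparing UTF-8 bytes (what a byte-oriented Lua string sees), UTF-16 code units (what Java's `String.length()` returns) and code points (what Lua 5.3's `utf8.len` counts). Neither code units nor code points match user-perceived characters for combining sequences such as "e" followed by U+0301. The `lengths` helper is illustrative.

```python
def lengths(s: str) -> dict:
    """Three ways of measuring the 'length' of the same text."""
    return {
        "utf8_bytes": len(s.encode("utf-8")),            # byte-oriented Lua string
        "utf16_units": len(s.encode("utf-16-le")) // 2,  # Java String.length()
        "code_points": len(s),                           # Lua 5.3 utf8.len
    }


print(lengths("ü"))        # 2 bytes, 1 unit, 1 code point
print(lengths("😀"))       # 4 bytes, 2 units, 1 code point
print(lengths("e\u0301"))  # 3 bytes, 2 units, 2 code points, 1 visible glyph
```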


It's starting to sound like those functions would fit in their own API rather than piecemeal across all the APIs.


Why? At least term/window/monitor are objects. Putting their utf methods in some other place would be confusing.


I missed point 2 somehow.


Ah OK :-) The string and the new utf8 APIs are defined by the Lua standard itself, so we should indeed follow it. That makes them easier to document, and easier for users to adapt existing UTF-aware scripts and code samples ;-)


You could arguably have something like … With respect to events, one shouldn't need special Unicode variants of …

This shouldn't be as much of a problem, as they should just be printed using CC's Latin-1 codepage instead of the full Unicode one. It may result in some funky output, but it won't be fundamentally broken per se.
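A sketch of that "funky output", assuming the legacy renderer draws one glyph per byte and maps bytes as ISO-8859-1 (CC's actual font may differ above 0x7F): the two UTF-8 bytes of "ü" show up as "Ã¼", while plain ASCII is unaffected. The `render_legacy` name is hypothetical.

```python
def render_legacy(data: bytes) -> str:
    """Assumed legacy renderer: one glyph per byte, ISO-8859-1 mapping."""
    return data.decode("latin-1")


utf8_text = "Grün".encode("utf-8")
print(render_legacy(utf8_text))       # GrÃ¼n: readable, but "ü" becomes two glyphs
print(render_legacy(b"plain ASCII"))  # ASCII text is unaffected
```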

Yes, but on the Java side we will receive a string with valid UTF characters. We still need the "hack" in the old terminal API on the Java side to replace the UTF codes with '?'. The newer UTF versions of the functions will use the Java strings without modification.


Wasn't the whole point of having two functions for all the utf8 stuff that the current ones would keep working with this charset and the utf8 ones would work with utf8?


Yes, of course. But think of the following simple script: local s = string.char(252) What do you expect? In the current ComputerCraft version you will get a "1" and a "ü", because of Dan's hacks.
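What `string.char(252)` yields, modelled in Python: a one-byte string whose length is 1 (what `#s` reports), which reads as "ü" only through a Latin-1 codepage and which is not valid UTF-8 on its own. By contrast, the UTF-8 encoding of "ü" is two bytes. The `is_valid_utf8` helper is illustrative.

```python
def is_valid_utf8(data: bytes) -> bool:
    """Illustrative helper: does this byte string decode as UTF-8?"""
    try:
        data.decode("utf-8")
        return True
    except UnicodeDecodeError:
        return False


s = bytes([252])                 # the equivalent of string.char(252)
print(len(s))                    # 1, as #s reports in Lua
print(s.decode("latin-1"))       # ü, when displayed through a Latin-1 codepage
print(is_valid_utf8(s))          # False: a lone 0xFC byte is not UTF-8
print(len("ü".encode("utf-8")))  # 2: the UTF-8 form of ü is two bytes
```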

It shouldn't. The original string library must still function as before, otherwise you're going to break any function which operates on binary data. Dan has always been very strict in his backwards-compatibility requirements and, whilst I think you can be more flexible here, breaking fundamental Lua semantics is not an option.


OK, I will try to find a solution. But it may not be very nice.


And now the third try. Now we are fully backward compatible! The custom build can be found here: And last but not least, a test: As you can see, it now even works if one function prints classic ASCII and another one prints UTF characters. See my current working list:


And yes, in the screenshot you can see a smiley, although not very nicely drawn (U+1F600, http://www.utf8-chartable.de/unicode-utf8-table.pl).


Judging by the changelog and images that's looking good! I'll have a play with it later tonight. Just a couple of nitpicky things and some questions:

I'd dump this (ha-ha) -

As someone in an English speaking country, I'd prefer to make this

Blughr. I'm stuck between "this is good for support" and "oh goodness, even more code". I haven't looked at the implementation, but I'd suggest copying the pattern matching and formatting code from Cobalt, as that's much less broken than LuaJ's implementation.

There's a bit of me which is reluctant to add more complexity to the file system and HTTP APIs, but I guess binary mode doesn't really cut it as it doesn't have

Sadly we still need to handle the old boolean values as well (I never imagined needing to extend this, sorry). I'd personally use magic strings ("b" for binary, "u" for unicode or something) instead of magic numbers.
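A hypothetical sketch of the suggested magic strings, assuming the legacy boolean `true` meant binary and `false`/absent meant text (the thread does not say which function or flag this is). It normalises both the old and new forms onto one internal mode and rejects anything else; `normalise_mode` is a made-up name.

```python
def normalise_mode(mode=None) -> str:
    """Hypothetical: map legacy boolean flags and new magic strings to one mode.

    Assumes the old boolean meant "binary" when true and "text" when false.
    """
    if mode is None or mode is False:
        return "text"
    if mode is True or mode == "b":
        return "binary"
    if mode == "u":
        return "unicode"
    raise ValueError(f"unsupported mode: {mode!r}")


print(normalise_mode())      # text
print(normalise_mode(True))  # binary (legacy flag)
print(normalise_mode("u"))   # unicode
```

Checking `is True`/`is False` rather than truthiness keeps stray values such as `0` or `""` from silently being treated as a legacy flag.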

I'm still blurghr about this, but there you go :P. Ideally we could have "one unified font" which looks consistent. It might be possible to fall back to Minecraft's built-in font renderer (or a fixed-width variant) for non-Latin-1 characters.

Is

And this is why you shouldn't rebase when tired! This is entirely my fault, but it didn't seem worth putting a PR together for something so minor.

Nope. As long as the encoding is consistent between the entry and exit points it shouldn't matter.
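A sketch of why a consistent encoding is enough: if the bridge maps every byte value 0 to 255 to the Java char with the same value (ISO-8859-1) and back, arbitrary UTF-8 bytes survive the round trip untouched. The function names are hypothetical and this is not the actual bridge code.

```python
def lua_to_java(data: bytes) -> str:
    """Hypothetical bridge: each byte 0-255 becomes the char with that value."""
    return data.decode("latin-1")


def java_to_lua(s: str) -> bytes:
    """Inverse mapping; only chars above 255 would fail to encode."""
    return s.encode("latin-1")


original = "Grüße 😀".encode("utf-8")
carried = lua_to_java(original)   # what the Java side would hold
restored = java_to_lua(carried)   # back on the Lua side

print(restored == original)       # True: the bytes survive unchanged
print(restored.decode("utf-8"))   # Grüße 😀

all_bytes = bytes(range(256))
print(java_to_lua(lua_to_java(all_bytes)) == all_bytes)  # True
```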

Hmmm. That would mean putting some kind of UTF logic and UTF strings inside the LuaJ code too. And I don't even know whether the LuaJ classes are available on the client (for the font renderer etc.). I will check this.

I don't mind whether they are globals or placed alongside the utf8 API. However, someone should make a decision. :-)

Hmmm. Maybe a solution with an optional locale parameter? As "lower" and "upper" are not part of the official Lua 5.3 utf8 API, we could add a custom function doing whatever we like. Again, someone has to make a decision.

If there are other custom utf8 APIs we should add, we can put them into the code. Hmmm. Someone has to make a decision ;-)

Yeah, that could be an alternative: treating binary streams with string reading functions. For the moment, to solve the issue with corrupt characters, I added those new modes.

I did not remove that ;-)

If that's the decision, I will change it :-)

I did not find any other. And GNU Unifont has an incompatible license. So there must be some system where we do not depend on installed fonts. It already falls back to the old font if the font name is not known by the client, resulting in '?' for every extended UTF character...
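The fallback described above can be sketched like this, assuming the legacy font covers code points 0 to 255 and each font known to the client is described by the set of code points it can draw. The font names and the `render` helper are hypothetical.

```python
LEGACY_COVERAGE = range(256)  # assumption: the old font covers code points 0-255


def render(text: str, font_name: str, installed: dict) -> str:
    """Hypothetical per-monitor rendering with a fallback to the legacy font.

    `installed` maps font names known to this client to the set of code points
    each font can draw; characters a font cannot draw are shown as '?'.
    """
    coverage = installed.get(font_name)
    if coverage is None:
        # Unknown font on this client: fall back to the legacy font.
        coverage = LEGACY_COVERAGE
    return "".join(ch if ord(ch) in coverage else "?" for ch in text)


installed = {"resource-pack-font": {ord(c) for c in "Grüß 😀"}}
print(render("Grüß 😀", "resource-pack-font", installed))  # Grüß 😀
print(render("Grüß 😀", "missing-font", installed))        # Grüß ?
```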

If we want to be fully backward compatible, we need it, because Dan's hacks corrupt the UTF characters. You can see it in the screenshot: the first and second prints give us 3 '?' characters. The ComputerCraft wiki says that ComputerCraft is compatible with utf8, but actually it isn't. Either we break compatibility so that "load" supports all UTF characters, or we have a second function "loadutf8". You will find some changes by Dan inside LuaJ that break the load function, or more concretely, that unintentionally change multi-byte UTF characters to '?'.

That was a TODO marker for me ;-) I have not reviewed it yet. I only found some string handling inside. I will have to check it over the next few days.

Dan's modifications should only matter when bridging between Java strings and Lua ones. I'm fairly sure the lexer, parser and compiler (and so I guess the … it's possible the parser may break on some multi-byte sequences, but short of creating an entire Unicode-aware copy of the parser, I don't think that's avoidable.

Yes, one really needs to get Dan's opinion on this, as it's really the only opinion that matters. Sadly he's not had the time/willpower to look at the repo lately :(

Yes, it works. But saving files in the IDE as UTF-8 and using the character unescaped does not work (see my example Lua script above, which does exactly this). Maybe not being able to read UTF-8 source files properly is even standard Lua behaviour, but it seems strange to me as a non-Lua evangelist.
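For comparison, a Python sketch of what Lua's `\ddd` decimal escapes produce: `"\195\188"` yields exactly the same two bytes as the raw UTF-8 encoding of "ü". So both forms describe identical byte strings, and any difference between them has to come from how the loader converts the source text. The helper is illustrative and handles only decimal escapes, not escaped backslashes or Lua's other escape sequences.

```python
import re

# Matches a Lua decimal escape: a backslash followed by one to three digits.
DECIMAL_ESCAPE = re.compile(r"\\([0-9]{1,3})")


def literal_to_bytes(body: str) -> bytes:
    """Sketch: bytes produced by the body of a Lua string literal.

    Only \\ddd decimal escapes are handled; all other characters are taken
    as source text saved in UTF-8. Escaped backslashes and other Lua escapes
    are deliberately not supported.
    """
    out = bytearray()
    pos = 0
    for match in DECIMAL_ESCAPE.finditer(body):
        out += body[pos:match.start()].encode("utf-8")
        value = int(match.group(1))
        if value > 255:
            raise ValueError("decimal escape too large")
        out.append(value)
        pos = match.end()
    out += body[pos:].encode("utf-8")
    return bytes(out)


escaped = literal_to_bytes(r"\195\188")  # "\195\188" in Lua source
raw = literal_to_bytes("ü")              # the character typed directly
print(escaped == raw)                    # True: both are b'\xc3\xbc'
```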

So the community has to decide and vote. Maybe everyone should vote on how many UTF functions are needed, where they reside and how they work. We will vote, and hopefully Dan shares our opinion in the end. I personally do not care where the functions reside as long as it works. As far as I can see, we basically require:

Can anyone contact Dan directly and ask him to share his opinion with us?
