Bark is a transformer-based text-to-speech model proposed by Suno AI in suno-ai/bark.

Bark is made of 4 main models:

- [BarkSemanticModel] (also referred to as the 'text' model): a causal auto-regressive transformer model that takes as input tokenized text, and predicts semantic text tokens that capture the meaning of the text.
- [BarkCoarseModel] (also referred to as the 'coarse acoustics' model): a causal autoregressive transformer, that takes as input the results of the [BarkSemanticModel] model. It aims at predicting the first two audio codebooks necessary for EnCodec.
- [BarkFineModel] (the 'fine acoustics' model), this time a non-causal autoencoder transformer, which iteratively predicts the last codebooks based on the sum of the previous codebooks embeddings.
- having predicted all the codebook channels from the [EncodecModel], Bark uses it to decode the output audio array.
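The data flow between the four stages can be sketched with a toy, dependency-free mock. This is only an illustration under stated assumptions: the function names, the total of 8 codebooks, the codebook size of 1024 and the one-frame-per-word rate are placeholders chosen for the sketch, and random integers stand in for real model predictions.

```python
import random
from typing import List

N_COARSE_CODEBOOKS = 2  # from the list above: the coarse model predicts the first two codebooks
N_TOTAL_CODEBOOKS = 8   # assumption for this sketch
CODEBOOK_SIZE = 1024    # assumption for this sketch


def semantic_stage(text: str, rng: random.Random) -> List[int]:
    """Stand-in for the 'text' model: one fake semantic token per word."""
    return [rng.randrange(CODEBOOK_SIZE) for _ in text.split()]


def coarse_stage(semantic_tokens: List[int], rng: random.Random) -> List[List[int]]:
    """Stand-in for the 'coarse acoustics' model: emits the first two codebooks."""
    n_frames = len(semantic_tokens)
    return [[rng.randrange(CODEBOOK_SIZE) for _ in range(n_frames)]
            for _ in range(N_COARSE_CODEBOOKS)]


def fine_stage(coarse: List[List[int]], rng: random.Random) -> List[List[int]]:
    """Stand-in for the 'fine acoustics' model: adds the remaining codebooks one
    at a time, each computed from the per-frame sum of the codebooks so far."""
    codebooks = [list(cb) for cb in coarse]
    n_frames = len(codebooks[0])
    while len(codebooks) < N_TOTAL_CODEBOOKS:
        summed = [sum(cb[t] for cb in codebooks) for t in range(n_frames)]
        codebooks.append([(s + rng.randrange(CODEBOOK_SIZE)) % CODEBOOK_SIZE
                          for s in summed])
    return codebooks


def decode_stage(codebooks: List[List[int]]) -> List[float]:
    """Stand-in for the EnCodec decoder: one fake sample in [-1, 1) per frame."""
    n_frames = len(codebooks[0])
    return [(sum(cb[t] for cb in codebooks) % CODEBOOK_SIZE) / (CODEBOOK_SIZE / 2) - 1.0
            for t in range(n_frames)]


rng = random.Random(0)
semantic = semantic_stage("Hello, my dog is cute", rng)
coarse = coarse_stage(semantic, rng)
fine = fine_stage(coarse, rng)
audio = decode_stage(fine)
print(len(semantic), len(coarse), len(fine), len(audio))  # 5 2 8 5
```

In the real model each stage is a transformer and the frame rates of the stages differ; the mock only mirrors the order of the stages and how the number of codebooks grows from two to the full set.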

Note that each of the first three modules can support conditional speaker embeddings, which condition the output sound on a specific predefined voice.

This model was contributed by Yoach Lacombe (ylacombe) and Sanchit Gandhi (sanchit-gandhi). The original code can be found here.

Bark can be optimized with just a few extra lines of code, which significantly reduces its memory footprint and accelerates inference.

You can speed up inference and reduce memory footprint by 50% simply by loading the model in half-precision.

from transformers import BarkModel

import torch

device = "cuda" if torch.cuda.is_available() else "cpu"

model = BarkModel.from_pretrained("suno/bark-small", torch_dtype=torch.float16).to(device)

As mentioned above, Bark is made up of 4 sub-models, which are called sequentially during audio generation. In other words, while one sub-model is in use, the other sub-models are idle.

If you're using a CUDA device, a simple way to reduce the memory footprint by 80% is to offload the sub-models from GPU to CPU when they're idle. This operation is called CPU offloading. You can enable it with one line of code:

model.enable_cpu_offload()

Note that 🤗 Accelerate must be installed before using this feature. Here's how to install it.
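The reasoning behind the two memory savings described so far (half precision and CPU offloading) can be checked with some rough arithmetic. The sketch below counts weights only, and the parameter counts are hypothetical placeholders rather than Bark's real sizes; activations, buffers and the details of how 🤗 Accelerate moves modules are ignored, so it will not reproduce the quoted 50% and 80% figures exactly.

```python
from typing import Dict

# Hypothetical parameter counts per sub-model (illustrative, not Bark's real sizes).
PARAMS = {
    "semantic": 300_000_000,
    "coarse_acoustics": 300_000_000,
    "fine_acoustics": 300_000_000,
    "codec": 15_000_000,
}
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def peak_weight_bytes(params: Dict[str, int], dtype: str, cpu_offload: bool) -> int:
    """Peak bytes of weights resident on the GPU while the sub-models run in sequence."""
    sizes = {name: count * BYTES_PER_PARAM[dtype] for name, count in params.items()}
    # Without offloading, every sub-model stays on the GPU for the whole run.
    resident = set() if cpu_offload else set(sizes)
    peak = sum(sizes[name] for name in resident)
    for active in sizes:  # the sub-models are called one after another
        if cpu_offload:
            resident = {active}  # only the active sub-model sits on the GPU
        peak = max(peak, sum(sizes[name] for name in resident))
    return peak


fp32 = peak_weight_bytes(PARAMS, "float32", cpu_offload=False)
fp16 = peak_weight_bytes(PARAMS, "float16", cpu_offload=False)
fp16_offload = peak_weight_bytes(PARAMS, "float16", cpu_offload=True)
print(fp16 / fp32)          # 0.5: two bytes per weight instead of four
print(fp16_offload / fp16)  # fraction left when only the largest sub-model is resident
```

With offloading, the peak is set by the largest single sub-model rather than by the sum of all four, which is why the saving grows with the number of idle sub-models.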

Better Transformer is an 🤗 Optimum feature that performs kernel fusion under the hood. You can gain 20% to 30% in speed with zero performance degradation. It only requires one line of code to export the model to 🤗 Better Transformer:

model = model.to_bettertransformer()

Note that 🤗 Optimum must be installed before using this feature. Here's how to install it.

Flash Attention 2 is an even faster, optimized version of the previous optimization.

First, check whether your hardware is compatible with Flash Attention 2. The latest list of compatible hardware can be found in the official documentation. If your hardware is not compatible with Flash Attention 2, you can still benefit from attention kernel optimisations through Better Transformer support covered above.

Next, install the latest version of Flash Attention 2:

pip install -U flash-attn --no-build-isolationTo load a model using Flash Attention 2, we can pass the attn_implementation="flash_attention_2" flag to .from_pretrained. We'll also load the model in half-precision (e.g. torch.float16), since it results in almost no degradation to audio quality but significantly lower memory usage and faster inference:

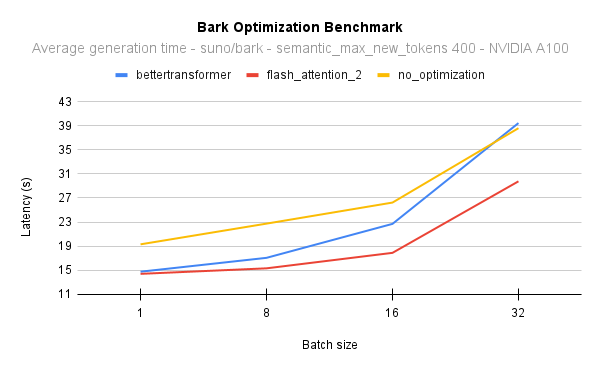

model = BarkModel.from_pretrained("suno/bark-small", torch_dtype=torch.float16, attn_implementation="flash_attention_2").to(device)The following diagram shows the latency for the native attention implementation (no optimisation) against Better Transformer and Flash Attention 2. In all cases, we generate 400 semantic tokens on a 40GB A100 GPU with PyTorch 2.1. Flash Attention 2 is also consistently faster than Better Transformer, and its performance improves even more as batch sizes increase:

To put this into perspective, on an NVIDIA A100 and when generating 400 semantic tokens with a batch size of 16, you can get 17 times the throughput and still be 2 seconds faster than generating sentences one by one with the native model implementation. In other words, all the samples will be generated 17 times faster.

At batch size 8, on an NVIDIA A100, Flash Attention 2 is also 10% faster than Better Transformer, and at batch size 16, 25%.
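The relationship between the "17 times the throughput" and "2 seconds faster" statements above can be made concrete with a little arithmetic. The latencies below are hypothetical values picked only so that the numbers line up with those two statements; they are not measurements from the benchmark.

```python
def throughput_gain(batch_size: int, single_latency_s: float, batched_latency_s: float) -> float:
    """Ratio of samples per second when batching versus generating one sample at a time."""
    # batched throughput = batch_size / batched_latency_s
    # single throughput  = 1 / single_latency_s
    return batch_size * single_latency_s / batched_latency_s


# Hypothetical latencies (not measured): chosen to be consistent with the text above.
single_latency_s = 34.0   # one sentence with the native implementation
batched_latency_s = 32.0  # a batch of 16 sentences with Flash Attention 2

gain = throughput_gain(16, single_latency_s, batched_latency_s)
saved_s = single_latency_s - batched_latency_s
print(gain, saved_s)  # 17.0 2.0
```

A gain above the batch size is only possible when the whole batch finishes sooner than a single unbatched sample, which is what the two statements say together.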

You can combine optimization techniques, and use CPU offload, half-precision and Flash Attention 2 (or 🤗 Better Transformer) all at once.

from transformers import BarkModel

import torch

device = "cuda" if torch.cuda.is_available() else "cpu"

# load in fp16 and use Flash Attention 2

model = BarkModel.from_pretrained("suno/bark-small", torch_dtype=torch.float16, attn_implementation="flash_attention_2").to(device)

# enable CPU offload

model.enable_cpu_offload()

Find out more on inference optimization techniques here.
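When the same script has to run on machines with and without Flash Attention 2, the choice of attention implementation can be made at load time. The helper names below are hypothetical and the check is deliberately coarse: it only tests for a CUDA device and an importable `flash_attn` package, and does not verify that the GPU is on the list of compatible hardware. It falls back to `"eager"`, the native attention implementation.

```python
import importlib.util


def flash_attn_is_installed() -> bool:
    """True if the flash-attn package can be imported in this environment."""
    return importlib.util.find_spec("flash_attn") is not None


def choose_attn_implementation(cuda_available: bool, flash_attn_installed: bool) -> str:
    """Pick a value for the attn_implementation argument of from_pretrained."""
    if cuda_available and flash_attn_installed:
        return "flash_attention_2"
    return "eager"  # native attention, no extra dependency


# Example: a CUDA machine without flash-attn falls back to native attention.
print(choose_attn_implementation(cuda_available=True, flash_attn_installed=False))  # eager
```

The returned string could then be passed as `attn_implementation=` in the `BarkModel.from_pretrained` call shown above, with `torch.cuda.is_available()` and `flash_attn_is_installed()` supplying the two flags.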

Suno offers a library of voice presets in a number of languages here. These presets are also uploaded in the hub here or here.

>>> from transformers import AutoProcessor, BarkModel

>>> processor = AutoProcessor.from_pretrained("suno/bark")

>>> model = BarkModel.from_pretrained("suno/bark")

>>> voice_preset = "v2/en_speaker_6"

>>> inputs = processor("Hello, my dog is cute", voice_preset=voice_preset)

>>> audio_array = model.generate(**inputs)

>>> audio_array = audio_array.cpu().numpy().squeeze()

Bark can generate highly realistic, multilingual speech as well as other audio - including music, background noise and simple sound effects.

>>> # Multilingual speech - simplified Chinese

>>> inputs = processor("惊人的!我会说中文")

>>> # Multilingual speech - French - let's use a voice_preset as well

>>> inputs = processor("Incroyable! Je peux générer du son.", voice_preset="fr_speaker_5")

>>> # Bark can also generate music. You can help it out by adding music notes around your lyrics.

>>> inputs = processor("♪ Hello, my dog is cute ♪")

>>> audio_array = model.generate(**inputs)

>>> audio_array = audio_array.cpu().numpy().squeeze()

The model can also produce nonverbal communications like laughing, sighing and crying.

>>> # Adding non-speech cues to the input text

>>> inputs = processor("Hello uh ... [clears throat], my dog is cute [laughter]")

>>> audio_array = model.generate(**inputs)

>>> audio_array = audio_array.cpu().numpy().squeeze()

To save the audio, take the sample rate from the model's generation config and write the array to disk with a scipy utility:

>>> from scipy.io.wavfile import write as write_wav

>>> # save audio to disk, but first take the sample rate from the model's generation config

>>> sample_rate = model.generation_config.sample_rate

>>> write_wav("bark_generation.wav", sample_rate, audio_array)

[[autodoc]] BarkConfig - all

[[autodoc]] BarkProcessor - all - call

[[autodoc]] BarkModel - generate - enable_cpu_offload

[[autodoc]] BarkSemanticModel - forward

[[autodoc]] BarkCoarseModel - forward

[[autodoc]] BarkFineModel - forward

[[autodoc]] BarkCausalModel - forward

[[autodoc]] BarkCoarseConfig - all

[[autodoc]] BarkFineConfig - all

[[autodoc]] BarkSemanticConfig - all