This model is in maintenance mode only; we don't accept any new PRs changing its code.

If you run into any issues running this model, please reinstall the last version that supported this model: v4.40.2.

You can do so by running the following command: `pip install -U transformers==4.40.2`.

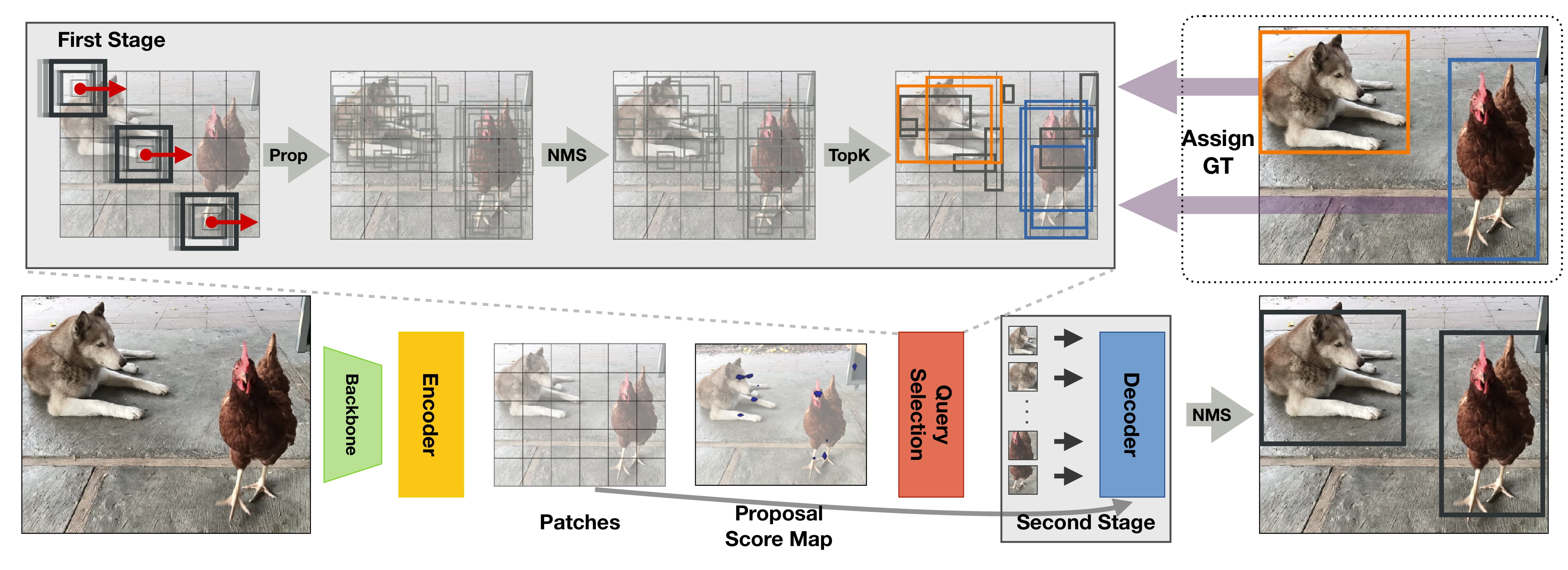

The DETA model was proposed in NMS Strikes Back by Jeffrey Ouyang-Zhang, Jang Hyun Cho, Xingyi Zhou, Philipp Krähenbühl. DETA (short for Detection Transformers with Assignment) improves Deformable DETR by replacing the one-to-one bipartite Hungarian matching loss with one-to-many label assignments used in traditional detectors with non-maximum suppression (NMS). This leads to significant gains of up to 2.5 mAP.
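To make the contrast with one-to-one matching concrete, here is a minimal pure-Python sketch of the two ingredients named above: IoU-based one-to-many label assignment at training time and NMS at inference time. The function names (`iou`, `assign_one_to_many`, `nms`) and the 0.5 thresholds are illustrative assumptions, not the paper's or the library's implementation, and the actual DETA assignment rules may differ in their details.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def assign_one_to_many(
    proposals: Sequence[Box], gt_boxes: Sequence[Box], iou_threshold: float = 0.5
) -> List[int]:
    """Give each proposal the index of its best-overlapping ground-truth box.

    Several proposals may share one ground-truth box (one-to-many).
    Proposals whose best IoU is below the threshold get -1 (background).
    """
    assignments = []
    for proposal in proposals:
        best_idx, best_iou = -1, 0.0
        for j, gt in enumerate(gt_boxes):
            overlap = iou(proposal, gt)
            if overlap > best_iou:
                best_idx, best_iou = j, overlap
        assignments.append(best_idx if best_iou >= iou_threshold else -1)
    return assignments


def nms(boxes: Sequence[Box], scores: Sequence[float], iou_threshold: float = 0.5) -> List[int]:
    """Greedy non-maximum suppression; returns indices of the boxes to keep."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with a higher-scoring kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


# Toy data (made up for illustration): two proposals cover the same object.
gt_boxes = [(0.0, 0.0, 10.0, 10.0)]
proposals = [(0.0, 0.0, 10.0, 10.0), (1.0, 1.0, 10.0, 10.0), (20.0, 20.0, 30.0, 30.0)]
scores = [0.9, 0.8, 0.7]

print(assign_one_to_many(proposals, gt_boxes))  # both overlapping proposals map to gt 0
print(nms(proposals, scores))  # the lower-scoring duplicate is suppressed
```

Because several proposals can be trained as positives for the same object, duplicate predictions are expected at inference time, which is why a suppression step such as NMS is needed afterwards.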

The abstract from the paper is the following:

Detection Transformer (DETR) directly transforms queries to unique objects by using one-to-one bipartite matching during training and enables end-to-end object detection. Recently, these models have surpassed traditional detectors on COCO with undeniable elegance. However, they differ from traditional detectors in multiple designs, including model architecture and training schedules, and thus the effectiveness of one-to-one matching is not fully understood. In this work, we conduct a strict comparison between the one-to-one Hungarian matching in DETRs and the one-to-many label assignments in traditional detectors with non-maximum supervision (NMS). Surprisingly, we observe one-to-many assignments with NMS consistently outperform standard one-to-one matching under the same setting, with a significant gain of up to 2.5 mAP. Our detector that trains Deformable-DETR with traditional IoU-based label assignment achieved 50.2 COCO mAP within 12 epochs (1x schedule) with ResNet50 backbone, outperforming all existing traditional or transformer-based detectors in this setting. On multiple datasets, schedules, and architectures, we consistently show bipartite matching is unnecessary for performant detection transformers. Furthermore, we attribute the success of detection transformers to their expressive transformer architecture.

DETA overview. Taken from the original paper.

This model was contributed by nielsr. The original code can be found here.

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with DETA.

- Demo notebooks for DETA can be found here.

- Scripts for finetuning [`DetaForObjectDetection`] with [`Trainer`] or Accelerate can be found here.
- See also: Object detection task guide.

If you're interested in submitting a resource to be included here, please feel free to open a Pull Request and we'll review it! The resource should ideally demonstrate something new instead of duplicating an existing resource.
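As a starting point, the following is a hedged sketch of running inference with `DetaForObjectDetection` and converting the raw outputs with `post_process_object_detection`. It assumes `transformers==4.40.2`, `torch`, and `torchvision` are installed. The checkpoint name `jozhang97/deta-swin-large` is an assumption not taken from this page, and `format_detections` and `detect_objects` are hypothetical helper names introduced only for this example.

```python
from typing import Dict, List, Sequence


def format_detections(
    scores: Sequence[float],
    labels: Sequence[int],
    boxes: Sequence[Sequence[float]],
    id2label: Dict[int, str],
) -> List[str]:
    """Turn parallel score/label/box sequences into readable lines (hypothetical helper)."""
    lines = []
    for score, label, box in zip(scores, labels, boxes):
        rounded = [round(float(v), 1) for v in box]
        lines.append(f"{id2label[int(label)]}: {float(score):.2f} at {rounded}")
    return lines


def detect_objects(
    image,
    checkpoint: str = "jozhang97/deta-swin-large",  # assumed checkpoint name
    threshold: float = 0.5,
) -> List[str]:
    """Run DETA on a PIL image and return one formatted line per detection."""
    # Heavy imports are kept local so format_detections stays usable without them.
    import torch
    from transformers import AutoImageProcessor, DetaForObjectDetection

    image_processor = AutoImageProcessor.from_pretrained(checkpoint)
    model = DetaForObjectDetection.from_pretrained(checkpoint)

    inputs = image_processor(images=image, return_tensors="pt")
    with torch.no_grad():
        outputs = model(**inputs)

    # PIL reports (width, height); post-processing expects (height, width).
    target_sizes = torch.tensor([image.size[::-1]])
    result = image_processor.post_process_object_detection(
        outputs, threshold=threshold, target_sizes=target_sizes
    )[0]
    return format_detections(
        result["scores"].tolist(),
        result["labels"].tolist(),
        result["boxes"].tolist(),
        model.config.id2label,
    )
```

Calling `detect_objects(Image.open("photo.jpg"))` with a PIL image (the file name here is a placeholder) downloads the checkpoint on first use and returns one line per detection above the score threshold.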

[[autodoc]] DetaConfig

[[autodoc]] DetaImageProcessor
    - preprocess
    - post_process_object_detection

[[autodoc]] DetaModel
    - forward

[[autodoc]] DetaForObjectDetection
    - forward