To warn, alert, or notify.


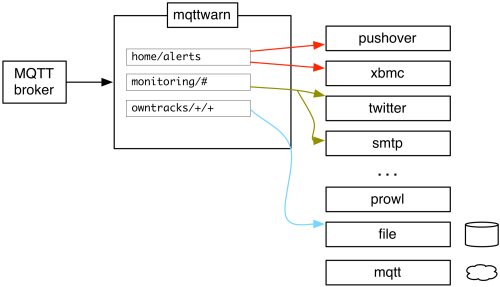

mqttwarn - subscribe to MQTT topics and notify pluggable services.

mqttwarn subscribes to any number of MQTT topics and publishes received payloads to one or more notification services after optionally applying sophisticated transformations.
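The following is a minimal, self-contained Python sketch of the subscribe, transform, and notify flow. It is not mqttwarn's implementation. It skips the MQTT connection entirely, and the names topic_matches, dispatch, and the routes table are hypothetical. It shows three things: how MQTT wildcard subscriptions (+ for exactly one level, # for the remaining levels) can select a route, how fields from a JSON payload can be exposed to a format string, and how the formatted message can be handed to handlers that stand in for notification services.

```python
import json
from typing import Callable, Dict, List, Tuple

# Hypothetical handler type: receives the formatted message (stand-in for a service).
Handler = Callable[[str], None]


def topic_matches(subscription: str, topic: str) -> bool:
    """Return True if `topic` matches the MQTT topic filter `subscription`.

    `+` matches exactly one level; `#` matches the parent level and any
    number of levels below it. Simplified: `$`-prefixed topics are not special-cased.
    """
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(sub_levels):
        if level == "#":
            return True
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)


def dispatch(topic: str, payload: bytes,
             routes: Dict[str, Tuple[str, List[Handler]]]) -> List[str]:
    """Decode a payload, format it for every matching route, and call its handlers."""
    data = {"topic": topic, "payload": payload.decode("utf-8")}
    try:
        decoded = json.loads(data["payload"])
    except json.JSONDecodeError:
        decoded = None
    if isinstance(decoded, dict):
        # Expose JSON fields to the format string, e.g. "{room}".
        data.update(decoded)

    messages = []
    for subscription, (fmt, handlers) in routes.items():
        if topic_matches(subscription, topic):
            message = fmt.format(**data)
            for handler in handlers:
                handler(message)
            messages.append(message)
    return messages


# Usage: route a temperature topic to an in-memory "service".
sent: List[str] = []
routes = {"sensors/+/temperature": ("{room} is at {value} degrees", [sent.append])}
dispatch("sensors/kitchen/temperature", b'{"room": "kitchen", "value": 21.5}', routes)
print(sent)  # ['kitchen is at 21.5 degrees']
```

In mqttwarn itself, routes, formats, and targets come from the configuration file, and the handlers are its service plugins.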

A picture says a thousand words.

mqttwarn comes with over 70 notification handler plugins covering a wide range of notification services, and the project is very open to further contributions. You can browse the alphabetical list of plugins on the mqttwarn notifier catalog page.

On top of that, it integrates with the excellent Apprise notification library. The Apprise notification services page has a complete list of the 80+ notification services supported by Apprise.

The mqttwarn documentation is the right place to read all about mqttwarn's features and integrations, and about how you can leverage its framework components for building custom applications.

Synopsis:

pip install --upgrade mqttwarn

You can also add support for a specific service plugin:

pip install --upgrade 'mqttwarn[xmpp]'

You can also add support for multiple services, all at once:

pip install --upgrade 'mqttwarn[apprise,asterisk,nsca,desktopnotify,tootpaste,xmpp]'

See also: Installing mqttwarn with pip.

For running mqttwarn on container infrastructure like Docker or Kubernetes, corresponding images are automatically published to the GitHub Container Registry (GHCR).

- ghcr.io/mqtt-tools/mqttwarn-standard:latest
- ghcr.io/mqtt-tools/mqttwarn-full:latest

To learn more about this topic, please read the documentation section "Using the OCI image with Docker or Podman".

In order to learn how to configure mqttwarn, please head over to the documentation section about the mqttwarn configuration.
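As a rough orientation before reading the configuration docs, the sketch below parses a small INI file with Python's standard configparser. The sample is modeled on mqttwarn's documented layout: a [defaults] section with a launch list of services, one [config:&lt;service&gt;] section per service holding a targets dictionary, and topic sections whose targets entries name service:target pairs. The resolve_targets helper is hypothetical and this is a simplified reading of the format, not mqttwarn's loader; the configuration documentation is authoritative.

```python
import ast
import configparser

# Illustrative sample modeled on mqttwarn's configuration examples.
CONFIG = """
[defaults]
hostname = 'localhost'
port = 1883
launch = file, log

[config:file]
append_newline = True
targets = {
    'mylog': ['/tmp/mqtt.log']
    }

[config:log]
targets = {
    'info': ['info']
    }

[test/+]
targets = file:mylog, log:info
"""


def resolve_targets(config, section):
    """Hypothetical helper: resolve a topic section's service:target list to addresses."""
    resolved = []
    for entry in config.get(section, "targets").split(","):
        service, target = entry.strip().split(":", 1)
        # Each [config:<service>] section holds a Python dict literal of targets.
        service_targets = ast.literal_eval(config.get(f"config:{service}", "targets"))
        resolved.append((service, target, service_targets[target]))
    return resolved


config = configparser.ConfigParser()
config.read_string(CONFIG)

launched = [name.strip() for name in config.get("defaults", "launch").split(",")]
topic_sections = [s for s in config.sections()
                  if s != "defaults" and not s.startswith("config:")]

print(launched)  # ['file', 'log']
for section in topic_sections:
    print(section, resolve_targets(config, section))
# test/+ [('file', 'mylog', ['/tmp/mqtt.log']), ('log', 'info', ['info'])]
```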

Just launch mqttwarn:

```shell
# Run mqttwarn
mqttwarn
```

To supply a different configuration file or log file, optionally use:

```shell
# Define configuration file
export MQTTWARNINI=/etc/mqttwarn/acme.ini

# Define log file
export MQTTWARNLOG=/var/log/mqttwarn.log

# Run mqttwarn
mqttwarn
```
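When mqttwarn is started from another Python program rather than from a shell, the same MQTTWARNINI and MQTTWARNLOG variables can be passed through the child process environment. This is a minimal sketch, assuming the mqttwarn command is installed and on PATH. The helper names mqttwarn_env and launch_mqttwarn are hypothetical.

```python
import os
import shutil
import subprocess
from typing import Dict, Mapping, Optional


def mqttwarn_env(ini_path: str, log_path: str,
                 base: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Copy a base environment and point mqttwarn at a config file and a log file."""
    env = dict(os.environ if base is None else base)
    env["MQTTWARNINI"] = ini_path
    env["MQTTWARNLOG"] = log_path
    return env


def launch_mqttwarn(ini_path: str, log_path: str) -> subprocess.Popen:
    """Start the mqttwarn command as a background process with the given files."""
    executable = shutil.which("mqttwarn")
    if executable is None:
        raise FileNotFoundError("mqttwarn is not on PATH; install it with pip first")
    return subprocess.Popen([executable], env=mqttwarn_env(ini_path, log_path))


# Usage (requires mqttwarn to be installed):
# process = launch_mqttwarn("/etc/mqttwarn/acme.ini", "/var/log/mqttwarn.log")
# ...
# process.terminate()
```

Copying the environment instead of replacing it keeps variables like PATH intact for the child process.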

There are different ways to run mqttwarn as a system daemon. The etc directory of this repository contains examples for systemd, traditional init, OpenRC, and Supervisor, such as supervisor.ini (Supervisor) and mqttwarn.service (systemd).

In order to directly invoke notification plugins from custom programs, or for debugging them, see Running notification plugins standalone.
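To illustrate what "invoking a plugin directly" involves, here is a sketch of a service plugin and a direct call to it. mqttwarn's service plugins are modules exposing a function plugin(srv, item) that returns True on success and False on failure, where item carries the target's addresses (item.addrs) and the message (item.message). Everything else below is an assumption: the in-memory "memory" service is hypothetical, and the SimpleNamespace objects are stand-ins, not mqttwarn's real srv and item classes. Use the documentation section above for the supported way of running real plugins.

```python
import logging
from types import SimpleNamespace


def plugin(srv, item):
    """Hypothetical service plugin: append the message to an in-memory list.

    item.addrs holds the target's addresses; returning True signals success.
    """
    srv.logging.debug("service=%s, target=%s", item.service, item.target)
    outbox = item.addrs[0]
    try:
        outbox.append(item.message)
    except AttributeError:
        srv.logging.error("Target address is not a list: %r", outbox)
        return False
    return True


# Stand-ins for the objects mqttwarn would pass in (assumed shape).
srv = SimpleNamespace(logging=logging.getLogger("plugin-sketch"))
outbox = []
item = SimpleNamespace(service="memory", target="inbox", addrs=[outbox], message="Hello world")

ok = plugin(srv, item)
print(ok, outbox)  # True ['Hello world']
```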

For hacking on mqttwarn, please install it in development mode, using a mqttwarn development sandbox installation.

These links will guide you to the source code of mqttwarn and its documentation.

You will need at least the following components:

- Python 3.x or PyPy 3.x.

- An MQTT broker. We recommend Eclipse Mosquitto.

- For invoking specific service plugins, additional Python modules may be required. See the setup.py file.
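Before enabling a plugin, you can check whether the extra modules it needs are importable in the current environment. The sketch below uses the standard library's importlib.util.find_spec. The helper name missing_modules is hypothetical, and the module names to check for each plugin must be taken from the setup.py file; the ones in the usage line are placeholders.

```python
import importlib.util
from typing import Iterable, List


def missing_modules(names: Iterable[str]) -> List[str]:
    """Return the top-level module names from `names` that cannot be found.

    importlib.util.find_spec locates a top-level module without executing it.
    """
    return [name for name in names if importlib.util.find_spec(name) is None]


print(missing_modules(["json", "os", "surely_not_installed_xyz"]))
# ['surely_not_installed_xyz']
```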

We are always happy to receive code contributions, ideas, suggestions and problem reports from the community.

So, if you would like to contribute, you are most welcome. Spend some time taking a look around, locate a bug, design issue or spelling mistake, and then send us a pull request or create an issue.

Thank you in advance for your efforts, we really appreciate any help or feedback.

mqttwarn is copyright © 2014-2023 Jan-Piet Mens and contributors. All rights reserved.

It is and will always be free and open source software.

Use of the source code included here is governed by the Eclipse Public License 2.0, see LICENSE file for details. Please also recognize the licenses of third-party components.

If you encounter any problems during setup or operations or if you have further suggestions, please let us know by opening an issue on GitHub. Thank you already.

Thanks to all the contributors of mqttwarn who helped to conceive it in one way or another. You know who you are.

"MQTT" is a trademark of the OASIS open standards consortium, which publishes the MQTT specifications. "Eclipse Mosquitto" is a trademark of the Eclipse Foundation.

Have fun!