there is something wrong with meteor when we have more than 4k users online (xhr flood) #11285

Comments

|

Can you reproduce in a load test type environment? |

|

@evolross No, we can't create a test environment that matches the real environment, but we found: |

|

We just encountered a similar (the same?) issue. The client side fell back to XHR immediately, even though WebSockets were functioning correctly. This only happened in production, which is strange because our dev environment includes Nginx with the same configuration. Most importantly, it only happens when dynamic imports (e.g.

Did a little poking around: line 1967 in

that.unload_ref = utils.unload_add(function () {

that.ws.close();

});

Could it be that something about dynamic imports is causing this unload handler to fire immediately? I noticed that the dynamic imports code has changed in recent versions. |
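To make the hypothesis above concrete, here is a minimal simulation of what would happen if an unload callback ran before the WebSocket handshake finished. `FakeSocket`, `unloadAdd`, `triggerUnload`, and `simulate` are hypothetical stand-ins written for this sketch; they are not SockJS or Meteor code, and the sketch does not show that dynamic imports actually trigger the handler.

```javascript
// Hypothetical stand-ins for illustration only; not SockJS internals.
const CONNECTING = 0, OPEN = 1, CLOSED = 3;

class FakeSocket {
  constructor() {
    this.readyState = CONNECTING;
    this.closedBeforeOpen = false;
  }
  // Completes the handshake, but only if nobody closed the socket first.
  open() {
    if (this.readyState === CONNECTING) this.readyState = OPEN;
  }
  close() {
    if (this.readyState === CONNECTING) this.closedBeforeOpen = true;
    this.readyState = CLOSED;
  }
}

// Minimal registry of unload callbacks, similar in spirit to utils.unload_add.
const unloadHandlers = [];
function unloadAdd(fn) {
  unloadHandlers.push(fn);
  return fn;
}
function triggerUnload() {
  // Run and clear every registered callback.
  unloadHandlers.splice(0).forEach((fn) => fn());
}

function simulate(unloadFiresEarly) {
  const ws = new FakeSocket();
  unloadAdd(() => ws.close());
  if (unloadFiresEarly) triggerUnload(); // handler runs before the handshake completes
  ws.open();
  return { readyState: ws.readyState, closedBeforeOpen: ws.closedBeforeOpen };
}
```

In this model, `simulate(true)` ends with a socket that was closed while still connecting, which is the situation a transport fallback would then react to.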

|

@dj-foxxy Hello, where is socket-stream-clean.js? |

|

@codeneno I'm not sure where the file comes from, but inside your project it's |

|

@dj-foxxy Please give us one file. And how many online users do you have? |

|

Hi @codeneno @dj-foxxy, I have never seen this problem before, but I have always worked on apps running on Galaxy, and Galaxy has a custom proxy written in Go. We don't use Nginx, so it's a different environment. I mention this because it may be a clue to where the root cause is, or it may be something custom in the Rocket.Chat application; I'm not sure. We have many clients with more than 15k simultaneous connections every day and we have no reports like this. |

|

@codeneno I'm not sure what you mean. Does the relative file path not work? As for users, very few: we use it as the back end for a Twitch stream (the issue occurs regardless of user count). @filipenevola Does Meteor support running your own instance? If so, is there documentation describing what a proxy should provide? |

No, we don't. I'm not saying that you need to run a custom proxy to solve your issue (Meteor uses a WebSocket library rather than a custom implementation, so that would make no sense). What I'm saying is that we have clients running more connections than you without problems, and that MAYBE your issue is in your Nginx setup. |

|

@filipenevola No, Meteor uses SockJS. |

|

@filipenevola You have more than 15k simultaneous connections; so do we. |

|

@filipenevola Chrome reported that the WebSocket was closed by the client before a connection to the server was established, so the cause is unlikely to be the server and more likely lies in SockJS's fallback mechanisms. I believe it happens in production due to timing, e.g., the server is not on the same box as the client, so the timing between Meteor dynamically loading JS and SockJS starting up is different and the issue occurs. |

|

@a4xrbj1 Looks like what we got. Are you using dynamic imports? When a client falls back to XHR, do the browser dev tools say that the WebSocket (that SockJS attempted to use) was closed before a connection was established? |

|

We're using ElectronJS as a client and therefore cannot see what is happening in the dev tools (it only happens in production). The only way we can identify which customer is actually experiencing it is from the location data that we get in DataDog. I've attached two screenshots from one of the log entries, but we've got 8 XHR errors in 1 minute from the same client in Canada. The URL is always the same. Upon examining more of our log files, we can see that the user's last action was at 2:25 and the XHR errors happened at 2:42, so 17 minutes later. It looks like the user left the computer with the ElectronJS app running (3rd screenshot). We do have an automated process on the backend that kicks out inactive users after 15 minutes, but there's no trace of that in the log file. That might have caused the XHR errors: the backend logged the user out after 15 minutes, and the frontend app tried to contact the backend 2 minutes later, but as it was no longer connected it threw the XHR error. That would mean the backend somehow couldn't properly inform the frontend app of the logout. |
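The timing argument above can be checked with a few lines of arithmetic. This is only a sketch of the reasoning: the helper names `toMinutes` and `timeline` are made up for this example, and the 15-minute limit is taken from the description of the backend process, not from any log.

```javascript
// Convert an "H:MM" clock time into minutes since midnight.
function toMinutes(hhmm) {
  const [h, m] = hhmm.split(":").map(Number);
  return h * 60 + m;
}

// Inactivity limit described in the comment above (assumed, not read from config).
const INACTIVITY_LIMIT_MIN = 15;

// How long the client was idle, and how long after the assumed logout the errors began.
function timeline(lastAction, firstError) {
  const idleMinutes = toMinutes(firstError) - toMinutes(lastAction);
  return {
    idleMinutes,
    minutesAfterLogout: idleMinutes - INACTIVITY_LIMIT_MIN,
  };
}

console.log(timeline("2:25", "2:42"));
```

With the times from the log, the client had been idle for 17 minutes, so the errors start 2 minutes after the point where the backend would have logged the user out.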

|

I have the same issue. How can we hot-fix it? Please help. |

|

How can this be solved? We have built a system based entirely on REST APIs, but this still occurs. We also tried DISABLE_WEBSOCKETS=1, but it does not help. Please help! |

|

Hi, this issue was opened a year ago, and as we still don't have a way to reproduce this issue I'm closing it. Of course, if we have a reproduction in the future we can re-open it. No problem. |

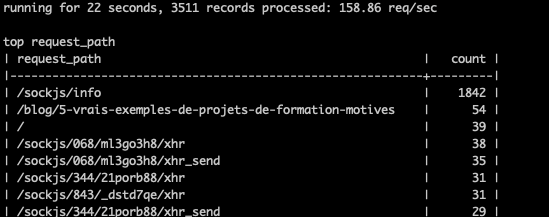

We use Rocket.Chat (with Meteor). It may be a SockJS bug: Presence Broadcast leads clients to flood the front end with long-polling XHR requests even though WebSocket is correctly configured. Maybe socket.io would be better.

We enabled sticky sessions in Nginx with ip_hash, so each source IP sticks to one upstream server. We can see this in the Nginx logs, but we have about 4000 clients that each send a request like this every second:

POST /sockjs/464/4sqavoxf/xhr HTTP/1.1

Host: rocketchat.company.com

Connection: keep-alive

Content-Length: 0

Origin: https://rocketchat.company.com

User-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Rocket.Chat/2.17.7 Chrome/78.0.3904.130 Electron/7.1.10 Safari/537.36

Accept: */*

Sec-Fetch-Site: same-origin

Sec-Fetch-Mode: cors

Referer: https://rocketchat.company.com/direct/iodE4TwMg4i729GoHy5RWQyYZLZBKRuhpt

Accept-Encoding: gzip, deflate, br

Accept-Language: ru

Cookie: rc_uid=y5RWQyYZLZBKRuhpt; rc_token=2O55h3bWfNex-_KiYgwsvcEanzyL-Qdr7bXptnKir6m
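To find out which clients are polling and how often, the request lines can be grouped by SockJS session. The sketch below assumes the request lines have already been extracted from the Nginx access log (one string per request) and that the URLs follow the usual SockJS shape `/<prefix>/<server id>/<session id>/<transport>`, as in the request shown above. The function names are made up for this example.

```javascript
// Matches request lines such as "POST /sockjs/464/4sqavoxf/xhr HTTP/1.1".
const SOCKJS_REQUEST =
  /^(?:GET|POST) \/sockjs\/([^/\s]+)\/([^/\s]+)\/([a-z_]+)(?:\s|$)/;

// Returns the server id, session id, and transport, or null for other requests.
function parseSockjsRequest(line) {
  const m = SOCKJS_REQUEST.exec(line);
  if (!m) return null;
  return { serverId: m[1], sessionId: m[2], transport: m[3] };
}

// Counts XHR polling requests (transport "xhr") per session id.
function countPollsBySession(lines) {
  const counts = new Map();
  for (const line of lines) {
    const req = parseSockjsRequest(line);
    if (!req || req.transport !== "xhr") continue;
    counts.set(req.sessionId, (counts.get(req.sessionId) || 0) + 1);
  }
  return counts;
}

const sample = [
  "POST /sockjs/464/4sqavoxf/xhr HTTP/1.1",
  "POST /sockjs/464/4sqavoxf/xhr HTTP/1.1",
  "POST /sockjs/464/4sqavoxf/xhr_send HTTP/1.1",
  "GET /sockjs/123/abcd1234/websocket HTTP/1.1",
];
console.log(countPollsBySession(sample));
```

A session that shows up here about once per second over a long period would be one of the problem clients; sessions on the `websocket` transport are not counted.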

CPU load and the number of connections then become very high, and the server crashes.

The responses look like this:

HTTP/1.1 200 OK

Server: nginx

Date: Thu, 07 May 2020 05:02:20 GMT

Content-Type: application/javascript; charset=UTF-8

Transfer-Encoding: chunked

Connection: keep-alive

Cache-Control: no-store, no-cache, no-transform, must-revalidate, max-age=0

Access-Control-Allow-Credentials: true

Access-Control-Allow-Origin: https://rocketchat.company.com

Vary: Origin

Access-Control-Allow-Origin: *.company.com

X-Frame-Options: SAMEORIGIN

X-XSS-Protection: 1; mode=block

X-Content-Type-Options: nosniff

Strict-Transport-Security: max-age=31536000; includeSubDomains; preload

So it is definitely not a WebSocket. But Rocket.Chat works fairly normally for these problem clients.

I don't know why!

What is this? Is it some kind of compatibility fallback for WebSocket, or what? A JavaScript socket?
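For reference, the two cases can be told apart from the response alone. A successful WebSocket upgrade answers with status 101 and an `Upgrade: websocket` header, while the response shown above is a plain 200 with `Content-Type: application/javascript`, which is what a polling request returns. The function below is a rough heuristic built only from those observations; `classifyResponse` is a name made up for this sketch, not part of SockJS or Meteor.

```javascript
// Heuristic classification of a response by status code and headers.
function classifyResponse(statusCode, headers) {
  // Normalize header names and values so the comparison is case-insensitive.
  const lower = {};
  for (const [name, value] of Object.entries(headers)) {
    lower[name.toLowerCase()] = String(value).toLowerCase();
  }
  if (statusCode === 101 && lower["upgrade"] === "websocket") {
    return "websocket";
  }
  const contentType = lower["content-type"] || "";
  if (statusCode === 200 && contentType.startsWith("application/javascript")) {
    return "xhr-polling";
  }
  return "unknown";
}

console.log(
  classifyResponse(200, {
    "Content-Type": "application/javascript; charset=UTF-8",
    Connection: "keep-alive",
  })
);
```

Applied to the response headers in this report, the heuristic lands on the polling case, which matches the `/xhr` suffix in the request URL.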

RocketChat/Rocket.Chat#17559

Additional context

Pressing CTRL+R on the client completely fixed the problem. Right after the reload, the client successfully opens a WebSocket (response 101) and the request flood stops.

https://user-images.githubusercontent.com/4023037/81270072-de543500-9052-11ea-8bb5-ba2c421ee2fc.png

But I want to know exactly which mode the problem clients are working in, and how to fix it permanently.

And what do the developers think could be the reason for this behavior?
