
$PATH does not contain paths defined on /etc/environment #3461

Comments

|

Hi @vladislav-ryzhov! Thanks for your help with this issue. Please let me know if I can help with any further information. |

|

Any news on this issue? We are continuing to investigate, but because the PATH is provisioned during the VM image generation, the … I understand this:

This is strange because if we create a custom script extension that runs a script to read the value of the variable … Also, the Microsoft-hosted pools that use the same base images as ours don't have this issue. Using some commands, I checked the content of the agent directory and saw some differences from the official agent. One difference is: no … What are the differences? How can we reproduce the same result? As a workaround, we recommend that our users use the … Thanks! |

|

Hello @marcuslopes @ChristopheLav

Could you please describe the deployment process in greater detail, so we can understand where the issue is? |

|

Hi, we've been having a similar issue on our VMSS agents since approximately last Thursday. Our setup is (roughly):

The VSTS_AGENT_INPUT_WORK variable is read correctly (the agent sets it to an attached disk); it's only PATH that is no longer set correctly (it is set to the same value as secure_path in /etc/sudoers, if I recall correctly). |

|

@AlexrDev I'm pretty sure that's a different issue from the one @marcuslopes and @ChristopheLav have, though if setting a variable is the problem, it doesn't seem to be an agent issue. |

|

Hi @kuleshovilya, we use the 'Azure Virtual Machine Scale Set' agent pool type. There is nothing custom on our side in the VMSS extensions; the extension in place is configured entirely by Azure DevOps itself (extension name: Microsoft.Azure.DevOps.Pipelines.Agent). To create the generalized image used in our VMSS, we first build a generalized image with Packer and push it to an image gallery. From there,

We finally found a way to fix the current issue (as a workaround):

Here is the custom script for the specialized VM extension: … We tried various ways of creating files in /etc/sudoers.d/ and modifying env_keep and env_reset, disabling secure_path, etc. Although the behavior seemed OK when connecting to the specialized VM, and secure_path was either removed or replaced in the sudoers.d files, the Azure extension always wrote the default secure_path into the .path file on the resulting VMSS. Forcing the right path into secure_path is the only thing that worked, which points to odd behavior when Azure extensions are used on a VM/VMSS. When we use this new image version for the VMSS, the problem is fixed. This is a workaround, not something we consider an official fix, since it modifies the sudoers file directly. We also noticed a difference from the machines in the Azure Pipelines pools: the configuration for the vsts user … Thank you for your support on this issue. By the way, I work on the same team as @marcuslopes and @ChristopheLav |

|

@Jean-FrancoisBeaudet @marcuslopes @ChristopheLav As I said above, that doesn't seem to be agent-related; it has more to do with the way your setup works. What you do with the custom script is just a way to force /etc/environment through a sudoers file.

However, you can change PATH afterwards in your pipelines. Re-reading /etc/environment is possible with something like … |
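One way to re-read /etc/environment inside a single Bash step is sketched below; it is not necessarily the command suggested above. The helper name `load_env_file` is hypothetical, and the sketch assumes the file contains only plain `KEY=value` lines that Bash can parse (true of the lines quoted in this thread, but not guaranteed for every /etc/environment).

```shell
#!/usr/bin/env bash
# Hypothetical helper: re-read an /etc/environment-style file in the current
# Bash step. `set -a` exports every variable assigned while the file is
# sourced, so commands started later in the same step inherit the new PATH.
# Assumption: every line is a plain KEY=value assignment that Bash can parse.
load_env_file() {
  local file="${1:-/etc/environment}"
  [ -r "$file" ] || return 1   # nothing to load
  set -a
  # shellcheck disable=SC1090
  . "$file"
  set +a
}
```

In a Bash task this would be called as `load_env_file` before the commands that need the extra directories. Because Bash expands the file's contents while sourcing it, a literal `$HOME` in the PATH line becomes the agent user's home directory. The effect is limited to the current step, since each script step runs in its own shell.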

|

Thank you for your reply @kuleshovilya. @Jean-FrancoisBeaudet describes what we do to build the master image that we use in an Azure Virtual Machine Scale Set. As @marcuslopes indicated, we use the official master image sources in https://github.com/actions/virtual-environments. To manage the scale set, we use the native Azure DevOps scale set feature. As you can read in the Lifecycle of a Scale Set Agent section, the agent installation is managed directly by Microsoft with its own extension (automatically injected into the scale set by ADO). The documentation shows the script that is used (which is not customizable). We currently have no custom script because we prefer to do the customization while building the master image, to keep good (start-up) performance, since we eventually want to use the tear-down-after-each-use feature. Maybe we can try adding a Custom Script Extension with what you suggest (… I hope this is clearer for you. |

|

Hmm, yes, a bit clearer, but sadly I don't think that would be changed in the images, since this behaviour is expected from Bash. So I would recommend just adding a step with … |

|

Putting this command into our pipelines to fix a global agent installation issue (maybe caused by Bash) affects all of our developers' pipelines (not just a few), and it's only a workaround for now. Because this issue affects the use of the master images (from Microsoft) with a native ADO feature (from Microsoft), I think the installation script (from Microsoft, https://vstsagenttools.blob.core.windows.net/tools/ElasticPools/Linux/6/enableagent.sh) should be updated to fix this issue. That is the cleanest way, I think; we can't do anything on our side about this one. Another consideration: the native Azure Pipelines pools (managed entirely by Microsoft) don't have this issue and do not require adding the … |

|

I don't think enableagent.sh requires updating either, as this case is specific to you. |

|

I don't agree with the statement "it's specific for you". We use this Microsoft documentation without any customization 🙂 For Windows agents, everything is/seems good ✔ For Linux agents, $PATH is provisioned with the values of … The agent installation is done on the Microsoft side (see the documentation below), and it does not work out of the box. I think there is an issue here (maybe not related to the agent itself), and @marcuslopes was redirected to this repo from actions/runner-images#3695. We have done one more test on our side: we added a Custom Script Extension to the VMSS, then:

This test shows the potential issue with the extension on the VMSS (note: Microsoft uses an extension to install the agent). As I said at the beginning of my reply, we use the Microsoft documentation without any customization. So the feature (Elastic Pools) does not work out of the box with Linux images, possibly because of an issue specific to the extension. Microsoft doesn't have this issue with its own hosted pools, so there are two possibilities:

Can you find the missing information on your side, or redirect the issue to the team responsible for the Elastic Pools feature? Thank you! |

|

@ChristopheLav By the statement "it's specific for you" I mean that it's something you specifically require and that can be fixed locally, so we are not sure a global change is required. |

|

I don't agree with that @kuleshovilya 🙂 The Ubuntu (18.04 and 20.04) images in the actions/virtual-environments repo are provisioned with values in … Also, my last test clearly shows the issue:

With the Elastic Pools feature, the agent is installed automatically by a VMSS extension (which is not customizable), and the issue occurs. As a result, some tools in the Microsoft images that require correct values in $PATH do not work as expected (pipx, for example). Since Microsoft doesn't have this issue with its own pools, either Microsoft doesn't use the same Elastic Pools feature or it has a custom, undocumented step. Can you ask for help from the team responsible for the Elastic Pools feature, if this is not directly related to the agent? Maybe @MaksimZhukov can join the discussion and help you understand (he redirected us to this repo from the original issue actions/runner-images#3695) |

Sounds like PAM doing its work during logon. You could try verifying whether, after logon, you can access … |

|

Yes: if I manually connect to the VM instance that previously ran the VMSS Custom Script Extension, the $PATH values are correct. The agent is injected automatically by MS with an extension (not customizable), and the $PATH values are only read during the installation (so in the context of the extension, where the values do not seem to be loaded).

That's exactly what I said in my first reply in this thread: /etc/environment is loaded into PATH at logon, which is why it works, and the … |

And I replied to you: we use the official Elastic Pools ADO feature that is documented here. The documentation says to build and use the images from actions/virtual-environments; the Virtual Machine Scale Set is then managed automatically by the ADO feature (so by Microsoft), which injects an extension to install the agent when a VM instance is created. If I connect manually to the VM and see that $PATH is correctly provisioned and that the image tools work correctly, I can conclude that the image generation succeeded (I think). My test demonstrates that the issue is related to the lifecycle of $PATH (I'm potentially OK with that explanation), but because of it the Elastic Pools feature does not work out of the box (with Linux images only). The $PATH variable needs to be provisioned correctly, and the Azure Pipelines pools don't have this issue. So what is the undocumented thing that Microsoft uses to get $PATH correctly provisioned in its VM instances? The Elastic Pools feature doesn't have the same behaviour out of the box, and that is the issue. I expect the feature to work correctly out of the box, like the Azure Pipelines agents, in particular because the installation is managed automatically by Microsoft (with no possibility of customization) in the Elastic Pools feature. Having to put a … |

|

Sorry, I meant: if you try … |

Yes, if I log on to the VM instance manually. These are my tests and the associated results:

This is the script used for the tests:

```shell
# Create our user account (same as MS)
echo creating AzDevOps account
sudo useradd -m AzDevOps
sudo usermod -a -G docker AzDevOps
sudo usermod -a -G adm AzDevOps
sudo usermod -a -G sudo AzDevOps
echo "Giving AzDevOps user access to the '/home' directory"
sudo chmod -R +r /home
setfacl -Rdm "u:AzDevOps:rwX" /home
setfacl -Rb /home/AzDevOps
echo 'AzDevOps ALL=NOPASSWD: ALL' >> /etc/sudoers

# Diagnostics PATH issue (custom part)
mkdir -p "/opt/dbg"
{ whoami; echo $PATH; } > /opt/dbg/1.txt
sudo login -f AzDevOps
{ whoami; echo $PATH; } > /opt/dbg/2.txt
```

The AzDevOps account is created the same way Microsoft does it in enableagent.sh. I noted that on a VMSS Custom Script Extension, the command …

```shell
sudo runuser AzDevOps -c "{ echo \$PATH; } > /opt/dbg/3.txt"
```

These are the results:

This time I got the same results whether I logged on to the VM instance manually or the script was executed in a VMSS Custom Script Extension. I modified the command slightly to include the …

```shell
sudo runuser AzDevOps -c "{ source /etc/environment; echo \$PATH; } > /opt/dbg/4.txt"
```

These are the results:

As I understand it from this conversation and some research, the configuration from … Going back to how the agent installation is done:

Remember that Elastic Pools install the agent automatically with a VMSS extension, and users cannot customize it: https://vstsagenttools.blob.core.windows.net/tools/ElasticPools/Linux/6/enableagent.sh For me, the issue comes down to a couple of things:

It seems like a deadlock, because I can't customize anything in the Elastic Pools feature, which does not work correctly out of the box because of decisions made by different teams. I think some changes are required:

Let me know if you see anything else. |

|

While we can't speak for the team that manages enableagent.sh, we won't be adding … |

It's not a valid workaround. It's not required with Azure Pipelines, so why is it required with Elastic Pools? Because MS uses the same master images, there must be a custom, undocumented configuration step here, and I would like to know what it is. If nobody adapts something, Elastic Pools don't work out of the box, and the documentation needs to be updated to state this MAJOR point! Even if it works the way Linux expects, if the Elastic Pools feature doesn't work, there is something to fix on your (MS) end. I don't understand why you don't want to push the issue to the right team if it's not related to you; I really don't understand that point. @MaksimZhukov the design of the master images, which use /etc/environment, does not work with Elastic Pools. |

|

@ChristopheLav This is not unexpected or incorrect behaviour, so we are not sure which department we should push this to. The issue is not in the setup or anything like that: the way you are trying to use /etc/environment is not the way it is supposed to be used, so you will need to customize your pipelines. |

As I said previously, I don't want to use it this way! It's not my choice: it's what the master images maintained by Microsoft do! We opened a ticket on their repo and @MaksimZhukov redirected us here (see the link in the first post). You could post an update on the original issue; that could help 😉

We're talking about the Elastic Pools feature, not manually self-hosted agents. They are not the same! |

|

@ChristopheLav Well yeah, not self-hosted, my bad. But still, this is not something we manage, or that is managed through GitHub, so opening a ticket with AzDO would be the way to learn the difference. |

Ok

We posted the issue here because MS told us to in this parent issue: … Can you post a message on the parent issue indicating that using /etc/environment seems not to be the right way to do things? |

|

@ChristopheLav Sure, left a comment in the discussion |

|

@kuleshovilya Thank you! |

|

I'll close the issue for now, will reopen if something arises. Feel free to ping me here or at v-ikuleshov@microsoft.com |

|

Why was this closed? This is still a major issue with self-hosted agents. The PATH is read from … You can't expect us to edit … The problem lies in the ADO agent extension, and it should be fixed there. |

|

This is still the case and should be fixed! |

|

I have been working on a three-part blog series on Azure DevOps Self-Hosted VMSS Agents. While working through it, I looked at this issue again and came up with a workaround that seems to work for me, based on various comments in the history of this issue. Specifically, in the PATH issue section of Part 2 I describe what I did to work around this. I would be interested in any feedback. |

|

Thanks @ChristopheLav, @marcuslopes, @Jean-FrancoisBeaudet. I think I found the workaround (by using the command … @tonyskidmore, I looked at your workaround, but I agree with @ChristopheLav that we should not change the "sudoers" file. I run plenty of pipelines on the Microsoft-hosted "Azure Pipelines" pool, and indeed the two images (the MS-hosted one and the one built with Packer from the official runner-images repo) are the same; however, the way the pipeline agent is deployed is totally different: the MS-hosted image runs the pipeline agent as a systemd service. So here is my workaround, and I hope it will help others: … Anyway, here is the content of the custom script. I create the AzDevOps user myself instead of letting the VM pipeline extension do it, since enableagent.sh checks whether the account already exists. The main reason is to make sure that the scripts in /opt/post-generation run after the AzDevOps user is created, so that /etc/environment contains AzDevOps instead of the default user name or $HOME.

```shell
# copied from enableagent.sh script
sudo useradd -m AzDevOps
sudo usermod -a -G docker AzDevOps
sudo usermod -a -G adm AzDevOps
sudo usermod -a -G sudo AzDevOps
sudo chmod -R +r /home
setfacl -Rdm "u:AzDevOps:rwX" /home
setfacl -Rb /home/AzDevOps

# I do not want to modify the sudoers file like on the MS-hosted agent, so I create the config in sudoers.d
# As recommended in the sudoers man page, I set the files to mode 0440
# NOPASSWD:ALL should be enough too; SETENV can be removed
sudo su -c "echo 'AzDevOps ALL=(ALL) NOPASSWD:SETENV: ALL' | sudo tee /etc/sudoers.d/02_keep_env_for_AzDevOps && chmod 0440 /etc/sudoers.d/02_keep_env_for_AzDevOps"

# I think this one is useless: the idea was to disable env_reset for that particular user (AzDevOps), but it doesn't work; I always get the secure_path from the sudoers file
sudo su -c "echo 'Defaults:AzDevOps !env_reset' | sudo tee /etc/sudoers.d/01_keep_env_for_AzDevOps && chmod 0440 /etc/sudoers.d/01_keep_env_for_AzDevOps"

# copied from "https://github.com/actions/runner-images/blob/main/docs/create-image-and-azure-resources.md#ubuntu"
sudo su -c "find /opt/post-generation -mindepth 1 -maxdepth 1 -type f -name '*.sh' -exec bash {} \;"

# I believed putting something in profile.d would help, but it doesn't change anything, so this line can be removed too
sudo su -c "echo 'source /etc/environment' > /etc/profile.d/agent_env_vars.sh && chmod 755 /etc/profile.d/agent_env_vars.sh"

# The 3 lines below are useless: they don't change the AzDevOps PATH when I run a pipeline
echo "source /etc/environment" >> /home/AzDevOps/.bashrc
echo "source /etc/environment" >> /home/AzDevOps/.profile
echo "source /etc/environment" >> /home/AzDevOps/.bash_profile

# Thanks @tonyskidmore, I reuse your line :)
pathFromEnv=$(cut -d= -f2 /etc/environment | tail -1)

# Create the /agent folder with the same permissions the VM pipeline agent extension will set after this custom script
mkdir /agent && chmod 775 /agent

# Put the proper PATH in the .path file, with the same permissions the VM pipeline agent extension will set after this custom script
echo "$pathFromEnv" > /agent/.path && chmod 444 /agent/.path

# Same for /agent/.env: the VM pipeline agent extension appends to that file instead of overwriting it (unlike /agent/.path)
echo "PATH=$pathFromEnv" > /agent/.env && chmod 644 /agent/.env

# Change the owner and group of the whole /agent folder (recursively, with -R)
sudo -E su -c 'chown -R AzDevOps:AzDevOps /agent'

# THE WORKAROUND!!!: make /agent/.path immutable, so the VM pipeline agent extension can't overwrite it even though the extension runs as root
# https://www.golinuxcloud.com/restrict-root-directory-extended-attributes/
chattr +i /agent/.path
```

Then I used Bastion to check that it's really immutable: … Then I ran a pipeline in which I print my PATH and run … |
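The `cut -d= -f2 /etc/environment | tail -1` line in the script above assumes that PATH is the last line of the file and that its value contains no `=`. A slightly more defensive variant of the file-writing part is sketched below. Both function names are hypothetical, the agent directory is passed in as a parameter, and the sketch deliberately leaves out the `chown` and `chattr +i` steps, which need root.

```shell
#!/usr/bin/env bash
# Print the PATH value from an /etc/environment-style file.
# Unlike `cut -d= -f2 /etc/environment | tail -1`, this does not assume PATH is
# the last line, keeps any '=' inside the value, and strips one pair of
# surrounding double quotes if present.
path_from_env_file() {
  local file="${1:-/etc/environment}" line value
  line=$(grep -m1 '^PATH=' "$file") || return 1
  value=${line#PATH=}
  value=${value#\"}
  value=${value%\"}
  printf '%s\n' "$value"
}

# Hypothetical helper: write the .path and .env files into an agent directory
# (for example /agent) with the same permissions as the workaround script.
# It does not run chown or chattr, which need root.
write_agent_path_files() {
  local agent_dir="$1" new_path="$2"
  mkdir -p "$agent_dir" || return 1
  printf '%s\n' "$new_path" > "$agent_dir/.path" || return 1
  printf 'PATH=%s\n' "$new_path" > "$agent_dir/.env" || return 1
  chmod 444 "$agent_dir/.path"
  chmod 644 "$agent_dir/.env"
}
```

On the VM this would be used as `write_agent_path_files /agent "$(path_from_env_file /etc/environment)"`, followed by the `chown` and `chattr +i` lines from the script above.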

|

Here is my final script, without comments and with the unused commands removed:

```shell
sudo useradd -m AzDevOps
sudo usermod -a -G docker AzDevOps
sudo usermod -a -G adm AzDevOps
sudo usermod -a -G sudo AzDevOps
sudo chmod -R +r /home
setfacl -Rdm "u:AzDevOps:rwX" /home
setfacl -Rb /home/AzDevOps
sudo su -c "echo 'AzDevOps ALL=(ALL) NOPASSWD:ALL' | sudo tee /etc/sudoers.d/01_AzDevOps && chmod 0440 /etc/sudoers.d/01_AzDevOps"
# Must be done after AzDevOps user creation
sudo su -c "find /opt/post-generation -mindepth 1 -maxdepth 1 -type f -name '*.sh' -exec bash {} \;"
pathFromEnv=$(cut -d= -f2 /etc/environment | tail -1)
mkdir /agent && chmod 775 /agent
echo "$pathFromEnv" > /agent/.path && chmod 444 /agent/.path
echo "PATH=$pathFromEnv" > /agent/.env && chmod 644 /agent/.env
chown -R AzDevOps:AzDevOps /agent
chattr +i /agent/.path
```

In my Terraform VMSS module, my extension block looks like this:

```hcl
extension = {
  name                 = var.extension["name"]
  publisher            = var.extension["publisher"]
  type                 = var.extension["type"]
  type_handler_version = var.extension["type_handler_version"]
  settings = jsonencode({
    "script" = base64encode(data.local_file.sh.content)
  })
}

# -
# - Custom Scripts
# -
data "local_file" "sh" {
  #filename = "${path.module}/files/${var.vmss_linux_script_filename}"
  filename = join("/", [path.module, "files", var.vmss_linux_script_filename])
}
```
|
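The Terraform `settings` block in the previous comment base64-encodes the script file into the `script` property of the extension settings. For deployments that don't use Terraform, a comparable settings string can be built in shell. This is a sketch: the function name is hypothetical, and it assumes GNU coreutils `base64` (`-w0` turns off line wrapping).

```shell
#!/usr/bin/env bash
# Build the {"script":"<base64>"} settings string that the Terraform block
# produces with jsonencode(base64encode(...)).
# Base64 output only contains [A-Za-z0-9+/=], so no JSON escaping is needed.
# Assumes GNU coreutils base64 (-w0 disables line wrapping).
script_settings_json() {
  local script_file="$1" encoded
  encoded=$(base64 -w0 "$script_file") || return 1
  printf '{"script":"%s"}\n' "$encoded"
}
```

The printed string can then be passed wherever the extension's settings JSON is expected.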

|

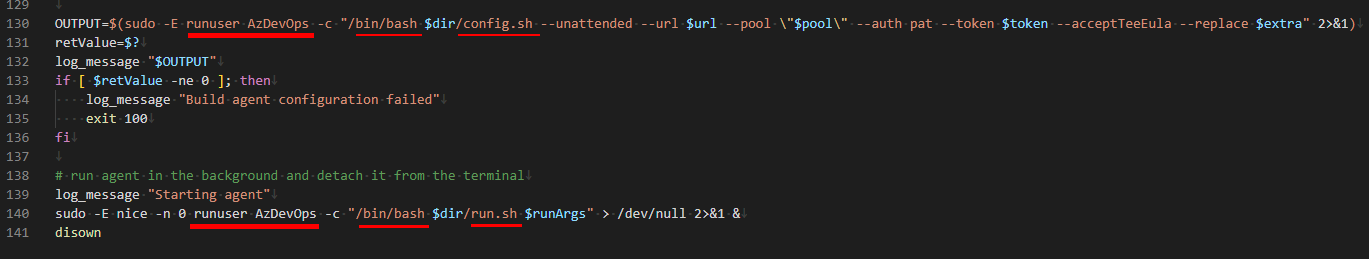

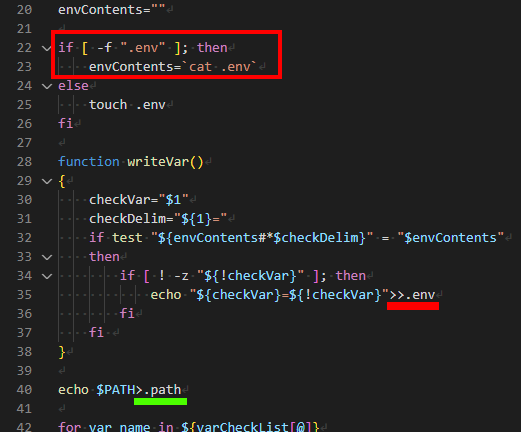

Additional investigation:

I ran multiple pipelines on Microsoft-hosted agents, and I didn't find any .env or .path file in their image (by running a script in the pipeline … So the issue is clearly how the VM pipeline agent extension calls the 'config.sh' and 'run.sh' files: … I also tried playing with the runuser config file (/etc/default/runuser):

```shell
#pathFromEnv=$(cut -d= -f2 /etc/environment | tail -1)
#echo "ALWAYS_SET_PATH=yes" >> /etc/default/runuser
#echo "ENV_PATH=$pathFromEnv" >> /etc/default/runuser
#echo "ENV_ROOTPATH=$pathFromEnv" >> /etc/default/runuser
```

but it didn't work: I got error 100 from the VM pipeline agent extension, and when I tried to run config.sh manually through Bastion on that VM instance, I got multiple errors related to a lib....so missing … So the dev team that developed … I also found this post very useful (#3494): .env file and PATH variable |

|

@HoLengZai: Thanks for the script; it saved me a lot of headaches after I fell face-first into troubleshooting the issue. I can confirm your approach populates PATH correctly when running logic through the pipeline. |

|

@HoLengZai You made my day. Thank you very much for your work. |

|

We also ran into this problem with ubuntu2204. Even though I like the workaround from @HoLengZai, it's still a workaround. We would prefer a permanent solution from Microsoft. |

|

@kuleshovilya This is not a problem specific to @ChristopheLav's use case. With the workarounds suggested in this thread, there is no way to create a reusable scale set image with custom software to use for agents in an agent pool. In our case, we need Ansible installed on the agent, which is installed via pipx, and therefore the … Please reopen this issue, as it is a problem created by Microsoft's approach to custom image use rather than a user-specific scenario. |

|

I've been struggling with this issue for a week and appreciate the workaround. @kuleshovilya Not sure why this is closed; it is very clearly an issue many people are encountering while generating images for self-hosted build agents.

While testing our self-hosted GitHub runners on a Rust repository, the build failed with:

```
line 1: cargo: command not found
```

I found out it was related to the $PATH environment variable, and it revealed additional issues.

First, when I ran `echo $PATH` it printed:

```
PATH=$HOME/.local/bin:/opt/pipx_bin:$HOME/.cargo/bin:....:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/snap/bin
```

Also, `echo $HOME` prints "/home/runner". At first I couldn't understand why the cargo command was not found. Then I learned that in Unix-like operating systems the `PATH` environment variable is just a string that holds a list of directories separated by colons (`:`). Variable substitution occurs only at the moment of assignment, so a literal `$HOME` stored in PATH is never expanded when commands are looked up.
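The effect is easy to reproduce in a throwaway directory. In the sketch below, `fake-cargo` is a hypothetical stand-in for a real tool and the function name is illustrative: the same directory is put on PATH once as the literal text `$HOME/.cargo/bin` and once in its expanded form.

```shell
#!/usr/bin/env bash
# Demonstrate that PATH entries are plain strings: a literal "$HOME" stored in
# PATH is not expanded when the shell looks a command up.
# "fake-cargo" is a hypothetical stand-in for a tool such as cargo.
demo_literal_home_in_path() {
  local home_dir
  home_dir=$(mktemp -d) || return 1
  mkdir -p "$home_dir/.cargo/bin"
  printf '#!/bin/sh\necho ok\n' > "$home_dir/.cargo/bin/fake-cargo"
  chmod +x "$home_dir/.cargo/bin/fake-cargo"

  # Single quotes keep the text $HOME literal, as when PATH is copied verbatim.
  if HOME="$home_dir" PATH='$HOME/.cargo/bin:/usr/bin:/bin' \
      "$BASH" -c 'command -v fake-cargo' >/dev/null; then
    echo "literal \$HOME: found"
  else
    echo "literal \$HOME: not found"
  fi

  # Double quotes expand the directory when the assignment is made.
  if HOME="$home_dir" PATH="$home_dir/.cargo/bin:/usr/bin:/bin" \
      "$BASH" -c 'command -v fake-cargo' >/dev/null; then
    echo "expanded: found"
  else
    echo "expanded: not found"
  fi

  rm -rf "$home_dir"
}
```

Calling `demo_literal_home_in_path` prints `literal $HOME: not found` followed by `expanded: found`, which is the same `command not found` symptom seen with cargo.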

I found similar issues (actions/runner-images#3695 (comment)).

Some configuration related to the user's home directory, such as $PATH, needs to be changed. We need to run the post-generation scripts after first boot to configure it:

https://github.com/actions/runner-images/blob/main/docs/create-image-and-azure-resources.md#post-generation-scripts

Post-generation scripts use the latest record in /etc/passwd as the default user.

We need to reconnect to the VM to reload environment variables, so we invalidate the SSH cache.
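Because the post-generation scripts take the latest /etc/passwd record as the default user, the agent account has to exist before they run. The sketch below only illustrates that idea on copies of the files: it replaces the literal text `$HOME` in an environment file with the home directory (field 6) of the last entry in a passwd-format file. It is not the actual runner-images post-generation script, and the function name and arguments are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical illustration of the post-generation idea described above:
# replace the literal text "$HOME" in an environment file with the home
# directory of the last account in a passwd-format file.
# passwd fields are name:password:UID:GID:GECOS:home:shell (home = field 6).
expand_home_for_last_user() {
  local env_file="$1" passwd_file="${2:-/etc/passwd}" home content
  home=$(tail -n 1 "$passwd_file" | cut -d: -f6)
  [ -n "$home" ] || return 1
  content=$(<"$env_file") || return 1
  # '\$HOME' in the pattern matches the literal characters $HOME.
  printf '%s\n' "${content//\$HOME/$home}" > "$env_file"
}
```

If the agent account is created after this step, the last passwd entry is some other user, and that user's home directory ends up baked into PATH instead.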

This change alone was not enough. I noticed that $PATH inside the workflow job was printed as:

```
/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/snap/bin
```

After some research I found another issue: microsoft/azure-pipelines-agent#3461

The runner script doesn't use the global $PATH variable by default; it gets the path from secure_path in /etc/sudoers. Changing sudoers files isn't a good thing to do. However, the script also loads the .env file, so we can override the runner script's default path value with $PATH.

After solving these two puzzling issues, the runner script is able to load the correct $PATH value:

```
/home/runner/.local/bin:/opt/pipx_bin:/home/runner/.cargo/bin:...:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/snap/bin
```


|

@ChristopheLav deserves the GitHub medal of honor for his patience. We have run into the same issue on our agent pools built from runner-images, and I certainly echo the astonishment that this issue is closed. Can this be re-evaluated, please? |

|

This problem is clearly still afflicting users and should not be closed. Thanks for the workaround @HoLengZai! |

|

We just encountered this issue as well, same use case (self-hosted build agents with Packer images). |

|

I have just run into this issue: pip3 packages warn that they are being installed to a location outside the PATH. It works absolutely fine on Microsoft-hosted ubuntu-latest agents, but is broken on self-hosted ones. How can this issue be closed while something as fundamental as the PATH remains divergent between Azure DevOps agent types? |

|

Encountering this issue on Ubuntu 22 images/agents as well. Please reopen. All those custom scripts and workarounds should not be needed for something as simple as the agent reading the PATH defined in /etc/environment. Especially given this works fine on the windows images/agents when the system PATH is customized. |

Agent Version and Platform

Version of your agent? 2.187.2, 2.188.3

OS of the machine running the agent? Ubuntu 18.04, Ubuntu 20.04

Azure DevOps Type and Version

dev.azure.com (Cloud)

What's not working?

Issue created at the request of the contributors to the actions/virtual-environments repository (see actions/runner-images#3695), who said this issue is probably related to the agents and not to the images themselves.

Description

When running a task on a self-hosted Ubuntu build agent (either a manual or an automatic scale set), running `echo $PATH` returns a very limited number of paths. Connecting to the agent via SSH and running `echo $PATH` directly, however, returns the full range of paths in the PATH variable.

Virtual environments affected

Image version and build link

(using the https://github.com/actions/virtual-environments repository)

Ubuntu 18

Image version: releases/ubuntu18/20210606 commit 58b026cedf2363aee66fcdde3981b09704d5bd79

Agent version: 2.187.2

Ubuntu 20

Image version: releases/ubuntu20/20210606 commit a26b241d4791b9af60f069b29c6e993595d75349

Agent version: 2.188.3

Packer: 1.6.2, 1.7.2

Expected behavior

Running a shell script task `echo $PATH` on a self-hosted Ubuntu agent should return all of the paths defined in the /etc/environment file:

```
PATH=/home/linuxbrew/.linuxbrew/bin:/home/linuxbrew/.linuxbrew/sbin:$HOME/.local/bin:/opt/pipx_bin:/usr/share/rust/.cargo/bin:$HOME/.config/composer/vendor/bin:/usr/local/.ghcup/bin:$HOME/.dotnet/tools:/snap/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/snap/bin
```

Actual behavior

Running a shell script task `echo $PATH` on a self-hosted Ubuntu agent returns a very limited number of paths:

```
/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/snap/bin
```

If I perform the following steps, though, $PATH is filled as expected:

/opt/vsts/a1

Repro steps

`echo $PATH`

Agent and Worker's Diagnostic Logs

Agent_20210714-172015-utc.log

Worker_20210714-155257-utc.log
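To see the gap between the expected and actual behavior from inside a failing pipeline step, the directories listed in /etc/environment can be compared with the agent's PATH. This is a hypothetical diagnostic helper, not part of the agent; it assumes the PATH line looks like the one quoted under Expected behavior (optionally wrapped in double quotes).

```shell
#!/usr/bin/env bash
# Hypothetical diagnostic: list the directories from the PATH line of an
# /etc/environment-style file that are missing from a given PATH string.
missing_path_entries() {
  local env_file="$1" current="$2" line expected dir
  local -a dirs
  line=$(grep -m1 '^PATH=' "$env_file") || return 1
  expected=${line#PATH=}
  expected=${expected#\"}
  expected=${expected%\"}
  IFS=: read -r -a dirs <<< "$expected"
  for dir in "${dirs[@]}"; do
    case ":$current:" in
      *":$dir:"*) ;;                 # already in the current PATH
      *) printf '%s\n' "$dir" ;;     # missing from the current PATH
    esac
  done
}
```

Running `missing_path_entries /etc/environment "$PATH"` in a Bash task prints one missing directory per line. Entries that still contain a literal `$HOME` are always reported, since an expanded PATH never contains that text.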
