New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

IPv6 nightmare, can someone explain the magic numbers please? #37779

Comments

|

The 2001:db8::/32 subnet is reserved for examples/documentation purposes. https://tools.ietf.org/html/rfc3849 You should input your IPv6-prefix provided by your ISP/Router. If you don't one, you can using ULA subnet/addresses ( https://en.wikipedia.org/wiki/Unique_local_address ) but you will only be able to communicate locally. |

|

Hi @DennisGlindhart, that makes sense to me now. Thank you for that :) But i still can't get docker with ipv6 running on a vultr server. These are the steps i did:

It must be either something insanely simple that i keep missing somehow that might make it work. |

|

ipv6 setups is very simple, if you know how routing works. The Vultr router thinks it has a /64 on the one side, with hosts inside it.

What should happen instead of step 7-9 should be obvious, but because you only got a /64 instead of a routable prefix, you're kinda stuck for now. In IPv6 a /64 is considered the same as a single IP in ipv4, many things rely upon one host having multiple addresses (2^64 IPs), for example SLAAC or the IPv6 Privacy extension (even though most of the time not needed on servers). IPv6 was designed to never have to care about IP shortage ever again. As long as you don't burn bits of the address, that assumption holds true (story for another time). But it is not game over, there are four things you can do (order of preference):

The last one is the same, as if you have a ISP, that assigns a /64 to your router. Than you have a IPv6 on your router, but no addresses for your home network behind the router. For solutions you can refere to this question https://serverfault.com/questions/714890/ipv6-subnetting-a-64-what-will-break-and-how-to-work-around-it Someone on reddit pointed me to the 2nd one and it works quite well. For that you need to do:

For your custom networks you could use these addresses if your assignment is 2001:db8::/48: If you have any question regarding IPv6, don't hesitate to ask, so that I can improve the documentation later on. |

|

Hi @agowa338, that is quite a comprehensive write-up! While i do have a little bit of a network background, i apparently lack a lot of knowledge there :) For instance, why is a /48 subnet required? Or bigger then /64? That /64 subnet already allows a whopping 18,446,744,073,709,551,616 ip's in it. To me it makes no sense to allocate that much ip's to a container that really only needs one. Sure, ipv6 has an insane amount of ip's available so we can be generous when giving hosts a bucket of ip's, but this is more like you gave the container an ocean full of ip's. Also the concept of docker is one service per container so scoping it down to something tiny really seems to be OK in my opinion. And when you have that many ip's it seems like total madness to me to use an external service for even more. But perhaps that's just my mindset ;) Also, i still don't quite get (you did explain it) why passing traffic into a container from the host is this difficult in ipv6 where it's dead simple with ipv4. There is no such thing as an NDP that needs to be tweaked for ipv4 yet it works just perfectly. |

No worry, can you please mark sections, where you think I'm to technical? It should become readable for everyone that knows how routing works with IPv4. If you don't mind, would you like to review the doc change? Just click review and mark everything that you think needs to be changed, or further explained and maybe also why you have problems understanding a specific part.

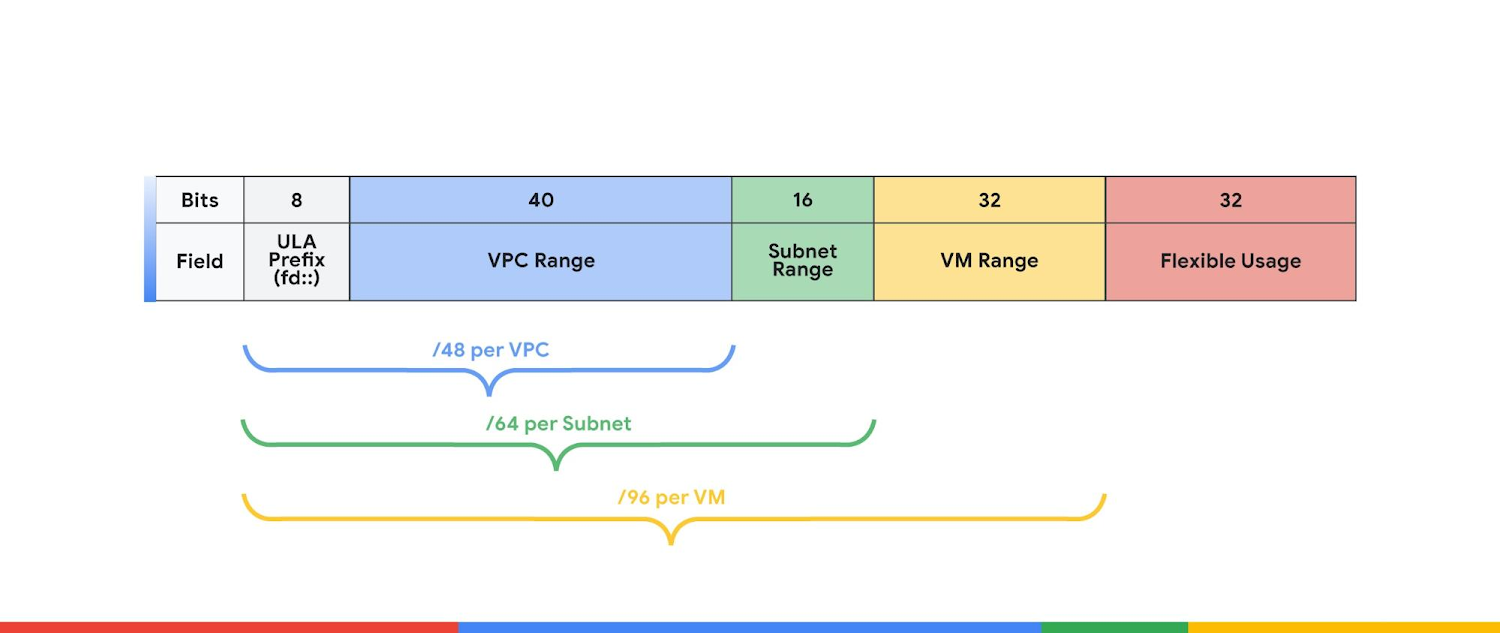

IPv6 is designed to be wastefull with IPs. Therefore some bits have meaning.

In fact, for most applications you just need one ip per container. But for simplicity and to avoid routing issues (like you have when subnetting a 2001:0db8:85a3:08d3::/64 further) you want to have more than just one /64. If you want to deploy a webservice, you need 3 networks. So been assigned a 2001:0db8:85a3:08d0::/62 would be enough, but providers should assign you a /56 or even a /48 without even thinking about it, as there are that many IPs, that it just does not matter. For your webservice you would divide the 2001:0db8:85a3:08d0::/62 into:

In ipv4 you would tweak arp instead of NDP. I wanted to add a image to make that clear, but couldn't figure out how to embed a image. Do you know how to include a image into the docs? The problem is, a /64 is not further subnetable, therefore you cannot further assign a /64. That's like if your provider does assign you a 192.0.2.0/29 (6 hosts) and you want to subnet that. |

|

@agowa338 I don't understand the reason why you need more subnets than a single /64 for your containers. That is not a pattern I've seen used any other places before unless one have multiple "trust-zones" like DMZ etc. Can you explain why the web service in your example need 3 /64 subnets? The only place where multiple /64 subnets is needed is that you typically need one for the container-network and another subnet for the Docker-hosts (they might be on the same, but it can potentially be a bit tricky). @markg85 To answer your question on why IPv6 seems more difficult to get to work. You will need a /56 or /48 subnet from your ISP (a /64 might also be possible, but might be a bit more tricky) Basically you have a LAN /64 IPv6-subnet where your Docker-hosts are attachedto your network - lets say 2001:db8:1::/64. Router address: 2001:db8:1::1 and your docker host(s) will then have 2001:db8:1::2, 2001:db8:1::3, 2001:db8:1::4 etc. Container subnet we can then take 2001:db8:2::/64 let each Docker-host have a /80 (This is the subnet in fixed-cdr) Docker Host 1: IP: 2001:db8:1::2, Fixed-CIDR: 2001:db8:2:0:1::/80 On router you will then need to add the following routes: ip -6 route add 2001:db8:2:0:1::/80 via 2001:db8:1::2 |

/64 is the smallest subnet, everything beneath is just ugly and can cause problems, as outlined above, also most providers don't route the /64 to your host, but only configure it on the link.

The core problem is that docker does currently not manage the ipv6 routes.

You really shouldn't go below /64 per docker network. Also there is no need for IPv4, in a cloud environment. For example Facebook has internally only IPv6. Only there public facing load balancers have IPv4. |

|

To make it short: Your problem is that a package arriving to Vultr's router from the outside to destination 2001:19f0:5001:2f9d::242:ac11:2 (Your container IP) has no idea that it should go through your Docker-host (Lets say it's 2001:19f0:5001:2f9d::1) to reach the container (@agowa338 explains this very well in details, but this is the ultra short version). So to make it work you need Vultr to add a route to their router like:

This should be "scaled up" to be a whole subnet (the subnet in fixed-cidr-v6) being routed (preferably a subnet different from the subnet the Docker-host is in) so not every container/ip needs its own seperate routing rule. But if you cannot make them do that (it sounds like you can't) you might have another option (Beside --net=host & using Tunnelbroker): I don't know how Vultr network works, but if it is just a simple Layer2 network attached to your Docker-host, then maybe macvlan (or ipvlan) would work. Read more here: https://docs.docker.com/network/macvlan/ - That way you don't need configuration outside your Docker-host, but I have my doubts that it actually IS a clean layer 2 network, but it might be worth a try.

IMHO I think this can very easily be misinterpreted. I think it only holds true if by IPv4 you mean a single external Ipv4 address (with NAT'ed clients behind) and in that case a /48 or /56 would probably be more correct as they are the most common allocations by ISP's. In an typical network your clients/computers would all be in a single /64 which is on the same Layer 2 network where SLAAC, NDP, mDNS etc can be used. In companies you might create different segregation zones (DMZ, Clients, Internal servers etc.), but i would definitely expect more than a single device in a /64 subnet.

I'm not sure we have understanding when using the word subnet. You mention docker networks and it seems, based on your example, that you create multiple networks by

I'm curious - why can't the Websevrer & DB-server in your example be in the same network/subnet (/64) - i.e be in the default_bridge? Why need the custom subnet 1 & 2 ? If you use multiple/different /64 subnets you will have to get to Layer 3 (Routing) to communicate between them and thus the features you can only have on a L2 with /64 bit is lost anyways (mDNS i.e) How will you have different containers in the same /64 spawn multiple docker-hosts in that setup without dividing it into smaller "parts"? The way it is done in both Swarm & Kubernetes (all the "big" L3 CNI-providers like Calico, Cilium, Static-routing, Kube-router etc.) is that part of a /64-subnet is split up in sizes between /80 to /122 and allocated to each node and not bad practice in any way AFAIK. Of course this is Layer 3 network, but that is also the case when you use different /64's in your Docker-example setup. |

Or by using NDP Proxy:

Only if you also do dhcp-pd, or how would you do virtualization like VirtualBox/VMwarePlayer/... (ok, may be not needed in your business, depending on what your needs are). And having a /64 per host within your network is just how you can future proof yourself.

In the above example, I subnetted the prefix to gain separate networks that I could assign to the docker networks. To illustrate how one would use the prefix to create separate ipv6 networks for each docker network.

They might as well be within the same /64, but if you want to use auto scaling, having different networks can just be handy for administrative purposes and readability of logfiles. It was just a simple example of where you would use different docker networks. It might as well be two networks with your webserver and another one with workers, or you only have one with everything inside it. That is up to you how you design your application/network.

Up to your design.

RFC6275, but docker currently does not implement that. So you'll have to do it yourself.

Until now, but the IPv6 implementation in docker is everything, but not usable without pain. If you host the docker hosts yourself, I would currently recommend creating multiple vlans and using macvlan and it's ipvlan option so you can handle networking outside of docker. As this will also allow you to create networks without a IPv6 gateway to create non routed networks, that are only reachable from your docker nodes. And also to assign multiple IPv6 addresses to a single interface for redundant uplinks. |

|

Aargh, i'm getting totally insane by this damned IPv6 crap. Really, it is not intuitive! It is not just the replacing the dots with semicolons and digits for hex. It's far more complicated. Each time i try it i spend hours and ending up getting exactly nowhere. Sorry if i sound a bit agitated, that's not because of you answering (i'm honestly happy you do!) but just because it in my mind is just really overly and needlessly complex. I consider myself quite technological advanced. I'm a programmer, i know my stuff or know how to find the information to learn and understand it. IPv6 does not fall into that category. So, what do i get thus far?

Now here's the real trouble. I - again - tried a vultr instance with docker and IPv6 following this guide nearly to the letter: https://docs.docker.com/v17.09/engine/userguide/networking/default_network/ipv6/ It specifically talks about the case where you get a /64 block and need to subnet it further. It also gives me the impression that i can make it work without any external hackary (like tunnelbroker or asking for an even bigger ipv6 range from vultr). The section that makes me think that:

When following that exactly (and adjusting IP's to what i have) i just don't get it working. I might be doing the same thing wrong over and over. But if i do that, even after literally years (as i tried the same a couple years ago) then the problem likely is the documentation. Now that very documentation (https://docs.docker.com/v17.09/engine/userguide/networking/default_network/ipv6/) is very elaborate and probably works well for people who are deep into ipv6 that have gone through the insane pains of getting it working and now know how to interpret things written in there. It is not easy to follow if you just want to get it working and don't know the ins and outs of ipv6. I'm certainly not going to follow an ipv6 course just to be able to setup a docker container, i just keep using ipv4 if v6 requires that amount of knowledge. A very big improvement for that documentation page is to not solely use the reserved ipv6 address all over the place. It needs to explain what 2001:db8:1:: is (i know, it's a reserved example address). It needs to show real examples so that users get a better feeling of how ip's look. Right now everything is based on that example and my ip's just don't look like that. And because you can omit alot in ipv6 it doesn't make it clear to the reader and just seems like magic. So again, it needs to be easy to understand in bite-sized pieces of information. It doesn't need to explain the protocol in depth. It needs to explain it to get it working and provide enough information for the user to build upon. If you need hours and hours of time (days even) where you would setup an ipv4 container in mere minutes then the documentation is (very) wrong. Cloud hosting I'd recommend basing documentation on /64 where the host is in fact going to subnet further down. Bad practice or not. Therefore explain why you need an NDP proxy (that much is clear to me, even though i only get that i "need" it, not why ipv6 does not route below /64) and describe how to set it up in clear terms and with real world examples. |

Ok, may I'm just assuming to much. Have you ever done anything with routing? Do you know how routing tables work? Subnetting? Supernetting? CIDR? Within IPv4 you basically have two different concepts of how networking is understood to work by people. The first is one network internally and nat to public, it simply does not care what you do internally, as you're hidden. The other is within enterprise environments where also routing, bgp, spinning tree, ... come into play. With IPv6 this all got way simpler, as the first one can be dropped entirely. And that is also a problem for people that don't know how routing works.

No problem, I see that all the time, and as soon as people understand IPv6 they're like: As you're a programmer and not a devop/sysadmin/networkadmin, have you ever looked at WebRTC/P2P, NAT Holepunching, UPnP? If so, than you know the limitations of IPv4 and what crude and error proun workarounds and problems that causes. IPv6 fixes all of them.

You shouldn't, as routing internally works just the same. There is no in public it's like a and internally its like b. It's always a.

Not really, your provider needs to route whatever prefix you want to have to your router e.g. docker host. Instead of just dropping it out on the interface. A routing table of the router would look like:

That will happen automatically, if you don't want to do fancy stuff, your host will start to route every network it knows into every other network, after you set

The problem is not that you need a bigger prefix, the problem is how vultra routes you the /64. And according to best practice and RIR assignment policies, they should just provide a routable prefix either by assigning a larger prefix (which when I say implies that they add a route to your instance ip to there routing table) or by using dhcp-pd. They could also just provide you another /64 via a route to your instance, than you would be able to assign that prefix to your instance instead of the one you got on the wan side of your instance (like your home router gets at least a /64 (or /127) for its ISP facing side a /64 for your lan). Or what I've seen from other providers, they do this within there routing table: This is a crude hack, that the provider could do to allow you to subnet the network as you like. So the whole problem is how the network is routed at the handover between vultr and you.

That's exactly what we said above as another workaround.

As I also said above, Vultr, may just block NDP Proxies, as that is basically the same as an NDP Exhaustion Attack... You may try macvlan instead of a bridge, as @DennisGlindhart outlined above.

What does using another prefix change? That's like having a guide where 172.0.0.1 is the server instead of 10.0.0.1, can you explain your thought process about that? I've problems understanding why that should make any difference. Or is it because of the abbreviation with 0 instead of 0000, db8 instead of 0db8 and :: instead of multiple zero blocks?

That's what I tried above and in the linked doc change. But it is not that easy, if you come from two different angles. I started That's why I asked you to review my explanation of what you need to configure. And as you cannot tell me how I would have to implement a Interface for my specific use case, I cannot tell you how you need to design your network.

Hetzner profides you also an additional /48 for assignment behind or inside your host if you ask for it (at least for root server, that's all I used from them until now).

There are multiple reasons, why it is bad practice, and that is because it can cause problems, if one assumes, you're following RIR assignment policies, RFC standards, common practices, ... And without knowing that, you're also unable to debug these issues... Bytheway that's also the reason you got a networking/infrastructure department within organisations, because you as a developer than just request to have a docker with ipv6 for containers instead of having to think about how the whole infrastructure before your app should look like. P. S. Just to mention the one problem that could have the most impact after you've setup such a prefix and have your service up and running. Let's say your provider assigns you 16 ips in the case of digital ocean. The next 16 IPs are assigned to another customer. You host a mailserver/webserver that sends emails within your 16 ips. The other customer uses his 16 IPs to send spam emails. the whole /64 or bigger depending on what the provider specified as the "customer allocation size" within the RIR will be blacklisted. Therefore you'll be unable to send mails to anybody until the /64 is either removed from the blacklist again which takes time or you get another 16 IPs from a different /64 assigned, for which you've to change your whole crude setup. |

I've done subnetting and site-to-site connections where both sides use DHCP (that's more of a firewall issue though). I have tweaked routes before to isolate networks, but i admit i try to stay away from that. I know how the netmask works to scope your network in smaller chunks. I am at the level where i can easily setup networks, but when it comes to setting up VLAN's i have some trouble. I'd say that is more than the average tech guy, but less than someone who has actually went to network management courses.

I know P2P and UPnP. The latter tells the router to punch a hole :)

I doubt that, but then again i'm not getting it working so it might just be the case.

Yes, sure.

Hahaha, fair enough ;) (FYI, i started totally wrong in programming. Beginning from PHP (where everything is allowed) working up till C++ and staying there). I did look over it and my first impression was "this is a lot better!". But yeah, i will give a detailed review with inline comments later today or this week at the very latest.

I've seen that example a couple of times when looking for digitalocean indeed. Seems like /64 is the bare minimum a provider should assign :) Am i correct in assuming that if docker would have implemented RFC6275, this whole issue would not exist at all? Aka, docker would then work as easy as ipv4 currently does? If the answer to this is "yes", then i'm curious to know about any docker bugs tracking progress on that RFC. |

Not only that UPnP can do way more. For example it tells you your public IP or helps you discover printers. But only if the vendor implemented it correctly and nearly all programs or devices that claim to support UPnP fail at some point or another (ktorrent does not work with ubnt gateways for example)...

Most likely not before friday.

No worry, that should not be needed, as I have a few bugs on vultr.

Let me see if I can come up with some good graphic to illustrate that and also subnetting visually...

Networking is also not that hard ;-)

👍

no, they should assign multiple /64's to customers.

Yes and no, yes, because you as a user would not notice it. No, as it is just abstracted away from you and that problem just occurs under the hood. The container would have assigned a "technical" care off address from each wan links subnet and a home address, what you add to your dns and others use to connect to your server. But therefore you also need more than one /64 subnet (but it could be abstracted for single servers by using link local addresses instead of GUAs)... |

|

Hi @agowa338 and @DennisGlindhart ! Since the issue remains open and you both seem quite knowledgeable on the subject, any help clarifying how the subnet with IPv6 works would be greatly appreciated 😀 Docker specificNAT via

|

Hi,

I've literally spend days following all kind of shady guides on enabling ipv6 on docker and i just can't get it to work.

My hosting is at vultr (digitalocean will also work) so i can make a new instance any moment and try out whatever you folks are going to suggest ;) They support ipv6. Infact, i can ping the ipv6 instance itself so there isn't anything wrong on that side. Lets take Fedora (or centos) as example distributions.

The issue i have is understanding what is said here: https://docs.docker.com/v17.09/engine/userguide/networking/default_network/ipv6/ (or whatever the current page is)

That page - and literally a million others - talk about 2001:db8:1::/64. Where does that come from?

Other guides offer more information and show their ifconfig output. In my case that begins with: "2001:19f0:".... some of those other guides then also still use 2001:db8:1::/64 in their docker config while their own ipv6 ip doesn't even begin with that.

So, please, explain to me in clear short instructions which IPv6 should be filled in the fixed-cidr-v6 config value. Some increment it by 1, some don't. But none tell why they do what they do.

It makes absolutely no sense to me why i should fill in "2001:db8:1::/64" and how that is supposed to work if my own host starts with something different. And yes, i tried. Not working for me. But then again, i haven't gotten any IPv6 to work from within the container to the outside world or ping from the outside to my container.

Please, once we get this working, update the documentation to explain what is going on and why. ipv6 (even though it's here for years by now) is still something very difficult to wrap your head around for basically everybody who needs to use it.

Cheers,

Mark

The text was updated successfully, but these errors were encountered: