IPv6 address pool subnet smaller than /80 causes dockerd to consume all available RAM #40275

Comments

|

ping @selansen @arkodg PTAL - looks related to the comments on https://github.com/docker/libnetwork/pull/2058/files#r166658653 |

|

yes the issue is we are allocating too much space https://github.com/docker/libnetwork/blob/1680ce717394f8aa9ba6de26b851b7e02699d490/ipamutils/utils.go#L114 |

|

+1 as this happened to me and caused my server to crash. I tried to limit the allocated IPv6 space by using "default-address-pools" in daemon.json. Size 80 works fine, but 112 would not allow dockerd to start, and 96 crippled my server. It is very interesting to me that this happens with the default docker bridge, but you can easily achieve this (without crashing) with the IPAM driver (shown in docker-compose.yml): Workaround in place but hope it gets fixed soon. 😃 |

|

Is this still an issue? I just tried the below config: which starts docker without problems. I cannot actually get any ipv6 addresses auto-assigned to custom created networks, though, so maybe there's some other issue in my setup that prevents this issue from triggering.

Is this a typo? "a few GB of extra RAM" does not sound negligible to me. Or do you mean the total usage is a few GB with or without this address pool? |

It was not a typo. It means the increase due to the pool configuration was a few GB. The "negligible" may be debatable - a few GB may be a lot usually but compared to the increase of hundreds of GB for the smaller subnets it could be said to be negligible. All of it is obviously a bug, even a few GB of increase just because using a /81 subnet makes no sense. |

It sounds like we might be working in different types of environment, then. In my environment, servers have a couple of GB of RAM, maybe 8G if I'm lucky. Sounds like "just a few GB" might be acceptable in your environment, from where I'm standing, this would mean it's not even near usable at all. Anyway, let's not waste too much time on this, thanks for confirming in any case :-) |

|

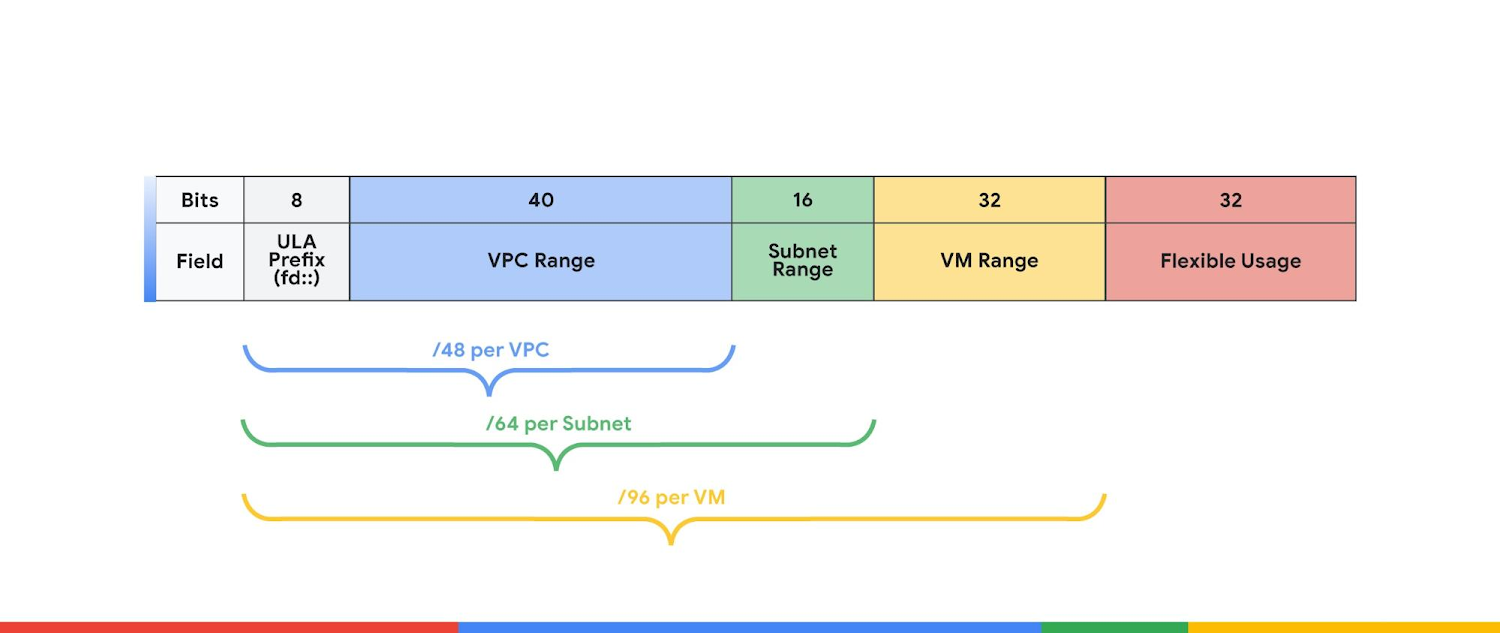

AWS is delegating a prefix up to a /80 per instance: https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ec2-prefix-eni.html#ec2-prefix-basics GCP is delegating a prefix of /96 per instance: https://cloud.google.com/compute/docs/ip-addresses/configure-ipv6-address#ipv6-assignment There are other cloud providers that are offering even smaller sizes for their prefix delegations. Docker should work with smaller allocations too. |

|

LOL. So there is a bug like this, and on the other hand there is Calico, which only allows IPv6 prefixes from /116 to /128: https://docs.projectcalico.org/networking/change-block-size I opened PR projectcalico/node#1337 to improve the situation on the Calico side, but what is the plan here? I think that at least /96, which GCP supports, should be supported by Docker too. |

This commit resolves moby#40275 by implementing a custom iterator named NetworkSplitter. It splits a set of NetworkToSplit into smaller subnets on demand when its Get method is called. Prior to this change, the list of NetworkToSplit was split into smaller subnets when ConfigLocalScopeDefaultNetworks or ConfigGlobalScopeDefaultNetworks was called, or when the ipamutils package was loaded. When one of the Config functions was called with an IPv6 network to split into small subnets, all the available memory was consumed. For instance, fd00::/8 split into /96 would take ~5*10^27 bytes. Although this change trades memory consumption for computation cost, the NetworkSplitter is used by the libnetwork/ipam package in such a way that it only has to compute the address of the next subnet. When NetworkSplitter reaches the end of NetworkToSplit, it is reset by libnetwork/ipam only if some subnets were released beforehand. In that case, the ipam package might iterate over all the subnets before finding an available one. This is the worst case, but I believe it's not really impactful: either many subnets exist (more than one host can handle), or few subnets exist and a full iteration over NetworkSplitter is fast. Signed-off-by: Albin Kerouanton <albinker@gmail.com>


|

Still happening with current docker 20.10.18 👎 |


A new Subnetter structure is added to lazily sub-divide an address pool into subnets. This fixes moby#40275. Prior to this change, the list of NetworkToSplit was eagerly split into smaller subnets when the ipamutils package was loaded, when ConfigGlobalScopeDefaultNetworks was called, or when the function SetDefaultIPAddressPool from the default IPAM driver was called. In the latter case, if the list of NetworkToSplit contained an IPv6 prefix, eagerly enumerating all subnets could eat all the available memory. For instance, fd00::/8 split into /96 would take ~5*10^27 bytes. Although this change trades memory consumption for computation cost, the Subnetter is used by the libnetwork/ipam package in such a way that it only has to compute the address of the next subnet. When the Subnetter reaches the end of NetworkToSplit, it is reset by libnetwork/ipam only if some subnets were released beforehand. In that case, the ipam package might iterate over all the subnets before finding an available one. Also, the Subnetter leverages the newly introduced ipbits package, which handles IPv6 addresses correctly. Before this commit, a bitwise shift overflowed uint64, so only a single subnet could be enumerated from an IPv6 prefix. This fixes moby#42801. Signed-off-by: Albin Kerouanton <albinker@gmail.com>


Can a link be provided? No mention in the current Docker IPv6 docs page EDIT: From old Docker docs authored Oct 2015:

All of that was stripped away in a Feb 2018 rewrite, but has been cached by various other sites which show up in search engine results for queries with Docker + IPv6. I am curious if that's still applicable or outdated information given this tidbit:

That would seem to potentially align with why a prefix of UPDATE: Looked into this further.

"default-address-pools": [
  { "base": "192.0.2.0/16", "size": 24 },
  { "base": "2001:db8:1:1f00::/64", "size": 96 }
],

An IPv6 address block with a That is much smaller than 4 billion |

What you've linked to is vendor docs for IP address assignment within a given CIDR prefix range, not the full CIDR block? Similar to a host/server with a single IPv4 public address, you can bind your containers ports to that address, but the containers may be using subnets of IPv4 private range addresses internally. You can likewise have a public IPv6 address and have containers use private range ULA addresses that you can subnet too. Or bind to the public IPv6 address(es) your server NIC has available. The problem described in this issue AFAIK is Docker is being given a large block ("base" in config) to divide into billions of subnets (due to "size" prefix reducing number of hosts per subnet).

I don't think that is the actual problem? This isn't my forte, but I'd assume you could provide a longer prefix length for "base" (less networks), or lower "size" value (larger IP range per network). I don't think the Docker docs explain the settings too well, but there are some decent articles on the subject that make up for that. Someone might want to correct me, but this might work: With IPv6, a subnet is not meant to be smaller than

And wikipedia on the address formats:

UPDATE: I might be mistaken? There is this Google blogpost about using ULA It's still a

If you use Docker on one of those VMs, then perhaps the networks it creates become "subnets" smaller than But these are different from the |

|

Just for reference, since some config examples shared in this discussion have a variety in IP addresses used.

Since the default

"default-address-pools": [
  {
    "base": "fd00:face:feed::/48",
    "size": 64
  }
]

Documentation is lacking a bit, and a few bugs that have been unresolved for years (undocumented) added to the confusion. I get the impression that the above snippet is how IPv6 subnet pools should be configured, but as detailed below is not practical due to bugs.

Behaviour (with gotchas)

This will kind of work. You can't use

"default-address-pools": [
  {
    "base": "172.17.0.0/12",
    "size": 16
  },
  {
    "base": "fd00:face:feed::/48",
    "size": 64
  }
]

With that resolved, if you create an IPv6 enabled network, it'll be assigned two subnets: Unless you enabled IPv6 on the default bridge ( AFAIK

Perhaps I misunderstood how

Could this be clarified?

Related issues

Found these during the above investigation write-up 😅

|

|

I am a plain old regular user (PORU?). I have read the current live docs and the threads linked above, and I am still utterly confused about the scenarios vs. the best practice. The docs IMO really need to be clear on:

For me i am stumped. I have a swarm. The questions I have are:

I see no reason i wouldn't want my containers globally routable like any other host on my network. I think the ip6tables is basically required if i want connectivity from the container to external i also set I assume ip6tables effectively NAT'd or firewalled the incoming packets OR that the host doesn't advertise routes so no client on my network can get ingress to the containers with IPv6? |

I've not had to look at the Docker documents in years, lucky me, but I assume they still only try to vaguely describe basic concepts like networking and omit all the technical details specific to Docker that us administrators would really need.

Swarm does not support IPv6? You should probably open a new issue as it has nothing to do with the issue described in the OP. |

I don't know, it was supposed to be a question, not a statement :-) I meant that the documentation needs to be clear, that's all, which is why I posted on the documentation thread. It seems the docker_gwbridge does get scope local but doesn't get assigned anything from the main bridge IPv6 range or the pool range (docker0 did). I am working through about 15 open tabs with different guidance ... at least I am now at a point where a docker run -it --rm container on the default bridge has similar levels of IPv4 and IPv6 connectivity... next up, what happens when I push a service to that node.... |

I did not phrase that well. I meant that a few years ago swarm did not really support IPv6, even if you might see references to it working with IPv6 in the fine documentation. And as recently as a few months ago I have seen comments by those "in the know" that swarm does not work -- or does not work "right" -- with IPv6. |

As mentioned in my reply above, I'm fairly certain the docs used to proudly and explicitly state that swarm supports IPv6 while it really did not. I would wager it's still like that in one way or another; if not explicitly stating that IPv6 works then at least implicitly. You might have better luck trawling the issues here, I'm sure there are some very lengthy comment threads on swarm & IPv6. |

|

Yeah, that was what I was doing. For example, even if I take swarm out of the equation, the example in the compose 3 reference only shows this. Who on earth wants to statically assign IPv6 addresses to anything but the most critical hosts (DNS servers; I mean, that's it, those are the only ones)? Routers should be discovered by RAs. Everything else should be dynamic. Also, what was the point of me setting a base pool if the ipam commands can't use it? Or maybe if I didn't put a v6 address it would pick from the pool? Who knows, the docs don't say, lol. Anyway, enough of my griping, I will go see what I can find with more searches. This IPv6 / docker task has been on my to-do list for ages, just my sort of holiday weekend research :-) |

I can't advise on swarm and my experience with GUA network had various gotchas I ran into that I didn't find time to document that better, but you may find these IPv6 with Docker docs I wrote helpful? It shows how to setup with Docker CLI or Docker Compose. The official Docker IPv6 docs were in worse shape until recently (May) when they received a big revision (I provided some review feedback). My unofficial docs might provide a helpful resource though 😅 You can definitely create an IPv6 network via the CLI and reference it via Here's a preview: If you're using IPv4 NAT (default), IPv6 ULA works well at providing IPv6 networking that works in the same way between containers and the default enabled IPv6 ULA benefit over IPv6 GUA:

|

|

From a comment on 43033, it seems that #46755 may provide a fix for this issue. Can anyone verify one way or another? |

Description

It is documented that IPv6 pool "should" be at least /80 so that MAC address can fit in the last 48 bits.

Using a default-address-pools size larger than 80 causes dockerd to consume too much RAM - the longer the prefix (smaller subnet), the more RAM dockerd will use:

The pool prefix length could be set longer than 80 through a typing error or other mistake, and if this leads to dockerd consuming copious amounts of RAM, the administrator may lose time troubleshooting the situation.

It looks like a prefix length such as /96 is totally unusable, and dockerd should refuse to start instead of allocating ridiculous amounts of RAM.

At minimum a warning message should be printed.

Steps to reproduce the issue:

Describe the results you received:

dockerd consumes very large amounts of RAM (tens of GB).

Describe the results you expected:

Either IPv6 pool prefix lengths longer than 80 should work, or dockerd should refuse to start with a configuration that cannot be used.

At minimum a warning message should be printed for prefix lengths longer than 80.
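As a rough illustration of the kind of start-up check requested here, the sketch below rejects a pool whose eager split would produce too many subnets. Everything in it is hypothetical: `checkPool` and the `maxSubnetBits` threshold are not part of dockerd, and the limit of 2^20 subnets is an arbitrary value chosen for the example. It keys on the difference between "base" and "size" rather than on the /80 boundary alone, since, as discussed in the comments, the number of subnets is what drives memory use.

```go
package main

import (
	"fmt"
	"net/netip"
)

// maxSubnetBits is a hypothetical limit: pools that would be split into
// more than 2^maxSubnetBits subnets are rejected.
const maxSubnetBits = 20

// checkPool validates one "default-address-pools" entry. (Hypothetical
// helper; dockerd does not expose a function with this name.)
func checkPool(base string, size int) error {
	p, err := netip.ParsePrefix(base)
	if err != nil {
		return fmt.Errorf("invalid base %q: %w", base, err)
	}
	if size < p.Bits() || size > p.Addr().BitLen() {
		return fmt.Errorf("size /%d is out of range for base %s", size, p)
	}
	if extra := size - p.Bits(); extra > maxSubnetBits {
		return fmt.Errorf("pool %s split into /%d yields 2^%d subnets (limit 2^%d)",
			p, size, extra, maxSubnetBits)
	}
	return nil
}

func main() {
	// Pool values taken from configurations quoted in this thread.
	fmt.Println(checkPool("fd00:face:feed::/48", 64))
	fmt.Println(checkPool("2001:db8:1:1f00::/64", 96))
}
```

Whether such a condition should be a hard error or only a warning is a policy choice that this sketch does not settle.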

The documentation does not mention the RAM usage effect either:

Additional information you deem important (e.g. issue happens only occasionally):

100% reproducible.

Output of docker version:

Output of docker info:

Additional environment details (AWS, VirtualBox, physical, etc.):
