New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Fix timeouts from very large raft messages #3122

Conversation

|

Fixes #3113 |

0c75844

to

0f2c605

Compare

|

Fixed a spelling issue. |

|

PTAL @thaJeztah |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

SGTM

perhaps our "context aficionado" @corhere wants to have a look as well?

|

CI failing again, but I think it's a different test now. Let me post that failure in case it was not (yet) known as a flaky |

|

|

|

Can we kick CI again on this one? |

|

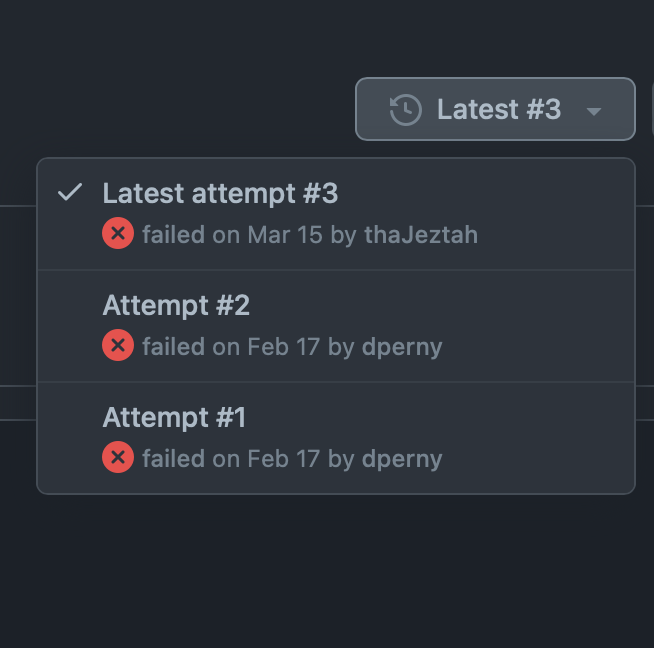

Yeah, I can try it again; it kept failing 😔 Let me give it another go |

0f2c605

to

ba3004f

Compare

|

This is still failing CI, but I believe the issue with this specific PR has been fixed by adding this watchdog timer thing. I need to spend some more time on the other issue, which I believe applies to all PRs right now. |

|

@dperny looks like CI is happy now, except for a typo; |

manager/state/raft/transport/peer.go

Outdated

| // We cannot just do a naked send to the bump channel. If we try to | ||

| // send, for example, and the timer has elapsed, then the context | ||

| // will have been canceled, the watchdog loop will have exited, and | ||

| // there would be no reciever. We'd block here forever. |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

s/reciever/receiver/ here

Rationale is in a comment explaining the two removed lines. Signed-off-by: Drew Erny <derny@mirantis.com>

ba3004f

to

c963e16

Compare

Codecov Report

@@ Coverage Diff @@

## master #3122 +/- ##

===========================================

+ Coverage 0 61.71% +61.71%

===========================================

Files 0 154 +154

Lines 0 31120 +31120

===========================================

+ Hits 0 19207 +19207

- Misses 0 10369 +10369

- Partials 0 1544 +1544 |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

LGTM

| for { | ||

| select { | ||

| case <-bump: | ||

| case <-time.After(p.tr.config.SendTimeout): |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

A new timer will be created on each turn of the loop, which won't be cleaned up until after the timer fires. Memory consumption would increase proportionally to the number of messages the snapshot data is split into, and won't be fully garbage-collectable until a nontrivial amount of time after the snapshot is fully transmitted. The memory usage could potentially become significant when there's a thundering herd of a hundred nodes joining a cluster.

Active timers created with timer.AfterFunc are allowed to be reset, and context.CancelFunc closures are idempotent, so a resettable watchdog timer which cancels a context on expiry and consumes O(1) memory can be implemented quite simply:

t := timer.AfterFunc(p.tr.config.SendTimeout, cancel)

defer t.Stop()

bump := func() { t.Reset(p.tr.config.SendTimeout) }There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

🙈 good catch (I always forget the caveats with these); @dperny can have a look?

Address moby#3122 (comment) by taking the recommendation to reduce resource churn. Signed-off-by: Bjorn Neergaard <bneergaard@mirantis.com>

Rationale is in a comment in the diff explaining the two removed lines.

fixes #3113