Commit

fix: reduce overly-eager connection reaping for slow connections (#3308)

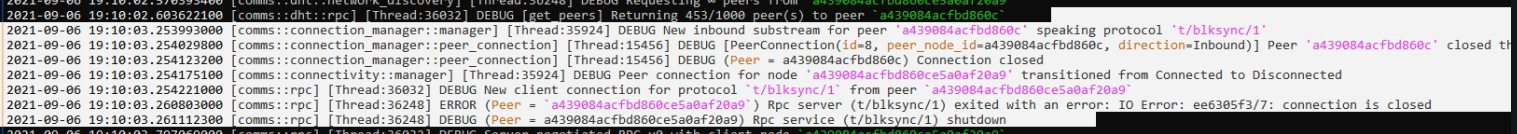

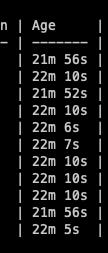

Description
---
Connection reaping has been improved for use with slower connections (slow network IO or slow thread scheduling):
- A connection is counted as used as soon as a request for a substream has been started.
- The minimum age of a connection before it can be reaped changed from 1 minute to 20 minutes.
- Reduces the pool refresh frequency from every 30s to every 60s.
- Sets `MissedTickBehavior::Delay` for the refresh interval, as bursting is undesired.
- Fixes the stress_test example.
- Fixes (rare) flakiness in the `dial_cancelled` comms test.

Motivation and Context
---
Connections should not be considered unused while they are attempting to establish a substream. This typically takes < 1 second over Tor; however, a slow network and/or thread starvation can cause substream establishment to take many seconds. Previously, the connection would be counted as unused for that period, so it could be reaped while a substream was still being established.

How Has This Been Tested?
---
Basic base node test
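The reaping rule described in this commit message can be illustrated with a minimal sketch. It is not the code from this commit: `Connection`, `SubstreamGuard`, `begin_open_substream`, `is_reapable`, and `MIN_CONNECTION_AGE` are hypothetical names, and the connection age is passed in directly rather than measured, so the behaviour stays deterministic. The point it shows is that the usage counter is incremented when the substream request starts, not when the substream is established.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::Arc;
use std::time::Duration;

/// Hypothetical constant: minimum age before a connection may be reaped
/// (20 minutes, per the description above).
const MIN_CONNECTION_AGE: Duration = Duration::from_secs(20 * 60);

/// Hypothetical stand-in for a peer connection handle.
struct Connection {
    /// Age is supplied by the caller here; real code would measure it.
    age: Duration,
    /// Number of substreams that are open or currently being requested.
    substream_count: Arc<AtomicUsize>,
}

/// Keeps the connection marked as "in use" until it is dropped.
struct SubstreamGuard(Arc<AtomicUsize>);

impl Drop for SubstreamGuard {
    fn drop(&mut self) {
        self.0.fetch_sub(1, Ordering::SeqCst);
    }
}

impl Connection {
    fn new(age: Duration) -> Self {
        Connection {
            age,
            substream_count: Arc::new(AtomicUsize::new(0)),
        }
    }

    /// Counts the connection as used as soon as the request begins,
    /// before the (possibly slow) substream negotiation completes.
    fn begin_open_substream(&self) -> SubstreamGuard {
        self.substream_count.fetch_add(1, Ordering::SeqCst);
        SubstreamGuard(Arc::clone(&self.substream_count))
    }

    /// A connection is reapable only if it is old enough and has no
    /// open or pending substreams.
    fn is_reapable(&self) -> bool {
        self.age >= MIN_CONNECTION_AGE
            && self.substream_count.load(Ordering::SeqCst) == 0
    }
}

fn main() {
    let conn = Connection::new(Duration::from_secs(30 * 60));
    println!("idle and old enough: reapable = {}", conn.is_reapable());

    let pending = conn.begin_open_substream();
    println!("substream pending: reapable = {}", conn.is_reapable());

    drop(pending);
    println!("substream closed: reapable = {}", conn.is_reapable());
}
```

Using a guard that decrements on drop means a request that fails or is cancelled partway through still releases its count, so the connection does not stay pinned indefinitely.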

Showing 9 changed files with 51 additions and 30 deletions.
