Reduced Performance in PowerShell 7.1? #14087

Try the .ForEach{} and .Where{} methods; they often seem to be faster than piping into Where-Object or ForEach-Object.
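Here is a minimal sketch of the comparison suggested above. It assumes an in-memory array of sample lines. The data, the `'7$'` pattern and the `.ToUpper()` transform are hypothetical stand-ins, not taken from the demo script. The sketch runs the same filter-and-transform twice: once through the pipeline and once through the intrinsic methods. `$lines.Where({ ... })` is the same call as `$lines.Where{ ... }`. Actual timings will vary by machine and PowerShell version.

```powershell
# Hypothetical sample data standing in for the lines of a real text file
$lines = 1..20000 | ForEach-Object { "line $_" }

# Pipeline version: each item is passed through Where-Object, then ForEach-Object
$sw = [System.Diagnostics.Stopwatch]::StartNew()
$viaPipeline = $lines |
    Where-Object { $_ -notmatch '7$' } |
    ForEach-Object { $_.ToUpper() }
$pipelineMs = $sw.ElapsedMilliseconds

# Intrinsic-method version: .Where() and .ForEach() iterate over the array directly
$sw.Restart()
$viaMethods = $lines.Where({ $_ -notmatch '7$' }).ForEach({ $_.ToUpper() })
$methodsMs = $sw.ElapsedMilliseconds

"Pipeline: $pipelineMs ms; methods: $methodsMs ms"
```

Both versions produce the same lines. Only the iteration mechanism differs, so any timing gap reflects pipeline overhead rather than the filter itself.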

@hl2guide We need a demo script and .txt files to investigate the issue. Could you please share more info?

@hl2guide If you can't share the source of the script, could you please tell us exactly which methods you use to match the criteria and to remove the line?

@adamsitnik I'll upload a demo file very soon.

Please find the demo script attached as a ZIP file. The demo script sorts and filters out lines in a text file (filter_blocklist.txt).

My PC info:

- CPU: Intel Core i5-4690 CPU @ 3.5GHz [quad core]

My results for the demo script (obtained from Windows Task Manager, with all other applications closed, just a single cmd.exe window):

- PowerShell 5.1 (powershell.exe): max 5.3 GB of RAM usage, time taken: 37.6663 minutes

@hl2guide Thanks for sharing the demo script and data! It is a great case. I was never able to get results from the original script on my old notebook. So I truncated the data file to 100000 lines and modified the script to measure two parts separately: (1) Get-Content and (2) the "Removing duplicate lines..." block. Get-Content works the same on all versions, but I wonder how many allocations it does and how slow it is on large files. We could investigate this as a separate issue and maybe request a new .NET API. The second block demonstrates the same regression from 7.1 Preview1 to Preview7:

Read file..

Read file time Taken: 2.651 seconds.

Removing duplicate lines, please wait..

Read file time Taken: 12.863 seconds.

Time Taken: 15.519 seconds.

Read file..

Read file time Taken: 1.865 seconds.

Removing duplicate lines, please wait..

Read file time Taken: 110.019 seconds.

Time Taken: 111.889 seconds.

Read file..

Read file time Taken: 2.126 seconds.

Removing duplicate lines, please wait..

Read file time Taken: 305.829 seconds.

Time Taken: 307.958 seconds.

This requires a deeper investigation.
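The phase-by-phase timing described above can be sketched roughly as follows. The temporary input file and the `Sort-Object -Unique` deduplication step are assumptions for illustration only. The demo script's actual duplicate-removal code is not shown in this thread.

```powershell
# Hypothetical input: a small sample file with duplicate lines in the temp folder
$path = Join-Path ([System.IO.Path]::GetTempPath()) 'dedupe_demo.txt'
1..1000 | ForEach-Object { "host$($_ % 100).example" } | Set-Content -Path $path

$total = [System.Diagnostics.Stopwatch]::StartNew()

# Phase 1: read the file
"Read file.."
$phase = [System.Diagnostics.Stopwatch]::StartNew()
$content = Get-Content -Path $path
"Read file time taken: {0:N3} seconds." -f $phase.Elapsed.TotalSeconds

# Phase 2: remove duplicate lines (hypothetical stand-in for the demo script's logic)
"Removing duplicate lines, please wait.."
$phase.Restart()
$unique = $content | Sort-Object -Unique
"Deduplication time taken: {0:N3} seconds." -f $phase.Elapsed.TotalSeconds

"Total time taken: {0:N3} seconds." -f $total.Elapsed.TotalSeconds

# Clean up the sample file
Remove-Item -Path $path
```

Timing each phase with its own stopwatch is what separates a slow read from a slow processing step. That is how the numbers above show Get-Content as stable while the second block regresses.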

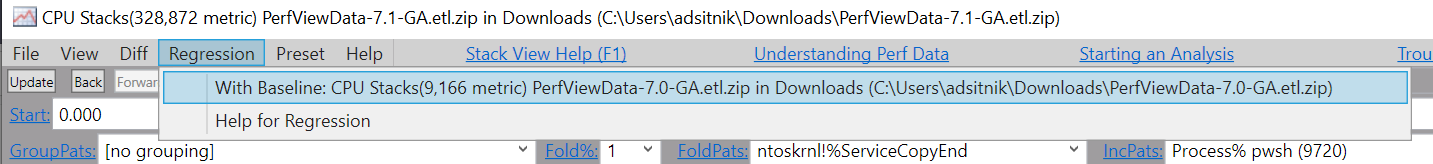

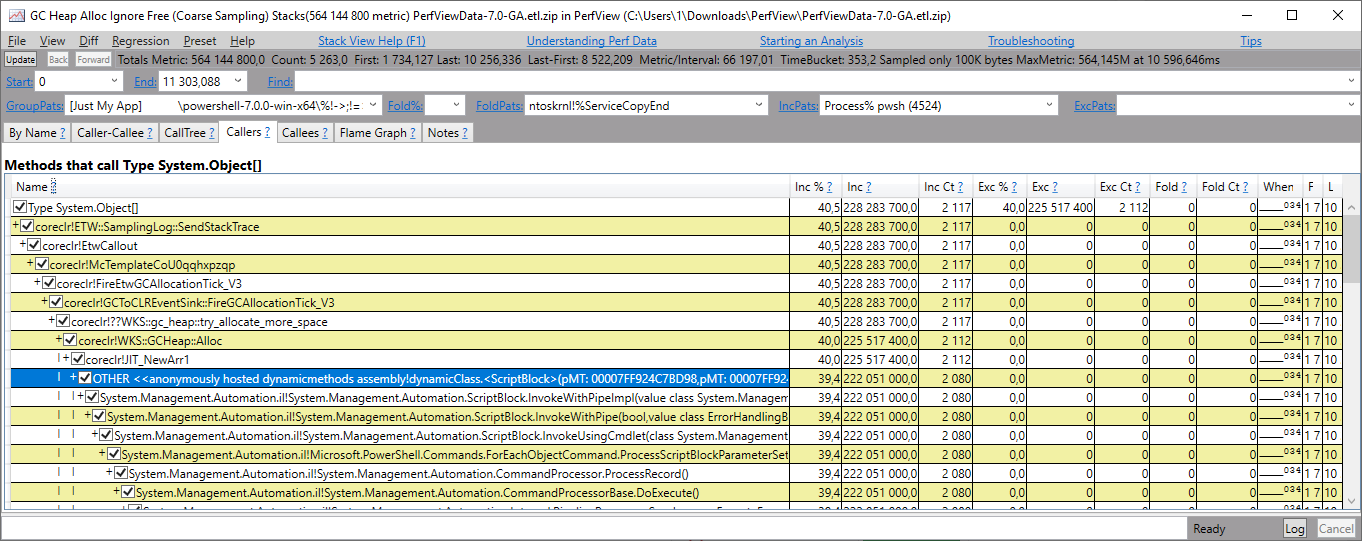

PerfView data files for 7.0 GA and 7.1 GA: https://ru.files.fm/u/nnz6vztgj

Looks like an issue with the GC. If I drill into the first line… Perhaps it was a change in Regex: it now allocates too many arrays.

@iSazonov Thanks for the profiling and analysis! @iSazonov Can you please open an issue in the dotnet/runtime repo? It looks to me like they should use…

@iSazonov Thanks for sharing the trace files! I've used PerfView to compare both trace files, and it looks like the regression is caused by…

Overweight report for symbols common between both files

In this report, overweight is the ratio of actual growth compared to 3488.0%.

@stephentoub For the PerfView results, I used a data file with 100000 lines, and the test script calls 54 replace operators per line. Each line is shorter than 70 characters.
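For a sense of scale, 100,000 lines × 54 replace operations comes to 5.4 million regex replacements per run. The sketch below assumes these are PowerShell `-replace` operators. It shows the general shape of applying a list of rules to every line. The three rules and the `Convert-Line` function are hypothetical, not the ones from the test script.

```powershell
# Hypothetical pattern/replacement pairs (the test script used 54 of them):
# strip a trailing comment, trim whitespace, drop a hosts-file style prefix
$rules = @(
    @('#.*$', ''),
    @('^\s+|\s+$', ''),
    @('^0\.0\.0\.0\s+', '')
)

# Apply every rule to one line, in order; each -replace is a .NET regex replacement
function Convert-Line([string]$Line) {
    foreach ($rule in $rules) {
        $Line = $Line -replace $rule[0], $rule[1]
    }
    return $Line
}

$sample = @('0.0.0.0 ads.example.com  # tracker', '  example.org')
$converted = $sample | ForEach-Object { Convert-Line $_ }
```

Because every rule runs on every line, any per-call allocation in the regex engine is multiplied by the rule count and the line count. That is consistent with the allocation-heavy traces discussed in this thread.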

@daxian-dbw Please help me understand this: we allocate a lot of System.Object[]. Does this come from creating a script block? If so, I wonder why our script block cache does not work.

Closing the issue, as @iSazonov has confirmed that it has been fixed: dotnet/runtime#44808 (comment)

@iSazonov The call stack from your screenshot suggests that the arrays are allocated from the generated code when running lines 1200 to 1203 of PowerShell/src/System.Management.Automation/engine/runtime/CompiledScriptBlock.cs (commit e0cda04).

I haven't looked at #13673 closely yet (will do so), but I don't think the allocation in the screenshot is the result of creating script blocks.

A script of mine that reliably took 26 or 27 minutes to run in 7.0 now does not complete within 2 hours in 7.1.

The script uses ForEach-Object and Where-Object on many lines of text to remove lines matching certain criteria from an 85 MB text file.

Maybe a performance bottleneck in 7.1?

Repro script and profiling traces (updated by @daxian-dbw)
