forked from alteryx/spark


AE_1 #3

Open

markhamstra wants to merge 2,462 commits into markhamstra:AE from carsonwang:AE_1


## What changes were proposed in this pull request?

In `DDLSuite`, four test cases contain the same code. This PR moves that code into a shared function.

## How was this patch tested?

Existing tests.

Closes apache#23194 from CarolinePeng/Update_temp.

Authored-by: 彭灿00244106 <00244106@zte.intra>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

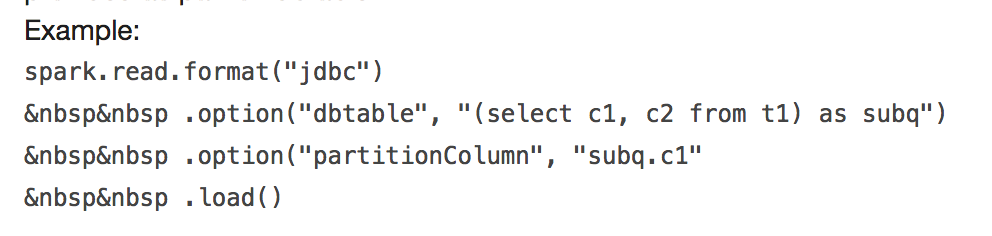

## What changes were proposed in this pull request?

The example currently throws:

```
requirement failed: When reading JDBC data sources, users need to specify all or none for the following options: 'partitionColumn', 'lowerBound', 'upperBound', and 'numPartitions'
```

and

```
User-defined partition column subq.c1 not found in the JDBC relation ...
```

This PR fixes the broken example.

## How was this patch tested?

Manual tests.

Closes apache#23170 from wangyum/SPARK-24499.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>
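The first error message above describes an all-or-none rule for the four JDBC partitioning options. A minimal Python sketch of such a check follows; the function name `check_partition_options` and the plain-dict input are assumptions made for illustration, and this is not Spark's actual validation code.

```python
# Option names are taken from the error message quoted above.
PARTITION_OPTIONS = ("partitionColumn", "lowerBound", "upperBound", "numPartitions")


def check_partition_options(options):
    """Return True if partitioned reading is requested, False if not.

    Raises ValueError when only some of the four options are present.
    (Hypothetical helper, not part of Spark.)
    """
    present = [name for name in PARTITION_OPTIONS if name in options]
    if present and len(present) != len(PARTITION_OPTIONS):
        missing = [name for name in PARTITION_OPTIONS if name not in options]
        raise ValueError(
            "specify all or none of %s; missing: %s"
            % (", ".join(PARTITION_OPTIONS), ", ".join(missing))
        )
    return bool(present)
```

Calling it with only `partitionColumn` set raises, while an empty dict or a dict with all four options passes.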

… from CSV

## What changes were proposed in this pull request?

In the PR, I propose to use the **java.time API** for parsing timestamps and dates from CSV content with microsecond precision. The SQL config `spark.sql.legacy.timeParser.enabled` allows switching back to the previous behaviour of using `java.text.SimpleDateFormat`/`FastDateFormat` for parsing/generating timestamps/dates.

## How was this patch tested?

It was tested by `UnivocityParserSuite`, `CsvExpressionsSuite`, `CsvFunctionsSuite` and `CsvSuite`.

Closes apache#23150 from MaxGekk/time-parser.

Lead-authored-by: Maxim Gekk <max.gekk@gmail.com>

Co-authored-by: Maxim Gekk <maxim.gekk@databricks.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

When we encode a Decimal from an external source, we don't check for overflow. That check is useful not only to enforce that we can represent the correct value in the specified range, but also because it changes the underlying data to the right precision/scale. Since our code generation assumes that a decimal has exactly the same precision and scale as its data type, failing to enforce this can lead to corrupted output/results when there are subsequent transformations.

## How was this patch tested?

Added UT.

Closes apache#23210 from mgaido91/SPARK-26233.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>
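To illustrate what enforcing precision and scale means here, the following Python sketch rescales a value to a fixed scale and reports overflow when the result needs more digits than the declared precision. It uses Python's standard `decimal` module as a stand-in; the helper name `to_precision` and the choice of half-up rounding are assumptions, not Spark's implementation.

```python
from decimal import Decimal, ROUND_HALF_UP


def to_precision(value, precision, scale):
    """Rescale `value` to exactly `scale` fractional digits.

    Returns None when the rescaled value needs more than `precision`
    total digits (overflow). Hypothetical helper for illustration only.
    """
    # Decimal(1).scaleb(-2) is Decimal('0.01'), the quantum for scale 2.
    quantum = Decimal(1).scaleb(-scale)
    rescaled = value.quantize(quantum, rounding=ROUND_HALF_UP)
    if len(rescaled.as_tuple().digits) > precision:
        return None
    return rescaled
```

For a type with precision 5 and scale 2, `Decimal("1")` becomes `Decimal("1.00")`, so the stored data matches the declared type, while `Decimal("1234.5")` overflows.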

…cessful tasks' metrics

## What changes were proposed in this pull request?

The task summary table in the stage page currently displays the summary of all the tasks. However, we should display the task summary of only successful tasks, to follow the behavior of previous versions of Spark.

## How was this patch tested?

Added UT. Attached screenshot (before patch).

Closes apache#23088 from shahidki31/summaryMetrics.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request? We explicitly avoid files with hdfs erasure coding for the streaming WAL and for event logs, as hdfs EC does not support all relevant apis. However, the new builder api used has different semantics -- it does not create parent dirs, and it does not resolve relative paths. This updates createNonEcFile to have similar semantics to the old api. ## How was this patch tested? Ran tests with the WAL pointed at a non-existent dir, which failed before this change. Manually tested the new function with a relative path as well. Unit tests via jenkins. Closes apache#23092 from squito/SPARK-26094. Authored-by: Imran Rashid <irashid@cloudera.com> Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>
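The two semantics mentioned above (creating parent directories and resolving relative paths before creating the file) can be sketched on a local filesystem as follows. This is a Python analogy using `pathlib`, not the Hadoop builder API; the function name and the `base_dir` parameter are assumptions made for illustration.

```python
from pathlib import Path


def create_file_with_parents(path, base_dir):
    """Create `path` for writing, mimicking the semantics described above.

    Relative paths are resolved against `base_dir`, and missing parent
    directories are created first. (Hypothetical local-filesystem helper.)
    """
    target = Path(path)
    if not target.is_absolute():
        target = Path(base_dir) / target
    # Create any missing parent directories before opening the file.
    target.parent.mkdir(parents=True, exist_ok=True)
    return open(target, "wb")
```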

…perly the limit size

## What changes were proposed in this pull request?

This PR follows up on the [comment](apache#23124 (comment)) in the main PR and aims at:

- simplifying the code for `MapConcat`;
- being more precise in checking the limit size.

## How was this patch tested?

Existing tests.

Closes apache#23217 from mgaido91/SPARK-25829_followup.

Authored-by: Marco Gaido <marcogaido91@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

…ctests in python/run-tests script

## What changes were proposed in this pull request?

This PR proposes adding a developer option, `--testnames`, to our testing script to allow running a specific set of unittests and doctests.

**1. Run unittests in the class**

```bash

./run-tests --testnames 'pyspark.sql.tests.test_arrow ArrowTests'

```

```

Running PySpark tests. Output is in /.../spark/python/unit-tests.log

Will test against the following Python executables: ['python2.7', 'pypy']

Will test the following Python tests: ['pyspark.sql.tests.test_arrow ArrowTests']

Starting test(python2.7): pyspark.sql.tests.test_arrow ArrowTests

Starting test(pypy): pyspark.sql.tests.test_arrow ArrowTests

Finished test(python2.7): pyspark.sql.tests.test_arrow ArrowTests (14s)

Finished test(pypy): pyspark.sql.tests.test_arrow ArrowTests (14s) ... 22 tests were skipped

Tests passed in 14 seconds

Skipped tests in pyspark.sql.tests.test_arrow ArrowTests with pypy:

test_createDataFrame_column_name_encoding (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.19.2 must be installed; however, it was not found.'

test_createDataFrame_does_not_modify_input (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.19.2 must be installed; however, it was not found.'

test_createDataFrame_fallback_disabled (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.19.2 must be installed; however, it was not found.'

test_createDataFrame_fallback_enabled (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped

...

```

**2. Run single unittest in the class.**

```bash

./run-tests --testnames 'pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion'

```

```

Running PySpark tests. Output is in /.../spark/python/unit-tests.log

Will test against the following Python executables: ['python2.7', 'pypy']

Will test the following Python tests: ['pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion']

Starting test(pypy): pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion

Starting test(python2.7): pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion

Finished test(pypy): pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion (0s) ... 1 tests were skipped

Finished test(python2.7): pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion (8s)

Tests passed in 8 seconds

Skipped tests in pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion with pypy:

test_null_conversion (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.19.2 must be installed; however, it was not found.'

```

**3. Run doctests in single PySpark module.**

```bash

./run-tests --testnames pyspark.sql.dataframe

```

```

Running PySpark tests. Output is in /.../spark/python/unit-tests.log

Will test against the following Python executables: ['python2.7', 'pypy']

Will test the following Python tests: ['pyspark.sql.dataframe']

Starting test(pypy): pyspark.sql.dataframe

Starting test(python2.7): pyspark.sql.dataframe

Finished test(python2.7): pyspark.sql.dataframe (47s)

Finished test(pypy): pyspark.sql.dataframe (48s)

Tests passed in 48 seconds

```

Of course, you can mix them:

```bash

./run-tests --testnames 'pyspark.sql.tests.test_arrow ArrowTests,pyspark.sql.dataframe'

```

```

Running PySpark tests. Output is in /.../spark/python/unit-tests.log

Will test against the following Python executables: ['python2.7', 'pypy']

Will test the following Python tests: ['pyspark.sql.tests.test_arrow ArrowTests', 'pyspark.sql.dataframe']

Starting test(pypy): pyspark.sql.dataframe

Starting test(pypy): pyspark.sql.tests.test_arrow ArrowTests

Starting test(python2.7): pyspark.sql.dataframe

Starting test(python2.7): pyspark.sql.tests.test_arrow ArrowTests

Finished test(pypy): pyspark.sql.tests.test_arrow ArrowTests (0s) ... 22 tests were skipped

Finished test(python2.7): pyspark.sql.tests.test_arrow ArrowTests (18s)

Finished test(python2.7): pyspark.sql.dataframe (50s)

Finished test(pypy): pyspark.sql.dataframe (52s)

Tests passed in 52 seconds

Skipped tests in pyspark.sql.tests.test_arrow ArrowTests with pypy:

test_createDataFrame_column_name_encoding (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.19.2 must be installed; however, it was not found.'

test_createDataFrame_does_not_modify_input (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.19.2 must be installed; however, it was not found.'

test_createDataFrame_fallback_disabled (pyspark.sql.tests.test_arrow.ArrowTests) ... skipped 'Pandas >= 0.19.2 must be installed; however, it was not found.'

```

You can also use all other options (except `--modules`, which will be ignored):

```bash

./run-tests --testnames 'pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion' --python-executables=python

```

```

Running PySpark tests. Output is in /.../spark/python/unit-tests.log

Will test against the following Python executables: ['python']

Will test the following Python tests: ['pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion']

Starting test(python): pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion

Finished test(python): pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion (12s)

Tests passed in 12 seconds

```

See help below:

```bash

./run-tests --help

```

```

Usage: run-tests [options]

Options:

...

Developer Options:

--testnames=TESTNAMES

A comma-separated list of specific modules, classes

and functions of doctest or unittest to test. For

example, 'pyspark.sql.foo' to run the module as

unittests or doctests, 'pyspark.sql.tests FooTests' to

run the specific class of unittests,

'pyspark.sql.tests FooTests.test_foo' to run the

specific unittest in the class. '--modules' option is

ignored if they are given.

```

I intentionally grouped it as a developer option to be more conservative.
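The `--testnames` value format described in the help text (comma-separated entries, each a module optionally followed by a class and a method) can be split as in the sketch below. The function `parse_testnames` is a hypothetical helper written for illustration; it is not the code in `python/run-tests`.

```python
def parse_testnames(value):
    """Split a --testnames value into (module, class_name, method_name) tuples.

    Missing parts are returned as None. (Hypothetical helper.)
    """
    parsed = []
    for entry in value.split(","):
        parts = entry.strip().split()
        if not parts:
            continue  # skip empty entries such as a trailing comma
        module = parts[0]
        class_name = method_name = None
        if len(parts) > 1:
            # 'ArrowTests.test_null_conversion' -> class and method
            class_name, _, method = parts[1].partition(".")
            method_name = method or None
        parsed.append((module, class_name, method_name))
    return parsed
```

For example, `'pyspark.sql.tests.test_arrow ArrowTests,pyspark.sql.dataframe'` yields one entry with a class name and one module-only entry.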

## How was this patch tested?

Manually tested. Negative tests were also done.

```bash

./run-tests --testnames 'pyspark.sql.tests.test_arrow ArrowTests.test_null_conversion1' --python-executables=python

```

```

...

AttributeError: type object 'ArrowTests' has no attribute 'test_null_conversion1'

...

```

```bash

./run-tests --testnames 'pyspark.sql.tests.test_arrow ArrowT' --python-executables=python

```

```

...

AttributeError: 'module' object has no attribute 'ArrowT'

...

```

```bash

./run-tests --testnames 'pyspark.sql.tests.test_ar' --python-executables=python

```

```

...

/.../python2.7: No module named pyspark.sql.tests.test_ar

```

Closes apache#23203 from HyukjinKwon/SPARK-26252.

Authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request? This fixes doc of renamed OneHotEncoder in PySpark. ## How was this patch tested? N/A Closes apache#23230 from viirya/remove_one_hot_encoder_followup. Authored-by: Liang-Chi Hsieh <viirya@gmail.com> Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

This code was introduced in PR apache#11190 but has not been used since PR apache#14548. This PR continues the cleanup.

## How was this patch tested?

N/A

Closes apache#23227 from heary-cao/unuseSparkPlan.

Authored-by: caoxuewen <cao.xuewen@zte.com.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request? Updated SQL migration guide according to changes in apache#23120 Closes apache#23235 from MaxGekk/failuresafe-partial-result-followup. Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com> Co-authored-by: Maxim Gekk <max.gekk@gmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com>

…essionWithSGDTests.test_training_and_prediction test

## What changes were proposed in this pull request?

This test looks flaky:

https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/99704/console
https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/99569/console
https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/99644/console
https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/99548/console
https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/99454/console
https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/99609/console

```
======================================================================
FAIL: test_training_and_prediction (pyspark.mllib.tests.test_streaming_algorithms.StreamingLogisticRegressionWithSGDTests)
Test that the model improves on toy data with no. of batches
----------------------------------------------------------------------
Traceback (most recent call last):
  File "/home/jenkins/workspace/SparkPullRequestBuilder/python/pyspark/mllib/tests/test_streaming_algorithms.py", line 367, in test_training_and_prediction
    self._eventually(condition)
  File "/home/jenkins/workspace/SparkPullRequestBuilder/python/pyspark/mllib/tests/test_streaming_algorithms.py", line 78, in _eventually
    % (timeout, lastValue))
AssertionError: Test failed due to timeout after 30 sec, with last condition returning: Latest errors: 0.67, 0.71, 0.78, 0.7, 0.75, 0.74, 0.73, 0.69, 0.62, 0.71, 0.69, 0.75, 0.72, 0.77, 0.71, 0.74

----------------------------------------------------------------------
Ran 13 tests in 185.051s

FAILED (failures=1, skipped=1)
```

This appears to have started after the parallelism in Jenkins was increased to speed up the build in apache#23111. I am able to reproduce it manually when resource usage is heavy (with a manually decreased timeout).

## How was this patch tested?

Manually tested by:

```
cd python
./run-tests --testnames 'pyspark.mllib.tests.test_streaming_algorithms StreamingLogisticRegressionWithSGDTests.test_training_and_prediction' --python-executables=python
```

Closes apache#23236 from HyukjinKwon/SPARK-26275.

Authored-by: Hyukjin Kwon <gurwls223@apache.org>

Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>
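The traceback above fails inside a helper named `_eventually`, which waits for a condition with a timeout. A generic polling helper of that kind might look like the sketch below; this is an assumption about the general pattern, not the code in `test_streaming_algorithms.py`, and the `interval` parameter is invented for illustration.

```python
import time


def eventually(condition, timeout=30.0, interval=0.01):
    """Poll `condition` until it returns True or `timeout` seconds elapse.

    Any other return value is kept and reported if the timeout is hit.
    (Hypothetical sketch of a polling test helper.)
    """
    deadline = time.monotonic() + timeout
    last_value = None
    while time.monotonic() < deadline:
        last_value = condition()
        if last_value is True:
            return
        time.sleep(interval)
    raise AssertionError(
        "Test failed due to timeout after %g sec, with last condition returning: %s"
        % (timeout, last_value)
    )
```

With a helper like this, a heavily loaded machine makes the condition take longer to become true, so a fixed timeout can fail intermittently, which matches the flakiness described above.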

…ord batches to improve performance

## What changes were proposed in this pull request?

When executing `toPandas` with Arrow enabled, partitions that arrive in the JVM out-of-order must be buffered before they can be sent to Python. This causes excess memory use in the driver JVM and increases the time to complete, because data must sit in the JVM waiting for preceding partitions to come in.

This change sends un-ordered partitions to Python as soon as they arrive in the JVM, followed by a list of partition indices so that Python can assemble the data in the correct order. This way, data is not buffered in the JVM and there is no waiting on particular partitions, so performance is improved.

Followup to apache#21546

## How was this patch tested?

Added a new test with a large number of batches per partition, and a test that forces a small delay in the first partition. These test that partitions are collected out-of-order and then put in the correct order in Python.

## Performance Tests - toPandas

Tests were run on a 4 node standalone cluster with 32 cores total, 14.04.1-Ubuntu and OpenJDK 8. They measured wall clock time to execute `toPandas()` and took the average best time of 5 runs/5 loops each.

Test code

```python
import time
from pyspark.sql.functions import rand

# `spark` is an existing SparkSession.
df = spark.range(1 << 25, numPartitions=32).toDF("id").withColumn("x1", rand()).withColumn("x2", rand()).withColumn("x3", rand()).withColumn("x4", rand())
for i in range(5):
    start = time.time()
    _ = df.toPandas()
    elapsed = time.time() - start
```

Spark config

```
spark.driver.memory 5g
spark.executor.memory 5g
spark.driver.maxResultSize 2g
spark.sql.execution.arrow.enabled true
```

Current Master w/ Arrow stream | This PR
---------------------|------------
5.16207 | 4.342533
5.133671 | 4.399408
5.147513 | 4.468471
5.105243 | 4.36524
5.018685 | 4.373791

Avg Master | Avg This PR
------------------|--------------
5.1134364 | 4.3898886

Speedup of **1.164821449**

Closes apache#22275 from BryanCutler/arrow-toPandas-oo-batches-SPARK-25274.

Authored-by: Bryan Cutler <cutlerb@gmail.com>

Signed-off-by: Bryan Cutler <cutlerb@gmail.com>
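The reassembly step described in this commit (data arrives in arbitrary order, followed by a list of partition indices) can be sketched in Python as below. For simplicity the sketch assumes exactly one batch per partition and plain Python objects instead of Arrow record batches; `reorder_batches` is a hypothetical name, not a PySpark function.

```python
def reorder_batches(arrived_batches, partition_indices):
    """Put batches back into partition order.

    `arrived_batches[k]` is the batch that arrived k-th, and
    `partition_indices[k]` is the partition it belongs to.
    Assumes one batch per partition (a simplification).
    """
    ordered = [None] * len(arrived_batches)
    for batch, partition in zip(arrived_batches, partition_indices):
        ordered[partition] = batch
    return ordered
```

Because the indices are sent only after all data has arrived, the sender never has to hold back a batch while waiting for an earlier partition.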

## What changes were proposed in this pull request?

Kafka delegation token support was implemented in [PR#22598](apache#22598), but that PR did not include documentation because of rapid changes. Now that it has been merged, this PR documents it.

## How was this patch tested?

jekyll build + manual html check

Closes apache#23195 from gaborgsomogyi/SPARK-26236.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

This change modifies the logic in the SecurityManager to do two things:

- generate unique app secrets also when k8s is being used
- only store the secret in the user's UGI on YARN

The latter is needed so that k8s won't unnecessarily create k8s secrets for the UGI credentials when only the auth token is stored there.

On the k8s side, the secret is propagated to executors using an environment variable instead. This ensures it works in both client and cluster mode.

Security doc was updated to mention the feature and clarify that proper access control in k8s should be enabled for it to be secure.

Author: Marcelo Vanzin <vanzin@cloudera.com>

Closes apache#23174 from vanzin/SPARK-26194.

…ytesMap

## What changes were proposed in this pull request?

`enablePerfMetrics` was originally designed in `BytesToBytesMap` to control `getNumHashCollisions`, `getTimeSpentResizingNs` and `getAverageProbesPerLookup`.

However, as Spark has evolved, this parameter is now used only for `getAverageProbesPerLookup` and is always set to true when `BytesToBytesMap` is used. It is also dangerous for `getAverageProbesPerLookup` to throw an `IllegalStateException` depending on whether the flag is enabled.

So this PR removes the `enablePerfMetrics` parameter from `BytesToBytesMap`.

## How was this patch tested?

The existing test cases.

Closes apache#23244 from heary-cao/enablePerfMetrics.

Authored-by: caoxuewen <cao.xuewen@zte.com.cn>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

Currently, if the user provides a data schema, partition column values are converted according to it. But if the conversion fails, e.g. converting a string to an int, the column value is null.

This PR proposes to throw an exception in such cases, instead of silently converting to a null value:

1. These null partition column values don't make sense to users in most cases. It is better to show the conversion failure, so that users can adjust the schema or ETL jobs to fix it.

2. Such conversion failures always raise exceptions for non-partition data columns. Partition columns should have the same behavior.

We can reproduce the case above as follows:

```

/tmp/testDir

├── p=bar

└── p=foo

```

If we run:

```

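import org.apache.spark.sql.types.{IntegerType, StructField, StructType}

// Declare the partition column `p` as a non-nullable integer.
val schema = StructType(Seq(StructField("p", IntegerType, false)))

spark.read.schema(schema).csv("/tmp/testDir/").show()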

```

We will get:

```

+----+

| p|

+----+

|null|

|null|

+----+

```

## How was this patch tested?

Unit test

Closes apache#23215 from gengliangwang/SPARK-26263.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request?

This PR aims to upgrade the Janino compiler to the latest version, 3.0.11. The following changes are listed in the [release note](http://janino-compiler.github.io/janino/changelog.html):

- Script with many "helper" variables.
- Java 9+ compatibility
- Compilation Error Messages Generated by JDK.
- Added experimental support for the "StackMapFrame" attribute; not active yet.
- Make Unparser more flexible.
- Fixed NPEs in various "toString()" methods.
- Optimize static method invocation with rvalue target expression.
- Added all missing "ClassFile.getConstant*Info()" methods, removing the necessity for many type casts.

## How was this patch tested?

Pass the Jenkins with the existing tests.

Closes apache#23250 from dongjoon-hyun/SPARK-26298.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request? This is a follow-up of apache#23031 to rename the config name to `spark.sql.legacy.setCommandRejectsSparkCoreConfs`. ## How was this patch tested? Existing tests. Closes apache#23245 from ueshin/issues/SPARK-26060/rename_config. Authored-by: Takuya UESHIN <ueshin@databricks.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

Adds a new method to SparkAppHandle called getError which returns the exception (if present) that caused the underlying Spark app to fail. New tests added to SparkLauncherSuite for the new method. Closes apache#21849 Closes apache#23221 from vanzin/SPARK-24243. Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request?

Delete an unnecessary `if` statement. The earlier check already returns when `inMemSorter` is null or its number of records is less than or equal to zero, so the later check is reached only when there are records and its condition is always true.

```
...
if (inMemSorter == null || inMemSorter.numRecords() <= 0) {
  return 0L;
}
...
if (inMemSorter.numRecords() > 0) {
  ...
}
```

## How was this patch tested?

Existing tests


Closes apache#23247 from wangjiaochun/inMemSorter.

Authored-by: 10087686 <wang.jiaochun@zte.com.cn>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request?

Parquet files appear to carry nullability info when they are written, but not when they are read back: every column is reported as nullable.

## How was this patch tested?

Some test code: (running spark 2.3, but the relevant code in DataSource looks identical on master)

```
case class NullTest(bo: Boolean, opbol: Option[Boolean])
val testDf = spark.createDataFrame(Seq(NullTest(true, Some(false))))

defined class NullTest
testDf: org.apache.spark.sql.DataFrame = [bo: boolean, opbol: boolean]

testDf.write.parquet("s3://asana-stats/tmp_dima/parquet_check_schema")
spark.read.parquet("s3://asana-stats/tmp_dima/parquet_check_schema/part-00000-b1bf4a19-d9fe-4ece-a2b4-9bbceb490857-c000.snappy.parquet4").printSchema()

root
 |-- bo: boolean (nullable = true)
 |-- opbol: boolean (nullable = true)
```

Meanwhile, the parquet file formed does have nullable info:

```
[]batchprod-report000:/tmp/dimakamalov-batch$ aws s3 ls s3://asana-stats/tmp_dima/parquet_check_schema/
2018-10-17 21:03:52 0 _SUCCESS
2018-10-17 21:03:50 504 part-00000-b1bf4a19-d9fe-4ece-a2b4-9bbceb490857-c000.snappy.parquet

[]batchprod-report000:/tmp/dimakamalov-batch$ aws s3 cp s3://asana-stats/tmp_dima/parquet_check_schema/part-00000-b1bf4a19-d9fe-4ece-a2b4-9bbceb490857-c000.snappy.parquet .
download: s3://asana-stats/tmp_dima/parquet_check_schema/part-00000-b1bf4a19-d9fe-4ece-a2b4-9bbceb490857-c000.snappy.parquet to ./part-00000-b1bf4a19-d9fe-4ece-a2b4-9bbceb490857-c000.snappy.parquet

[]batchprod-report000:/tmp/dimakamalov-batch$ java -jar parquet-tools-1.8.2.jar schema part-00000-b1bf4a19-d9fe-4ece-a2b4-9bbceb490857-c000.snappy.parquet
message spark_schema {
  required boolean bo;
  optional boolean opbol;
}
```

Closes apache#22759 from dima-asana/dima-asana-nullable-parquet-doc.

Authored-by: dima-asana <42555784+dima-asana@users.noreply.github.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

… is incorrect when there are failed or killed tasks and update duration metrics

## What changes were proposed in this pull request?

This PR fixes 3 issues:

1) The total tasks message in the tasks table is incorrect when there are failed or killed tasks.
2) Sorting of the "Duration" column is not correct.
3) The durations in the aggregated tasks summary table and in the tasks table do not match.

Total tasks = numCompleteTasks + numActiveTasks + numKilledTasks + numFailedTasks;

Corrected the duration metrics in the tasks table as executorRunTime based on the PR apache#23081

## How was this patch tested?

Test steps:

1)

```
bin/spark-shell
scala > sc.parallelize(1 to 100, 10).map{ x => throw new RuntimeException("Bad executor")}.collect()
```

After patch:

2) Duration metrics:

Before patch:

After patch:

Closes apache#23160 from shahidki31/totalTasks.

Authored-by: Shahid <shahidki31@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>
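The total-tasks formula stated in this commit is a plain sum over the four task states. A small Python sketch, with a hypothetical function name and made-up counts used only as an example:

```python
def total_tasks(num_complete, num_active, num_killed, num_failed):
    """Total shown in the tasks table, per the formula in the commit message."""
    return num_complete + num_active + num_killed + num_failed


# Made-up example: 6 completed, 0 active, 1 killed, 3 failed tasks.
example_total = total_tasks(6, 0, 1, 3)
```

Counting only completed and active tasks in this example would report 6 instead of 10, which is the kind of mismatch the first fix addresses.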

…: Python API ## What changes were proposed in this pull request? Add validationIndicatorCol and validationTol to GBT Python. ## How was this patch tested? Add test in doctest to test the new API. Closes apache#21465 from huaxingao/spark-24333. Authored-by: Huaxin Gao <huaxing@us.ibm.com> Signed-off-by: Bryan Cutler <cutlerb@gmail.com>

…ice.name parameter

## What changes were proposed in this pull request?

spark.kafka.sasl.kerberos.service.name is an optional parameter, but most of the time the value `kafka` has to be set. As I've written in the JIRA, the reasoning behind this change is:

* Kafka's configuration guide suggests the same value: https://kafka.apache.org/documentation/#security_sasl_kerberos_brokerconfig
* It is easier for Spark users if they have to provide less configuration
* Other streaming engines do the same

In this PR I've changed the parameter from optional to `WithDefault` and set `kafka` as the default value.

## How was this patch tested?

Available unit tests + on cluster.

Closes apache#23254 from gaborgsomogyi/SPARK-26304.

Authored-by: Gabor Somogyi <gabor.g.somogyi@gmail.com>

Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com>

## What changes were proposed in this pull request? Update to Scala 2.12.8 ## How was this patch tested? Existing tests. Closes apache#23218 from srowen/SPARK-26266. Authored-by: Sean Owen <sean.owen@databricks.com> Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request? follow-up PR for SPARK-24207 to fix code style problems Closes apache#23256 from huaxingao/spark-24207-cnt. Authored-by: Huaxin Gao <huaxing@us.ibm.com> Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

A followup of apache#23043

There are 4 places where we need to deal with NaN and -0.0:

1. Comparison expressions. `-0.0` and `0.0` should be treated as the same. Different NaNs should be treated as the same.
2. Join keys. `-0.0` and `0.0` should be treated as the same. Different NaNs should be treated as the same.
3. Grouping keys. `-0.0` and `0.0` should be assigned to the same group. Different NaNs should be assigned to the same group.
4. Window partition keys. `-0.0` and `0.0` should be treated as the same. Different NaNs should be treated as the same.

Case 1 is OK. Our comparison already handles NaN and -0.0, and for struct/array/map, we recursively compare the fields/elements.

Cases 2, 3 and 4 are problematic, as they compare the `UnsafeRow` binary directly, and different NaNs have different binary representations, and the same thing happens for -0.0 and 0.0.

To fix it, a simple solution is: normalize float/double when building unsafe data (`UnsafeRow`, `UnsafeArrayData`, `UnsafeMapData`). Then we don't need to worry about it anymore.

Following this direction, this PR moves the handling of NaN and -0.0 from `Platform` to `UnsafeWriter`, so that places like `UnsafeRow.setFloat` will not handle them, which reduces the perf overhead. It's also easier to add comments explaining why we do it in `UnsafeWriter`.

## How was this patch tested?

Existing tests.

Closes apache#23239 from cloud-fan/minor.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>
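The binary-representation problem described above can be reproduced outside Spark. The Python sketch below shows that `-0.0` and `0.0` have different bit patterns, and normalizes doubles so that all zeros and all NaNs share one pattern. The helper names are assumptions for illustration; this is not the `UnsafeWriter` code.

```python
import math
import struct


def double_bits(x):
    """Return the IEEE 754 bit pattern of a Python float as an unsigned int."""
    return struct.unpack(">Q", struct.pack(">d", x))[0]


def normalize_double(x):
    """Map every NaN to one NaN value and -0.0 to 0.0; leave other values alone."""
    if math.isnan(x):
        return float("nan")
    if x == 0.0:  # true for both 0.0 and -0.0
        return 0.0
    return x


# A NaN with a non-zero payload, built directly from its bit pattern.
other_nan = struct.unpack(">d", struct.pack(">Q", 0x7FF8000000000001))[0]
```

Comparing `double_bits(-0.0)` with `double_bits(0.0)` shows the mismatch that a byte-wise comparison of keys would see; after `normalize_double`, the bit patterns agree.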

…ive field resolution when reading from Parquet

## What changes were proposed in this pull request?

apache#22148 introduces a behavior change. Following the discussion at apache#22184, this PR updates the migration guide for upgrading from Spark 2.3 to 2.4.

## How was this patch tested?

N/A

Closes apache#23238 from seancxmao/SPARK-25132-doc-2.4.

Authored-by: seancxmao <seancxmao@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

## What changes were proposed in this pull request?

1. Implement `SQLShuffleWriteMetricsReporter` on the SQL side as the customized `ShuffleWriteMetricsReporter`.
2. Add shuffle write metrics to `ShuffleExchangeExec`, and use these metrics to create the corresponding `SQLShuffleWriteMetricsReporter` in the shuffle dependency.
3. Rework `ShuffleMapTask` to add a new class named `ShuffleWriteProcessor`, which controls the shuffle write process. We use SQL shuffle write metrics by customizing a `ShuffleWriteProcessor` on the SQL side.

## How was this patch tested?

Added UT in SQLMetricsSuite.
Manually tested locally; updated the screenshot in the document attached in JIRA.

Closes apache#23207 from xuanyuanking/SPARK-26193.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

…hecking

## What changes were proposed in this pull request?

If users set equivalent values to spark.network.timeout and spark.executor.heartbeatInterval, they get the following message:

```
java.lang.IllegalArgumentException: requirement failed: The value of spark.network.timeout=120s must be no less than the value of spark.executor.heartbeatInterval=120s.
```

But it's misleading since it can be read as they could be equal. So this PR replaces "no less than" with "greater than". Also, it fixes similar inconsistencies found in MLlib and SQL components.

## How was this patch tested?

Ran Spark with equivalent values for them manually and confirmed that the revised message was displayed.

Closes apache#23488 from sekikn/SPARK-26564.

Authored-by: Kengo Seki <sekikn@apache.org>

Signed-off-by: Sean Owen <sean.owen@databricks.com>
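The point of this commit is that the check is strict, so the message should say "greater than". A Python sketch of a strict check with the revised wording follows; the function name and the use of integer seconds are assumptions for illustration, not Spark's validation code.

```python
def check_timeouts(network_timeout_s, heartbeat_interval_s):
    """Require spark.network.timeout to be strictly greater than the heartbeat interval.

    Hypothetical helper; values are in whole seconds.
    """
    if not network_timeout_s > heartbeat_interval_s:
        raise ValueError(
            "The value of spark.network.timeout=%ds must be greater than "
            "the value of spark.executor.heartbeatInterval=%ds."
            % (network_timeout_s, heartbeat_interval_s)
        )
```

Equal values such as 120 and 120 are rejected, which is why "no less than" was misleading.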

…rser.enabled ## What changes were proposed in this pull request? The SQL config `spark.sql.legacy.timeParser.enabled` was removed by apache#23495. The PR cleans up the SQL migration guide and the comment for `UnixTimestamp`. Closes apache#23529 from MaxGekk/get-rid-off-legacy-parser-followup. Authored-by: Maxim Gekk <max.gekk@gmail.com> Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

## What changes were proposed in this pull request?

Make it possible for the master to enable TCP keep alive on the RPC connections with clients.

## How was this patch tested?

Manually tested.

Added the following:

```
spark.rpc.io.enableTcpKeepAlive true
```

to spark-defaults.conf.

Observed the following on the Spark master:

```
$ netstat -town | grep 7077
tcp6 0 0 10.240.3.134:7077 10.240.1.25:42851 ESTABLISHED keepalive (6736.50/0/0)
tcp6 0 0 10.240.3.134:44911 10.240.3.134:7077 ESTABLISHED keepalive (4098.68/0/0)
tcp6 0 0 10.240.3.134:7077 10.240.3.134:44911 ESTABLISHED keepalive (4098.68/0/0)
```

This shows that the keep alive setting is taking effect.

It's currently possible to enable TCP keep alive on the worker / executor, but it is not possible to configure it on other RPC connections. It's unclear to me why this is the case. Keep alive is more important for the master, to protect it against suddenly departing workers / executors, so I think it's very important to have it. In particular, this makes the master resilient when using preemptible worker VMs in GCE. GCE has the concept of shutdown scripts, which it doesn't guarantee to execute. So workers often don't get shut down gracefully, and the TCP connections on the master linger as there's nothing to close them. Hence the need to enable keep alive.

This enables keep-alive on connections besides the master's connections, but that shouldn't cause harm.

Closes apache#20512 from peshopetrov/master.

Authored-by: Petar Petrov <petar.petrov@leanplum.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>
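At the socket level, TCP keep alive is the standard `SO_KEEPALIVE` option. The Python sketch below enables it on a plain socket to show what the `keepalive` column in the `netstat` output reflects; it is an illustration with a hypothetical function name, not how Spark's RPC layer configures its connections.

```python
import socket


def open_keepalive_socket():
    """Create a TCP socket with SO_KEEPALIVE enabled (not yet connected)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # Ask the OS to probe idle connections so dead peers are eventually detected.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_KEEPALIVE, 1)
    return sock
```

Without the option, a connection whose peer vanished without closing it can linger indefinitely, which is the situation described above for preempted workers.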

… projection

## What changes were proposed in this pull request?

When creating some unsafe projections, Spark rebuilds the map of schema attributes once for each expression in the projection. Some file format readers create one unsafe projection per input file, others create one per task. ProjectExec also creates one unsafe projection per task. As a result, for wide queries on wide tables, Spark might build the map of schema attributes hundreds of thousands of times.

This PR changes two functions to reuse the same AttributeSeq instance when creating BoundReference objects for each expression in the projection. This avoids the repeated rebuilding of the map of schema attributes.

### Benchmarks

The time saved by this PR depends on size of the schema, size of the projection, number of input files (or number of file splits), number of tasks, and file format. I chose a couple of example cases.

In the following tests, I ran the query

```sql
select * from table where id1 = 1
```

Matching rows are about 0.2% of the table.

#### Orc table 6000 columns, 500K rows, 34 input files

baseline | pr | improvement
----|----|----
1.772306 min | 1.487267 min | 16.082943%

#### Orc table 6000 columns, 500K rows, *17* input files

baseline | pr | improvement
----|----|----
1.656400 min | 1.423550 min | 14.057595%

#### Orc table 60 columns, 50M rows, 34 input files

baseline | pr | improvement
----|----|----
0.299878 min | 0.290339 min | 3.180926%

#### Parquet table 6000 columns, 500K rows, 34 input files

baseline | pr | improvement
----|----|----
1.478306 min | 1.373728 min | 7.074165%

Note: The parquet reader does not create an unsafe projection. However, the filter operation in the query causes the planner to add a ProjectExec, which does create an unsafe projection for each task. So these results have nothing to do with Parquet itself.

#### Parquet table 60 columns, 50M rows, 34 input files

baseline | pr | improvement
----|----|----
0.245006 min | 0.242200 min | 1.145099%

#### CSV table 6000 columns, 500K rows, 34 input files

baseline | pr | improvement
----|----|----
2.390117 min | 2.182778 min | 8.674844%

#### CSV table 60 columns, 50M rows, 34 input files

baseline | pr | improvement
----|----|----
1.520911 min | 1.510211 min | 0.703526%

## How was this patch tested?

- SQL unit tests
- Python core and SQL test

Closes apache#23392 from bersprockets/norebuild.

Authored-by: Bruce Robbins <bersprockets@gmail.com>
Signed-off-by: Herman van Hovell <hvanhovell@databricks.com>
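
The effect described above can be illustrated with a toy model in plain Python. This is a sketch of the idea only, not Spark's code: the `AttributeSeq` class below is a hypothetical stand-in that lazily builds a name-to-ordinal map, and `bind_rebuilding` / `bind_reusing` are made-up names for the "before" and "after" behaviour.

```python
from typing import Dict, List, Optional


class AttributeSeq:
    """Hypothetical stand-in: wraps schema attribute names and builds a lookup map lazily."""

    builds = 0  # counts how many times any instance has built its map

    def __init__(self, names: List[str]) -> None:
        self._names = names
        self._index: Optional[Dict[str, int]] = None

    def index_of(self, name: str) -> int:
        if self._index is None:
            # This is the expensive step for very wide schemas.
            AttributeSeq.builds += 1
            self._index = {n: i for i, n in enumerate(self._names)}
        return self._index[name]


def bind_rebuilding(expressions: List[str], schema: List[str]) -> List[int]:
    """Before: a fresh wrapper per expression, so the map is rebuilt every time."""
    return [AttributeSeq(schema).index_of(e) for e in expressions]


def bind_reusing(expressions: List[str], schema: List[str]) -> List[int]:
    """After: one shared wrapper, so the map is built once per projection."""
    attrs = AttributeSeq(schema)
    return [attrs.index_of(e) for e in expressions]
```

Both functions return the same ordinals; the difference is that the first builds the map once per expression and the second once per projection, which is why the saving grows with schema width and projection size.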

## What changes were proposed in this pull request?

This fixes a bug in apache#23036 that would cause a join hint to be applied, after join reordering, to a node it is not supposed to be applied to. For example,

```
val join = df.join(df, "id")
val broadcasted = join.hint("broadcast")
val join2 = join.join(broadcasted, "id").join(broadcasted, "id")
```

There should only be 2 broadcast hints on `join2`, but after join reordering there would be 4. This is because the hint application in join reordering compares the attribute set to test relation equivalency. Moreover, it could still be problematic even if the child relations were used to test relation equivalency, due to the potential exprId conflict in nested self-joins.

As a result, this PR simply reverts the join reorder hint behavior change introduced in apache#23036. This means that if a join hint is present, the join node itself will not participate in join reordering, while the sub-joins within its children still can.

## How was this patch tested?

Added new tests

Closes apache#23524 from maryannxue/query-hint-followup-2.

Authored-by: maryannxue <maryannxue@apache.org>
Signed-off-by: gatorsmile <gatorsmile@gmail.com>

## What changes were proposed in this pull request? Make sure broadcast hint is applied to partitioned tables. ## How was this patch tested? - A new unit test in PruneFileSourcePartitionsSuite - Unit test suites touched by SPARK-14581: JoinOptimizationSuite, FilterPushdownSuite, ColumnPruningSuite, and PruneFiltersSuite Closes apache#23507 from jzhuge/SPARK-26576. Closes apache#23530 from jzhuge/SPARK-26576-master. Authored-by: John Zhuge <jzhuge@apache.org> Signed-off-by: gatorsmile <gatorsmile@gmail.com>

…matter

## What changes were proposed in this pull request?

In the PR, I propose to switch to `TimestampFormatter`/`DateFormatter` when casting dates/timestamps to strings. The changes should make date/timestamp casting consistent with the JSON/CSV datasources and with time-related functions like `to_date`, `to_unix_timestamp`/`from_unixtime`.

Local formatters are moved out of `DateTimeUtils` to where they are actually used. This avoids re-creating a formatter instance on each call. Another reason is to have a separate parser for `PartitioningUtils`, because the default parsing pattern cannot be used there (it expects the optional section `[.S]`).

## How was this patch tested?

It was tested by `DateTimeUtilsSuite`, `CastSuite` and `JDBC*Suite`.

Closes apache#23391 from MaxGekk/thread-local-date-format.

Lead-authored-by: Maxim Gekk <maxim.gekk@databricks.com>
Co-authored-by: Maxim Gekk <max.gekk@gmail.com>
Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request? This PR allows the user to override `kafka.group.id` for better monitoring or security. The user needs to make sure there are not multiple queries or sources using the same group id. It also fixes a bug that the `groupIdPrefix` option cannot be retrieved. ## How was this patch tested? The new added unit tests. Closes apache#23301 from zsxwing/SPARK-26350. Authored-by: Shixiong Zhu <zsxwing@gmail.com> Signed-off-by: Shixiong Zhu <zsxwing@gmail.com>

… for branch-2.4+ only ## What changes were proposed in this pull request? To skip some steps to remove binary license/notice files in a source release for branch2.3 (these files only exist in master/branch-2.4 now), this pr checked a Spark release version in `dev/create-release/release-build.sh`. ## How was this patch tested? Manually checked. Closes apache#23538 from maropu/FixReleaseScript. Authored-by: Takeshi Yamamuro <yamamuro@apache.org> Signed-off-by: Sean Owen <sean.owen@databricks.com>

## What changes were proposed in this pull request? This PR reverts apache#22938 per discussion in apache#23325 Closes apache#23325 Closes apache#23543 from MaxGekk/return-nulls-from-json-parser. Authored-by: Maxim Gekk <max.gekk@gmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com>

## What changes were proposed in this pull request? Fix typos in comments by replacing "in-heap" with "on-heap". ## How was this patch tested? Existing Tests. Closes apache#23533 from SongYadong/typos_inheap_to_onheap. Authored-by: SongYadong <song.yadong1@zte.com.cn> Signed-off-by: Hyukjin Kwon <gurwls223@apache.org>

…sions

## What changes were proposed in this pull request?

This PR contains benchmarks for `In` and `InSet` expressions. They cover literals of different data types and will help us to decide where to integrate the switch-based logic for bytes/shorts/ints.

As discussed in [PR-23171](apache#23171), one potential approach is to convert `In` to `InSet` if all elements are literals, independently of data types and the number of elements. According to the results of this PR, we might want to keep the threshold for the number of elements. The if-else approach might be faster for some data types on a small number of elements (structs? arrays? small decimals?).

### byte / short / int / long

Unless the number of items is really big, `InSet` is slower than `In` because of autoboxing.

Interestingly, `In` scales worse on bytes/shorts than on ints/longs. For example, `InSet` starts to match the performance on around 50 bytes/shorts while this does not happen on the same number of ints/longs. This is a bit strange as shorts/bytes (e.g., `(byte) 1`, `(short) 2`) are represented as ints in the bytecode.

### float / double

Use cases on floats/doubles also suffer from autoboxing. Therefore, `In` outperforms `InSet` on 10 elements.

Similarly to shorts/bytes, `In` scales worse on floats/doubles than on ints/longs because the equality condition is more complicated (e.g., `(java.lang.Float.isNaN(filter_valueArg_0) && java.lang.Float.isNaN(9.0F)) || filter_valueArg_0 == 9.0F`).

### decimal

The reason why we have separate benchmarks for small and large decimals is that Spark might use longs to represent decimals in some cases.

If this optimization happens, then `equals` will be nothing more than comparing longs. If this does not happen, Spark will create an instance of `scala.BigDecimal` and use it for comparisons. The latter is more expensive.

`Decimal$hashCode` will always use `scala.BigDecimal$hashCode` even if the number is small enough to fit into a long variable. As a consequence, we see that use cases on small decimals are faster with `In` as they are using long comparisons under the hood. Large decimal values are always faster with `InSet`.

### string

`UTF8String$equals` is not cheap. Therefore, `In` does not really outperform `InSet` as in previous use cases.

### timestamp / date

Under the hood, timestamp/date values will be represented as long/int values. So, `In` allows us to avoid autoboxing.

### array

Arrays are working as expected. `In` is faster on 5 elements while `InSet` is faster on 15 elements. The benchmarks are using `UnsafeArrayData`.

### struct

`InSet` is always faster than `In` for structs. These benchmarks use `GenericInternalRow`.

Closes apache#23291 from aokolnychyi/spark-26203.

Lead-authored-by: Anton Okolnychyi <aokolnychyi@apple.com>
Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>
Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>
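
As a rough illustration of the two evaluation strategies being benchmarked, the sketch below models them in plain Python. It is not Spark's generated code, and the function names are hypothetical. Python has no autoboxing, so the timing trade-offs reported above do not carry over; only the shape of the logic does.

```python
import math
from typing import Callable, Hashable, Iterable, Sequence


def in_chain(value: object, literals: Sequence[object]) -> bool:
    """Model of `In`: compare against each literal in turn, like an if-else chain."""
    for literal in literals:
        if value == literal:
            return True
    return False


def make_in_set(literals: Iterable[Hashable]) -> Callable[[Hashable], bool]:
    """Model of `InSet`: build a hash set once, then answer each probe with a hash lookup."""
    lookup = frozenset(literals)
    return lambda value: value in lookup


def float_equals(a: float, b: float) -> bool:
    """NaN-aware equality with the same shape as the float condition quoted above."""
    return (math.isnan(a) and math.isnan(b)) or a == b
```

The chain costs one equality check per literal in the worst case, while the set pays for hashing on every probe regardless of size. That is why the cost of `equals` versus `hashCode` for each data type decides which approach is faster at a given number of elements.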

* do not re-implement exchange reuse
* simplify QueryStage
* add comments
* new idea
* polish
* address comments
* improve QueryStageTrigger

* insert query stages dynamically
* add comment
* address comments

No description provided.