VmApps

This page documents efforts and methods to run apps within virtual machines, with a view to removing the dependence on the host operating system.

See the LHC@Home Twiki for previous efforts within the context of LHC@Home.

-

Information on VirtualBox from David Garcia Quintas.

-

Ben Segal's early implementation suggestion is attached to this page (the code embedded in that file is also available directly here).

-

David Weir's proposal was:

I think, though, that the exact way in which we do this is strongly dependent on the "target audience" -- is it still the porting of applications? I'm aware that what follows is essentially a rewrite of the implementation you suggested to me a month or two back, but as I have become familiar with the capabilities of the VIX API I've been able to flesh out some of the details.

Assumptions: no "inner BOINC client", inner application does not link against BOINC libraries, inner application is small with very few dependencies not included in a basic virtual machine. No assumptions are made about whether the inner application would need access to the network, but the wrapper XML standard could be extended to include a field indicating whether this is necessary.

1. We deploy a virtual machine image over BOINC, with VMware Tools installed. This need only be done once for our hypothetical project; different programs are then sent as part of each workunit.

2. The VMware-aware wrapper powers up the virtual machine and uses VixVM_GetNamedSnapshot() to check for a snapshot relevant to the current workunit. If one exists, it restores that snapshot with VixVM_RevertToSnapshot() (this is our checkpoint-recovery step) and skips to step 4.

3. For a given workunit, the wrapper XML standard is then used to send a package containing a non-BOINCified program and its dependencies (say, an RPM for CernVM). The VMware-aware wrapper installs this package with a call to VixVM_RunProgramInGuest(), and uses the same call to start the program.

4. (Main loop.) The wrapper polls the process handle returned by VixVM_RunProgramInGuest() to see whether the workunit has finished. It also calls boinc_time_to_checkpoint() and, if a checkpoint is due, runs VixVM_CreateSnapshot() to create a snapshot as the checkpoint.

5. Upon successful completion of the inner job, we must copy out the results, uninstall the workunit package in the VM and remove the checkpoint snapshot. The results are then sent back to the BOINC server. We can easily get the CPU time inside the virtual machine by running the executable through time(1), for example, but it may turn out to be more reasonable to use the CPU-time estimate made by the core client itself.

Note: To issue partial credit for long processes, we could require the inner program to update a file inside the VM (say /tmp/creditreport) giving the number of floating-point and integer operations done so far. The wrapper could poll this file in step 4 (the main loop) and return the results to the core client. Reporting the fraction done would need the same mechanism. We could even re-implement boinc_fraction_done() and boinc_ops_cumulative(), writing a "fake" BOINC library to be used when compiling projects to run inside virtual machines.
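The five steps above can be sketched as a Python driver loop. This is a minimal sketch under stated assumptions, not David's implementation:

- `GuestVM` is a hypothetical Python interface. Each method is annotated with the VIX C call it stands in for; VIX provides no such Python binding.
- The snapshot name `checkpoint-<workunit>` is invented for illustration.
- `time_to_checkpoint` is a placeholder for boinc_time_to_checkpoint().
- `FakeVM` is an in-memory stand-in, so the control flow can be exercised without a hypervisor.

```python
import time
from typing import Callable, List, Protocol, Set


class GuestVM(Protocol):
    """Hypothetical interface; comments name the VIX call each method stands in for."""

    def has_snapshot(self, name: str) -> bool: ...        # VixVM_GetNamedSnapshot
    def revert_to_snapshot(self, name: str) -> None: ...  # VixVM_RevertToSnapshot
    def run_in_guest(self, cmd: str) -> None: ...         # VixVM_RunProgramInGuest
    def job_running(self) -> bool: ...                    # poll the guest process handle
    def create_snapshot(self, name: str) -> None: ...     # VixVM_CreateSnapshot
    def remove_snapshot(self, name: str) -> None: ...
    def copy_from_guest(self, path: str) -> None: ...


def run_workunit(vm: GuestVM, wu_name: str, install_cmd: str, run_cmd: str,
                 uninstall_cmd: str, results: List[str],
                 time_to_checkpoint: Callable[[], bool],
                 poll_seconds: float = 1.0) -> int:
    """Drive one workunit through steps 2-5; return the number of snapshots taken."""
    snapshot = "checkpoint-" + wu_name  # hypothetical naming scheme
    if vm.has_snapshot(snapshot):
        # Step 2: a checkpoint exists, so restore it and go straight to the main loop.
        vm.revert_to_snapshot(snapshot)
    else:
        # Step 3: install the non-BOINCified package, then start the program.
        vm.run_in_guest(install_cmd)
        vm.run_in_guest(run_cmd)

    taken = 0
    # Step 4: poll until the inner job exits, snapshotting whenever BOINC allows.
    while vm.job_running():
        if time_to_checkpoint():
            vm.create_snapshot(snapshot)
            taken += 1
        time.sleep(poll_seconds)

    # Step 5: copy results out, uninstall the package, drop the checkpoint.
    for path in results:
        vm.copy_from_guest(path)
    vm.run_in_guest(uninstall_cmd)
    if vm.has_snapshot(snapshot):
        vm.remove_snapshot(snapshot)
    return taken


class FakeVM:
    """In-memory stand-in whose inner job finishes after a fixed number of polls."""

    def __init__(self, polls_until_done: int) -> None:
        self.polls_left = polls_until_done
        self.snapshots: Set[str] = set()
        self.log: List[str] = []

    def has_snapshot(self, name: str) -> bool:
        return name in self.snapshots

    def revert_to_snapshot(self, name: str) -> None:
        self.log.append("revert " + name)

    def run_in_guest(self, cmd: str) -> None:
        self.log.append("run " + cmd)

    def job_running(self) -> bool:
        self.polls_left -= 1
        return self.polls_left >= 0

    def create_snapshot(self, name: str) -> None:
        self.snapshots.add(name)  # a new snapshot replaces any older one of that name

    def remove_snapshot(self, name: str) -> None:
        self.snapshots.discard(name)

    def copy_from_guest(self, path: str) -> None:
        self.log.append("copy " + path)


vm = FakeVM(polls_until_done=3)
taken = run_workunit(vm, "wu1", "rpm -i app.rpm", "/opt/app/run", "rpm -e app",
                     ["/tmp/out"], time_to_checkpoint=lambda: True, poll_seconds=0)
print(taken, vm.log)
```

Passing the checkpoint test in as a callable keeps the loop independent of any particular BOINC binding. The same structure would let a /tmp/creditreport poll be added inside step 4.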

-

Daniel's proposal?

-

Kevin's Project - Overview: Use VMware Server 2.0 and the VIX API to control the operations of a virtual machine, including inserting files and applications into an instance of a virtual machine running Linux (boinc_vmware.pdf). Some implementation details are available here: http://home.comcast.net/~kevin_reed/

-

A. Marosi, P. Kacsuk, G. Fedak, O. Lodygensky, "Using Virtual Machines in Desktop Grid Clients for Application Sandboxing," CoreGRID Technical Report TR-0140, August 31, 2008. http://www.coregrid.net/mambo/images/stories/TechnicalReports/tr-0140.pdf

-

If we restrict ourselves to VMware Server 1, we do not get access to all the API calls suggested in "David's proposal" above. The snapshot calls are not available until VMware Server 2, which unfortunately does not allow anonymous logins to the local machine.

-

Much as we would like to use the latest possible release of VMware Server, if it requires the user's login details we would not be able to BOINCify the VMware calls, because the user would have to provide the correct username and password for the VMware server. This would require (at least) interactive input to the wrapper (the user provides a login for the VMware server) and (most probably) changes to the BOINC infrastructure. The latest VIX reference page for VixHost_Connect() mentions logging in anonymously as the current user on the current host, so this may simply be an oversight in the current release of VMware Server.

- Do we grant credit based on virtualised CPU time, or wrapper CPU time, or something else?

New material by Jarno Rantala, on the prototype VMwrapper program, developed at CERN July-August 2009

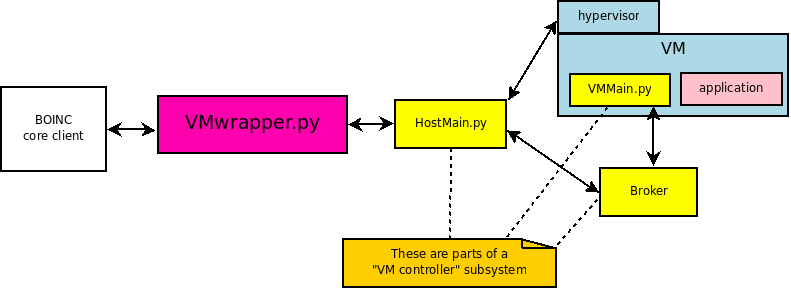

VMwrapper works like the original wrapper, but besides running applications locally on a volunteer's machine it can also run applications on virtual machines (VMs) hosted by that machine. It uses VM controllers ([ source code]) to communicate with these "guest" VMs. It can copy input and application files to a VM and run a command there, and afterwards copy the VM's output files back to the volunteer's host machine. VMwrapper (and the related VM controller code) is written in Python using the Python bindings of the BOINC API [].

Architecture diagram

The source code of the VMwrapper program is in []. It reads a file with the logical name 'job.xml'. This file is similar to the one the original wrapper reads, but has a few more tags.

The job.xml file has the format:

```xml
<job_desc>
    <unzip_task>
        <application></application>
        ...
        <command_line></command_line>
    </unzip_task>
    <VMmanage_task>
        <application></application>
        ...
        <command_line></command_line>
    </VMmanage_task>
    <task>
        <virtualmachine></virtualmachine>
        <image></image>
        <application></application>
        <copy_app_to_VM></copy_app_to_VM>
        <copy_file_to_VM></copy_file_to_VM>
        <copy_file_to_VM></copy_file_to_VM>
        <stdin_filename></stdin_filename>
        <stdout_filename></stdout_filename>
        <stderr_filename></stderr_filename>
        <copy_file_from_VM></copy_file_from_VM>
        <command_line></command_line>
        <weight></weight>
    </task>
</job_desc>
```

The job file describes a sequence of tasks.

The descriptor for each task includes:

- The name of the virtual machine.

- The logical name of the image.

- Whether the application should be copied to the VM (zero or nonzero). The application (or script) may already be present in the VM.

- The logical name of a file that should be copied to the VM (input files, stdin_filename).

- The names of files to be copied from the VM after the computation (output files, stdout_filename).

- The logical name of the application.

- The logical names of the files to which stdin, stdout, and stderr are to be connected (if any).

- The command-line arguments to be passed to the application. A command line can also be given to VMwrapper.py. It is combined with the command line in job.xml (job.xml command line + " " + wrapper command line) and passed to the task applications. If a file name is given in command_line (recognized by a leading "./"), the boinc_resolve_filename method is used to resolve the physical name of the file.

- The contribution of each task to the overall fraction done is proportional to its weight (floating-point, default 1).

- The name of the checkpoint file used by the application, if any. When this file is modified, the wrapper assumes that a checkpoint has been completed and notifies the core client.
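Two of the rules in this list, command-line combination and weighted fraction done, can be sketched in a few lines of Python. This is an illustration under assumptions, not VMwrapper's code:

- `resolve` stands in for boinc_resolve_filename.
- Stripping the "./" prefix before resolving is an assumption of this sketch.
- The command line is split on plain whitespace, so shell quoting is not handled.
- The helper names are hypothetical.

```python
from typing import Callable, List, Sequence


def build_command_line(job_cmd: str, wrapper_args: Sequence[str],
                       resolve: Callable[[str], str]) -> List[str]:
    """Combine <command_line> from job.xml with the wrapper's own arguments.

    The parts are joined as job_cmd + " " + wrapper args. Tokens starting
    with "./" are treated as logical file names and mapped through
    `resolve` (a stand-in for boinc_resolve_filename).
    """
    combined = job_cmd + " " + " ".join(wrapper_args)
    args = []
    for token in combined.split():  # naive whitespace split; no shell quoting
        if token.startswith("./"):
            token = resolve(token[2:])  # assumption: "./" is stripped first
        args.append(token)
    return args


def overall_fraction_done(weights: Sequence[float], current: int,
                          current_fraction: float) -> float:
    """Weighted fraction done across all tasks.

    Tasks before index `current` are finished, task `current` is
    `current_fraction` complete, and later tasks have not started.
    """
    if current >= len(weights):
        return 1.0
    done = sum(weights[:current]) + weights[current] * current_fraction
    return done / sum(weights)


physical = {"boincvm.tar": "boincvm_0.01.tar"}  # hypothetical logical -> physical map
print(build_command_line("-xf ./boincvm.tar", [], lambda n: physical.get(n, n)))
print(overall_fraction_done([1.0, 2.0, 1.0], current=1, current_fraction=0.5))
```

With weights 1, 2 and 1, finishing half of the second task counts as half of the whole job. This is because that task carries half of the total weight.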

There are two special kinds of task: VMmanage_task and unzip_task.

- unzip_task tasks are performed before any other tasks. They unpack a packed file into the slot directory.
- VMmanage_task tasks are used to control VMs, and are started only if at least one task uses a VM. They have to run in parallel with the tasks that use a VM, because they handle the communication with the VM.

To communicate with VMs we need to run a Python script, HostMain.py, and a broker (currently ActiveMQ).
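The task ordering described above can be sketched with Python's standard xml.etree.ElementTree. This is an illustrative reading of job.xml, not VMwrapper's actual parser. It handles only a subset of the tags, and the `Task` class and `plan()` helper are hypothetical names.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

TASK_KINDS = ("unzip_task", "VMmanage_task", "task")


@dataclass
class Task:
    kind: str                      # one of TASK_KINDS
    application: str
    command_line: str = ""
    virtualmachine: Optional[str] = None
    weight: float = 1.0            # default weight is 1
    files_to_vm: List[str] = field(default_factory=list)
    files_from_vm: List[str] = field(default_factory=list)


def _text(elem: ET.Element, tag: str) -> str:
    return (elem.findtext(tag) or "").strip()


def _texts(elem: ET.Element, tag: str) -> List[str]:
    return [e.text.strip() for e in elem.findall(tag) if e.text and e.text.strip()]


def parse_job(xml_text: str) -> List[Task]:
    """Read the task descriptors from a job.xml document, in file order."""
    tasks = []
    for elem in ET.fromstring(xml_text):
        if elem.tag not in TASK_KINDS:
            continue
        tasks.append(Task(
            kind=elem.tag,
            application=_text(elem, "application"),
            command_line=_text(elem, "command_line"),
            virtualmachine=_text(elem, "virtualmachine") or None,
            weight=float(_text(elem, "weight") or 1.0),
            files_to_vm=_texts(elem, "copy_file_to_VM"),
            files_from_vm=_texts(elem, "copy_file_from_VM"),
        ))
    return tasks


def plan(tasks: List[Task]) -> Tuple[List[Task], List[Task], List[Task]]:
    """Split tasks into (unzip, VM-manage, ordinary) phases.

    unzip tasks run first; VM-manage tasks are kept only when some ordinary
    task names a virtual machine, and would then run alongside it.
    """
    unzip = [t for t in tasks if t.kind == "unzip_task"]
    work = [t for t in tasks if t.kind == "task"]
    needs_vm = any(t.virtualmachine for t in work)
    manage = [t for t in tasks if t.kind == "VMmanage_task"] if needs_vm else []
    return unzip, manage, work


JOB = """<job_desc>
  <unzip_task><application>tar</application>
    <command_line>-xf ./boincvm.tar</command_line></unzip_task>
  <VMmanage_task><application>python</application>
    <command_line>./boincvm/HostMain.py ./boincvm/HostConfig.cfg</command_line></VMmanage_task>
  <task><virtualmachine>CernVM</virtualmachine><application>./worker.py</application>
    <copy_file_to_VM>in</copy_file_to_VM><copy_file_from_VM>out</copy_file_from_VM>
    <command_line>5</command_line><weight>2</weight></task>
</job_desc>"""

unzip, manage, work = plan(parse_job(JOB))
print([t.application for t in unzip], [t.application for t in manage], work[0].virtualmachine)
```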

Notes:

- Files opened directly by an application must have the `<copy_file/>` tag; alternatively, an unzip_task can unpack the needed files into the slot directory.

- Worker programs must exit with zero status; VMwrapper interprets nonzero values as errors.

- Commands in a VM are run in the same directory from which VMMain.py is run. CopyFilesToVM and CopyFilesFromVM use the home directory of the user who started VMMain.py as their base directory.

- boinc_init_options was modified in our version of the Python bindings: start_worker_signals was removed, because otherwise time.sleep() did not work properly. Does this do any harm?

- The "boinc" user account has to be in the vboxusers group (at least on Linux hosts).

- Python 2.6 is required (for the kill() and send_signal() methods of subprocess.Popen).

- VM controllers also require Netifaces (0.5), Stomper (0.2.2) and Twisted (8.2.0), which indirectly requires Zope Interfaces (3.5.1).

- VMMain.py must be started automatically in the VM, and the user account that starts VMMain.py must have permission to run any applications or commands to be run in the VM.
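The Popen methods mentioned in the notes can be illustrated with a short sketch of suspending, resuming and killing a host-side worker. It is written for Python 3; the prototype targeted Python 2.6, where send_signal() and kill() first appeared. The helper names are hypothetical. SIGSTOP and SIGCONT exist only on POSIX hosts.

```python
import signal
import subprocess


def suspend(proc: subprocess.Popen) -> None:
    """Pause a host-side worker (POSIX only; Windows has no SIGSTOP)."""
    proc.send_signal(signal.SIGSTOP)


def resume(proc: subprocess.Popen) -> None:
    """Continue a worker paused by suspend()."""
    proc.send_signal(signal.SIGCONT)


def abort(proc: subprocess.Popen) -> int:
    """Kill the worker (SIGKILL on POSIX) and return its exit status."""
    proc.kill()
    return proc.wait()


worker = subprocess.Popen(["sleep", "30"])  # `sleep` stands in for a worker
suspend(worker)   # e.g. when the core client asks the app to suspend
resume(worker)    # e.g. when the core client asks it to resume
status = abort(worker)
print(status)     # negative signal number, which VMwrapper would treat as an error
```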

Here's an example that shows how to run a worker.py program in a VM and get the results back from the VM. We assume that you have already created a project with root directory PROJECT/. We also assume that a VM called "CernVM" already exists on the volunteer's machine, and that VMMain.py starts automatically when the VM is started. The VM must be runnable under the "boinc" user account.

- We are going to run worker.py in a VM where Python is already installed; worker.py reads from stdin and writes to stdout; it also opens and reads a file 'in', and opens and writes a file 'out'. It takes one command-line argument: the number of CPU seconds to use.

- First, create an application named 'worker' and a corresponding directory 'PROJECT/apps/worker'. In this directory, create a directory 'VMwrapper_1.13_i686-pc-linux-gnu.py'. Put the following files there:
  - 'VMwrapper_1.13_i686-pc-linux-gnu.py'
  - 'worker_1.13_i686-pc-linux-gnu.py'
  - 'boinc.so' (Python bindings of the BOINC API)
  - 'boincvm.tar' (VM controller)
  - 'apache-activemq-5.2.0.tar' (broker)
  - 'cctools-2_5_2-i686-linux-2.6.tar' (chirp files used by the VM controller)

  Rename the files to 'worker.py=worker_1.13_i686-pc-linux-gnu.py' and 'boincvm.tar=boincvm_0.01.tar'. This gives them the logical names 'worker.py' and 'boincvm.tar'.

- In the same directory, create a file 'job.xml=job_0.01.xml' (0.01 is a version number) containing:

```xml
<job_desc>
    <unzip_task>
        <application>tar</application>
        <command_line>-xf ./cctools-2_5_2-i686-linux-2.6.tar</command_line>
        <stdout_filename>stdout_tar</stdout_filename>
        <stderr_filename>stderr_tar</stderr_filename>
    </unzip_task>
    <unzip_task>
        <application>tar</application>
        <command_line>-xf ./apache-activemq-5.2.0.tar</command_line>
        <stdout_filename>stdout_tar</stdout_filename>
        <stderr_filename>stderr_tar</stderr_filename>
    </unzip_task>
    <unzip_task>
        <application>tar</application>
        <command_line>-xf ./boincvm.tar</command_line>
    </unzip_task>
    <VMmanage_task>
        <application>./apache-activemq-5.2.0/bin/activemq</application>
        <stdin_filename></stdin_filename>
        <stdout_filename>stdout_broker</stdout_filename>
        <stderr_filename>stderr_broker</stderr_filename>
        <command_line></command_line>
    </VMmanage_task>
    <VMmanage_task>
        <application>python</application>
        <stdin_filename></stdin_filename>
        <stdout_filename>stdout_HostMain</stdout_filename>
        <stderr_filename>stderr_HostMain</stderr_filename>
        <command_line>./boincvm/HostMain.py ./boincvm/HostConfig.cfg</command_line>
    </VMmanage_task>
    <task>
        <virtualmachine>CernVM</virtualmachine>
        <image></image>
        <app_pathVM></app_pathVM>
        <application>./worker.py</application>
        <copy_app_to_VM>1</copy_app_to_VM>
        <copy_file_to_VM>in</copy_file_to_VM>
        <copy_file_to_VM>stdin_worker</copy_file_to_VM>
        <stdin_filename>stdin_worker</stdin_filename>
        <stdout_filename>stdout_worker</stdout_filename>
        <stderr_filename>stderr_worker</stderr_filename>
        <copy_file_from_VM>out</copy_file_from_VM>
        <command_line>5</command_line>
        <weight>2</weight>
    </task>
</job_desc>
```

The above file (logical name 'job.xml', physical name 'job_0.01.xml') is read by 'VMwrapper.py'. It gives VMwrapper.py the name of the VM, the name of the worker program, the files to connect to its stdin/stdout, and a command line. It also specifies which packed files should be unpacked into the slot directory, and which applications must run so that the VMs can be controlled.

- In the 'PROJECT/templates' directory, we now create a workunit template file called 'worker_wu':

```xml
<file_info>
    <number>0</number>
</file_info>
<file_info>
    <number>1</number>
</file_info>
<workunit>
    <file_ref>
        <file_number>0</file_number>
        <open_name>in</open_name>
        <copy_file/>
    </file_ref>
    <file_ref>
        <file_number>1</file_number>
        <open_name>stdin</open_name>
    </file_ref>
</workunit>
```

and a result template file called 'worker_result':

```xml
<file_info>
    <name><OUTFILE_0/></name>
    <generated_locally/>
    <upload_when_present/>
    <max_nbytes>5000000</max_nbytes>
    <url><UPLOAD_URL/></url>
</file_info>
<file_info>
    <name><OUTFILE_1/></name>
    <generated_locally/>
    <upload_when_present/>
    <max_nbytes>5000000</max_nbytes>
    <url><UPLOAD_URL/></url>
</file_info>
<file_info>
    <name><OUTFILE_2/></name>
    <generated_locally/>
    <upload_when_present/>
    <max_nbytes>5000000</max_nbytes>
    <url><UPLOAD_URL/></url>
</file_info>
<result>
    <file_ref>
        <file_name><OUTFILE_0/></file_name>
        <open_name>out</open_name>
        <copy_file/>
    </file_ref>
    <file_ref>
        <file_name><OUTFILE_1/></file_name>
        <open_name>stdout</open_name>
    </file_ref>
    <file_ref>
        <file_name><OUTFILE_2/></file_name>
        <open_name>stderr</open_name>
        <optional>1</optional>
    </file_ref>
</result>
```

- Run 'bin/update_versions' to create an app version and to copy the application files to the 'PROJECT/download' directory.

- Run 'bin/start' to start the daemons.

- To generate a workunit, run a script like:

```sh
cp download/in `bin/dir_hier_path in`
cp download/stdin `bin/dir_hier_path stdin`
bin/create_work -appname worker -wu_name worker_nodelete \
    -wu_template templates/worker_wu \
    -result_template templates/worker_result \
    in stdin
```

Note that the input files in the 'create_work' command must be in the same order as in the workunit template file (worker_wu).

To understand how all this works: at the beginning of execution, the file layout is:

| Project directory | Slot directory |
|---|---|
| in | in (copy of project/in) |
| job_0.01.xml | job.xml (link to project/job_0.01.xml) |
| stdin | stdin (link to project/stdin) |
| worker_nodelete_0 | stdout (link to project/worker_nodelete_0) |
| worker_1.13_i686-pc-linux-gnu.py | worker.py (link to project/worker_1.13_i686-pc-linux-gnu.py) |
| VMwrapper_1.13_i686-pc-linux-gnu.py | VMwrapper_1.13_i686-pc-linux-gnu.py (link to project/VMwrapper_1.13_i686-pc-linux-gnu.py) |
| cctools-2_5_2-i686-linux-2.6.tar | cctools-2_5_2-i686-linux-2.6 (contents of the tar file, after unzip_task) |
| apache-activemq-5.2.0.tar | apache-activemq-5.2.0 (after unzip_task) |
| boincvm.tar | boincvm (after unzip_task) |

VMwrapper.py starts the VM called "CernVM", copies the input files and the application file to the VM, and then starts worker.py in the VM. After the computation, it copies the "out" file back from the VM and writes stdout to project/worker_nodelete_1 and stderr to project/worker_nodelete_2. VMwrapper then kills the VMmanage tasks and exits. The BOINC core client copies slot/out to projects/worker_nodelete_2.
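The lifecycle described above (start the VMmanage tasks in the background, run the work, then kill the VMmanage tasks) can be sketched with Python's subprocess module. This is a minimal sketch, not VMwrapper's code. The helper names and the `ManageSpec` tuple are hypothetical, and `sleep` and `true` stand in for the broker and the worker. A real ActiveMQ launcher script may leave a Java child process running after terminate(), so killing the whole process group could be more robust.

```python
import os
import subprocess
import tempfile
from typing import List, Sequence, Tuple

# (argv, stdout file, stderr file) for one background VM-manage task
ManageSpec = Tuple[Sequence[str], str, str]


def start_manage_tasks(specs: Sequence[ManageSpec]) -> List[subprocess.Popen]:
    """Start each VM-manage task in the background with redirected output."""
    procs = []
    for argv, out_path, err_path in specs:
        with open(out_path, "w") as out, open(err_path, "w") as err:
            # The child keeps its own copies of these descriptors, so closing
            # ours at the end of the with-block does not affect it.
            procs.append(subprocess.Popen(list(argv), stdout=out, stderr=err))
    return procs


def stop_manage_tasks(procs: Sequence[subprocess.Popen]) -> List[int]:
    """Send SIGTERM to any task still running and collect every exit status."""
    for proc in procs:
        if proc.poll() is None:
            proc.terminate()
    return [proc.wait() for proc in procs]


def run_job(specs: Sequence[ManageSpec], worker_argv: Sequence[str]) -> int:
    """Run the worker while the manage tasks are up, then shut them down."""
    procs = start_manage_tasks(specs)
    try:
        return subprocess.run(list(worker_argv)).returncode
    finally:
        stop_manage_tasks(procs)


# Demo with stand-ins: `sleep` plays the broker, `true` plays the worker.
workdir = tempfile.mkdtemp()
spec = (["sleep", "30"], os.path.join(workdir, "stdout_broker"),
        os.path.join(workdir, "stderr_broker"))
exit_code = run_job([spec], ["true"])
print(exit_code)
```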

- Test hypervisors other than VirtualBox (VMware, kQEMU, etc.).

- Test host OSes other than Linux (Ubuntu 9.04).

- Decide how to compute credit for long-running tasks (boinc_ops_cumulative()? How should CPU time be converted to operations?). This requires trickle messages from the wrapper to a BOINC server and vice versa, so trickle messaging should be implemented in VMwrapper, with a trickle_handler daemon running on the server. Trickle messages could be sent whenever we take a snapshot of a VM.

- Should we also include the CPU time used by the VM controller?

- Implement CPU-time measurement for Windows guests. In Linux guests we read the /proc/uptime file to calculate the CPU time used.

- Test whether the CPU time of applications running on the host machine is measured properly.

- Test snapshotting of VMs. If we always use saveState on a VM, do we need snapshotting at all?

- Test stopping and resuming host applications on Windows. At present, stopping and resuming is implemented by sending SIGSTOP and SIGCONT signals to subprocesses, and these signals are not supported on Windows. This is needed only when we run applications on the host machine.

- At present, we run only one task per VM at a time. If two tasks run at the same time, one task may save the state of the VM before the other task has finished. Should we run two parallel tasks on the same VM with one VMwrapper process, or use two different VMs?

- Try to send the Python runtime with the BOINC task; cx_Freeze might be a good tool for this. We would then not need to assume that a volunteer has installed Python 2.6 (needed for the subprocess send_signal() and kill() methods). Nor would we need the packages required by the VM controllers: Netifaces (0.5), Stomper (0.2.2) and Twisted (8.2.0), which indirectly requires Zope Interfaces (3.5.1).

- Test that we can create a VM and start it; so far the VM has always been pre-created on the host. This requires VMMain.py to start automatically when the VM starts.

- Change the exit codes of VMwrapper.py to be consistent with BOINC's error_numbers.h.
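For the CPU-time item above, the Linux-guest approach of reading /proc/uptime can be sketched as follows. This is an assumption-laden illustration, not the prototype's code:

- /proc/uptime holds seconds since boot and idle seconds. The idle figure is assumed here to be summed over all CPUs, as on SMP kernels; check proc(5) for the guest kernel.
- The result counts all busy time in the guest, not just the worker.
- The readings in the demo are made-up numbers.
- The function names are hypothetical.

```python
from typing import Tuple


def parse_uptime(text: str) -> Tuple[float, float]:
    """Return (seconds since boot, idle seconds) from /proc/uptime contents."""
    uptime, idle = text.split()[:2]
    return float(uptime), float(idle)


def busy_seconds(text: str, ncpus: int = 1) -> float:
    """Non-idle CPU seconds since boot, assuming idle time is summed over CPUs."""
    uptime, idle = parse_uptime(text)
    return uptime * ncpus - idle


def cpu_seconds_used(before: str, after: str, ncpus: int = 1) -> float:
    """CPU time consumed in the guest between two /proc/uptime readings."""
    return busy_seconds(after, ncpus) - busy_seconds(before, ncpus)


# Readings would come from `cat /proc/uptime` run in the guest; these are made up.
print(cpu_seconds_used("100.00 60.00", "150.00 80.00"))
```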