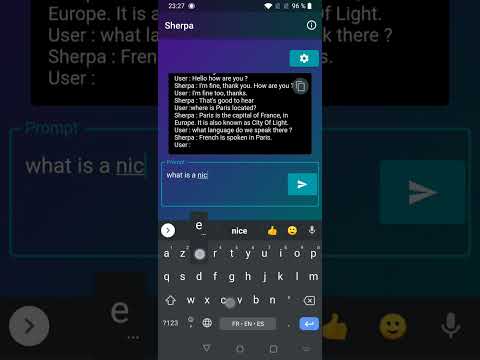

This app is a demo built on llama.cpp that aims to provide an offline chatbot that works similarly to OpenAI's ChatGPT. The source code for this app is available on GitHub.

Builds for Windows, macOS, and Android are available on the Releases page.

The app was developed using Flutter and is built upon ggerganov/llama.cpp, recompiled to run on mobile devices.

The app will prompt you for a model file, which you need to provide. This must be a GGML model that is compatible with llama.cpp as of June 30th 2023. Models from previous versions of Sherpa (which used an older version of llama.cpp) may no longer be compatible until they are converted. The llama.cpp repository provides tools to convert GGML models to the latest format, as well as to produce GGML models from the original model weights.
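Before loading a file into the app, it can help to check which container format it uses. The following is a minimal sketch, not part of Sherpa: it assumes the pre-GGUF llama.cpp header layout (a little-endian 32-bit magic, followed by a 32-bit version for the versioned formats). The function names and the magic table are illustrative assumptions and should be checked against the llama.cpp version you are targeting.

```python
import struct

# Magic values believed to be used by pre-GGUF llama.cpp loaders
# (stored as little-endian uint32). This table is an assumption to verify.
UNVERSIONED_GGML = 0x67676D6C
MAGICS = {
    UNVERSIONED_GGML: "ggml (unversioned)",
    0x67676D66: "ggmf",
    0x67676A74: "ggjt",
}


def describe_model_header(header: bytes) -> str:
    """Describe a model file from its first 8 bytes (hypothetical helper)."""
    if len(header) < 4:
        return "too short to be a model file"
    (magic,) = struct.unpack("<I", header[:4])
    name = MAGICS.get(magic)
    if name is None:
        return "unknown format"
    if magic == UNVERSIONED_GGML:
        # The oldest format has no version field after the magic.
        return name
    if len(header) < 8:
        return f"{name} (version missing)"
    (version,) = struct.unpack("<I", header[4:8])
    return f"{name} v{version}"


def describe_model_file(path: str) -> str:
    """Read the header of the file at `path` and describe it."""
    with open(path, "rb") as f:
        return describe_model_header(f.read(8))
```

Under these assumptions, a file whose name contains `ggmlv3` would be expected to report `ggjt v3`. Anything reported as an unknown format or an older version probably needs conversion with the llama.cpp tools first.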

You can experiment with Orca Mini 3B. These models (e.g. orca-mini-3b.ggmlv3.q4_0.bin from June 24 2023) are directly compatible with this version of Sherpa.

Additionally, you can fine-tune the output with preprompts to improve its performance.
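As an illustration of what a preprompt does, the sketch below prepends a fixed instruction to the user's message before it is sent to the model. The `build_prompt` function and the `User:` / `Assistant:` layout are assumptions made for this example. They are not Sherpa's actual prompt template, and the best layout depends on the model you load.

```python
def build_prompt(preprompt: str, user_message: str) -> str:
    """Prepend a preprompt to the user's message (hypothetical layout)."""
    # The preprompt sets the tone and rules; the trailing "Assistant:"
    # cues the model to continue with its reply.
    return f"{preprompt.strip()}\n\nUser: {user_message.strip()}\nAssistant:"


if __name__ == "__main__":
    print(build_prompt(
        "You are a concise assistant. Answer in one or two sentences.",
        "What is llama.cpp?",
    ))
```

Changing only the preprompt (for example, asking for shorter or more formal answers) is a cheap way to steer the output without touching the model file.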

To use this app, follow these steps:

- Download the GGML model from your chosen source.

- Rename the downloaded file to `ggml-model.bin`.

- Place the file in your device's download folder.

- Run the app on your mobile device.
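If you prepare the file on a desktop machine first, the rename and placement steps above can be sketched as a small script. This is only an illustration: the `install_model` helper is hypothetical, and the location of the download folder is an assumption that differs between devices and operating systems.

```python
import shutil
from pathlib import Path

TARGET_NAME = "ggml-model.bin"  # file name given in the steps above


def install_model(downloaded: Path, download_dir: Path) -> Path:
    """Copy a downloaded model into `download_dir` under the expected name."""
    if not downloaded.is_file():
        raise FileNotFoundError(f"model file not found: {downloaded}")
    download_dir.mkdir(parents=True, exist_ok=True)
    target = download_dir / TARGET_NAME
    # Copy rather than move, so the original download is left untouched.
    shutil.copy2(downloaded, target)
    return target


if __name__ == "__main__":
    # Both paths are assumptions; adjust them to your own system.
    source = Path.home() / "Downloads" / "orca-mini-3b.ggmlv3.q4_0.bin"
    print(install_model(source, Path.home() / "Downloads"))
```

Copying keeps the original file name around, which makes it easier to remember which model and quantization `ggml-model.bin` actually contains.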

Please note that the LLaMA models are owned and officially distributed by Meta. This app only serves as a demo of the models' capabilities and functionality. The developers of this app do not provide the LLaMA models and are not responsible for any issues related to their usage.