
ImproveQuality

There are a variety of reasons you might not get good quality output from Tesseract. It's important to note that, unless you're using a very unusual font or a new language, retraining Tesseract is unlikely to help.

- Image processing

- Page segmentation method

- Dictionaries, word lists, and patterns

- Still having problems?

Tesseract does various image processing operations internally (using the Leptonica library) before doing the actual OCR. It generally does a very good job of this, but there will inevitably be cases where it isn't good enough, which can result in a significant reduction in accuracy.

You can see how Tesseract has processed the image by setting the configuration variable tessedit_write_images to true (or by using the config file get.images) when running Tesseract. If the resulting tessinput.tif file looks problematic, try some of these image processing operations before passing the image to Tesseract.
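As a rough sketch of the step above, the following Python helper builds a command line that sets tessedit_write_images through the `-c` option. The function name and the file names are illustrative assumptions; only the variable name comes from the text.

```python
def debug_image_command(image_path, output_base):
    """Build a tesseract command that also saves the preprocessed image.

    The helper name and arguments are hypothetical; the configuration
    variable tessedit_write_images is the one named in the text.
    """
    return [
        "tesseract", image_path, output_base,
        # Ask Tesseract to write its internally processed image (tessinput.tif).
        "-c", "tessedit_write_images=true",
    ]
```

The resulting list can be passed to `subprocess.run(cmd, check=True)`. Appending the config file name `get.images` after the output base should be an equivalent alternative to the `-c` pair.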

Tesseract 3.05 and older handle inverted images (light text on a dark background) without problems, but for 4.x you should use dark text on a light background.
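One way to prepare inverted input for 4.x is sketched below in plain Python. It assumes an 8-bit grayscale image stored as a list of rows, and it uses mean brightness below 128 as a crude, assumed test for "dark background"; neither the representation nor the heuristic comes from Tesseract itself.

```python
def ensure_dark_on_light(pixels):
    """Invert an 8-bit grayscale image if its background looks dark.

    `pixels` is a list of rows of ints in 0..255 (an assumed representation).
    The mean-brightness test is a simple heuristic, not Tesseract's own logic.
    """
    total = sum(sum(row) for row in pixels)
    count = sum(len(row) for row in pixels)
    if count and total / count < 128:
        # Mostly dark image: flip every value so text becomes dark on light.
        return [[255 - value for value in row] for row in pixels]
    # Already light: return an unmodified copy.
    return [list(row) for row in pixels]
```

The heuristic can misfire on pages that are mostly covered by dark graphics, so check a few samples before relying on it.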

Tesseract works best on images with a resolution of at least 300 dpi, so it may be beneficial to resize images. For more information see the FAQ.

"Willus Dotkom" ran an interesting test of optimal image resolution, with a suggestion for the optimal height of capital letters in pixels.

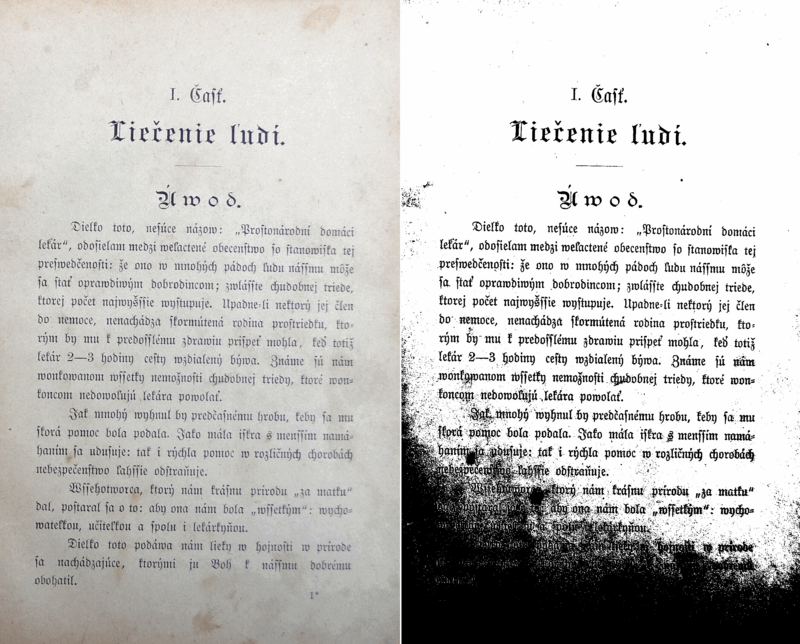

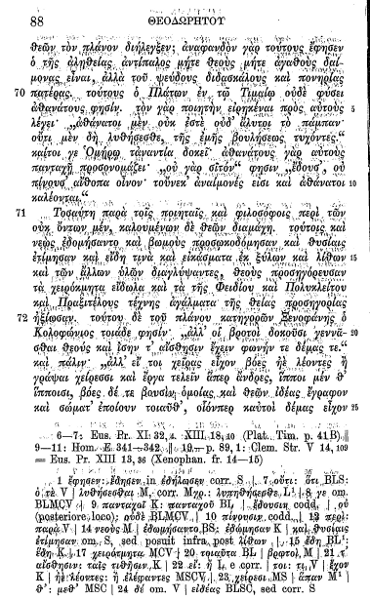

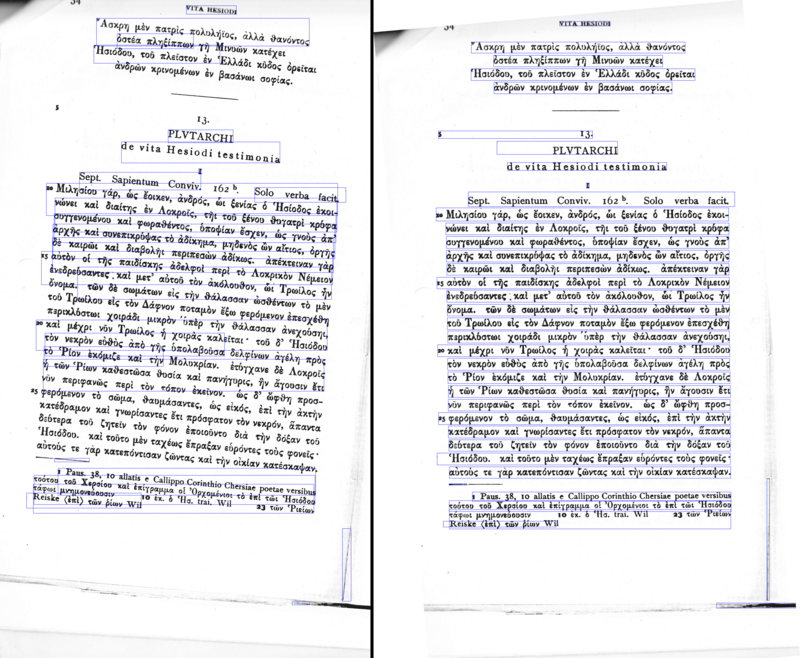

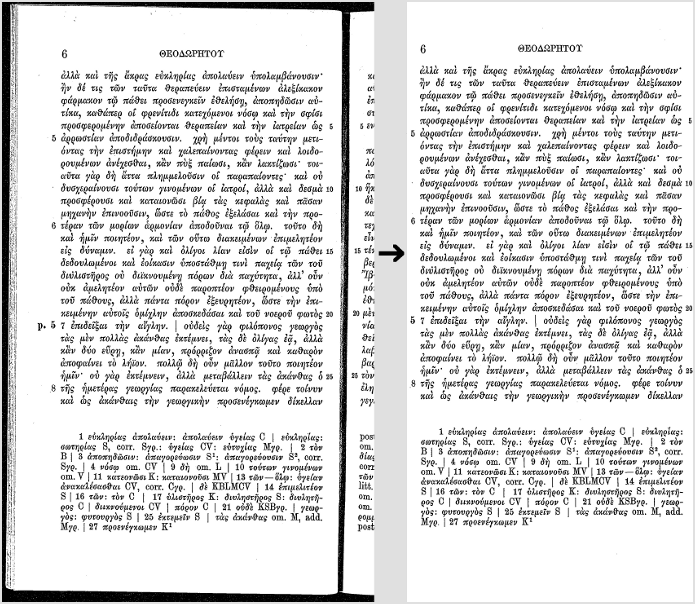
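For the 300 dpi guideline mentioned earlier, the target size can be worked out before resizing with any imaging tool. This is a minimal sketch; the function name is hypothetical and it assumes you know (or can estimate) the current resolution of the scan.

```python
def size_for_target_dpi(width, height, current_dpi, target_dpi=300):
    """Return (new_width, new_height, factor) needed to reach target_dpi.

    Images already at or above the target are left at their original size.
    """
    if current_dpi <= 0:
        raise ValueError("current_dpi must be positive")
    # Only scale up; never shrink an image that already has enough resolution.
    factor = max(1.0, target_dpi / current_dpi)
    return round(width * factor), round(height * factor), factor
```

For example, a 150 dpi scan of 800 x 600 pixels would need to be doubled to 1600 x 1200.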

Binarisation is the conversion of an image to black and white. Tesseract does this internally (using the Otsu algorithm), but the result can be suboptimal, particularly if the page background is of uneven darkness.

If you cannot fix this by providing a better input image, you can try a different algorithm. See ImageJ Auto Threshold (Java), OpenCV Image Thresholding (Python), or the scikit-image Thresholding documentation (Python).
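To illustrate what a "different algorithm" can look like, here is a small pure-Python sketch of local mean (adaptive) thresholding, which compares each pixel with its neighbourhood instead of one global value. This is not what Tesseract does internally; the `radius` and `offset` defaults are arbitrary assumptions, and the image is assumed to be a list of rows of 8-bit grayscale values.

```python
def adaptive_mean_threshold(pixels, radius=1, offset=10):
    """Binarise a grayscale image using the mean of each pixel's neighbourhood.

    A pixel becomes black (0) when it is darker than the local mean minus
    `offset`, otherwise white (255).
    """
    height = len(pixels)
    width = len(pixels[0]) if height else 0
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            # Collect the neighbourhood, clipped at the image edges.
            window = [
                pixels[j][i]
                for j in range(max(0, y - radius), min(height, y + radius + 1))
                for i in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            local_mean = sum(window) / len(window)
            row.append(0 if pixels[y][x] < local_mean - offset else 255)
        result.append(row)
    return result
```

Because the decision is local, a stroke on a dim part of the page is still separated from its background, where a single global cut-off might turn the whole region black. Library implementations (for example in OpenCV or scikit-image) are much faster and are the better choice for real images.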

Noise is random variation of brightness or colour in an image that can make the text more difficult to read. Certain types of noise cannot be removed by Tesseract in the binarisation step, which can cause accuracy rates to drop.
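A median filter is one common way to suppress isolated speckles ("salt and pepper" noise) before OCR. The sketch below is a plain-Python illustration under the assumption that the image is a list of rows of 8-bit grayscale values; it is not part of Tesseract.

```python
from statistics import median_low


def median_filter(pixels, radius=1):
    """Replace each pixel with the median of its neighbourhood."""
    height = len(pixels)
    width = len(pixels[0]) if height else 0
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            # Neighbourhood clipped at the image edges.
            window = [
                pixels[j][i]
                for j in range(max(0, y - radius), min(height, y + radius + 1))
                for i in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            # median_low always returns a value that occurs in the window.
            row.append(median_low(window))
        result.append(row)
    return result
```

Keep the radius small: a large window also erases thin strokes and punctuation, which can hurt accuracy more than the noise did.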

A skewed image results when a page is scanned while not straight. The quality of Tesseract's line segmentation drops significantly if a page is too skewed, which severely impacts the quality of the OCR. To address this, rotate the page image so that the text lines are horizontal.
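If you can locate a few points along one text baseline (by hand or with another tool), the skew angle can be estimated with a least-squares line fit. This is only a sketch: the input format and function name are assumptions, and finding the baseline points is left out entirely.

```python
import math


def estimate_skew_degrees(baseline_points):
    """Estimate the angle of a text line from (x, y) points along its baseline.

    Returns the angle of the fitted line in degrees, measured in the
    coordinate system of the points that were passed in.
    """
    n = len(baseline_points)
    if n < 2:
        raise ValueError("need at least two points")
    mean_x = sum(x for x, _ in baseline_points) / n
    mean_y = sum(y for _, y in baseline_points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in baseline_points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in baseline_points)
    if sxx == 0:
        raise ValueError("points must not share a single x coordinate")
    # The least-squares slope is sxy / sxx; atan2 turns it into an angle.
    return math.degrees(math.atan2(sxy, sxx))
```

Rotating the image by the opposite angle should make the line horizontal. Check the sign convention of your imaging library, since image coordinates usually have the y axis pointing down.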

Scanned pages often have dark borders around them. These can be erroneously picked up as extra characters, especially if they vary in shape and gradation.

If you OCR just the text area without any border, Tesseract could have problems with it. See tesseract user forum#427 for some details. You can easily add a small border (e.g. 10 pt) with ImageMagick®:

convert 427-1.jpg -bordercolor White -border 10x10 427-1b.jpg
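The same padding can be sketched in plain Python, assuming a grayscale image held as a list of rows of 8-bit values. The function name and defaults are illustrative and simply mirror the white 10-pixel border of the ImageMagick command.

```python
def add_border(pixels, border=10, fill=255):
    """Surround a grayscale image with a solid border of `fill` pixels."""
    width = len(pixels[0]) if pixels else 0
    blank = [fill] * (width + 2 * border)
    # Rows above the original image.
    padded = [list(blank) for _ in range(border)]
    # Original rows, padded on the left and right.
    for row in pixels:
        padded.append([fill] * border + list(row) + [fill] * border)
    # Rows below the original image.
    padded.extend(list(blank) for _ in range(border))
    return padded
```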

Some image formats (e.g. PNG) can have an alpha channel to provide transparency.

Tesseract 3.0x expects users to remove the alpha channel from an image before passing it to Tesseract. This can be done, for example, with the ImageMagick command:

convert input.png -alpha off output.png

Tesseract 4.00 removes the alpha channel with the Leptonica function pixRemoveAlpha(), which removes the alpha component by blending with a white background. In some cases (e.g. OCR of movie subtitles) this can lead to problems, so users would need to remove the alpha channel (or pre-process the image by inverting its colors) themselves.
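The subtitle problem is easier to see with a small sketch of alpha blending. The code below is an illustration of the general technique, not Leptonica's implementation; it assumes pixels are (R, G, B, A) tuples with 8-bit channels, and the function name is hypothetical.

```python
def flatten_alpha(rgba_pixels, background=(255, 255, 255)):
    """Blend RGBA pixels over a solid background and drop the alpha channel."""
    flattened = []
    for row in rgba_pixels:
        out_row = []
        for r, g, b, a in row:
            alpha = a / 255
            # Standard "over" blend of each channel with the background colour.
            out_row.append(tuple(
                round(channel * alpha + bg * (1 - alpha))
                for channel, bg in zip((r, g, b), background)
            ))
        flattened.append(out_row)
    return flattened
```

With opaque white subtitle text on a fully transparent background, blending over white makes the text indistinguishable from the background. Blending over black (`background=(0, 0, 0)`) keeps it visible, after which the image can be inverted to get dark text on a light background.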

- Leptonica

- OpenCV

- ScanTailor Advanced

- ImageMagick

- unpaper

- ImageJ

- Gimp

- PRLib - Pre-Recognize Library with algorithms for improving OCR quality

If you need an example of how to improve image quality programmatically, have a look at these examples:

- OpenCV - Rotation (Deskewing) - c++ example

- Fred's ImageMagick TEXTCLEANER - bash script for processing a scanned document of text to clean the text background.

- rotation_spacing.py - python script for automatic detection of rotation and line spacing of an image of text

- crop_morphology.py - Finding blocks of text in an image using Python, OpenCV and numpy

- Credit card OCR with OpenCV and Python

- noteshrink - Python example of how to clean up scans. Details in the blog post Compressing and enhancing hand-written notes.

- unproject text - Python example of how to recover the perspective of an image. Details in the blog post Unprojecting text with ellipses.

- page_dewarp - Python example of text page dewarping using a "cubic sheet" model. Details in the blog post Page dewarping.

By default Tesseract expects a page of text when it segments an image. If you're just seeking to OCR a small region try a different segmentation mode, using the --psm argument. Note that adding a white border to text which is too tightly cropped may also help, see issue 398.

To see a complete list of supported page segmentation modes, use tesseract -h. Here's the list as of 3.21:

0 Orientation and script detection (OSD) only.

1 Automatic page segmentation with OSD.

2 Automatic page segmentation, but no OSD, or OCR.

3 Fully automatic page segmentation, but no OSD. (Default)

4 Assume a single column of text of variable sizes.

5 Assume a single uniform block of vertically aligned text.

6 Assume a single uniform block of text.

7 Treat the image as a single text line.

8 Treat the image as a single word.

9 Treat the image as a single word in a circle.

10 Treat the image as a single character.

11 Sparse text. Find as much text as possible in no particular order.

12 Sparse text with OSD.

13 Raw line. Treat the image as a single text line, bypassing hacks that are Tesseract-specific.
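As a small illustration of selecting one of the modes listed above, the following Python helper builds a command line with the --psm argument. The helper name and file names are assumptions; the range check simply reflects the modes 0 to 13 in the list.

```python
def psm_command(image_path, output_base, psm=3):
    """Build a tesseract command line that uses the given segmentation mode."""
    if not 0 <= psm <= 13:
        raise ValueError("psm must be between 0 and 13")
    return ["tesseract", image_path, output_base, "--psm", str(psm)]
```

For a tightly cropped single line of text, `psm_command("line.png", "out", psm=7)` would be a reasonable first attempt; the list can be run with `subprocess.run(cmd, check=True)`.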

By default Tesseract is optimized to recognize sentences of words. If you're trying to recognize something else, like receipts, price lists, or codes, there are a few things you can do to improve the accuracy of your results, as well as double-checking that the appropriate segmentation method is selected.

Disabling the dictionaries Tesseract uses should improve recognition accuracy if most of your text isn't dictionary words. They can be disabled by setting both of the configuration variables load_system_dawg and load_freq_dawg to false.
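A minimal sketch of passing both variables on the command line is shown below. The helper and the `NO_DICTIONARY` constant are hypothetical names; the two variable names are the ones given in the text, passed through the `-c` option.

```python
def config_flags(variables):
    """Turn a mapping of configuration variables into -c command-line flags."""
    flags = []
    for name, value in variables.items():
        if isinstance(value, bool):
            # Tesseract expects lowercase true/false for boolean variables.
            value = "true" if value else "false"
        flags += ["-c", f"{name}={value}"]
    return flags


# Both dictionaries switched off, as described in the text.
NO_DICTIONARY = {"load_system_dawg": False, "load_freq_dawg": False}
```

The flags are appended after the image and output base, for example `["tesseract", "codes.png", "out"] + config_flags(NO_DICTIONARY)`.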

It is also possible to add words to the word list Tesseract uses to help recognition, or to add common character patterns, which can further help to improve accuracy if you have a good idea of the sort of input you expect. This is explained in more detail in the Tesseract manual.
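As a sketch of the word-list approach, the helper below writes a plain text file with one word per line and returns the matching `--user-words` option for the command line. The function name and the sample words are assumptions; consult the manual for how your Tesseract version and language model treat user words.

```python
from pathlib import Path


def write_user_words(words, path):
    """Write a user word list (one word per line) and return the CLI option."""
    # Drop empty entries and surrounding whitespace.
    cleaned = [word.strip() for word in words if word.strip()]
    Path(path).write_text("\n".join(cleaned) + "\n", encoding="utf-8")
    return ["--user-words", str(path)]
```

The returned pair can be appended to a command such as `["tesseract", "receipt.png", "out"]`.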

If you know you will only encounter a subset of the characters available in the language, such as only digits, you can use the tessedit_char_whitelist configuration variable. See the FAQ for an example. Note this feature is not supported in Tesseract 4. See here for a workaround.
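For versions that honour the variable, a whitelist can be passed with the `-c` option. The helper below is a hypothetical convenience wrapper that removes duplicate characters and builds the flag; it does not check whether the installed Tesseract version supports the feature.

```python
import string


def whitelist_flag(allowed_characters):
    """Build the -c flag that restricts recognition to the given characters."""
    if not allowed_characters:
        raise ValueError("allowed_characters must not be empty")
    # Remove duplicates while keeping the original order.
    unique = "".join(dict.fromkeys(allowed_characters))
    return ["-c", f"tessedit_char_whitelist={unique}"]
```

For digits only, `whitelist_flag(string.digits)` yields the flag to append to the tesseract command.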

If you've tried the above and are still getting low accuracy results, ask on the forum for help, ideally posting an example image.

Old wiki - no longer maintained. The pages were moved, see the new documentation.


All pages were moved to tesseract-ocr/tessdoc.

The latest documentation is available at https://tesseract-ocr.github.io/.