-

-

Notifications

You must be signed in to change notification settings - Fork 16.3k

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Reduce inference time #6736

Comments

|

👋 Hello @devendraswamy, thank you for your interest in YOLOv5 🚀! Please visit our ⭐️ Tutorials to get started, where you can find quickstart guides for simple tasks like Custom Data Training all the way to advanced concepts like Hyperparameter Evolution. If this is a 🐛 Bug Report, please provide screenshots and minimum viable code to reproduce your issue, otherwise we can not help you. If this is a custom training ❓ Question, please provide as much information as possible, including dataset images, training logs, screenshots, and a public link to online W&B logging if available. For business inquiries or professional support requests please visit https://ultralytics.com or email support@ultralytics.com. RequirementsPython>=3.7.0 with all requirements.txt installed including PyTorch>=1.7. To get started: git clone https://github.com/ultralytics/yolov5 # clone

cd yolov5

pip install -r requirements.txt # installEnvironmentsYOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including CUDA/CUDNN, Python and PyTorch preinstalled):

StatusIf this badge is green, all YOLOv5 GitHub Actions Continuous Integration (CI) tests are currently passing. CI tests verify correct operation of YOLOv5 training (train.py), validation (val.py), inference (detect.py) and export (export.py) on MacOS, Windows, and Ubuntu every 24 hours and on every commit. |

|

@devendraswamy 👋 Hello! Thanks for asking about inference speed issues. YOLOv5 🚀 can be run on CPU (i.e. detect.py inferencepython detect.py --weights yolov5s.pt --img 640 --conf 0.25 --source data/images/YOLOv5 PyTorch Hub inferenceimport torch

# Model

model = torch.hub.load('ultralytics/yolov5', 'yolov5s')

# Images

dir = 'https://ultralytics.com/images/'

imgs = [dir + f for f in ('zidane.jpg', 'bus.jpg')] # batch of images

# Inference

results = model(imgs)

results.print() # or .show(), .save()

# Speed: 631.5ms pre-process, 19.2ms inference, 1.6ms NMS per image at shape (2, 3, 640, 640)Increase SpeedsIf you would like to increase your inference speed some options are:

Good luck 🍀 and let us know if you have any other questions! |

Thank you for your valuable reply and I am using yolov5s model with 640 image size and pytorch 1.10.1 and also I tried the inference with ONNX model with 640 image size but my inference time is 2sec only. As for now my prediction time is 2 sec for 4 models. if possible please guide me to reduce the inference time to 1sec. |

Any one please help to reduce the inference time. Thank you. |

|

@devendraswamy did you attempt using a half-precision model? If you have a NVIDIA GPU available, the easiest way to speed up inference may be to use TensorRT:

|

|

Thank you for your valuable reply and here the problem is the machine will

continuously run with CPU support only. That's why i didn't tried with half

precision method. If any suggestions are their, Kindly reply. Thank you in

advance for your support.

…On Mon, Mar 7, 2022, 18:32 DavidB ***@***.***> wrote:

@devendraswamy <https://github.com/devendraswamy> did you attempt using a

half-precision model?

If you have a NVIDIA GPU available, the easiest way to speed up inference

may be to use TensorRT:

python export.py --weights my-weights.pt --include engine --device 0 then python

detect.py --weights my-weights.engine --device 0

—

Reply to this email directly, view it on GitHub

<#6736 (comment)>,

or unsubscribe

<https://github.com/notifications/unsubscribe-auth/ALLD6LSOLFO5XGUXRZOVPXLU6X46DANCNFSM5PANXNMA>

.

Triage notifications on the go with GitHub Mobile for iOS

<https://apps.apple.com/app/apple-store/id1477376905?ct=notification-email&mt=8&pt=524675>

or Android

<https://play.google.com/store/apps/details?id=com.github.android&referrer=utm_campaign%3Dnotification-email%26utm_medium%3Demail%26utm_source%3Dgithub>.

You are receiving this because you were mentioned.Message ID:

***@***.***>

|

|

Is your goal increasing throughput or reducing latency? If it is about throughput, you could try increasing batch size. Latency is more difficult to increase, especially on CPU-bound devices. Do you use a custom trained model? |

|

My goal is reduce the inference time and I'm using custom model trained

weights for inference.

Actually it's taking 2 sec for inference the all the four objects with four

different weight files at a once.

I need to decreases the four models inference time to 1 sec , any help

grateful to you.

…On Mon, Mar 7, 2022, 18:44 DavidB ***@***.***> wrote:

Is your goal increasing throughput or reducing latency? If it is about

throughput, you could try increasing batch size. Latency is more difficult

to increase, especially on CPU-bound devices. Do you use a custom trained

model?

—

Reply to this email directly, view it on GitHub

<#6736 (comment)>,

or unsubscribe

<https://github.com/notifications/unsubscribe-auth/ALLD6LTBSEFE47MRD45PN73U6X6LFANCNFSM5PANXNMA>

.

Triage notifications on the go with GitHub Mobile for iOS

<https://apps.apple.com/app/apple-store/id1477376905?ct=notification-email&mt=8&pt=524675>

or Android

<https://play.google.com/store/apps/details?id=com.github.android&referrer=utm_campaign%3Dnotification-email%26utm_medium%3Demail%26utm_source%3Dgithub>.

You are receiving this because you were mentioned.Message ID:

***@***.***>

|

|

Is there a reason you are using four different models as opposed to one? Do I understand correctly that you try to run inference on a single image with four different models simultaneously? |

|

Thank you for your support . The hardware will gives four parts of image , i have to detect the objects in that four parts image and gives the results to front end with in 1 sec. currently its taking 2 sec , any suggestions kindly let me know. |

|

@devendraswamy have you tried passing all four images at once by using batch-size 4? |

That was not possible because four images are predicted with four different weight files , any other suggestions ,please let me know. |

|

@devendraswamy individual models may provide the absolute best mAP, but for most use cases best practice would be to combine your four datasets into one 4-class dataset and train a single model on it. See https://community.ultralytics.com/t/how-to-combine-weights-to-detect-from-multiple-datasets/38/9 |

|

👋 Hello, this issue has been automatically marked as stale because it has not had recent activity. Please note it will be closed if no further activity occurs. Access additional YOLOv5 🚀 resources:

Access additional Ultralytics ⚡ resources:

Feel free to inform us of any other issues you discover or feature requests that come to mind in the future. Pull Requests (PRs) are also always welcomed! Thank you for your contributions to YOLOv5 🚀 and Vision AI ⭐! |

|

But As per Accuracy concern , I need to to train the models individually , Please let me know any another solution for Inference time reduction , I train the all the model with 640 image resolution and I am using onnx models. Thank you in advance |

Can you share how u reduce inference time |

|

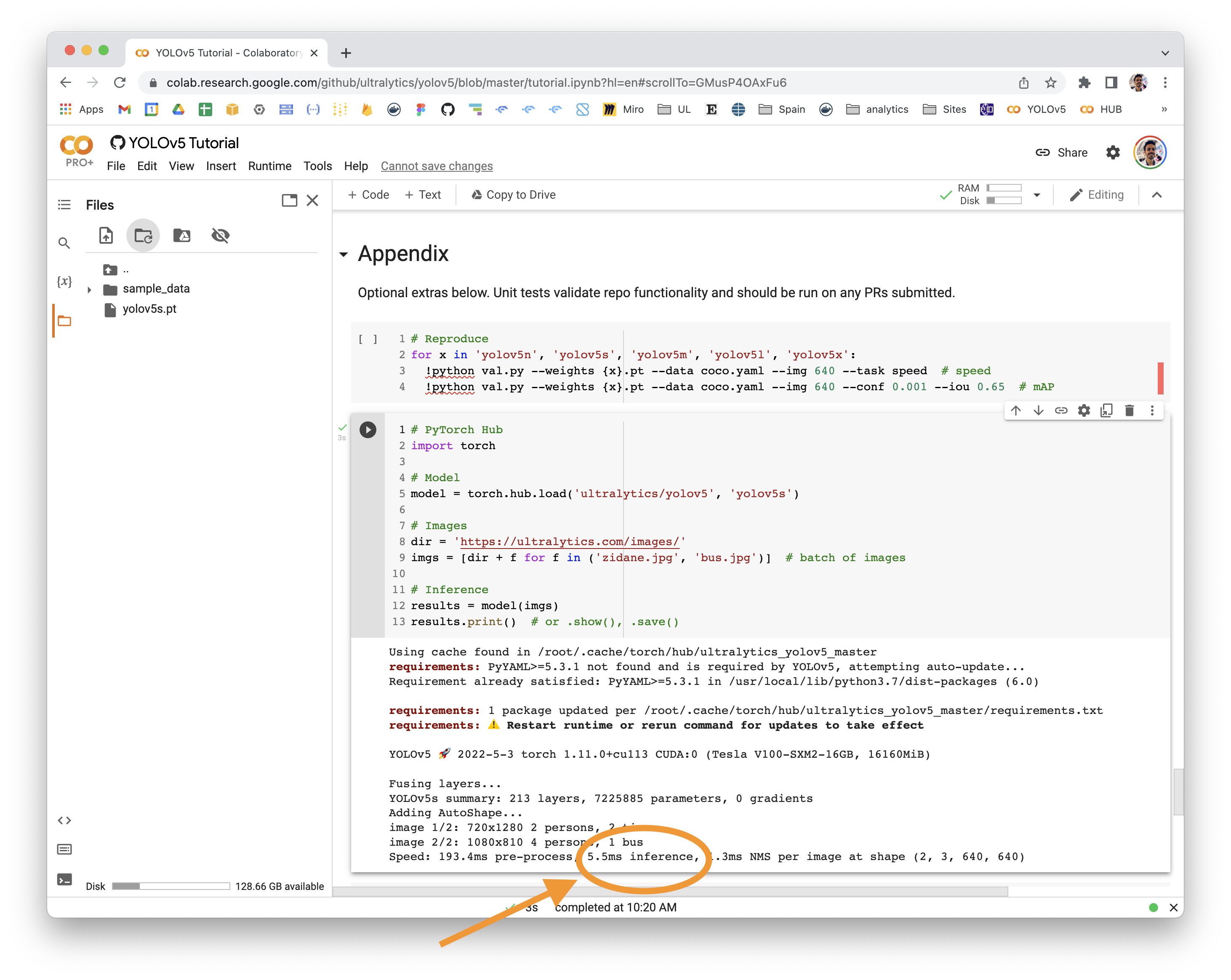

@PankajBarai 👋 Hello! Thanks for asking about inference speed issues. PyTorch Hub speeds will vary by hardware, software, model, inference settings, etc. Our default example in Colab with a V100 looks like this: YOLOv5 🚀 can be run on CPU (i.e. detect.py inferencepython detect.py --weights yolov5s.pt --img 640 --conf 0.25 --source data/images/YOLOv5 PyTorch Hub inferenceimport torch

# Model

model = torch.hub.load('ultralytics/yolov5', 'yolov5s')

# Images

dir = 'https://ultralytics.com/images/'

imgs = [dir + f for f in ('zidane.jpg', 'bus.jpg')] # batch of images

# Inference

results = model(imgs)

results.print() # or .show(), .save()

# Speed: 631.5ms pre-process, 19.2ms inference, 1.6ms NMS per image at shape (2, 3, 640, 640)Increase SpeedsIf you would like to increase your inference speed some options are:

Good luck 🍀 and let us know if you have any other questions! |

Search before asking

Question

Need a solution/suggestion for reducing the 2sec inference time to less then 1 sec.

Additional

Hi, I developed a 4 models for 4 object detectionsin single inference script and I was successfully inference the 4 models with 2 sec at once. Actually I need a solution for reducing the 2sec inference time to less then 1 sec. I'm trying with torch.set_num_threads( ) and multiprocessing separately but I did not getting results in 1 sec. kindly help me to reduce the inference time(in cpu). Thank you in advance.

The text was updated successfully, but these errors were encountered: