Google Cloud Run - FAQ

⚠️ Beware: This is a community-maintained informal knowledge base.

- It does not reflect Google’s product roadmap. (Please don't ask when a feature will ship)

- Refer to the Cloud Run documentation for the most up-to-date information.

Googlers: If you find this repo useful, you should recognize the work internally, as I actively fight for alternative forms of content like this.

- Is this repo useful? Please ⭑Star this repository and share the love.

- Curious about something? Open an issue, someone may be able to add it to the FAQ.

- Contribute if you learned something interesting about Cloud Run.

- Trouble using Cloud Run? Ask a question on Stack Overflow.

- Check out awesome-cloudrun for a curated list of Cloud Run articles, tools and examples.

- Follow me on Twitter as I frequently share Cloud Run news and tips.

- Basics

- What is Cloud Run?

- How is it different than App Engine Flexible?

- How is it different than Google Cloud Functions?

- How does it compare to AWS Fargate?

- How does it compare to AWS Lambda Container Image support?

- How does it compare to Azure Container Instances?

- What is "Cloud Run for Anthos"?

- Is Cloud Run a "hosted Knative"?

- Developing Applications

- Which applications are suitable for Cloud Run?

- What if my application is doing background work outside of request processing?

- Which languages can I run on Cloud Run?

- Can I run my own system libraries and tools?

- Where do I get started to deploy a HTTP web server container?

- How do I make my web application compatible with Cloud Run?

- Can Cloud Run receive events?

- Is Cloud Run good for running static websites?

- How can I have cronjobs on Cloud Run?

- Can I run a container only once on Cloud Run?

- How to configure secrets for Cloud Run applications?

- Can I mount storage volumes or disks on Cloud Run?

- Deploying

- How do I continuously deploy to Cloud Run?

- Which container registries can I deploy from?

- How can I deploy from other GCR registries?

- How to do canary or blue/green deployments on Cloud Run?

- How can I specify Google credentials in Cloud Run applications?

- Can I use kubectl to deploy to Cloud Run?

- Can I use Terraform to deploy to Cloud Run?

- Cold Starts

- Container Lifecycle

- Serving Traffic

- Which network protocols are supported on Cloud Run?

- Customizing port number on Cloud Run?

- What's the maximum request execution time limit?

- Does my service get a domain name on Cloud Run?

- Are all Cloud Run services publicly accessible?

- Can I run Cloud Run applications on a private IP?

- How much additional latency does running on Cloud Run add?

- Does my application get multiple requests concurrently?

- What if my application can’t handle concurrent requests?

- How do I find the right concurrency level for my application?

- Can I make a request to a specific container instance?

- Can I add Cloud Run services as backends to Cloud HTTP(S) Load Balancer?

- How does Cloud Run’s load balancing compare with Cloud Load Balancer (GCLB)?

- How can I configure CDN for Cloud Run services?

- Does Cloud Run offer SSL/TLS certificates (HTTPS)?

- How can I use my own TLS certificates for Cloud Run?

- How can I redirect all HTTP traffic to HTTPS?

- Is traffic between my app and Cloud Run’s load balancer encrypted?

- Does Cloud Run support load balancing among multiple regions?

- Is HTTP/2 supported on Cloud Run?

- Can my application server run on HTTP/2 protocol?

- Is gRPC supported on Cloud Run?

- How can I serve responses larger than 32MB with Cloud Run?

- Are WebSockets supported on Cloud Run?

- Microservices

- Autoscaling

- Runtime

- Which operating system Cloud Run applications run on?

- Can I use the local filesystem?

- Which system calls are supported?

- Which executable ABIs are supported?

- Where can I find the "instance ID" of my container?

- How can I find the number of instances running?

- How can my service tell it is running on Cloud Run?

- Is there a way to get static IP for outbound requests?

- VPC Support

- Monitoring and Logging

- Pricing

Cloud Run is a service by Google Cloud Platform to run your stateless HTTP containers without worrying about provisioning machines, clusters or autoscaling.

With Cloud Run, you go from a "container image" to a fully managed web application running on a domain name with TLS certificate that auto-scales with requests in a single command. You only pay while a request is handled.

GAE Flexible and Cloud Run are very similar. They both accept container images as deployment input, they both auto-scale, and manage the infrastructure your code runs on for you. However:

- GAE Flexible is built on VMs, so it is slower to deploy and scale.

- GAE Flexible does not scale to zero; at least 1 instance must be running.

- GAE Flexible bills at 1-minute granularity; Cloud Run bills at 0.1-second granularity.

Read more about choosing a container option on GCP.

GCF lets you deploy snippets of code (functions) written in a limited set of programming languages, to natively handle HTTP requests or events from many GCP sources.

Cloud Run lets you deploy using any programming language, since it accepts container images (more flexible, but also potentially more tedious to develop). It also allows using any tool or system library from your application (see here) and GCF doesn’t let you use such custom system executables.

Cloud Run can only receive HTTP requests or Pub/Sub push events. (See this tutorial).

Both services auto-scale your code, manage the infrastructure your code runs on and they both run on GCP’s serverless infrastructure.

Read more about choosing between GCP's serverless options

AWS Fargate and Cloud Run both let you run containers without managing the underlying infrastructure.

- Fargate can run a wide range of container workloads, including but not limited to HTTP servers, background or long running tasks.

- Fargate requires an ECS cluster to run tasks on. This cluster doesn't expose the underlying VM infrastructure to you. However, while using Fargate, you still need to configure infrastructure aspects like VPCs, subnets, load balancers, auto-scaling, health checks and service discovery.

- Fargate also has a more complex resource model than Cloud Run: it doesn't offer request-based autoscaling, scale-to-zero, or concurrency control, and it exposes container instances and their lifecycle to you.

Cloud Run is a standalone compute platform, abstracting cluster management and focusing on fast automatic scaling. Cloud Run supports running only HTTP servers, and therefore can do request-aware autoscaling, as well as scale-to-zero.

The pricing model is also different:

- On Cloud Run, you only pay while a request is being handled.

- On AWS Fargate, you pay for CPU/memory while containers are running, and since Fargate doesn't support scale-to-zero, a service receiving no traffic will still incur costs.

AWS Lambda has recently added support for running container images.

These images must either be based on an AWS-provided runtime image (i.e. limited language support) such as public.ecr.aws/lambda/nodejs:12, or include your own Runtime API implementation to be able to run arbitrary container images.

- Cloud Run can run any container image that runs an HTTP server.

- AWS Lambda cannot run arbitrary container images. You have to either use an AWS-provided image, or code your own Runtime API translation layer.

- AWS Lambda doesn't support handling multiple requests simultaneously on the same instance, and each request is billed separately.

- A single Cloud Run container instance can handle multiple requests simultaneously and you aren't charged for them separately. (see: When am I charged?)

Azure Container Instances and Cloud Run both let you run containers without managing the underlying infrastructure (VMs, clusters). Both ACI and Cloud Run give you a publicly accessible endpoint after deploying the application.

Cloud Run supports running only HTTP servers and offers auto-scaling, and scale to zero. ACI is for long-running containers. Therefore, the pricing model is different. On Cloud Run, you only pay while a request is being handled.

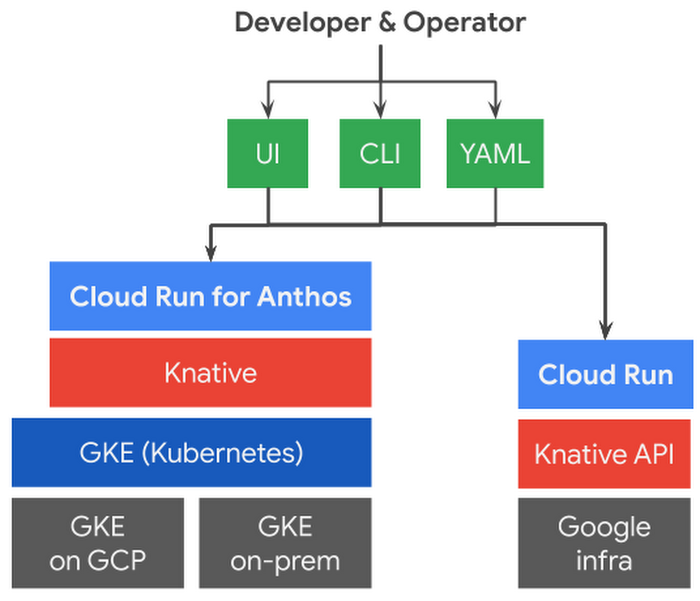

"Cloud Run for Anthos" gives you the same Cloud Run experience on your Kubernetes clusters on Anthos (either on GCP with GKE, or on-prem/other clouds). This gives you the freedom to choose where you want to deploy your applications.

"Cloud Run" and "Cloud Run for Anthos" are the same product, but running in different places:

- the same application format (container images)

- the same deployment/management experience (gcloud or Cloud Console)

- the same API (Knative serving API).

Look at this diagram, or watch this video to decide how to choose between the two.

Cloud Run for Anthos basically installs and manages a Knative installation (with some additional GCP-specific components for monitoring etc) on your Kubernetes cluster so that you don’t have to worry about installing and managing Knative yourself.

Sort of.

Cloud Run implements most parts of the Knative Serving API. However, the underlying implementation of the functionality could differ from the open source Knative implementation.

With Cloud Run for Anthos, you actually get a Knative installation (managed by Google) on your Kubernetes/GKE cluster

Cloud Run is designed to run stateless request-driven containers.

This means you can deploy:

- publicly accessible applications: web applications, APIs or webhooks

- private microservices: internal microservices, data transformation, background jobs, potentially triggered asynchronously by Pub/Sub events or Cloud Tasks.

Other kinds of applications may not be fit for Cloud Run.

If your application is doing processing while it’s not handling requests or storing in-memory state, it may not be suitable.

Your application’s CPU is significantly throttled nearly down to zero while it's not handling a request.

Therefore, your application should limit CPU usage outside request processing to a minimum. It might not be entirely possible since the programming language you use might do garbage collection or similar runtime tasks in the background.

If an application can be packaged into a container image that can run on Linux (x86-64), it can be executed on Cloud Run.

Web applications written in languages like Node.js, Python, Go, Java, Ruby, PHP, Rust, Kotlin, Swift, C/C++, C# can work on Cloud Run.

🍄 Users managed to run web servers written in x86 assembly, or 22-year old Python 1.3 on Cloud Run.

Yes, see the section above. Since Cloud Run accepts container images as the deployment unit, you can add arbitrary executables (like grep, ffmpeg, imagemagick) or system libraries (.so, .dll) to your container image and use them in your application.

See this tutorial using Graphviz dot that generates PNG diagrams.

See Cloud Run Quickstart which has sample applications written in many languages.

Your existing applications must listen on the port number given in the PORT environment variable to work on Cloud Run (see container contract). This environment variable is given to your app by Cloud Run. It currently defaults to 8080 (but you should not rely on this) and you can customize this port number.
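As a minimal sketch of what "listening on PORT" can look like, the following uses only Python's standard library. The names `resolve_port`, `HelloHandler` and `serve` are hypothetical, and the 8080 fallback is only an assumption for local development, not something to rely on in production.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def resolve_port(environ=os.environ, fallback=8080):
    """Return the port from the PORT variable, or a local-development fallback."""
    return int(environ.get("PORT", fallback))


class HelloHandler(BaseHTTPRequestHandler):
    """Hypothetical handler that answers every GET with a short text body."""

    def do_GET(self):
        body = b"Hello from Cloud Run\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve():
    """Start the server; call this from your container's entrypoint."""
    # Bind to all interfaces so traffic from outside the container can reach it.
    server = HTTPServer(("0.0.0.0", resolve_port()), HelloHandler)
    server.serve_forever()
```

The port lookup is kept in its own function so it can be checked without starting a server.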

Yes.

Cloud Run integrates securely with Pub/Sub push subscriptions:

- Events are delivered via HTTP to the endpoint of your Cloud Run service.

- Pub/Sub automatically validates the ownership of the *.run.app Cloud Run URLs

- You can leverage Pub/Sub push authentication to securely and privately push events to Cloud Run services, without exposing them publicly to the internet.

Many GCP services like Google Cloud Storage are able to send events to a Pub/Sub topic. You can publish your own events to a Pub/Sub topic and push them to a Cloud Run service.

Follow this tutorial for instructions about how to push Pub/Sub events to Cloud Run services.
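On the receiving side, a push delivery arrives as an HTTP POST whose JSON body wraps the message, with the payload base64-encoded in the `data` field. The sketch below shows one way to unwrap it; `decode_push_message` is a hypothetical helper name, and it assumes the payload is UTF-8 text.

```python
import base64
import json


def decode_push_message(request_body):
    """Extract the payload and attributes from a Pub/Sub push request body."""
    envelope = json.loads(request_body)
    message = envelope["message"]
    # Pub/Sub base64-encodes the message payload in the "data" field.
    payload = base64.b64decode(message.get("data", "")).decode("utf-8")
    return payload, message.get("attributes", {})
```

Your handler would typically respond with a 2xx status after processing, so that Pub/Sub treats the message as acknowledged.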

Besides Pub/Sub, Google Cloud Eventarc (in preview) allows you to trigger Cloud Run from events that originate from Cloud Storage, BigQuery, Firestore and more than 60 other Google Cloud sources. See this blog post for details.

Potentially. Cloud Run has a generous free tier which can let you run your websites for free. However, if you have a static HTML website, using Firebase Hosting or Google Cloud Storage buckets (behind Cloudflare for HTTPS+CDN) can also be similarly cheap or free.

If you need to invoke your Cloud Run applications periodically, use Google Cloud Scheduler. It can make a request to your application’s specific URL at an interval you specify.

Short answer: No. Cloud Run is not designed for this purpose.

Sometimes you might have a container-based job (run-to-completion task) that might seem suitable for Cloud Run. However, Cloud Run is designed for running server apps (HTTP/gRPC etc).

If you want to execute run-to-completion containers on-demand or periodically on Google Cloud Platform, you can create a Compute Engine VM with a container.

You can use Secret Manager with Cloud Run. Read how to write code and set permissions to access the secrets from your Cloud Run app in the documentation.

Alternatively, if you'd like to store secrets in Cloud Storage (GCS) using Cloud KMS envelope encryption, check out the Berglas tool and library (Berglas also has support for Secret Manager).

Cloud Run currently doesn’t offer a way to bind mount additional storage volumes (like FUSE, or persistent disks) on your filesystem. If you’re reading data from Google Cloud Storage, instead of using solutions like gcsfuse, you should use the supported Google Cloud Storage client libraries.

Cloud Run for Anthos, however, allows you to mount Kubernetes Secrets and ConfigMaps, although this is not yet fully supported. See an example here about mounting Secrets to a Service running on GKE.

- A lot of CI/CD tutorials at awesome-cloudrun repo

- Documentation: Continuous Deployment using Google Cloud Build

- Blog: Deploy using GitLab CI/CD

(If you know of articles about other CI/CD system integrations, add them here.)

For other CI/CD systems, roughly the steps you should follow look like:

- Create a new service account with a JSON key.

- Give the service account IAM permissions to deploy to Cloud Run:

  - roles/run.admin to deploy applications

  - roles/iam.serviceAccountUser on the service account that your app will use

- Upload the JSON key to the CI/CD environment, and authenticate to gcloud by calling:

      gcloud auth activate-service-account --key-file=[KEY_JSON_FILE]

- Deploy the app by calling:

      gcloud run deploy [MY_SERVICE] --image=[...] [...]

Cloud Run currently only allows deploying images hosted on Google Container Registry (*.gcr.io/*) and Cloud Artifact Registry (*.pkg.dev/*).

Deploying from external registries like Docker Hub is currently not supported.

If you're deploying from GCR registries on another GCP project:

- public registries: should deploy without additional configuration

- private registries: you need to give the service account used by Cloud Run access to GCR.

To give access, go to IAM&Admin on Cloud Console, and find the email for "Google Cloud Run Service Agent". Then follow this document to give this service account GCR access on the other project.

If you updated your Cloud Run service, you probably realized it creates a new revision for every new configuration of your service.

Cloud Run allows you to split traffic between multiple revisions, so you can do gradual rollouts such as canary deployments or blue/green deployments.

For applications running on Cloud Run, you don't need to deliver JSON keys for IAM Service Accounts, or set the GOOGLE_APPLICATION_CREDENTIALS environment variable.

Just specify the service account (--service-account) you want your application to use automatically while deploying the app. See configuring service identity.

Cloud Run supports the Knative Serving API. However, some parts of the Kubernetes Discovery API required by kubectl are not yet offered by the Cloud Run API.

As a solution, you can write your Knative Service resource as a .yaml file and use the following command to deploy to Cloud Run:

    gcloud run services replace --platform=managed <file.yaml>

Since "Cloud Run for Anthos" runs Knative natively, you can use kubectl to deploy Knative Services to your GKE cluster by writing YAML manifests and running kubectl apply. See Knative tutorials for more info.

Yes. Terraform provides resources to define a Cloud Run deployment in Terraform. Also see this blog post and sample app.

Yes. If a Cloud Run service does not receive requests for a long time, it will take some time to start it again. This will add additional delay to the first request.

Cold start latency depends on many factors, however it is independent of the image size.

Cloud Run does not provide any guarantees on how long it will keep a container instance "warm". It depends on factors like capacity and Google’s implementation details. See: How to keep a service "warm"?.

Cloud Run allows you to have a specified number of warm instances. These instances are billed differently, but they stick around to prevent cold starts.

See performance optimization tips, basically:

- minimize the number and size of the dependencies that your app loads

- keep your app’s "time to listen for requests" startup time short

- prevent your application process from crashing

The size of your container image has almost no impact on cold starts.

Cloud Run does not have the notion of App Engine warmup requests. You can perform initialization of your application (such as loading data) until you start listening on the port number.

Note that delaying the listening on the port number causes longer cold starts, so consider lazily computing/fetching the data you need to reduce cold start latencies.

Cloud Run now allows you to keep a number of warm instances. Also called "minimum instances", Cloud Run keeps these container instances running so they're ready to serve requests.

Such warm containers are billed differently, however keeping a single 256 MB RAM / 1 vCPU container warm for a month costs around $8, which is still cheaper than the cheapest VM option (f1-micro).

These warm containers still get their CPU throttled to ~0% when they are not processing requests.

You can also work around "cold starts" by periodically making requests to your Cloud Run service which can help prevent the container instances from scaling to zero. For this, use Google Cloud Scheduler to make requests every few minutes.

Cloud Run does not mark request logs with information about whether they caused a cold start or not. However you can implement this yourself using a global variable.
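A minimal sketch of the global-variable approach: the flag below is set by the first request a process handles, so only that request reports a cold start. The name `is_cold_start` is hypothetical, and the lock is there only because a single instance may handle requests concurrently.

```python
import threading

_lock = threading.Lock()
_served_first_request = False


def is_cold_start():
    """Return True only for the first request handled by this process."""
    global _served_first_request
    with _lock:
        if _served_first_request:
            return False
        _served_first_request = True
        return True
```

Calling it once per request and writing the result into your request log gives you a field to filter on.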

Cloud Run starts sending traffic to your application once you start listening on the port number (given to you via the PORT environment variable).

Cloud Run does not offer user-configurable liveness checks or probes like Kubernetes. As explained in the previous question, the moment your server starts listening on the port number, you indicate that your application is ready to receive traffic.

If the entrypoint process of a container exits, the container is stopped. A crashed container triggers cold start while the container is restarted. Avoid exiting/crashing your server process by handling exceptions. See development tips.

Currently, Cloud Run terminates containers while scaling to zero with Unix signal 15 (SIGTERM).

SIGTERM is trappable (capturable) by applications. If it is handled, CPU is allocated to the container for at most 10 seconds.
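One way to trap SIGTERM in Python is sketched below. The names `shutting_down`, `handle_sigterm` and `install_sigterm_handler` are hypothetical; the handler only records the signal so that the cleanup itself can run in normal application code within the short window described above.

```python
import signal
import threading

# Set once SIGTERM has been received; the rest of the app can poll or wait on it.
shutting_down = threading.Event()


def handle_sigterm(signum, frame):
    """Signal handler: only record the signal, do the real cleanup elsewhere."""
    shutting_down.set()


def install_sigterm_handler():
    """Register the handler; must be called from the main thread."""
    signal.signal(signal.SIGTERM, handle_sigterm)
```

After calling `install_sigterm_handler()` at startup, the application can check `shutting_down.is_set()` to stop accepting work and flush buffers.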

Cloud Run only supports HTTP/1.x and HTTP/2 (including gRPC) over TLS. Other TCP and UDP based protocols are not supported. This means, you can't run your arbitrary TCP based application, or a Redis/Memcached server on Cloud Run.

Cloud Run now allows you to customize which port number your application serves traffic on. This is for applications that cannot change the server port by reading the PORT environment variable passed by Cloud Run. (Upon customizing, PORT will have the specified value.)

By default 5 minutes or up to 60 minutes, if configured. See limits.

Yes, every Cloud Run service gets a *.run.app domain name for free. You can also use your own domain names.

No. Cloud Run allows services to be either publicly accessible to anyone on the Internet, or private services that require authentication via a JWT (identity token).

Currently no. Cloud Run applications always have a *.run.app public hostname and they cannot be placed inside a VPC (Virtual Private Cloud) network.

If any other private service (e.g. GCE VMs, GKE) needs to call your Cloud Run application, they need to use this public hostname.

With ingress settings on Cloud Run, you can allow your app to be accessible only from the VPC (e.g. VMs or clusters) or VPC+Cloud Load Balancer, but it still does not give you a private IP. You can still combine this with IAM to restrict the outside world while authenticating and authorizing other apps running in the VPC network.

TODO(ahmetb): Write this section. Ideally we should link to some blog posts doing an analysis of this.

Contrary to most serverless products, Cloud Run is able to send multiple requests to be handled simultaneously to your container instances.

Each container instance on Cloud Run is (currently) allowed to handle up to 1000 concurrent requests. The default is 80.

If your application cannot handle this many concurrent requests, you can lower the limit while deploying your service in gcloud or Cloud Console.

Most of the popular programming languages can process multiple requests at the same time thanks to multi-threading. But some languages may need additional components to do concurrent requests (e.g. PHP with Apache, or Python with gunicorn).

Each application and language can process a different number of simultaneous requests without having them time out. That's why Cloud Run allows you to configure concurrency per service.

You should do "load testing" to find out at what point your application should stop taking additional requests and additional instances should be created. Read Tuning concurrency for more.

No, Cloud Run does not offer a "sticky session" primitive. All requests are load balanced between available container instances.

UPDATE (July 10, 2020): Yes, this is now in beta.

You need to add serverless network endpoint groups behind a Cloud HTTP(S) Load Balancer (GCLB) to achieve this. The "serverless NEG" concept allows Cloud Run services to be added behind a load balancer, just like a VM or GCS bucket.

Cloud Run applications can be added behind a Cloud HTTP(s) load balancer (GCLB). However you might wonder, aren't Cloud Run endpoints already load-balanced? Yes, they are.

However, GCLB offers a wide variety of options that you might need, such as:

- Support for configuring GCLB products like Cloud CDN, Cloud Armor and Cloud IAP

- Routing to multiple backends (VM, GCS bucket, Run/GCF apps) on a single domain

- Bringing your own certificates

- Having a static IP (IPv4 or IPv6) for your domains

Yes, see previous question. With Cloud HTTP(S) Load Balancer (GCLB) integration, you need to add the Cloud Run service as a NEG to the load balancer.

You can also have CDN from other services if you don't want to use Cloud HTTP(S) Load Balancer:

- Firebase Hosting by:

  - responding to requests with a Cache-Control header, and

  - configuring a rewrite configuration in firebase.json of your Firebase app.

WARNING: If you are using Cloudflare with proxying capabilities, follow the guide here.

Yes. If you’re using the domain name provided by Cloud Run (*.run.app), your application is immediately ready to serve on the https:// protocol because Google has a wildcard TLS certificate for *.a.run.app.

If you’re using your own custom domain name, Cloud Run provisions a TLS certificate for your domain name. This may take ~15 minutes to provision and serve traffic on https://. Cloud Run uses either Google’s own certificate authority, Google Trust Services, or Let’s Encrypt to provision a certificate for your domain (example).

When you use the custom domain mapping feature of Cloud Run, it will provision a TLS certificate for your domain. However, if you want to bring your own certificates, check out the Cloud HTTP(S) Load Balancer (GCLB) integration.

This is built in and required. To make Cloud Run secure by default, Cloud Run services will only be accessible via HTTPS.

Any HTTP requests are automatically returned an HTTP 302 response pointing to the HTTPS version of the current URL. This was rolled out as a change in the beta service in August 2019.

Since your app serves traffic on PORT (by default 8080) unencrypted, you might think the connection between Cloud Run’s load-balanced endpoint and your application is unencrypted.

However, the transit between Google’s frontend/load balancer and your Cloud Run container instance is encrypted. Google terminates TLS/HTTPS connections before they reach your application, so that you don’t have to handle TLS yourself.

Not natively. Cloud Run services are regional. But it's possible to do it yourself.

Using the Cloud Load Balancer (GCLB) integration, you can deploy your service to multiple regions and add them behind the load balancer. Clients connecting to the load balancer IP/domain are then routed to the Cloud Run service closest to them.

Read the documentation or my article, or set it up with Terraform.

Yes. Cloud Run’s gateway will upgrade any HTTP/1 server you write to HTTP/2. If you query your application with https://, you should see the HTTP/2 protocol used between the client and the Cloud Run service:

$ curl --http2 https://<url>

...

< HTTP/2 200

...

HTTP/2 to the container is currently only supported for gRPC services.

Cloud Run requires your application to serve on an unencrypted endpoint and HTTP/2 by default requires TLS.

If your server supports HTTP/2 upgrade via the h2c (unencrypted HTTP/2) protocol, it will safely fall back to HTTP/1.1.

If you develop an HTTP/2-only server, Cloud Run will not currently be able to route requests to it, as Cloud Run does not include prior knowledge headers by default.

Yes. Cloud Run (fully managed) can now run gRPC services, including all RPC types (unary, server-streaming, client-streaming and bidirectional).

Cloud Run can stream responses that are larger than 32MB using HTTP chunked encoding. Add the HTTP header Transfer-Encoding: chunked to your response if you know it will be larger than 32MB.
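For reference, this is what chunked encoding looks like on the wire in HTTP/1.1: each chunk is its length in hexadecimal, a CRLF, the bytes, and another CRLF, and a zero-length chunk ends the body. The sketch below is illustrative only (`encode_chunk` and `chunked_body` are hypothetical names); most web frameworks apply this framing for you when you return a streaming response.

```python
LAST_CHUNK = b"0\r\n\r\n"  # zero-length chunk that terminates the body


def encode_chunk(data):
    """Frame one piece of the body as an HTTP/1.1 chunk."""
    return b"%x\r\n%s\r\n" % (len(data), data)


def chunked_body(pieces):
    """Yield the framed chunks for an iterable of byte strings."""
    for piece in pieces:
        if piece:  # an empty chunk would end the body early, so skip it
            yield encode_chunk(piece)
    yield LAST_CHUNK
```

Because the pieces are produced by a generator, the whole response never has to be held in memory at once.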

WebSockets are now supported on Cloud Run. Read documentation.

Since WebSockets requests are typically long-running, they will keep billing the container, and therefore can be more expensive. WebSockets requests are also subject to "request timeout" limits (i.e. they don't stay open forever).

To make requests to Cloud Run applications privately, you need to obtain an identity token, and add it to the Authorization header of the outbound request to the target service. You can find documentation and examples here.

For Cloud Run service A (running with service account SA1) to be able to connect to private Cloud Run service B, you need to:

- Update IAM permissions of service B to give SA1 the Cloud Run Invoker role (roles/run.invoker).

- Obtain an identity token (JWT) from the metadata service:

      curl -H "metadata-flavor: Google" \
        http://metadata/computeMetadata/v1/instance/service-accounts/default/identity?audience=URL

  where URL is the URL of service B (i.e. https://*.run.app).

- Add the header Authorization: Bearer <TOKEN>, where <TOKEN> is the response obtained in the previous command.
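The same steps can be sketched from application code using only the standard library. The helper names below are hypothetical, the request uses the full `computeMetadata/v1` metadata path, and `fetch_identity_token` only works where the metadata server is reachable (for example, inside a Cloud Run container).

```python
import urllib.parse
import urllib.request

METADATA_IDENTITY_URL = (
    "http://metadata/computeMetadata/v1/instance/service-accounts/default/identity"
)


def identity_token_url(audience):
    """Build the metadata URL that returns a token for the given audience."""
    query = urllib.parse.urlencode({"audience": audience})
    return METADATA_IDENTITY_URL + "?" + query


def fetch_identity_token(audience):
    """Ask the metadata server for an identity token (JWT) for service B."""
    request = urllib.request.Request(
        identity_token_url(audience), headers={"Metadata-Flavor": "Google"}
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.read().decode("utf-8")


def authorized_request(url, token):
    """Prepare a request to service B carrying the token as a Bearer header."""
    return urllib.request.Request(url, headers={"Authorization": "Bearer " + token})
```

Service A would then pass the result of `authorized_request(...)` to `urllib.request.urlopen`, using the URL of service B as the audience.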

If you're using Kubernetes or similar systems, you might be used to calling another service directly by name (e.g. http://hello/). However, Cloud Run does not support this yet. Therefore you must use the full (*.run.app) URL.

Alternatively, you can try out the runsd project, which is my prototype Cloud Run DNS Service Discovery + automatic authentication implementation.

Yes. When your service is not receiving requests, you are not paying for anything.

Therefore, after not receiving any requests for a while, the first request may observe cold start latency.

By setting the Maximum number of instances parameter when deploying a new revision.

Each Cloud Run service can scale by default up to 1000 container instances, a limit that can be increased via a quota request. Each container instance can handle up to 1000 simultaneous requests.

Linux.

However, since you bring your own container image, you get to decide your system libraries such as libc (e.g. musl libc in alpine, or glibc in debian based images).

Your applications run on gVisor which only supports Linux (currently).

Yes, however files written to the local filesystem count towards available memory and may cause the container instance to run out of memory and crash.

Therefore, writing files to the local filesystem is discouraged, with the exception of the /var/log/* path for logging.

Cloud Run applications run on gVisor container sandbox, which executes Linux kernel system calls made by your application in userspace.

gVisor does not implement all system calls (see here). If your app makes such a system call (quite rare), it will not work on Cloud Run. Such an event is logged and you can use strace to determine when the system call was made in your app.

Applications compiled for 64-bit Linux are supported. To be precise, ELF executables compiled to x86-64. See Container Contract.

The logs collected from a container instance specify the unique instance ID of the container when the logs are viewed on Stackdriver Logging. This instance ID is not made available to the application.

To identify your container instance while it’s running, generate a random UUID during the startup of your process and store it in a variable.
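A minimal sketch of this idea: a module-level UUID is generated once when the process starts and then reused for every request. `INSTANCE_ID` and `tag_with_instance` are hypothetical names.

```python
import uuid

# Generated once at import time, so it stays constant for the life of the process.
INSTANCE_ID = str(uuid.uuid4())


def tag_with_instance(message):
    """Prefix a log message with this process's self-assigned instance ID."""
    return "[instance %s] %s" % (INSTANCE_ID, message)
```

Note that this value is your own identifier; it is unrelated to the instance ID shown in the logs.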

You can't see the number of instances running at a time on Cloud Run.

However, you can use the Billable container instance time metric on Cloud Run service dashboard to infer this information.

Ideally, you should not care about the instantaneous number of instances in a serverless world, since your application autoscales based on traffic patterns and you only pay while a request is being handled (not for idle instance time).

Cloud Run provides some environment variables standard in Knative. Ideally you should explicitly deploy your app with an environment variable indicating it is running on Cloud Run.

You can also access instance metadata endpoints like http://metadata.google.internal/computeMetadata/v1/project/project-id to determine if you are on Cloud Run. However, this will not distinguish "Cloud Run" vs "Cloud Run for Anthos" as the metadata service is available on GKE nodes as well.

Yes. If you need to connect to an external API or database that requires IP address whitelisting, you can configure a static egress IP address for your Cloud Run service.

This involves configuring a Cloud Router and Cloud NAT for a VPC network and using VPC connector with your Cloud Run service. Read documentation and follow setup guide.

Cloud Run can connect to private IPs in VPC networks (see below).

However, you currently cannot place a Cloud Run app into a VPC so it can have a private IP address to be accessible from only within the VPC (see here).

Cloud Run now has support for "Serverless VPC Access". This feature allows Cloud Run applications to connect to private IPs in the VPC (but not the other way).

This way your Cloud Run applications can connect to private VPC IP addresses running:

- GCE VMs

- Cloud SQL instances

- Cloud Memorystore instances

- Kubernetes Pods/Services (on GKE public or private clusters)

- Internal Load Balancers

To learn more read my blog post here or refer to the official documentation.

VPC-SC allows you to define which endpoints your applications can connect to (to prevent exfiltration risks).

You can use Cloud Run with VPC service controls (currently in preview).

Anything your application writes to standard output (stdout) or standard error (stderr) is collected as logs by Cloud Run.

Some existing apps might not comply with that (e.g. nginx writes logs to /var/log/nginx/error.log). Therefore any files written under /var/log/* are also aggregated. Learn more here.

To emit structured logs, write each log line as a JSON object with fields recognized by Stackdriver Logging, such as timestamp, severity, message.
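As a rough sketch, a JSON log line with these fields can be produced with the standard library alone. `format_log_entry` and `log` are hypothetical helpers, and any extra keyword arguments are simply added as additional fields.

```python
import json
import sys
from datetime import datetime, timezone


def format_log_entry(severity, message, **fields):
    """Build one JSON log line with timestamp, severity and message."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "severity": severity,
        "message": message,
    }
    entry.update(fields)
    return json.dumps(entry)


def log(severity, message, **fields):
    """Write the entry to stdout, where Cloud Run collects logs."""
    print(format_log_entry(severity, message, **fields), file=sys.stdout, flush=True)
```

For example, `log("ERROR", "payment failed", order_id="1234")` writes a single line that can be filtered by severity.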

Yes. See this document on how to view various metrics about your Cloud Run container instances.

Cloud Run supports tracing out of the box. If you go to "Tracing" section on Cloud Console, you will see the traces are being collected at a predefined sampling rate for your requests.

If you want to correlate logs to requests, or create additional trace spans, you can use the x-cloud-trace-context header provided to each request with OpenTelemetry or OpenCensus libraries.
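If you only need log correlation and not full tracing, a small sketch like the following may be enough. It assumes the header value has the form `TRACE_ID/SPAN_ID;o=OPTIONS` and that your structured logs carry the trace in the `logging.googleapis.com/trace` field; the function names and the `project_id` parameter are hypothetical.

```python
def parse_trace_header(header_value):
    """Split 'TRACE_ID/SPAN_ID;o=OPTIONS' into (trace_id, span_id or None)."""
    trace_part = header_value.split(";", 1)[0]
    trace_id, _, span_id = trace_part.partition("/")
    return trace_id, span_id or None


def trace_log_field(project_id, header_value):
    """Return the structured-log field that links a log entry to its trace."""
    trace_id, _ = parse_trace_header(header_value)
    return {
        "logging.googleapis.com/trace": "projects/%s/traces/%s" % (project_id, trace_id)
    }
```

Merging the returned dictionary into each JSON log entry written while handling a request groups those entries under that request's trace.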

Cloud Run Pricing documentation has the most up-to-date information.

Yes! See Pricing documentation.

You only pay while a request is being handled on your container instance.

This means an application that is not getting traffic is free of charge. See the next question.

Based on "time serving requests" on each instance. If your service handles multiple requests simultaneously, you do not pay for the CPU/memory time during the overlap separately (per-request costs still apply). (This is a cost saver, compared to Cloud Functions.)

Each billable timeslice is rounded up to the nearest 100 milliseconds.

Read how the billable time is calculated, it is basically like this:

               request1          response1
                   |    request2     ʌ    response2
                   |        |        |        ʌ
                   v........|......../        |
                            |                 |
                            v................./

    |-----FREE-----|----------BILLED----------|----FREE...
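The diagram can be turned into a small calculation: overlapping requests are merged into one billable timeslice, and each timeslice is rounded up to the next 100 milliseconds. This is a simplified sketch under those two assumptions only; `billable_ms` is a hypothetical helper and the pricing documentation remains the reference for how billing is actually computed.

```python
def _round_up(duration_ms, granularity_ms):
    """Round a duration up to the next multiple of the billing granularity."""
    return -(-duration_ms // granularity_ms) * granularity_ms


def billable_ms(requests, granularity_ms=100):
    """Sum billable time for (start_ms, end_ms) request intervals on one instance."""
    total = 0
    current_start = current_end = None
    for start, end in sorted(requests):
        if current_end is None or start > current_end:
            # A gap with no request in flight closes the previous timeslice.
            if current_end is not None:
                total += _round_up(current_end - current_start, granularity_ms)
            current_start, current_end = start, end
        else:
            # Overlapping request: extend the current timeslice.
            current_end = max(current_end, end)
    if current_end is not None:
        total += _round_up(current_end - current_start, granularity_ms)
    return total
```

For instance, two requests spanning 0 to 250 ms and 200 to 430 ms overlap, so they form a single 430 ms timeslice that rounds up to 500 ms rather than being billed as two separate requests.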

You are paying for CPU, memory and the traffic sent to the client from your application (egress traffic).

This is not an official Google project or roadmap. Refer to the Cloud Run documentation for the authoritative information. This project is licensed under the Creative Commons Attribution 4.0 International (CC BY 4.0) license.

Your question not answered here? Open an issue and see if we can answer.