[ML] Improve robustness to outliers after detecting changes in time series #2280

We have some code which adjusts the weight we apply to outliers immediately after detecting a change in a time series. This exists to handle time series which flip-flop between values: we don't want to flag every change as anomalous, but rather learn the "envelope" of the changes. However, we need to be more careful than we currently are about allowing the model to incorporate unusual values just after a change. If we get unlucky and a large outlier occurs around this time, it pollutes the model.

To address the flip-flop case, we only need to increase the weight of values whose prediction error is on the same scale as the change we detected. Values whose prediction error is significantly larger can be reweighted as normal, i.e. downweighted as outliers. I noticed this while debugging #2276.
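As a rough illustration of the idea (not the code in this PR), the rule could be sketched as below. All names, thresholds and the shape of the baseline weight function are assumptions made up for the example: `normal_outlier_weight` stands in for whatever downweighting is normally applied, and `same_scale_factor` is a hypothetical tolerance for "on the same scale as the change".

```python
def normal_outlier_weight(error, noise_scale, threshold=3.0, min_weight=0.01):
    """Hypothetical baseline: full weight for small errors, then a weight
    which decays with the squared z-score, floored at min_weight."""
    z = abs(error) / noise_scale
    if z <= threshold:
        return 1.0
    return max(min_weight, (threshold / z) ** 2)


def weight_after_change(error, change_size, noise_scale,
                        same_scale_factor=1.5, boosted_weight=1.0):
    """Weight for a value seen just after a detected change.

    Errors comparable to the detected change are boosted so the model can
    learn the envelope of a flip-flopping series. Errors much larger than
    the change get the normal outlier weight, so they cannot pollute the
    model.
    """
    baseline = normal_outlier_weight(error, noise_scale)
    if abs(error) <= same_scale_factor * abs(change_size):
        return max(baseline, boosted_weight)
    return baseline


if __name__ == "__main__":
    # A detected change of size 10 in a series with unit noise.
    print(weight_after_change(9.0, 10.0, 1.0))   # same scale as the change
    print(weight_after_change(60.0, 10.0, 1.0))  # far larger than the change
```

In this sketch an error of 9 after a change of size 10 keeps full weight, while an error of 60 falls back to the baseline and is heavily downweighted.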

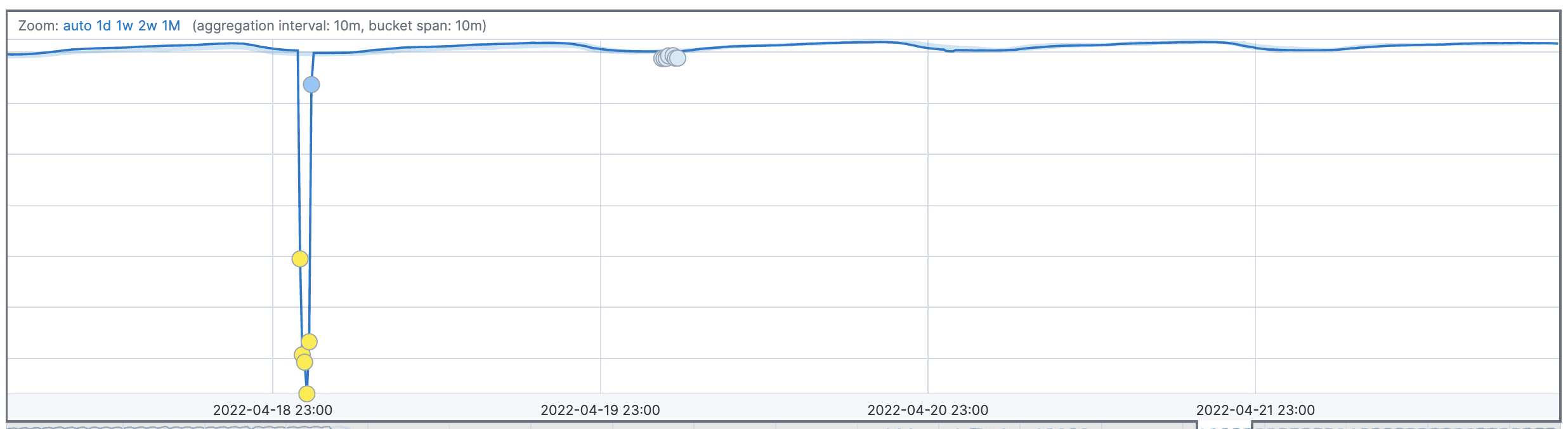

Before

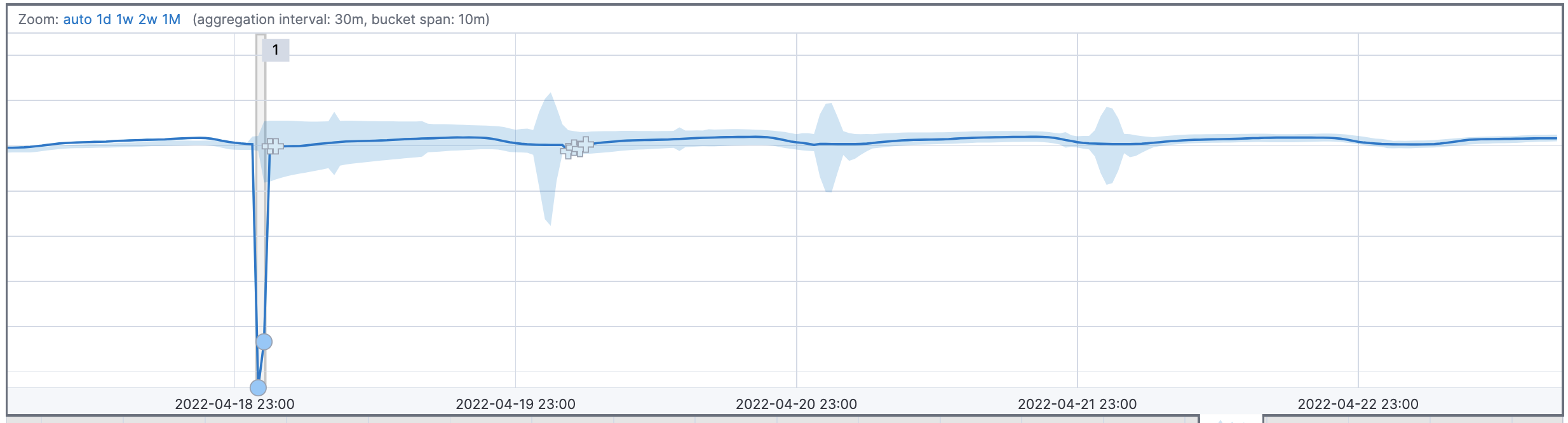

After