Pixyz Implementation: https://github.com/masa-su/pixyzoo/tree/master/GQN

- Python >=3.6

- PyTorch

- TensorBoardX

Train with:

`python train.py --train_data_dir /path/to/dataset/train --test_data_dir /path/to/dataset/test`

To use multiple GPUs, list their IDs with `--device_ids`:

`python train.py --device_ids 0 1 2 3 --train_data_dir /path/to/dataset/train --test_data_dir /path/to/dataset/test`
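A flag such as `--device_ids 0 1 2 3` is typically parsed as a list of integers. The sketch below is a hypothetical reconstruction with Python's `argparse`: only the flag names come from the commands above, while the `[0]` default and the `required=True` settings are assumptions, not taken from `train.py`.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Hypothetical sketch of a parser accepting the flags shown above."""
    parser = argparse.ArgumentParser(description="GQN training (sketch)")
    parser.add_argument("--train_data_dir", type=str, required=True)
    parser.add_argument("--test_data_dir", type=str, required=True)
    # nargs="+" collects "--device_ids 0 1 2 3" into [0, 1, 2, 3].
    # The default of [0] is an assumption made for this sketch.
    parser.add_argument("--device_ids", type=int, nargs="+", default=[0])
    return parser


if __name__ == "__main__":
    args = build_parser().parse_args()
    print(args.device_ids)
```

A list parsed this way could be handed to `torch.nn.DataParallel(model, device_ids=args.device_ids)`; whether `train.py` parallelizes the model exactly this way is an assumption.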

The default settings follow the original GQN paper, whose experiments used four GPUs with 24 GB of memory.

If your GPU memory is limited, I recommend using `--shared_core True` (default: `False`) or `--layers 8` (default: `12`) to reduce the number of parameters.
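To see why these options shrink the model, here is a minimal sketch of the arithmetic. It assumes that without sharing a separate core is created for each of the `--layers` generation steps, and that with sharing a single core is reused at every step; `params_per_core` is a hypothetical placeholder, not a measured value.

```python
def core_parameter_count(params_per_core: int, layers: int, shared_core: bool) -> int:
    """Parameters spent on one kind of core (inference or generation).

    Assumption: unshared means one core per generation step;
    shared means a single core reused at every step.
    """
    if layers < 1:
        raise ValueError("layers must be >= 1")
    return params_per_core if shared_core else params_per_core * layers


if __name__ == "__main__":
    p = 1_000_000  # hypothetical size of one core
    print(core_parameter_count(p, 12, False))  # default setting
    print(core_parameter_count(p, 8, False))   # --layers 8
    print(core_parameter_count(p, 12, True))   # --shared_core True
```

Note that sharing removes only duplicated weights (and their optimizer state); the activations stored for backpropagation still grow with the number of unrolled steps, whereas `--layers 8` shortens the unrolled computation itself.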

In my experiments, these changes did not greatly affect the quality of the results, although the settings then differ from those of the original paper.

Conversion of the dataset's TFRecords for use with this PyTorch implementation.
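A TFRecord file is a sequence of length-prefixed records: an 8-byte little-endian length, a 4-byte masked CRC32C of that length, the payload, and a 4-byte masked CRC32C of the payload. The standard-library sketch below walks that framing and yields raw payloads. It does not verify checksums or decode the payloads (in the GQN datasets these are serialized `tf.train.Example` protos), so it only illustrates the first step of a conversion, not the repository's conversion script. Both function names are hypothetical.

```python
import io
import struct
from typing import BinaryIO, Iterator


def iter_tfrecord_payloads(stream: BinaryIO) -> Iterator[bytes]:
    """Yield the raw payload of every record; CRC fields are skipped, not verified."""
    while True:
        header = stream.read(8)
        if not header:
            return  # clean end of stream
        if len(header) != 8:
            raise ValueError("truncated length field")
        (length,) = struct.unpack("<Q", header)
        stream.read(4)  # masked CRC32C of the length field (ignored)
        payload = stream.read(length)
        if len(payload) != length:
            raise ValueError("truncated payload")
        stream.read(4)  # masked CRC32C of the payload (ignored)
        yield payload


def frame_for_demo(payload: bytes) -> bytes:
    """Build one record with zeroed CRC fields (acceptable only for this reader)."""
    return struct.pack("<Q", len(payload)) + b"\x00" * 4 + payload + b"\x00" * 4


if __name__ == "__main__":
    data = frame_for_demo(b"scene-0") + frame_for_demo(b"scene-1")
    for record in iter_tfrecord_payloads(io.BytesIO(data)):
        print(record)
```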

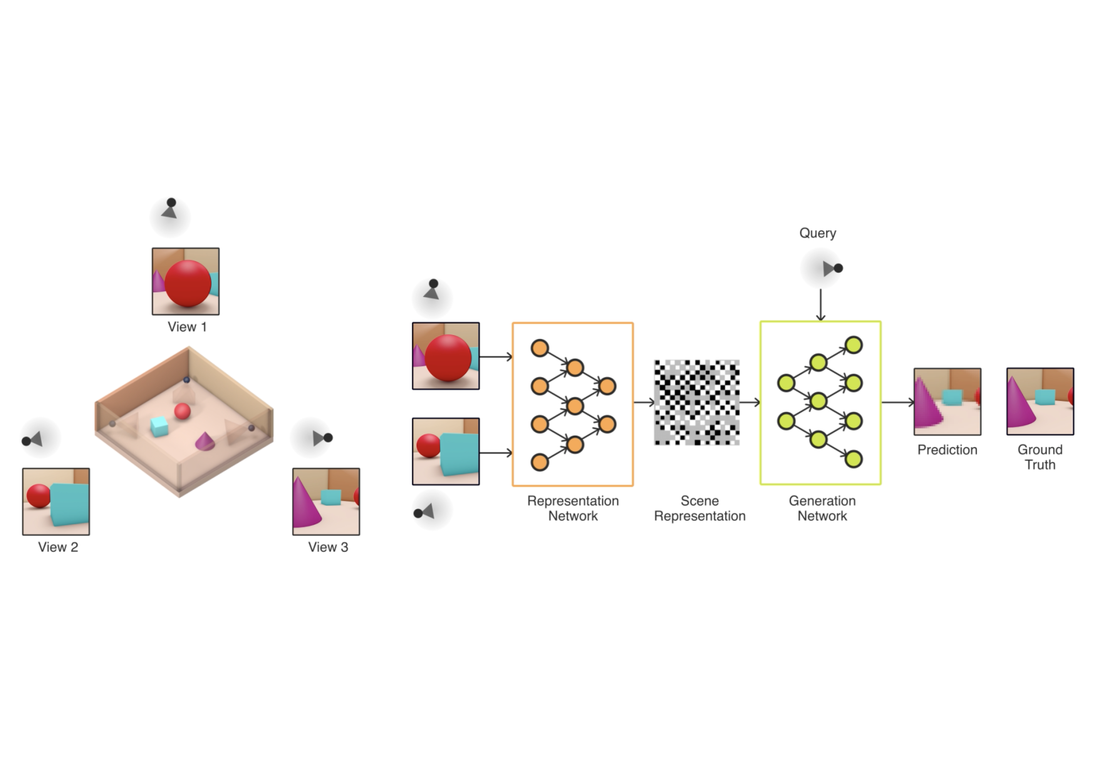

Representation network (see Figure S1 in the Supplementary Materials of the paper).
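Two properties of the representation network can be checked with plain arithmetic. The sketch assumes the paper's "Tower" variant, whose kernel-2, stride-2 convolutions downsample a 64×64 input image to a 16×16 representation, and the paper's aggregation rule, in which the representations of all context views are summed element-wise. `conv_out` and `aggregate` are illustrative helpers, not functions from this repository.

```python
from typing import List, Sequence


def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a 2D convolution along one axis."""
    return (size + 2 * padding - kernel) // stride + 1


def aggregate(per_view: Sequence[Sequence[float]]) -> List[float]:
    """Element-wise sum of flattened per-view representations."""
    return [sum(values) for values in zip(*per_view)]


if __name__ == "__main__":
    s = conv_out(64, kernel=2, stride=2)           # first downsampling
    s = conv_out(s, kernel=3, stride=1, padding=1)  # size-preserving conv
    s = conv_out(s, kernel=2, stride=2)           # second downsampling
    print(s)
    print(aggregate([[1.0, 2.0], [3.0, 4.0], [0.5, 0.5]]))
```

Summation makes the scene representation independent of the order of the context views and lets the number of views vary.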

Core networks of inference and generation (see Figure S2 in the Supplementary Materials of the paper).
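The generation core can be summarized as a recurrence over L steps. The block below is a hedged summary in the paper's notation as I understand it, not a transcription of `core.py`: v^q is the query viewpoint, r the scene representation, C^g_θ the generation ConvLSTM core, Δ an upsampling layer (a transposed convolution in the paper, to my understanding), u the canvas that accumulates the output, and η^g_θ the map from the final canvas to pixel means. During training, the inference core runs alongside it, additionally receives the query image x^q, and parameterizes the posterior q_φ from which z_l is sampled instead of the prior π_θ.

```math
\begin{aligned}
(c^g_0,\, h^g_0,\, u_0) &= (0,\, 0,\, 0) \\
z_l &\sim \pi_\theta(z_l \mid v^q, r, z_{<l}), \qquad l = 1, \dots, L \\
(c^g_l,\, h^g_l) &= C^g_\theta\big(v^q, r, z_l, c^g_{l-1}, h^g_{l-1}\big) \\
u_l &= u_{l-1} + \Delta(h^g_l) \\
x^q &\sim \mathcal{N}\big(\eta^g_\theta(u_L),\, \sigma^2 I\big)
\end{aligned}
```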

Implementation of the convolutional LSTM used in `core.py`.
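A convolutional LSTM replaces the matrix products of a standard LSTM with convolutions (written `*`), so the hidden and cell states keep their spatial layout. The equations below show a common formulation without peephole connections, where [x_t, h_{t-1}] is channel-wise concatenation, σ the logistic sigmoid, and ⊙ element-wise multiplication; whether `conv_lstm.py` uses exactly this gate layout is an assumption.

```math
\begin{aligned}
i_t &= \sigma\big(W_i * [x_t, h_{t-1}] + b_i\big) \\
f_t &= \sigma\big(W_f * [x_t, h_{t-1}] + b_f\big) \\
o_t &= \sigma\big(W_o * [x_t, h_{t-1}] + b_o\big) \\
g_t &= \tanh\big(W_g * [x_t, h_{t-1}] + b_g\big) \\
c_t &= f_t \odot c_{t-1} + i_t \odot g_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```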

Dataset class.
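The GQN paper represents each camera viewpoint as a 7-D vector built from position, yaw, and pitch. The sketch below assumes the raw cameras are stored as (x, y, z, yaw, pitch) in radians and uses the paper's ordering (position, cos yaw, sin yaw, cos pitch, sin pitch). The raw layout and the ordering used by this repository's dataset class are assumptions, and `to_gqn_viewpoint` is a hypothetical name.

```python
import math
from typing import List, Sequence


def to_gqn_viewpoint(raw: Sequence[float]) -> List[float]:
    """Map a raw 5-D camera (x, y, z, yaw, pitch) to a 7-D viewpoint vector."""
    if len(raw) != 5:
        raise ValueError("expected (x, y, z, yaw, pitch)")
    x, y, z, yaw, pitch = raw
    # Encoding angles as (cos, sin) pairs avoids the discontinuity at +/- pi.
    return [x, y, z, math.cos(yaw), math.sin(yaw), math.cos(pitch), math.sin(pitch)]


if __name__ == "__main__":
    print(to_gqn_viewpoint([1.0, 2.0, 3.0, 0.0, 0.0]))
```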

Main module of the Generative Query Network.
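The main module combines the representation and core networks into the training objective. The negative ELBO below follows the paper's formulation as I understand it: a Gaussian reconstruction term on the query image x^q plus one KL term per generation step between the posterior q_φ and the prior π_θ. Here v^q is the query viewpoint, r the scene representation, u_L the final canvas, η^g_θ the map from the canvas to pixel means, and σ the pixel standard deviation; how the repository weights or averages these terms is not stated here and should not be inferred from this sketch.

```math
\mathcal{F}(\theta, \phi) = \mathbb{E}_{q_\phi}\left[ -\ln \mathcal{N}\big(x^q \mid \eta^g_\theta(u_L),\, \sigma^2 I\big) + \sum_{l=1}^{L} \mathrm{KL}\Big(q_\phi(z_l \mid x^q, v^q, r, z_{<l}) \,\Big\|\, \pi_\theta(z_l \mid v^q, r, z_{<l})\Big) \right]
```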

Training algorithm.
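Numerically, each training step evaluates two kinds of closed-form terms: a Gaussian negative log-likelihood for every pixel of the query image and a KL divergence between two diagonal Gaussians for every latent dimension. The scalar, standard-library sketch below shows those formulas; a real implementation would evaluate them over tensors, and all function names are hypothetical.

```python
import math
from typing import Iterable, Sequence


def gaussian_nll(x: float, mu: float, sigma: float) -> float:
    """-ln N(x | mu, sigma^2) for one pixel."""
    return 0.5 * math.log(2 * math.pi * sigma ** 2) + (x - mu) ** 2 / (2 * sigma ** 2)


def gaussian_kl(mu_q: float, sigma_q: float, mu_p: float, sigma_p: float) -> float:
    """KL(N(mu_q, sigma_q^2) || N(mu_p, sigma_p^2)) for one latent dimension."""
    return (
        math.log(sigma_p / sigma_q)
        + (sigma_q ** 2 + (mu_q - mu_p) ** 2) / (2 * sigma_p ** 2)
        - 0.5
    )


def negative_elbo(pixels: Sequence[float], means: Sequence[float],
                  sigma: float, kl_terms: Iterable[float]) -> float:
    """Reconstruction NLL summed over pixels plus all KL terms."""
    nll = sum(gaussian_nll(x, m, sigma) for x, m in zip(pixels, means))
    return nll + sum(kl_terms)


if __name__ == "__main__":
    kl = [gaussian_kl(0.5, 0.8, 0.0, 1.0), gaussian_kl(0.0, 1.0, 0.0, 1.0)]
    print(negative_elbo([0.2, 0.7], [0.25, 0.6], sigma=0.7, kl_terms=kl))
```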

Learning-rate scheduler used in `train.py`.

| Ground Truth | Generation | |

|---|---|---|

| Shepard-Metzler objects |  |

|

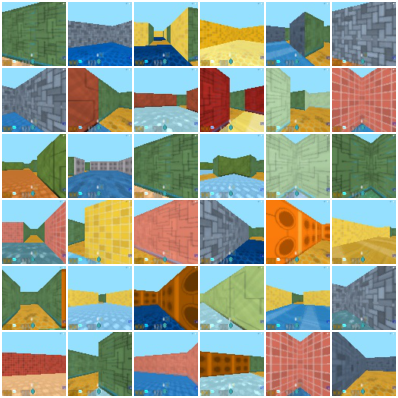

| Mazes |  |

|